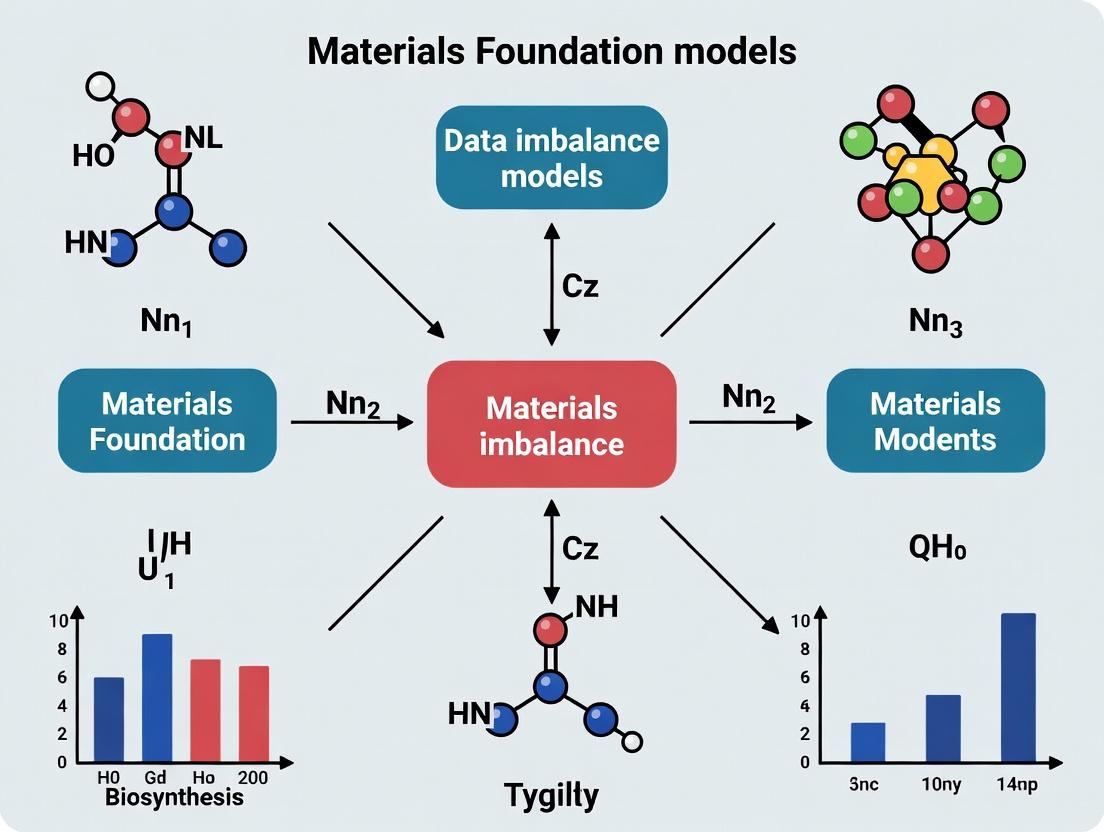

Beyond the Majority: Advanced Strategies for Tackling Data Imbalance in Materials Foundation Models

This article provides a comprehensive guide for researchers, scientists, and development professionals on addressing the pervasive challenge of data imbalance in materials science foundation models.

Beyond the Majority: Advanced Strategies for Tackling Data Imbalance in Materials Foundation Models

Abstract

This article provides a comprehensive guide for researchers, scientists, and development professionals on addressing the pervasive challenge of data imbalance in materials science foundation models. It explores the root causes and impact of skewed datasets on model performance, details cutting-edge methodological approaches for mitigation and application, offers troubleshooting and optimization techniques for real-world scenarios, and presents frameworks for rigorous validation and comparative analysis. The guide synthesizes current best practices to enable the development of robust, generalizable models capable of accelerating discovery in biomaterials and therapeutic development.

The Imbalance Imperative: Understanding Data Skew in Materials AI

Troubleshooting Guides & FAQs

Q1: In my materials property prediction model, performance is excellent for common semiconductors but fails catastrophically for ultrawide-bandgap materials. Is this a data imbalance issue? A1: Yes, this is a classic case of property extreme imbalance. Your training set likely lacks sufficient high-bandgap examples, causing the model to interpolate poorly for extreme values.

- Diagnosis: Plot the distribution of your target property (bandgap) across your training dataset. You will likely see a heavy concentration in the 0.5-2.0 eV range with a long, sparse tail beyond 4 eV.

- Solution: Implement target-aware data stratification. Split your data not randomly, but by ensuring each bin of the bandgap distribution is represented in your training set. Consider synthetic oversampling techniques like SMOTE for the high-bandgap region, but beware of physical unrealisticity.

Q2: My foundation model for predicting catalytic activity shows high overall accuracy, but inspection reveals it never correctly identifies "top-tier" catalysts (activity > 99%). What's wrong? A2: This indicates a severely imbalanced class distribution at the high-performance tail. The model optimizes for the majority (average catalysts) and ignores the rare, critical class of high-performance materials.

- Diagnosis: Create a confusion matrix focused on the high-activity class. You will see near-zero recall for that class.

- Solution: Employ a cost-sensitive learning protocol. Assign a higher misclassification penalty for the rare "top-tier" class during training. This forces the model to pay more attention to these examples. Pair this with strategic undersampling of the majority mid-performance class.

Q3: When fine-tuning a materials foundation model on my dataset of polymer membranes for gas separation, performance is erratic. How can I diagnose if data split imbalance is the cause? A3: Erratic performance often stems from non-representative data splits, where key sub-classes or property ranges are absent from the training fold.

- Diagnosis: Use the SCIKIT-LEARN

StratifiedShuffleSplitwith a binned version of your primary target (e.g., CO2 permeability). Before training, verify that the distribution of bins is similar across training, validation, and test sets. - Solution: Adopt a "stratified k-fold" cross-validation scheme based on binned target properties or key material descriptors (e.g., polymer functional group). This ensures each fold is a microcosm of the full dataset's variability.

Q4: I am curating a dataset for a foundation model on battery electrolytes. How do I balance the representation of different salt types (e.g., LiPF6 vs. LiTFSI) with the need for diverse solvent combinations? A4: You are facing a multi-dimensional or conditional imbalance. The prevalence of LiPF6 studies creates an imbalance, and its property distribution may be conditional on specific solvents.

- Diagnosis: Create a contingency table (see Table 1) to visualize the joint distribution of salts and solvent families.

- Solution: Use cluster-based sampling. First, cluster your data in descriptor space (e.g., using molecular fingerprints). Then, sample proportionally from each cluster to ensure coverage of the combined chemical space, not just individual dimensions.

Table 1: Hypothetical Distribution of Electrolyte Formulations in Literature

| Salt \ Solvent Family | Carbonates (EC/DMC) | Ethers (DME/DOL) | Sulfones (SL) | Total |

|---|---|---|---|---|

| LiPF6 | 1250 studies | 85 studies | 12 studies | 1347 |

| LiTFSI | 410 studies | 320 studies | 95 studies | 825 |

| LiClO4 | 180 studies | 45 studies | 8 studies | 233 |

| Total | 1840 | 450 | 115 | 2405 |

Q5: For a regression task on formation energy, my mean absolute error (MAE) is low, but the error distribution has heavy tails. Which re-weighting strategy should I use? A5: Heavy-tailed error indicates poor performance on rare or extreme samples. Standard MAE weights all errors equally.

- Solution Protocol: Inverse Frequency Weighting.

- Discretize your continuous formation energy into bins (

k=20). - For each training sample

iwith formation energy in binj, assign a weight:w_i = N / (k * count_j), whereNis total samples,kis bins, andcount_jis samples in binj. - Use these sample weights in your regression loss function (e.g.,

torch.nn.MSELoss(reduction='none')and then manually compute the weighted mean).

- Discretize your continuous formation energy into bins (

- Alternative: Use a quantile loss function (e.g., pinball loss) which allows you to emphasize accuracy at specific extremes (e.g., high stability) of the distribution.

Experimental Protocols

Protocol 1: Benchmarking Model Robustness to Property Extremes

Objective: Quantify a model's degradation in predictive performance for materials with out-of-distribution (OOD) extreme properties.

- Dataset Preparation: Start with a curated dataset (e.g., from Materials Project). Choose a target property (e.g., bulk modulus).

- Stratified Splitting: Sort data by the target property. Define the "extreme" set as the top and bottom 5th percentile. The "core" set is the middle 90%.

- Training/Testing:

- Scenario A (IID): Randomly split the entire dataset 80/20. Train and test. Record MAE overall and on the natural extremes present in the test set.

- Scenario B (OOD): Train the model exclusively on the "core" set (90% of data). Test it on the held-out "extreme" set (5% low + 5% high).

- Metric: Calculate the Performance Degradation Ratio (PDR):

PDR = (MAE_OOD / MAE_IID). A PDR >> 1 indicates high sensitivity to property imbalance.

Protocol 2: Active Learning for Mitigating Compositional Imbalance

Objective: Iteratively expand a training set to efficiently cover sparse regions of a chemical composition space (e.g., ternary phase diagrams).

- Initialization: Train a base model on an initial, small but diverse dataset.

- Query Loop: For

niterations: a. Uncertainty Sampling: Use the model to predict on a large, unlabeled pool of candidate compositions. Select thekcandidates where the model's predictive uncertainty (e.g., standard deviation from an ensemble) is highest. b. Diversity Constraint: Apply a filter (e.g., cosine similarity < threshold) to ensure selected candidates are not too similar to each other or the existing training set. c. Oracle Labeling: Obtain ground-truth labels for thekselected candidates (via DFT calculation or database lookup). d. Model Update: Add the newly labeled data to the training set and retrain/fine-tune the model. - Evaluation: Monitor model performance on a fixed, balanced test set across iterations. Plot accuracy vs. number of actively acquired samples, targeting rapid improvement for previously poor-performing composition classes.

Visualizations

Diagnosis and Remediation Workflow for Data Imbalance

Active Learning Loop for Imbalanced Data

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Addressing Imbalance | Key Consideration for Materials AI |

|---|---|---|

| SMOTE (Synthetic Minority Over-sampling) | Generates synthetic samples for rare classes in descriptor space. | Can create physically unrealistic or unstable materials if applied naively to compositional vectors. Use domain-constrained variants. |

| ADASYN (Adaptive Synthetic Sampling) | Similar to SMOTE but focuses on generating samples for minority class examples that are harder to learn. | Useful for boundary cases in phase classification, but may amplify noise in experimental property datasets. |

Class Weights (e.g., sklearn class_weight='balanced') |

Automatically adjusts the loss function to give more weight to underrepresented classes during training. | The default implementation is effective for categorical labels. For continuous extreme values, manual inverse-frequency weighting is required. |

| Focal Loss | A dynamic loss function that down-weights easy-to-classify examples, focusing training on hard negatives/positives. | Particularly effective in imbalanced binary classification tasks, such as identifying materials with toxicity or ultra-high strength. |

| Stratified Sampling (via scikit-learn) | Ensures that the relative class (or binned property) frequencies are preserved in all data splits (train/val/test). | Critical first step. Prevents the creation of test sets that are artificially "hard" due to missing entire subcategories of materials. |

| Uncertainty Sampling (Active Learning) | Identifies the data points for which the model is most uncertain, guiding targeted data acquisition. | Maximizes the informational value of expensive simulations/experiments to fill gaps in the data distribution. |

Troubleshooting Guides & FAQs

Q1: Why does my dataset contain so many more oxide compositions than nitrides or sulfides? A: This is a common experimental bias. Oxides are often prioritized due to their relative thermodynamic stability in air, simpler synthesis protocols, and historical research focus. This leads to a severe under-representation of other anion chemistries in public repositories.

Q2: When querying computational databases, I find far more DFT calculations for perovskites than for other crystal structures. How does this skew model training? A: This creates a "structural blind spot." Foundation models trained on such data will have high predictive accuracy for perovskite-formers but poor generalizability to glasses, complex intermetallics, or low-symmetry systems, limiting discovery in unexplored chemical spaces.

Q3: My model fails to predict properties for high-entropy alloys (HEAs) despite performing well on binary alloys. What's the root cause? A: The root cause is data scarcity. Experimental data for HEAs is sparse, expensive to generate, and computationally intensive to simulate. Your model lacks sufficient high-dimensional examples to learn the complex interactions in compositionally complex systems.

Q4: How does the "publication bias" toward positive or significant results affect materials data? A: Journals predominantly publish studies on materials with high performance (e.g., high conductivity, strong catalysts) or novel properties. Data on failed syntheses, non-performing materials, or standard baseline compounds is rarely archived, creating a massively skewed view of chemical space where most points appear "successful."

Q5: Why are there more computational records for elemental properties than for temperature-dependent properties? A: Calculating a single energy-atom at 0K is computationally cheap. Simulating finite-temperature dynamics (phonons, free energy) or defect-dependent properties requires orders of magnitude more resources. This skews datasets toward ground-state, pristine properties and away from realistic operating conditions.

Table 1: Compositional & Structural Bias in Major Materials Databases (Estimated Distribution)

| Data Category | Materials Project (%) | ICSD (%) | OQMD (%) | Primary Source of Skew |

|---|---|---|---|---|

| Oxide Compounds | ~70% | ~75% | ~65% | Experimental stability & historical focus |

| Perovskite Structures | ~12% | ~15% | ~10% | High interest in functional properties |

| Binary/ Ternary Systems | ~85% | ~80% | ~75% | Combinatorial explosion limits higher-order |

| Metallic Alloys (HEAs) | <0.5% | <0.1% | <1% | High experimental/computational cost |

| Computed Band Gaps (Direct) | ~60% | N/A | ~55% | Standard DFT workflow output |

| Temperature-Dependent Data | <5% | <10% | <3% | High computational cost for ab initio MD |

Table 2: Experimental vs. Computational Data Generation Metrics

| Metric | Typical Experimental Dataset (e.g., Battery Cyclability) | Typical Computational Dataset (e.g., DFT Formation Energy) |

|---|---|---|

| Data Points Per Week | 10 - 100 | 1,000 - 100,000 |

| Cost Per Data Point | $100 - $10,000+ | $0.10 - $10 (cloud computing) |

| Dimensionality (Features) | High (multi-faceted characterization) | Lower (idealized structures) |

| Failure/Synthesis Data | Rarely published or shared | Often not calculated (only stable phases) |

| Coverage of Space | Deep on specific systems | Broad but shallow and idealized |

Detailed Experimental Protocols

Protocol 1: Generating a Balanced Electrode Material Dataset Objective: To systematically create charge-discharge cycling data for both high-performing and under-performing cathode compositions to mitigate success-only bias.

- Design of Experiment: Use a ternary composition space (e.g., Li-Ni-Mn-Co-O). Create a grid sampling 50 compositions, explicitly including regions known from literature to have poor cyclability.

- Synthesis: For each composition, synthesize via solid-state reaction (precursor powders ball-milled, calcined at 900°C for 12h in air).

- Electrode Fabrication: Mix active material, carbon black, and PVDF binder (80:10:10 wt%) in NMP to form slurry. Cast onto Al foil, dry at 120°C under vacuum, and punch into 12mm discs.

- Electrochemical Testing: Assemble CR2032 coin cells in an Ar-filled glovebox (<0.1 ppm O2/H2O) with Li metal anode and 1M LiPF6 in EC/DMC electrolyte. Cycle cells between 2.5-4.3V at C/10 rate for 100 cycles.

- Data Curation: Record initial capacity, capacity retention at cycle 100, Coulombic efficiency, and voltage profile for ALL compositions, regardless of performance. Annotate with exact synthesis conditions.

Protocol 2: Active Learning for Computational Data Imbalance Objective: To strategically compute DFT properties in sparse regions of a phase diagram.

- Initial Seed: Start with a small, random set of 50 formation energy calculations for a ternary system (e.g., Ag-Cu-Ze).

- Model Training: Train a Gaussian Process Regressor (GPR) on the seed data to predict formation energy and its uncertainty.

- Query Strategy: Use the "expected improvement" acquisition function to select the next 20 compositions with either: a) predicted low stability (exploring negatives) or b) highest prediction uncertainty (exploring unknowns).

- Iteration: Compute DFT for the queried points, add them to the training set, and retrain the GPR. Repeat for 10 cycles.

- Output: A final dataset enriched with data from under-sampled, potentially unstable regions of the phase space, reducing the skew toward only stable compounds.

Visualizations

Title: Sources and Impact of Imbalance in Materials Data

Title: Active Learning Workflow to Mitigate Data Skew

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Addressing Data Imbalance

| Item/Category | Function & Relevance to Imbalance |

|---|---|

| High-Throughput Experimentation (HTE) Robotics | Automates synthesis and characterization to generate large, systematic datasets that include "negative" results, reducing cherry-picking bias. |

| Active Learning Software (e.g., COMBO, AMPL) | Implements intelligent query algorithms to guide next experiments/calculations towards sparse regions of chemical space, balancing datasets. |

| Failed Experiment Logs (Electronic Lab Notebooks) | Mandates structured recording of all synthesis attempts and characterization outputs, creating vital data on non-performing materials. |

| Benchmark Datasets (e.g., Matbench) | Provides standardized, curated test sets covering diverse tasks to evaluate model performance across both data-rich and data-poor domains. |

| Inverse Design Platforms (e.g., GNoME, CSS) | Generates novel, theoretically stable candidates outside of known databases, proposing targets to fill compositional gaps. |

| Data Augmentation Tools | Applies symmetry operations, trivial rotations, and mild noise to crystal structures to artificially increase sample size for under-represented classes. |

| Federated Learning Frameworks | Enables model training on distributed, proprietary datasets (e.g., from industry) without sharing raw data, accessing broader, less biased data pools. |

| Synthetic Data Generators (Classical Force Fields) | Uses fast simulations (MD, MC) to approximate properties for vast numbers of structures, providing preliminary data for sparse regions before DFT. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My materials foundation model performs excellently on validation data but fails to predict properties for novel, rare-element compounds. What is the likely cause and how can I troubleshoot this?

A: This is a classic sign of dataset imbalance and poor generalization. The model has overfit to majority classes (common elements) and cannot extrapolate to the minority tail (rare elements/compounds).

Troubleshooting Protocol:

- Audit Your Training Data: Calculate the frequency distribution of target variables (e.g., element composition, property range). Use the following protocol:

- Perform Stratified Error Analysis: Split your error analysis by data subgroups.

- Group your test/validation predictions by the rarity of the primary element.

- Compare Mean Absolute Error (MAE) or accuracy metrics between "common" and "rare" groups. A significant disparity confirms bias.

Q2: During fine-tuning of a pretrained foundation model on my imbalanced dataset, loss converges quickly but precision for the minority class (e.g., high-efficiency photovoltaic materials) remains near zero. What advanced sampling or loss techniques should I implement?

A: Standard gradient descent is biased by frequency. Implement techniques that adjust the learning signal.

Troubleshooting Protocol:

- Algorithmic Solution Selection Guide: Choose based on your imbalance severity (see Table 1).

- Protocol for Implementing Focal Loss: Focal Loss down-weights easy, majority class examples, focusing training on hard, minority examples.

- Protocol for Synthetic Minority Oversampling (SMOTE) in Materials Data:

- Caution: Use SMOTE only on feature vectors where interpolation in latent space is physically meaningful (e.g., compositional descriptors). Avoid on raw crystal structures.

Q3: How do I quantitatively evaluate if my mitigation strategy for data imbalance is working, beyond overall accuracy?

A: Overall accuracy is misleading. You must use a comprehensive suite of metrics evaluated per class or subgroup.

Troubleshooting Protocol:

- Generate a Multi-Metric Evaluation Table: For each key subgroup (e.g., material class), calculate the following after applying your mitigation strategy. Compare against a baseline model.

- Protocol for Calculating Subgroup Metrics:

Data Presentation

Table 1: Comparison of Imbalance Mitigation Techniques for Materials Foundation Models

| Technique | Category | Best For Imbalance Ratio | Key Hyperparameter | Impact on Generalization | Computational Overhead |

|---|---|---|---|---|---|

| Class-Weighted Loss | Algorithmic | Low (< 10:1) | class_weight (e.g., 'balanced') |

Moderate improvement on minority tail. Risk of over-correcting. | Negligible |

| Focal Loss | Algorithmic | Moderate (10:1 to 100:1) | gamma (focusing parameter) |

Good for focusing on hard minority examples. | Low |

| Random Over-Sampling | Data-Level | Very Low (< 5:1) | Sampling strategy | High risk of overfitting to minority noise. | Low |

| SMOTE | Data-Level | Moderate (10:1 to 50:1) | k_neighbors |

Can improve generalization if features are smooth. Risk of unrealistic interpolations. | Medium |

| Under-Sampling (Cluster-Based) | Data-Level | Very High (> 100:1) | Cluster algorithm, target size | Can improve learning signal but discards majority data. | High (for clustering) |

| Two-Phase Transfer Learning | Hybrid | Extreme (> 1000:1) | Phase 1/2 learning rate | High. Pre-train on broad data, fine-tune with careful re-weighting. | High |

Table 2: Example Evaluation Metrics Before and After Applying Focal Loss (Hypothetical Catalyst Discovery Dataset)

| Material Subgroup (by Central Element) | Support (Samples) | Baseline Model (Weighted BCE) | Model with Focal Loss (γ=2) | ||||

|---|---|---|---|---|---|---|---|

| Precision | Recall | F1 | Precision | Recall | F1 | ||

| Common (e.g., Fe, Co, Ni) | 12,500 | 0.89 | 0.93 | 0.91 | 0.87 | 0.94 | 0.90 |

| Less Common (e.g., Ru, Ir) | 1,200 | 0.62 | 0.45 | 0.52 | 0.65 | 0.67 | 0.66 |

| Rare/Earth-Abundant (e.g., Ce, Mn) | 85 | 0.10 | 0.01 | 0.02 | 0.31 | 0.25 | 0.28 |

| Macro-Average | - | 0.54 | 0.46 | 0.48 | 0.61 | 0.62 | 0.61 |

Mandatory Visualization

Title: Workflow for Diagnosing and Mitigating Data Imbalance

Title: Two-Phase Transfer Learning with and without Imbalance Mitigation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Imbalance-Aware Materials Foundation Model Research

| Item / Solution | Category | Function / Rationale |

|---|---|---|

| Imbalanced-Learn (imblearn) | Software Library | Provides off-the-shelf implementations of SMOTE, ADASYN, cluster under-sampling, and SMOTE variants crucial for data-level interventions. |

| Class-Weighted & Focal Loss | Loss Function | Built into PyTorch (nn.CrossEntropyLoss(weight=...)) and TensorFlow. Critical for algorithm-level correction during model training. |

| SHAP (SHapley Additive exPlanations) | Explainability AI | Quantifies the contribution of each input feature to a prediction. Essential for auditing model bias towards specific majority-class features. |

| Weights & Biases (W&B) / MLflow | Experiment Tracking | Logs hyperparameters, loss curves, and subgroup-specific metrics across hundreds of runs to systematically evaluate mitigation strategies. |

| Matbench / OQMD | Benchmark Datasets | Provides standardized, (often imbalanced) materials datasets for controlled testing of model generalization and fairness. |

| Cluster-Centric Sampling Script | Custom Protocol | Script to perform informed under-sampling by selecting representative majority samples from cluster centers, preserving data manifold structure. |

Welcome to the Technical Support Center. This resource is designed within the context of advancing materials foundation models, where severe data imbalance across key material classes poses significant challenges for model training and prediction accuracy. Below are troubleshooting guides and FAQs addressing common experimental issues in imbalanced domains.

FAQ & Troubleshooting Guides

Q1: In High-Entropy Alloy (HEA) synthesis, my experimental yield of single-phase solid solutions is extremely low compared to the prevalence of intermetallic or multiphase byproducts in the literature. What could be going wrong? A: This reflects the severe compositional imbalance in the HEA phase space, where the vast majority of multi-element combinations do not form stable single-phase structures. Troubleshooting steps:

- Verify Synthesis Protocol: Ensure rapid solidification is used to kinetically trap the solid solution. For arc melting, confirm multiple flipping and remelting steps (>5 times) for homogeneity.

- Check Elemental Selection: Recalculate your parametric criteria (e.g., ΔHmix, δ, VEC). A common error is overlooking atomic size difference (δ > 6.5% often promotes phase separation).

- Characterization Limit: Use high-resolution XRD coupled with Rietveld refinement and TEM to detect minor secondary phases your standard XRD may have missed.

Q2: When testing a novel solid-state electrolyte (SSE), my ionic conductivity measurements are orders of magnitude lower than the top-performing materials reported. How can I diagnose the issue? A: The database for SSEs is highly imbalanced, dominated by low-conductivity compounds. Your issue likely lies in grain boundary resistance or poor densification.

- Protocol Check: Are you performing Electrochemical Impedance Spectroscopy (EIS) on a properly densified pellet? Follow this protocol:

- Pelletization: Apply at least 300 MPa of uniaxial pressure.

- Sintering: Optimize temperature and time to avoid elemental loss (e.g., Li evaporation) and achieve >90% theoretical density. Use a sacrificial powder of the same composition.

- Electrode Application: Apply symmetric blocking electrodes (e.g., Au, sputtered Pt) evenly.

- Data Analysis: Ensure you are fitting the EIS spectrum correctly. The high-frequency intercept on the real axis is the bulk resistance (Rb). The semicircle is often grain boundary resistance (Rgb). A "depressed" semicircle indicates you may be misattributing contributions.

Q3: For porous material (MOF/COF) gas adsorption experiments, my BET surface area is inconsistently low and does not match benchmark isotherms. A: The imbalance between idealized crystal structures and real, defective samples is key.

- Activation Procedure: This is the most critical step. Follow meticulously:

- Solvent Exchange: Exchange synthesis solvent with a low-surface-tension solvent (e.g., acetone) over 24 hours.

- Activation: Use a supercritical CO2 dryer or a gentle thermal vacuum protocol (e.g., ramp to 120°C under dynamic vacuum over 12 hours).

- Degas Time: Insufficient outgassing before analysis leaves pore-filling solvent, collapsing the framework. Extend degas time and monitor pressure stability.

- BET Validity: Apply the Rouquerol criteria. For microporous materials, use the relative pressure (P/P0) range where n(1-P/P0})) increases with P/P0. Applying the BET model outside its valid range inflates results.

Data Presentation: Reported Performance Ranges in Imbalanced Domains

Table 1: Property Distribution in Key Imbalanced Material Classes

| Material Class | Dominant (Majority) Phase/Property | Minority (High-Performance) Target | Typical Reported Range (Minority) | Prevalence Ratio (Est. Majority:Minority) |

|---|---|---|---|---|

| High-Entropy Alloys | Multi-phase/Intermetallic mixtures | Single-Phase FCC/BCC Solid Solution | Yield Strength: 200-1000 MPa | > 100:1 in composition space |

| Solid-State Electrolytes | Low-conductivity compounds (< 10-5 S/cm) | High Li+ Conductivity (> 10-3 S/cm) | Ionic Conductivity: 10-3 - 10-2 S/cm | ~ 50:1 in experimental literature |

| Porous Materials (MOFs) | Structures with low porosity or stability | Ultra-High Surface Area (> 3000 m²/g) | BET Surface Area: 3000 - 7000 m²/g | ~ 20:1 in synthesized examples |

Experimental Protocols

Protocol 1: Synthesis of Single-Phase Cantor-like HEA (Arc Melting)

- Weighing: Precisely weigh pure elements (purity > 99.9 wt%) to equiatomic or near-equiatomic ratios in an Ar-glovebox.

- Melting: Place the mixture in a water-cooled copper hearth. Evacuate the chamber to < 10-3 Pa, then flush with high-purity Ar. Melt the button using a DC arc, with a Ti getter to scavenge residual oxygen.

- Homogenization: Flip and remelt the alloy button at least 5 times.

- Cooling: Allow the final ingot to cool inside the chamber under Ar atmosphere.

- Characterization: Perform XRD (Cu-Kα). For single-phase FCC/BCC, expect sharp, primary peaks without minor phase peaks. Confirm with SEM/EDS mapping.

Protocol 2: EIS Measurement for Solid-State Ionic Conductivity

- Pellet Preparation: Densify 150-300 mg of SSE powder in a 10-mm diameter die under 300-400 MPa for 2 minutes.

- Sintering: Fire the pellet in a covered alumina crucible at the optimal temperature (material-specific) for 2-12 hours.

- Electrode Application: Apply symmetric conductive electrodes (e.g., Au paste, sputtered Pt) on both faces.

- Measurement: Place the pellet in a spring-loaded measurement cell. Run EIS (e.g., 0.1 Hz to 7 MHz, 10 mV amplitude) under inert atmosphere.

- Calculation: Fit the Nyquist plot to an equivalent circuit (e.g., Rb-(Rgb//CPE)). Calculate total conductivity σ = L / (R * A), where L is thickness, A is electrode area, and R = Rb + Rgb.

Visualizations

Title: HEA Single-Phase Synthesis Troubleshooting Flow

Title: Solid-State Electrolyte Pellet Prep and Testing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Featured Experiments

| Item | Function | Example/Specification |

|---|---|---|

| High-Purity Metal Elements | Raw materials for HEA synthesis to avoid impurity-driven phase separation. | >99.9 wt% purity, chunk or wire form (e.g., Al, Co, Cr, Fe, Ni). |

| Argon Gas Purifier | Creates inert atmosphere for oxygen-sensitive synthesis (HEA, SSE). | In-line gas purifier to reduce O₂/H₂O to <1 ppm. |

| High-Pressure Pellet Die | Densifies powder samples into robust pellets for SSE testing. | Uniaxial die, stainless steel, compatible with 10-13 mm diameter. |

| Gold Sputtering Target | Creates thin, uniform blocking electrodes for EIS measurements on SSEs. | 2" diameter, 99.99% purity, for magnetron sputter coater. |

| Supercritical CO₂ Dryer | Activates porous materials by removing solvent without pore collapse. | Critical for MOF/COF activation prior to gas sorption. |

| Reference Electrolyte | Calibration standard for electrochemical cell setup validation. | 1M KCl solution for checking electrode performance. |

Troubleshooting Guides & FAQs

Q1: Our materials discovery dataset has a high proportion of perovskites but very few chalcogenides. The model performs poorly on predicting properties for chalcogenides. Is this data imbalance or noisy labels?

A: This is a classic data imbalance issue, not noise. The model is biased toward the majority class (perovskites). Noisy labels refer to incorrect property values (e.g., an erroneous bandgap measurement) within a material class. To confirm, inspect your dataset's class distribution.

Table 1: Key Differences: Imbalance vs. Noise

| Aspect | Data Imbalance | Label Noise |

|---|---|---|

| Core Problem | Skewed distribution of material classes/property ranges. | Incorrect target values (e.g., DFT-calculated property errors). |

| Primary Symptom | High accuracy on majority/head classes, poor performance on minority/tail classes. | High training error, poor general performance, inconsistent predictions. |

| Diagnostic Check | Plot histogram of material class or property value frequencies. | Audit labels for a subset via expert review or cross-validate with high-fidelity experiments. |

| Common in Materials | Overrepresentation of high-throughput DFT data for certain crystal systems. | Errors from DFT approximations, experimental measurement artifacts. |

Experimental Protocol for Diagnosis:

- Class Distribution Analysis: Calculate and plot the frequency of each material prototype (e.g., using the

pymatgenStructureMatcher) or bins of property values. - Noise Audit: For a random sample (e.g., 100 samples) from both head and tail classes, manually verify labels against source publications or recalculate using a consistent, high-fidelity method (e.g., a specific DFT functional).

- Performance Stratification: Evaluate your model's accuracy separately on the head (top 80% by frequency) and long-tail (bottom 20%) portions of your data.

Q2: What are effective sampling strategies to mitigate the long-tail problem when training a materials property predictor?

A: The goal is to give the model sufficient exposure to tail-class materials.

Table 2: Sampling Strategy Comparison

| Strategy | Mechanism | Pros for Materials Discovery | Cons |

|---|---|---|---|

| Random Oversampling | Duplicates samples from tail classes. | Simple; preserves all original tail data. | Leads to overfitting on exact duplicated compositions/structures. |

| SMOTE (Synthetic) | Creates interpolated synthetic samples in feature space. | Can generate new, plausible materials in underrepresented regions. | Risk of generating physically unrealistic or unstable crystal structures. |

| Weighted Loss | Assigns higher penalty for errors on tail-class samples during training. | No distortion of original data; easy to implement. | May slow convergence; requires careful tuning of class weights. |

| Two-Phase Learning | Train on balanced subset first, then fine-tune on full data. | Builds good general features before exposure to imbalance. | More complex training pipeline. |

Experimental Protocol for Two-Phase Learning:

- Phase 1 - Representation Learning: Create a balanced subset by randomly sampling an equal number of instances from each material class (or property bin). Train the foundation model on this subset until convergence.

- Phase 2 - Fine-Tuning: Initialize the model with Phase 1 weights. Continue training on the full, imbalanced dataset using a weighted loss function (weight inversely proportional to class frequency).

- Validation: Use a stratified validation set that maintains the original imbalance to gauge real-world performance.

Q3: How can we distinguish between a truly rare material with unique properties (tail class) and an outlier due to data corruption or noise?

A: This requires a multi-faceted validation approach.

Experimental Protocol for Outlier vs. Tail Discrimination:

- Structural/Compositional Plausibility Check: Use tools like the

pymatgenStructureclass to check for reasonable bond lengths, coordination environments, and negative Coulomb matrices. Compare to known databases (Materials Project, OQMD). - Local Dense Sampling: In the feature space (e.g., from a pre-trained model's penultimate layer), check if the suspect sample is isolated or part of a small, dense cluster. Isolated points are likely noise; small clusters may be genuine tail classes.

- Property Prediction Consistency: Use an ensemble of models trained with different seeds or initializations. If predictions for a sample vary wildly, it's likely a noisy outlier. Genuine tail samples should have more consistent predictions.

- High-Fidelity Verification: Select the most promising candidate tail materials from the cluster analysis and perform higher-fidelity simulation (e.g., hybrid DFT instead of GGA-PBE) or consult domain literature for experimental evidence.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Addressing Imbalance & Noise

| Item / Solution | Function | Example / Note |

|---|---|---|

| Pymatgen | Python library for materials analysis. Critical for featurization, structural analysis, and accessing databases. | Use StructureMatcher for grouping prototypes, Composition for stoichiometry analysis. |

| MatMiner | Platform for data mining; provides a vast array of material descriptors (features). | Use to generate robust feature sets that help distinguish tail classes from noise. |

| imbalanced-learn | Python library offering implementations of SMOTE, ADASYN, and various sampling algorithms. | Caution: Apply synthetic sampling in descriptor space, not directly on crystal structures. |

| Weights & Biases (W&B) / TensorBoard | Experiment tracking tools. | Essential for monitoring stratified performance metrics (head vs. tail accuracy) across training runs. |

| High-Fidelity Computation Code | Software for definitive property calculation to audit labels. | VASP, Quantum ESPRESSO for DFT; DLPOLY for molecular dynamics. Used to establish "ground truth" for critical samples. |

| Crystallography Databases | Sources for validating material plausibility. | Materials Project, Inorganic Crystal Structure Database (ICSD), OQMD. |

Workflow & Conceptual Diagrams

Diagnosis and Mitigation Workflow for Imbalance and Noise

The Long-Tail Problem in Materials Data

Balancing the Scales: Modern Techniques for Imbalanced Materials Data

Technical Support Center: Troubleshooting Guides and FAQs

FAQ: Core Concepts & Strategic Choice

Q1: In our materials discovery dataset, the 'high ionic conductivity' class is rare. Should I use SMOTE, ADASYN, or undersampling? A: The choice depends on your dataset size and the nature of the rarity.

- SMOTE is preferred when you have a moderate number of minority samples (e.g., 50-100) and want to create a balanced dataset without losing majority class information. It generates synthetic samples along line segments between existing neighbors.

- ADASYN is better when the minority class distribution is highly complex and some sub-regions are harder to learn. It focuses on generating more synthetic data for minority samples that are difficult to classify.

- Informed Undersampling (e.g., NearMiss, Tomek Links) is suitable for very large datasets where computational efficiency is a concern, and when the majority class contains redundant or noisy examples that can be removed without significant information loss.

Q2: My model trained on SMOTE-generated data shows excellent validation accuracy but fails terribly on real-world, imbalanced test data. What happened? A: This indicates overfitting to the synthetic data distribution. SMOTE can create unrealistic samples if the minority class is very small or clustered, leading to artificial decision boundaries. The model learns patterns that do not generalize.

- Troubleshooting Steps:

- Apply Cross-Validation Correctly: Ensure you apply SMOTE only on the training fold within each CV iteration, never on the entire dataset before splitting. This prevents data leakage.

- Use Hybrid Approaches: Combine SMOTE with informed undersampling of the majority class (e.g., SMOTEENN) to create more naturalistic clusters.

- Evaluate with the Right Metrics: Stop using accuracy. Monitor precision, recall, F1-score, and especially the Area Under the Precision-Recall Curve (AUPRC) on a hold-out test set that reflects the true imbalance.

- Visualize: Project your synthetic and real data into 2D (using PCA or t-SNE) to check if SMOTE creates plausible samples in feature space.

Q3: When using ADASYN, I get a "MemoryError" on my large molecular descriptor dataset. How can I resolve this? A: ADASYN's density estimation step can be computationally intensive.

- Solutions:

- Dimensionality Reduction: First, apply PCA or feature selection to reduce the number of features before oversampling.

- Batch Processing: Implement a custom batch-wise ADASYN. Split the minority class into manageable chunks, apply ADASYN to each, and then combine.

- Switch Algorithm: Consider using Borderline-SMOTE, which has a similar goal but lower computational overhead, or use Random Undersampling on the majority class first to reduce overall size.

Q4: How do I handle categorical features (e.g., crystal system, presence of specific functional groups) when applying SMOTE? A: Standard SMOTE is designed for continuous features.

- Recommended Approach: Use the SMOTENC (SMOTE for Nominal and Continuous) variant. It calculates the median standard deviation for continuous features and uses a different distance measure (e.g., value difference metric) for categorical features when finding k-nearest neighbors. After interpolation, the synthetic categorical feature value is set to the most frequent category among the neighbors.

Experimental Protocols for Validation

Protocol 1: Correct Cross-Validation with SMOTE Objective: To evaluate model performance without data leakage from synthetic sample generation.

- Split the raw, imbalanced dataset into a fixed Hold-Out Test Set (20-30%), reflecting natural imbalance. Set aside.

- On the remaining data (Training/Validation pool), initiate a Stratified K-Fold Cross-Validation loop (e.g., k=5).

- For each fold: a. Isolate the k-1 training folds. b. Apply the chosen oversampling (SMOTE/ADASYN) only to the training folds to balance them. c. Train the model on the resampled training set. d. Validate on the untouched, imbalanced validation fold (the k-th fold).

- Calculate performance metrics (Precision, Recall, F1, AUPRC) across all validation folds.

- Final Model Training: Apply SMOTE to the entire Training/Validation pool. Train the final model. Evaluate conclusively on the Hold-Out Test Set from Step 1.

Protocol 2: Evaluating the Impact of Different Strategies Objective: To quantitatively compare the efficacy of different data-level strategies for a specific materials informatics task.

- Define a fixed evaluation metric (Primary: AUPRC; Secondary: F1-Score of the minority class).

- Define a fixed base classifier (e.g., Random Forest with set hyperparameters).

- Prepare the dataset with a fixed train/validation/test split.

- Apply the following strategies only to the training set:

- Baseline (No resampling)

- Random Oversampling

- SMOTE

- Borderline-SMOTE

- ADASYN

- Random Undersampling

- Tomek Links

- Combined (e.g., SMOTETomek)

- Train the base classifier on each resampled training set.

- Evaluate each model on the same, untouched validation set.

- Record metrics in a comparison table. Select the top 2-3 strategies for final testing on the hold-out set.

Table 1: Comparative Performance of Resampling Strategies on a Hypothetical Solid-State Electrolyte Screening Dataset Dataset: 10,000 samples; Minority Class ("High Conductivity") = 400 samples (4%). Base Model: Gradient Boosting.

| Resampling Strategy | Precision (Minority) | Recall (Minority) | F1-Score (Minority) | AUPRC | Training Time (s) |

|---|---|---|---|---|---|

| No Resampling (Baseline) | 0.72 | 0.31 | 0.43 | 0.48 | 12.1 |

| Random Oversampling | 0.65 | 0.78 | 0.71 | 0.69 | 14.5 |

| SMOTE (k_neighbors=5) | 0.70 | 0.82 | 0.76 | 0.75 | 18.3 |

| ADASYN | 0.68 | 0.85 | 0.76 | 0.76 | 22.7 |

| NearMiss-2 Undersampling | 0.75 | 0.65 | 0.70 | 0.70 | 8.2 |

| SMOTE + Tomek Links (Hybrid) | 0.71 | 0.83 | 0.77 | 0.75 | 20.1 |

Visualizations

Title: Correct SMOTE Validation Workflow to Prevent Data Leakage

Title: SMOTE Synthetic Sample Generation Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for Data-Level Imbalance Handling

| Item | Function | Typical Use Case |

|---|---|---|

| imbalanced-learn (Python) | Core library offering SMOTE, ADASYN, NearMiss, Tomek Links, and all hybrid methods. | Primary tool for implementing all discussed strategies in a Python environment. |

| scikit-learn | Provides the base estimators, metrics (precisionrecallcurve), and data splitting utilities essential for the validation protocols. | Model training, hyperparameter tuning, and rigorous performance evaluation. |

| SMOTENC variant | Extension of SMOTE within imbalanced-learn to handle mixed data types (continuous and categorical). |

Crucial for materials data containing both numeric descriptors and categorical labels. |

| Seaborn / Matplotlib | Visualization libraries for creating Precision-Recall curves, distribution plots before/after resampling, and 2D data projections (PCA/t-SNE). | Diagnosing the effect of resampling and presenting results. |

| Pandas & NumPy | Data manipulation and numerical computation backbones. | Loading, cleaning, and preprocessing the experimental dataset. |

Troubleshooting Guide & FAQs

General Implementation Issues

Q1: My cost-sensitive model converges but performs worse than a naive classifier on the minority class. What could be the cause? A: This is often due to over-aggressive cost weighting. High misclassification costs can destabilize gradients. Troubleshooting Steps: 1) Verify your cost matrix values. Start with small multiples (e.g., 2-5x) of the inverse class frequency. 2) Implement gradient clipping. 3) Use a validation set with a balanced metric (e.g., F1-score, Matthews Correlation Coefficient) for early stopping, not just training loss.

Q2: When using Focal Loss, my training loss becomes NaN after a few epochs. How do I fix this? A: NaN values typically stem from numerical instability in the loss computation, especially with extreme class imbalances and the modulating factor. Troubleshooting Steps: 1) Add a small epsilon (e.g., 1e-8) to the predicted probabilities in the log calculation. 2) Experiment with a lower focusing parameter (γ). Start with γ=1.0 or 2.0 instead of the original paper's 2.0-5.0. 3) Ensure your model's final layer initialization is appropriate for your task (e.g., bias initialization to reflect prior class probabilities).

Q3: How do I choose between cost-sensitive learning and Focal Loss for my materials dataset? A: The choice depends on your problem formulation and infrastructure. Use Cost-Sensitive Learning if: You have domain knowledge to specify precise misclassification costs (e.g., the cost of missing a promising high-entropy alloy is 10x the cost of a false positive). You are using algorithms like Random Forest or SVM that natively support class weights. Use Focal Loss if: You are training a deep neural network and want an automated way to down-weight easy negatives. Your imbalance is extreme (e.g., >1:1000). You lack clear domain costs and need a robust general solution.

Performance & Validation Issues

Q4: My model's recall for the rare phase is high, but precision is terrible. How can I balance this? A: This indicates the model is being too aggressive in predicting the minority class. Troubleshooting Steps: 1) In cost-sensitive learning, adjust the cost matrix: reduce the false positive cost relative to the false negative cost for the rare class. 2) In Focal Loss, increase the α balancing parameter for the majority class or decrease γ to reduce the focus on hard examples. 3) Post-process predictions by increasing the decision threshold for the minority class and validate using a Precision-Recall curve.

Q5: During k-fold cross-validation, my performance metrics vary wildly. Is this normal for imbalanced data? A: Yes, high variance is common because the rare class may be distributed unevenly across folds. Solution: Use stratified k-fold validation to preserve the class ratio in each fold. For very small minority classes, consider repeated stratified k-fold or bootstrapping to obtain more stable performance estimates.

Table 1: Performance Comparison on Imbalanced Materials Datasets (Hypothetical Study)

| Model Architecture | Dataset (Imbalance Ratio) | Accuracy | Balanced Accuracy | Minority Class F1-Score | Loss Function |

|---|---|---|---|---|---|

| Standard CNN | Crystal Systems (1:15) | 0.94 | 0.76 | 0.45 | Cross-Entropy |

| CNN + Class Weight | Crystal Systems (1:15) | 0.91 | 0.83 | 0.62 | Weighted CE |

| CNN + Focal Loss (γ=2.0) | Crystal Systems (1:15) | 0.90 | 0.87 | 0.71 | Focal Loss |

| Standard CNN | Perovskite Stability (1:50) | 0.98 | 0.51 | 0.12 | Cross-Entropy |

| CNN + SMOTE | Perovskite Stability (1:50) | 0.96 | 0.78 | 0.55 | Cross-Entropy |

| CNN + Focal Loss (γ=3.0) | Perovskite Stability (1:50) | 0.95 | 0.85 | 0.68 | Focal Loss |

Table 2: Typical Hyperparameter Ranges for Algorithm-Level Approaches

| Approach | Key Hyperparameter | Recommended Starting Value | Purpose & Effect |

|---|---|---|---|

| Cost-Sensitive Learning | Cost Multiplier (Minority Class) | 2-10 x inverse class freq. | Directly penalizes misclassification of rare instances. Higher value increases recall. |

| Focal Loss | Focusing Parameter (γ) | 2.0 | Controls how much easy examples are down-weighted. Higher γ focuses more on hard examples. |

| Focal Loss | Balancing Parameter (α) | Inverse class frequency or 0.25 for minority class | Addresses class imbalance independently of the modulating factor. Can be a list per class. |

Experimental Protocols

Protocol 1: Implementing Focal Loss for a Deep Learning Materials Model

Objective: To train a convolutional neural network (CNN) for classifying crystal structures from XRD patterns with a severe class imbalance using Focal Loss.

Materials & Software: Python 3.8+, PyTorch 1.9+, materials science dataset (e.g., XRD patterns from ICSD), GPU workstation.

Procedure:

- Data Preparation: Load and preprocess XRD patterns (normalize intensity, align 2θ axis). Split data into training (70%), validation (15%), and test (15%) sets using stratified sampling.

- Define Focal Loss Function:

- Model & Training Setup: Initialize your CNN. Set

alphaas a tensor of inverse class frequencies or [0.25, 0.75] for binary imbalance. Setgamma=2.0. Use the Adam optimizer. - Validation & Tuning: Monitor validation set Balanced Accuracy and Minority Class F1-Score. If precision is too low, incrementally increase the

alphafor the majority class. If model struggles to learn, reducegammato 1.0. - Evaluation: Report final performance on the held-out test set using Balanced Accuracy, Macro F1-Score, and generate a confusion matrix.

Protocol 2: Cost-Sensitive Learning with Gradient Boosting for Property Prediction

Objective: To predict the formation energy of stable vs. unstable compounds (binary classification) using a cost-sensitive Gradient Boosting Machine.

Materials & Software: Python 3.8+, scikit-learn 1.0+, imbalanced-learn, Matminer featurized dataset.

Procedure:

- Define Cost Matrix: Establish a cost matrix

CwhereC[i, j]is the cost of predicting classiwhen the true class isj. For stable/unstable prediction, a typical matrix might be: [[0, 1], [5, 0]], meaning missing an unstable compound (false negative) is 5x more costly than falsely labeling a stable one as unstable. - Implement Cost-Sensitive Training: Use the

class_weightparameter insklearn.ensemble.HistGradientBoostingClassifier. Calculate weights asweight_class_i = cost_of_misclassifying_class_i. Alternatively, use theMetaCostprocedure from theimbalanced-learnlibrary to reweight training instances. - Threshold Tuning: After training, do not use the default 0.5 decision threshold. Use the validation set to find the threshold that minimizes the total expected cost:

Predicted_Class = argmin_i sum_j P(j | x) * C[i, j], whereP(j|x)is the predicted probability. - Evaluation: Evaluate using total expected cost on the test set, alongside standard metrics.

Visualizations

Focal Loss Computation Workflow

Choosing an Algorithm-Level Approach

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Experiment | Example / Notes |

|---|---|---|

| Focal Loss Implementation (PyTorch/TF) | Core algorithm to dynamically scale cross-entropy loss, focusing training on hard/misclassified examples. | Use official implementations from torchvision.ops or tensorflow-addons. For custom layers, ensure numerical stability with logit clipping. |

Class Weight Calculators (sklearn.utils.class_weight) |

Computes balanced class weights for cost-sensitive learning in traditional ML models. | compute_class_weight('balanced', classes=np.unique(y_train), y=y_train) |

Imbalanced-Learn Library (imblearn) |

Provides MetaCost and other meta-algorithms for making classifiers cost-sensitive. | Essential for cost-sensitive versions of SVM, k-NN, and decision trees. |

Metrics Library (sklearn.metrics) |

Provides evaluation metrics robust to imbalance: Balanced Accuracy, Matthews Correlation Coefficient (MCC), Cohen's Kappa. | Always prefer MCC over accuracy for binary imbalance. |

| Bayesian Hyperparameter Optimization (Optuna) | Optimizes the delicate hyperparameters (γ, α, cost matrix values) efficiently. | Crucial for finding the optimal focusing parameter γ in Focal Loss without exhaustive grid search. |

| Stratified K-Fold Cross-Validator | Ensures relative class frequencies are preserved in each train/validation fold, giving reliable performance estimates. | Use StratifiedKFold from scikit-learn. Never use standard K-Fold on imbalanced data. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My VAE for generating novel alloy compositions only produces blurry and non-diverse outputs. What could be the issue? A: This is a common "posterior collapse" or "KL vanishing" problem. The model ignores the latent space. Implement a cyclical annealing schedule for the KL divergence term. Start with a weight (β) of 0, increase it linearly to 1 over the first 50% of training epochs, then reset it to 0 and repeat. This allows the encoder to learn meaningful representations before being regularized.

Q2: When using a pre-trained materials foundation model (like MatBERT) for transfer learning, my fine-tuned GAN fails to converge. How do I debug this? A: First, freeze all layers of the foundation model and use it only as a feature extractor for your discriminator. Ensure the learning rate for the GAN components is at least 10x higher than that of the frozen features. Use gradient clipping (max norm = 1.0) in both generator and discriminator. Check for mode collapse by monitoring the Fréchet Distance (FID) between real and synthetic minority class feature vectors.

Q3: My synthetic minority crystal structures, generated by a GAN, are physically invalid (e.g., unrealistic bond lengths). How can I enforce physical constraints? A: Integrate a rule-based or ML-based validator into the training loop. Use a post-processing step with a Conditional Variational Autoencoder (CVAE) where the condition is a validity score. Alternatively, add a penalty term to the generator's loss function based on the output of a separately trained property predictor (e.g., a graph neural network that predicts stability).

Q4: During transfer learning, the performance on the original majority classes drops drastically after generating and adding synthetic data. How do I mitigate this catastrophic forgetting? A: Implement Elastic Weight Consolidation (EWC). Compute the Fisher Information Matrix for the important parameters of the original model on the majority class data. During training on the combined (original + synthetic) dataset, add a regularization term that penalizes changes to these important parameters. The loss becomes: Ltotal = Lnew + λ * Σi Fi * (θi - θold,i)².

Q5: How do I quantitatively assess if my synthetic minority data is improving the materials foundation model's performance, beyond simple accuracy? A: Use a suite of metrics. Track precision, recall, and F1-score for the minority class specifically. Use the Matthews Correlation Coefficient (MCC) for a balanced view. Most critically, employ the Area Under the Precision-Recall Curve (AUPRC), which is more informative than ROC-AUC for imbalanced datasets. Perform a t-test on the AUPRC scores from 5 different runs with and without synthetic data.

Table 1: Impact of Synthetic Data on Model Performance for Imbalanced Polymer Discovery Dataset

| Model & Data Strategy | Minority Class F1-Score | Overall MCC | AUPRC | FID (Synthetic vs. Real Minority) |

|---|---|---|---|---|

| Baseline (No Synthetic Data) | 0.18 ± 0.04 | 0.32 | 0.21 | N/A |

| + SMOTE | 0.31 ± 0.03 | 0.41 | 0.38 | N/A |

| + VAE-Generated Data | 0.42 ± 0.05 | 0.53 | 0.45 | 35.2 |

| + Transfer GAN Data (Our Method) | 0.57 ± 0.03 | 0.62 | 0.59 | 12.7 |

Table 2: Comparison of Generative Models for Minority Phase Diagram Data

| Model | Training Time (hrs) | Validity Rate (%) | Diversity (Avg. Pairwise Distance) | Compatibility with Transfer Learning |

|---|---|---|---|---|

| Standard GAN | 48 | 65.1 | 0.74 | Low |

| WGAN-GP | 52 | 78.3 | 0.81 | Medium |

| Conditional VAE | 24 | 92.5 | 0.61 | High |

| Progressive Growing GAN | 76 | 84.7 | 0.89 | Medium |

| Pre-trained GAN w/Adapter | 18 | 88.2 | 0.83 | High |

Experimental Protocols

Protocol 1: Generating Synthetic Minority Electrolyte Compositions using a Transfer-Learning-Augmented GAN

- Data Preparation: Curate a dataset from the Materials Project. Majority class: stable electrolytes (90%). Minority class: high-conductivity, metastable electrolytes (10%). Represent each material as a 200-dimension feature vector using the pre-trained MatScholar model.

- Pre-training: Train a Deep Convolutional GAN (DCGAN) on the majority class data until convergence (FID < 20).

- Adapter Integration: Remove the final layer of the pre-trained generator. Insert a lightweight, trainable adapter module (two fully-connected layers with ReLU). Freeze all original DCGAN weights.

- Minority Generation Fine-tuning: Train only the adapter module using feature vectors from the minority class. Use a feature-matching loss for the generator: L = ||E[f(D(real_minority))] - E[f(D(G(z)))]||², where f is an intermediate layer output of the discriminator.

- Validation: Pass generated compositions through a DFT-based stability checker (e.g., pymatgen's phase analysis). Synthesize top-5 candidates for physical validation.

Protocol 2: Integrating Synthetic Data into a Materials Foundation Model for Imbalanced Classification

- Base Model: Start with a pre-trained Crystal Graph Convolutional Neural Network (CGCNN).

- Synthetic Data Augmentation: Generate synthetic minority samples using the trained Transfer GAN from Protocol 1. Mix with real data at a 1:1:2 ratio (realmajority : realminority : synthetic_minority).

- Elastic Weight Consolidation (EWC) Fine-tuning:

- Compute diagonal Fisher Information Matrix F for CGCNN on the original balanced training set.

- Define new loss: L(θ) = Lclassification(θ) + (λ/2) * Σi Fi * (θi - θ_i)², where θ are the original parameters.

- Set λ = 500 and train for 50 epochs with a reduced learning rate (1e-5).

- Evaluation: Test on a held-out, naturally imbalanced test set. Report per-class metrics and AUPRC.

Mandatory Visualizations

Transfer Learning GAN Workflow for Materials Data

EWC Fine-tuning to Prevent Catastrophic Forgetting

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Resources for Synthetic Data Generation in Materials Science

| Item | Function & Purpose | Example/Resource |

|---|---|---|

| Pre-trained Foundation Model | Provides robust feature representation of materials, enabling meaningful latent space exploration for generative models. | MatBERT, CGCNN, MEGNet, Jarvis. |

| Generative Model Framework | Core architecture for creating new, synthetic data samples. | PyTorch Lightning / TensorFlow with libraries like pytorch-gan-metrics for FID. |

| Physical Validation Software | Filters generated candidates for thermodynamic stability and synthesizability. | pymatgen (PhaseDiagram), ASE, VASP/Quantum ESPRESSO for DFT. |

| Differentiable Descriptor | A representation that allows gradient-based optimization of material structures/properties. | SOAP, Smooth Overlap of Atomic Positions (via dscribe library). |

| Imbalanced Learning Library | Provides advanced sampling and loss functions for handling class imbalance. | imbalanced-learn (SMOTE, ADASYN), TensorFlow Addons (Focal Loss). |

| High-Throughput Experimentation (HTE) Interface | Bridges digital generation to physical synthesis for validation loop. | Chemspeed, Labcyte Echo for automated synthesis of predicted candidates. |

Troubleshooting Guides & FAQs

Q1: When implementing RUSBoost for imbalanced materials data, the ensemble's performance on the minority class (e.g., a rare catalytic property) degrades despite increasing the number of weak learners. What is the root cause and solution?

A: This often stems from excessive under-sampling in the RUSBoost algorithm, which can lead to a critical loss of informative majority class instances needed to define decision boundaries. The boosting process then amplifies errors from these impoverished training subsets.

- Solution: Adjust the

sampling_strategyparameter in the RUSBoost implementation to retain a higher percentage of the majority class (e.g., 0.5 instead of 0.1). Complement this by increasing the number of boosting iterations (n_estimators) and reducing the learning rate (learning_rate) to allow for more gradual, stable learning. Validate using a metric like Matthews Correlation Coefficient (MCC) or the F2-score, which is more sensitive to minority class performance.

Q2: My hybrid pipeline (SMOTE + Random Forest) works well on hold-out test sets but fails dramatically when deployed on new, external experimental datasets. How can I diagnose and fix this generalization failure?

A: This indicates overfitting to the artificial data distribution created by the sampling method. SMOTE may generate noisy or unrealistic synthetic samples in high-dimensional feature spaces, which the ensemble memorizes.

- Solution: First, apply rigorous cross-validation with grouped splits (by material batch or synthesis protocol) to prevent data leakage. Second, replace SMOTE with a variant like SVMSMOTE or KMeansSMOTE, which are more cautious about the region where synthetic data is generated. Third, integrate a feature selection step prior to sampling to reduce dimensionality. Lastly, consider moving to a probability calibration method like Platt scaling for the final ensemble to improve probability estimates on new data.

Q3: In a bagging ensemble with cost-sensitive decision trees for predicting polymer stability, computational cost is prohibitive. What are the most effective strategies to reduce training time without significant performance loss?

A: The primary levers are reducing the dimensionality of the feature space and optimizing the base estimator.

- Protocol: 1) Feature Pre-screening: Use a fast, univariate filter method (e.g., ANOVA F-value) to remove low-variance and non-predictive descriptors. 2) Base Estimator Simplification: Limit

max_depthof each cost-sensitive tree and increasemin_samples_leaf. 3) Subsampling: Instead of bootstrapping, use a fixed, smaller random subset (e.g., 50%) of the pre-processed data for each bagging iteration. 4) Parallelization: Ensuren_jobs=-1is set to utilize all CPU cores. The table below quantifies the expected trade-offs.

Table 1: Impact of Optimization Strategies on Ensemble Performance & Speed

| Strategy | Expected Training Time Reduction | Potential Impact on MCC | Recommended For |

|---|---|---|---|

| Feature Pre-screening (50% cut) | 60-75% | Low (0.01-0.03 decrease) | High-dimensional descriptor sets (>500 features) |

| Simplified Base Trees (max_depth=10) | 40-60% | Moderate (0.05-0.10 decrease) | Large datasets (>100k instances) |

| Subsampling (50% without replacement) | 50% | Variable (Needs validation) | Very large datasets where bootstrapping is costly |

| All Combined | 85-90% | Moderate-High (Requires careful tuning) | Rapid prototyping and hyperparameter search |

Q4: How do I choose between a hybrid method (e.g., SMOTEBagging) and a boosting method (e.g., RUSBoost/CostBoost) for my imbalanced materials dataset?

A: The choice depends on the nature of the imbalance and computational constraints.

- Decision Protocol: Follow this diagnostic workflow:

- Compute Dataset Metrics: Skew ratio (#majority/#minority) and number of total samples.

- If Skew > 100 and samples < 10,000: Prefer RUSBoost. Its aggressive under-sampling is computationally efficient and often effective for extreme imbalance.

- If Skew < 100 and feature space is complex/non-linear: Prefer a hybrid method like SMOTEBagging or Balanced Random Forest. It reduces variance and models the minority class space more thoroughly.

- If misclassification costs are quantifiable (e.g., cost of missing a novel battery material): Use Cost-sensitive Boosting, explicitly assigning higher weights to the minority class.

- Always validate using time-series or group-wise cross-validation to avoid optimistic bias.

Experimental Protocols

Protocol 1: Benchmarking Ensemble Methods for Imbalanced Materials Data

Objective: Compare the performance of RUSBoost, SMOTEBagging, and Balanced Random Forest on a dataset of solid-state electrolyte candidates with imbalanced conductivity labels.

- Data Preparation: Split data 70/15/15 (train/validation/test) stratified by label. Apply standardization (Z-score) to all continuous features based on training set statistics.

- Model Training: For each algorithm, perform a grid search on the validation set:

- RUSBoost:

n_estimators: [100, 200, 500];learning_rate: [0.01, 0.1, 1];sampling_strategy: [0.1, 0.3, 0.5]. - SMOTEBagging:

n_estimators: [50, 100];max_samples: [0.5, 0.7]; SMOTEk_neighbors: [3, 5]. - Balanced Random Forest:

n_estimators: [100, 200];max_depth: [10, 20, None];class_weight: 'balanced_subsample'.

- RUSBoost:

- Evaluation: Report on the independent test set: Precision-Recall AUC, F1-score (for minority class), and Matthews Correlation Coefficient (MCC).

- Statistical Significance: Perform a paired 5x2-fold cross-validation t-test on the MCC metric across the best-found models.

Protocol 2: Implementing a Custom Cost-Sensitive RUSBoost for High-Throughput Screening

Objective: Optimize an ensemble to maximize recall for rare, high-activity catalysts without an untenable drop in precision.

- Define Cost Matrix: Assign misclassification costs. Example: False Negative (missed catalyst) cost = 10, False Positive cost = 1.

- Algorithm Modification: Modify the boosting step of RUSBoost to update instance weights based on the defined cost matrix instead of just classification error.

- Training: Use a rolling temporal validation (train on earlier experimental batches, validate on later ones). Tune the

sampling_strategyto maintain a minimum pool of majority class examples for boundary learning. - Threshold Tuning: Post-training, adjust the decision threshold on the validation set to achieve the desired operating point on the Recall-Precision curve.

- Deployment: The final model outputs a "cost-adjusted score"; materials scoring above the tuned threshold are flagged for validation.

Diagrams

Diagram 1: Hybrid Ensemble Workflow for Imbalanced Data

Diagram 2: Algorithm Selection for Imbalanced Materials Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Implementing Hybrid/Ensemble Methods

| Item (Software/Package) | Function | Key Parameter(s) to Tune |

|---|---|---|

| imbalanced-learn (Python) | Provides implementations of SMOTE, its variants (ADASYN, SVMSMOTE), and ensemble wrappers like RUSBoost, BalancedBagging. | sampling_strategy, k_neighbors (SMOTE), replacement (RUSBoost). |

| Scikit-learn | Foundational library for base estimators (Decision Trees, SVMs), bagging (RandomForest), and evaluation metrics. | class_weight, max_depth, n_estimators, max_samples. |

| XGBoost / LightGBM | Efficient gradient boosting frameworks with built-in cost-sensitive learning via scale_pos_weight and class_weight. |

scale_pos_weight, max_depth, learning_rate, subsample. |

| CUDA-enabled Hardware | GPU acceleration for training large ensembles on high-dimensional materials descriptors (e.g., from DFT or graph neural networks). | Memory allocation, number of threads. |

| Matthews Correlation Coefficient (MCC) | A single, informative metric for model selection on imbalanced datasets. Provides a balanced measure between all four confusion matrix categories. | N/A (Evaluation Metric). |

| SHAP (SHapley Additive exPlanations) | Post-hoc explainability tool to interpret ensemble predictions and identify key material features driving minority class identification. | masker, background_data. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: After applying SMOTE to my Matbench dataset, my model's performance on the test set decreased significantly. What went wrong?

A: This is a common issue when synthetic samples are generated across improper decision boundaries. Ensure you apply SMOTE only to the training fold during cross-validation, not to the entire dataset before splitting. Data leakage from the test/validation set into the synthetic generation process will create an unrealistic performance estimate. Use the imblearn.pipeline.Pipeline to integrate SMOTE safely within the CV loop.

Q2: When using the OQMD, how do I handle the severe imbalance between abundant and rare material phases? A: The OQMD's natural distribution is heavily skewed. We recommend a hybrid strategy:

- Algorithmic Approach: First, apply Random Under-Sampling (RUS) on the majority classes (e.g., binary oxides) to a reasonable threshold.

- Loss Function Approach: Follow this with a Weighted Random Sampler in your DataLoader or use a class-weighted loss function (e.g.,

nn.CrossEntropyLoss(weight=class_weights)). The weights should be the inverse of the class frequency. - Validation: Always maintain a hold-out test set with the original, unmodified distribution to evaluate real-world performance.

Q3: My foundation model pretrained on imbalanced data shows poor zero-shot performance on rare material classes. How can I mitigate this during pretraining? A: This is a core thesis challenge. Mitigation must occur at the pretraining stage:

- Informed Sampling: Use Inverse Probability of Sampling (IPS) weighting when sampling batches from your large-scale database (e.g., OQMD). This gives rare classes a higher probability of being included in a batch.

- Gradient Modulation: Implement a gradient penalty or use Focal Loss, which down-weights the loss for well-learned (abundant) classes, forcing the model to focus on harder, rarer samples.

- Proxy Tasks: Design pretraining proxy tasks that are inherently balanced, such as denoising or masked token prediction, rather than property prediction which may be skewed.

Q4: I get a memory error when trying to compute class weights for a massively imbalanced dataset. What is a workaround? A: For extremely large datasets (common in OQMD), avoid computing weights on the entire set. Use a streaming approximation:

Experimental Protocols & Data

Protocol 1: Benchmarking Imbalance Techniques on Matbench Dielectric

- Objective: Compare the efficacy of different imbalance mitigation techniques on a known skewed dataset.

- Methodology:

- Load the

Matbench_Dielectricdataset. - Define a 5-fold cross-validation split using the provided scaffold split.

- For each mitigation technique (Baseline, SMOTE, RandomUnderSampler, Class-Weighted Loss), instantiate a pipeline with a fixed model (e.g., Random Forest or DNN).

- Train and validate exclusively on the training fold indices.

- Evaluate on the untouched test fold using F1-score (macro-averaged) and Balanced Accuracy.

- Aggregate results across all 5 folds.

- Load the

Protocol 2: Evaluating a Mitigated Pipeline on OQMD Stability Prediction

- Objective: Assess whether imbalance mitigation improves the identification of novel, potentially stable rare phases.

- Methodology:

- Query the OQMD for formation energy and stability flags (e.g.,

stability< 0.1 eV/atom). This creates a highly imbalanced binary target. - Split data temporally by publication date to prevent data leakage (e.g., train on data before 2020, test on 2020+).

- Apply the hybrid RUS + Weighted Loss strategy from Q2 during training.

- Train a crystal graph neural network (CGCNN) or ALIGNN model.

- Evaluate using Precision-Recall AUC and recall at high precision, as these are more informative than ROC-AUC for imbalanced tasks.

- Query the OQMD for formation energy and stability flags (e.g.,

Table 1: Performance Comparison on Matbench Dielectric (5-fold CV Macro F1-Score)

| Mitigation Technique | Model (Random Forest) | Model (LightGBM) | Notes |

|---|---|---|---|

| Baseline (No Mitigation) | 0.62 ± 0.04 | 0.65 ± 0.03 | Poor recall for minority classes. |

| SMOTE | 0.71 ± 0.03 | 0.74 ± 0.02 | Significant improvement but increased runtime. |

| Random Under-Sampling | 0.68 ± 0.05 | 0.70 ± 0.04 | Good improvement, faster than SMOTE. |

| Class-Weighted Loss | 0.73 ± 0.02 | 0.76 ± 0.02 | Best performance for tree-based methods. |

Table 2: OQMD Stability Prediction Results (Temporal Split)

| Training Strategy | Precision-Recall AUC | Recall at Precision=0.8 | Novel Stable Materials Found (Top 100) |

|---|---|---|---|

| Standard Training | 0.31 | 0.05 | 7 |

| RUS + Weighted Loss | 0.45 | 0.18 | 23 |

Visualizations

Diagram Title: ML Pipeline with Imbalance Mitigation Steps

Diagram Title: Balanced Pretraining for Materials Foundation Models

The Scientist's Toolkit: Essential Research Reagents & Software

| Item/Category | Function in Imbalance Mitigation | Example/Note |

|---|---|---|

| Imbalanced-Learn (imblearn) | Core Python library offering SMOTE, ADASYN, RandomUnderSampler, and pipeline integration. | Use imblearn.pipeline.make_pipeline to prevent data leakage. |

| Class-Weighted Loss Functions | Modifies the loss calculation to penalize misclassification of rare classes more heavily. | PyTorch: torch.nn.CrossEntropyLoss(weight=weights). For Focal Loss, use torchvision.ops.sigmoid_focal_loss. |

| Weighted Random Sampler | Ensures each batch during training has a balanced representation of classes. | PyTorch: torch.utils.data.WeightedRandomSampler. Critical for foundation model pretraining. |

| Matbench & OQMD Loaders | Provides standardized, curated access to benchmark materials datasets with defined splits. | from matminer.datasets import load_dataset. Use OQMD API with pymatgen and oqmd.org. |

| Evaluation Metrics (scikit-learn) | Metrics that are robust to imbalance, moving beyond simple accuracy. | sklearn.metrics.balanced_accuracy_score, precision_recall_curve, f1_score(average='macro'). |

| CGCNN/ALIGNN Frameworks | Graph neural network architectures specifically designed for crystal structures. | These are the standard models for predictive tasks on materials like those in OQMD. |

| Hyperparameter Optimization | Systematically search for optimal mitigation parameters (e.g., sampling strategy, loss weights). | Use Optuna or Ray Tune to optimize for Balanced Accuracy or PR-AUC. |

Diagnosing and Fixing Pitfalls in Imbalanced Model Training

Technical Support Center

FAQs and Troubleshooting for Performance Metric Analysis in Imbalanced Materials & Drug Discovery Datasets

Q1: My high-AUC-ROC model is failing to identify any rare, high-value material candidates. What's wrong? A: A high Area Under the Receiver Operating Characteristic Curve (AUC-ROC) can be misleading under severe class imbalance, as it is insensitive to changes in the class distribution. The ROC curve plots the True Positive Rate (Recall) against the False Positive Rate. When the negative class (e.g., common materials) is vast, a large number of false positives can be hidden, inflating the FPR denominator and making the metric seem robust. You are likely missing True Positives (rare candidates). Troubleshooting Guide:

- Immediate Action: Switch primary evaluation to Precision-Recall (PR) analysis.

- Diagnosis: Calculate the AUC-PR (Area Under the Precision-Recall Curve). For imbalanced datasets, AUC-PR directly focuses on the performance of the positive (minority) class and will drop significantly if your model is poor at finding true rare candidates.

- Protocol: Use the following Python snippet to compare:

- Interpretation: A large gap (e.g., AUC-ROC=0.95, AUC-PR=0.30) is a major red flag confirming the issue.

Q2: After adjusting the decision threshold to improve recall of a rare catalytic property, my model's precision collapsed. How do I analyze this trade-off? A: You are observing the fundamental Precision-Recall trade-off. Focusing on a single threshold or metric (like F1 at a default 0.5 threshold) is insufficient. Troubleshooting Guide:

- Immediate Action: Generate and analyze the full Precision-Recall Curve.

- Diagnosis: Plot Precision vs. Recall across all decision thresholds. Identify the "knee" or point where precision drops precipitously.

- Protocol:

- Calculate precision and recall for a range of thresholds.

- Plot the PR curve.

- Calculate the F1 score across thresholds:

F1 = 2 * (precision * recall) / (precision + recall). - Identify the threshold that maximizes F1 or aligns with your project's cost-benefit tolerance (e.g., "Recall must be >0.8").

- Visualization: See the Precision-Recall Trade-off Analysis diagram below.

Q3: The Matthews Correlation Coefficient (MCC) for my multi-class formulation screening model is near zero, but accuracy is high. What does this indicate? A: MCC is a balanced measure that considers all four confusion matrix categories (TP, TN, FP, FN). A value near zero suggests your model's predictions are no better than random, despite high accuracy—a classic sign of evaluation failure on imbalanced data where the majority class dominates the accuracy score. Troubleshooting Guide:

- Immediate Action: Examine the per-class performance and the confusion matrix.

- Diagnosis: High accuracy is likely achieved by correctly predicting only the majority class(es), while failing on minority classes. MCC's sensitivity to all errors reveals this.