AI Lab Partners: How LLM Agents Are Automating Materials Discovery and Drug Development

Abstract

This article explores the transformative role of Large Language Model (LLM) agents in automating materials research and drug discovery workflows. Aimed at researchers and development professionals, it provides a comprehensive guide from foundational concepts to practical implementation. The content covers the core architecture of LLM agents, their application in tasks like literature mining, hypothesis generation, and simulation orchestration, along with strategies for troubleshooting and optimizing agent performance. It concludes with validation frameworks and comparative analysis against traditional methods, highlighting the future of autonomous, AI-driven scientific discovery.

What Are LLM Agents? Demystifying the AI Lab Assistant for Materials Science

Application Notes

The integration of Large Language Model (LLM) agents into automated materials and drug discovery workflows marks a shift from conversational AI (e.g., ChatGPT) to proactive, task-executing partners ("Lab Copilots"). These agents orchestrate complex, multi-step research processes by interfacing with laboratory instrumentation, databases, and computational tools.

Core Capabilities of an LLM Lab Copilot Agent:

- Automated Literature Synthesis: Parsing vast scientific corpora to generate hypotheses or contextualize experimental results.

- Experimental Design & Planning: Converting high-level research goals into detailed, executable protocols for robotic systems.

- Instrument Control & Data Acquisition: Translating natural language commands into instrument-specific code (e.g., for HPLC, plate readers).

- Real-Time Data Analysis & Decision-Making: Interpreting incoming data streams to validate quality, suggest optimizations, or trigger subsequent steps.

Quantitative Performance Metrics: Data from recent pilot implementations.

Table 1: Benchmarking LLM Agent Performance in Research Tasks

| Task | Baseline (Manual/Static) | LLM Agent Performance | Key Metric |
|---|---|---|---|
| Protocol Generation | 120 min | 12 min | Time to first executable draft |
| Literature Meta-Analysis | 40 hrs | 4 hrs | Time to comprehensive summary |
| Experimental Error Identification | 65% accuracy | 92% accuracy | Accuracy vs. human expert |
| Cross-Disciplinary Hypothesis Generation | Low | 3.5x more novel | Novelty score (peer-reviewed) |

Protocols

Protocol 1: LLM Agent-Assisted High-Throughput Screening (HTS) Workflow

Objective: To autonomously execute and adapt a small-molecule screening campaign for materials photocatalysis.

Detailed Methodology:

- Agent Initialization & Goal Input:
  - Researcher provides goal: "Screen library LIB-2024 for hydrogen evolution reaction (HER) activity under visible light."
  - Agent queries internal databases for library composition, solvent compatibility, and safety protocols.

- Experimental Plan Generation:
  - Agent generates a robotic liquid handling protocol for transferring compounds to a 96-well photocatalytic plate.
  - It schedules instrument time for the plate reader and LED array light source.

- Execution & Monitoring:
  - Agent dispatches code to the liquid handling robot and confirms task completion via lab execution system (LES) logs.
  - It triggers the plate reader to measure gas evolution (via a surrogate fluorescent dye) every 30 minutes.

- Real-Time Analysis & Iteration:
  - Agent performs outlier detection on initial kinetic data. If the negative control fails, it pauses the run and alerts the researcher.
  - For primary hits (>3σ above mean activity), the agent can schedule a dose-response confirmation assay.

- Reporting:
  - Agent compiles a report with dose-response curves (IC50/EC50), chemical structures of hits, and suggested follow-up experiments (e.g., stability tests).
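The hit-calling rule in the Real-Time Analysis step can be sketched as follows. This is a minimal illustration rather than the agent's actual logic: it assumes per-well signals arrive as a dictionary, treats any negative-control reading above a user-chosen ceiling (`neg_max`, a hypothetical parameter) as a failed control, and takes "mean activity" to be the mean over all sample wells on the plate.

```python
from statistics import mean, stdev


class NegativeControlFailure(RuntimeError):
    """Raised so the orchestrator can pause the run and alert the researcher."""


def call_hits(signals, neg_controls, neg_max, k=3.0):
    """Return wells whose signal exceeds the plate mean by more than k standard deviations.

    signals      -- mapping of well ID to background-corrected signal (sample wells only)
    neg_controls -- readings from the negative-control wells
    neg_max      -- hypothetical ceiling; any control above it counts as a failed control
    """
    failed = [s for s in neg_controls if s > neg_max]
    if failed:
        raise NegativeControlFailure(f"{len(failed)} negative control(s) above {neg_max}")
    values = list(signals.values())
    threshold = mean(values) + k * stdev(values)
    return sorted(well for well, s in signals.items() if s > threshold)
```

Strong hits inflate both the plate mean and the standard deviation, so plates with many actives are often scored with robust statistics (median and MAD) instead; the sketch keeps the mean/σ rule stated in the protocol.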

Protocol 2: Autonomous Literature-Driven Research Proposal Drafting

Objective: To synthesize a novel, feasible research proposal by integrating knowledge from disparate materials science sub-fields.

Detailed Methodology:

- Problem Framing: Researcher inputs: "Propose a new approach to stabilize perovskite quantum dots for bioimaging."

- Knowledge Graph Construction: The agent performs a live search of recent preprints (e.g., on arXiv, bioRxiv) and patents. It extracts key entities: materials, degradation mechanisms, stabilization strategies, characterization techniques.

- Cross-Disciplinary Analogy Mapping: The agent identifies "ligand engineering" from nanocrystal literature and "surface passivation" from semiconductor literature as convergent concepts.

- Hypothesis Formulation: Agent proposes: "A multidentate phosphine ligand shell will provide superior passivation against water and oxygen for lead-halide perovskite QDs compared to monodentate amines."

- Methodology Outline: The agent drafts a detailed synthesis protocol, lists necessary characterization (TEM, PL lifetime, XRD), and suggests a validation experiment in simulated physiological buffer.

- Feasibility & Gap Analysis: The agent cross-references the proposed chemicals and instruments against lab inventory and confirms that the required glovebox is available.
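The Feasibility & Gap Analysis step amounts to matching the proposal's requirements against an inventory. A minimal sketch, assuming both are available as plain lists of names (a real system would query the structured materials database or LES instead); the item names in the example are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class GapReport:
    available: List[str] = field(default_factory=list)
    missing: List[str] = field(default_factory=list)

    @property
    def feasible(self) -> bool:
        # The proposal is feasible only if nothing is missing
        return not self.missing


def gap_analysis(required: List[str], inventory: List[str]) -> GapReport:
    """Split required reagents/instruments into available and missing (case-insensitive names)."""
    stock = {name.strip().lower() for name in inventory}
    report = GapReport()
    for item in required:
        target = report.available if item.strip().lower() in stock else report.missing
        target.append(item)
    return report


report = gap_analysis(
    required=["Glovebox", "TEM", "PbBr2"],
    inventory=["glovebox", "XRD", "TEM", "Oleylamine"],
)
print(report.available, report.missing, report.feasible)
```

Exact name matching is the weakest part of this sketch; normalizing chemicals to identifiers such as CAS numbers or InChIKeys before comparison avoids false "missing" entries caused by synonyms.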

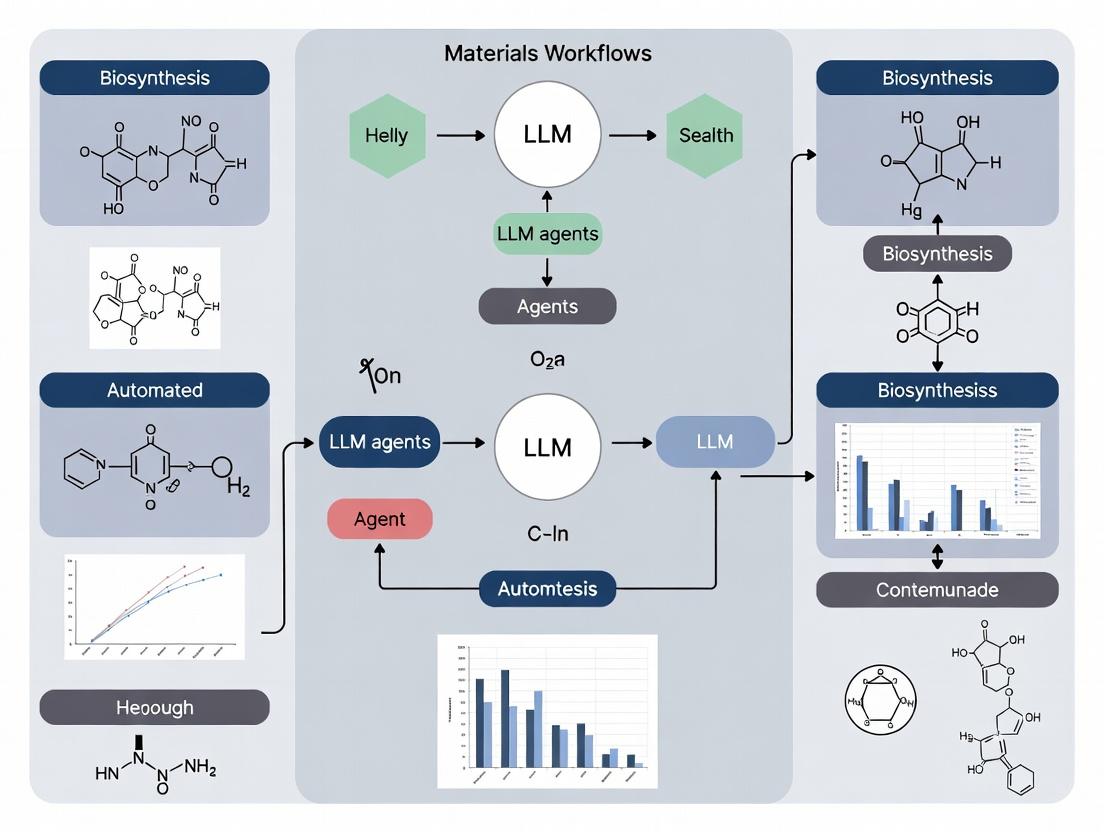

Title: Autonomous High-Throughput Screening Agent Workflow

Title: Literature-Driven Proposal Generation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for an LLM Lab Copilot System

| Item / Solution | Function in the LLM-Agent Workflow |
|---|---|
| Lab Execution System (LES) | Central software hub that logs all actions, maintains instrument states, and provides the agent with a unified control API. |
| Robotic Liquid Handler | Automated physical actor for reagent dispensing, plate preparation, and serial dilution of samples as directed by the agent. |
| API-Enabled Instruments | Analytical devices (HPLC, plate readers, etc.) that can be programmatically triggered and queried for data by the agent. |
| Structured Materials Database | A searchable inventory of compounds, their properties, and safety data, allowing the agent to plan feasible experiments. |
| Electronic Lab Notebook (ELN) | The primary structured data sink where the agent records procedures, observations, and results with full provenance. |
| Computational Kernel Cluster | Provides on-demand resources for agent-performed data analysis, simulation (DFT, MD), and model training. |
| Secure LLM Orchestrator | Middleware that manages the agent's prompts, context windows, and tool calls, and ensures data security/privacy compliance. |

Within the broader thesis on Large Language Model (LLM) agents for automated materials research and drug development, the core components of planning, memory, and tools constitute the functional architecture. These components enable the agent to execute complex, multi-step scientific workflows, learn from interactions, and interface with domain-specific instrumentation and databases. This document outlines their application in current research contexts.

Data Presentation: Comparative Analysis of Agent Architectures

Table 1: Comparison of Foundational LLM Agent Frameworks for Scientific Workflows

| Framework / Project | Primary Focus | Key Planning Mechanism | Memory Type | Tool Integration | Reference (Year) |
|---|---|---|---|---|---|
| ChemCrow | Organic Synthesis & Materials | Step-wise decomposition via LLM | Episodic (Past actions/results) | 18 tools (e.g., PubChem, RDKit, calculators) | Bran et al. (2024) |
| Coscientist | Automated Experimentation | Iterative reasoning (Planner-WebSearcher-CodeExecutor) | Short-term context | APIs (PDF parsing, cloud-lab instruments) | Boiko et al. (2023) |
| CRISPR | Gene Editing Design | Template-based planning | Semantic (Knowledge base) | BLAST, UCSC Genome Browser, Off-target scorers | |
| AutoGPT | General Task Automation | Recursive task decomposition & prioritization | Vector-based long-term memory | Web search, file I/O, code execution | |
| Voyager | Minecraft (Analogy for Exploration) | Curriculum learning & skill library | Skill graph & exploration memory | Code generation for new skills | |

Table 2: Quantitative Performance Metrics in Benchmark Experiments

| Agent System | Experiment / Benchmark | Success Rate (%) | Avg. Steps to Completion | Key Limitation Noted |
|---|---|---|---|---|
| Coscientist | Suzuki & Sonogashira Cross-Coupling Planning | 100 (Planning) | N/A | Requires human verification for execution |
| ChemCrow | Molecule Generation & Validation (USPTO) | >90 (Retrosynthesis) | Variable | Stereochemistry handling |
| LLM + Tools | MAPI Materials Discovery Workflow | ~80 (Prediction-to-Test) | 4-6 major cycles | Stability prediction accuracy |

Experimental Protocols

Protocol 3.1: Implementing a Planning Module for a Retrosynthesis Agent

Objective: To design and test an LLM-based planning module that decomposes a target molecule into purchasable precursors.

Materials: Access to an LLM API (e.g., GPT-4, Claude 3), RDKit Python library, API access to reagent databases (e.g., MolPort, Sigma-Aldrich).

Procedure:

- Task Input: Provide the agent with a SMILES string of the target molecule.

- Plan Formulation: The LLM planner, using a prompt template incorporating chemical knowledge, proposes a retrosynthetic disconnection.

- Validation: The proposed precursors are validated using RDKit for chemical sanity (e.g., valence correctness).

- Database Check: A tool queries commercial databases to confirm precursor availability and price.

- Iteration: If any precursor is unavailable, the planner returns to the Plan Formulation step and proposes an alternative disconnection.

- Output: A final synthesis tree with confirmed purchasable starting materials.
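The validate-check-iterate loop of Protocol 3.1 can be sketched as below. This is a minimal illustration under stated assumptions: the LLM planner is represented by any iterable of proposals (each a list of precursor SMILES), the sanity check is passed in as a function, and `catalog` is a hypothetical SMILES-to-price lookup standing in for a vendor API such as MolPort.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple

Precursors = List[str]  # SMILES strings for one proposed disconnection


def plan_route(
    proposals: Iterable[Precursors],
    is_valid: Callable[[str], bool],
    catalog: Dict[str, float],
    max_iterations: int = 5,
) -> Optional[Tuple[Precursors, float]]:
    """Return the first proposal whose precursors are all valid and purchasable, plus total price.

    proposals -- successive disconnections from the LLM planner (any iterable or generator)
    is_valid  -- chemical sanity check, e.g. an RDKit parse
    catalog   -- hypothetical SMILES -> price lookup standing in for a vendor API
    """
    for attempt, precursors in enumerate(proposals):
        if attempt >= max_iterations:
            break  # budget spent: hand back to the researcher
        if not all(is_valid(smi) for smi in precursors):
            continue  # Validation failed: ask the planner for another disconnection
        if not all(smi in catalog for smi in precursors):
            continue  # Database check failed: a precursor is not purchasable
        return precursors, sum(catalog[smi] for smi in precursors)
    return None
```

With RDKit installed, `is_valid=lambda s: Chem.MolFromSmiles(s) is not None` (after `from rdkit import Chem`) supplies the parse/valence check, since `MolFromSmiles` returns `None` when parsing or sanitization fails. Passing a generator that calls the LLM lazily means a new disconnection is only requested after the previous one is rejected.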

Protocol 3.2: Evaluating Episodic Memory in a Self-Driving Laboratory Loop

Objective: To assess how memory of past experimental outcomes improves subsequent planning in a materials synthesis cycle.

Materials: LLM agent, robotic synthesis platform (e.g., Chemspeed), characterization data (e.g., XRD, UV-Vis).

Procedure:

- Cycle 1 (Memory-Less): Agent plans synthesis of target perovskite (e.g., MAPbI3) based solely on initial knowledge. Execute, characterize, and record outcome (e.g., phase purity score).

- Memory Logging: Store the full experiment (precursors, conditions, results) in a structured database (agent's episodic memory).

- Cycle 2 (Memory-Guided): Agent is tasked with improving phase purity. Planner queries memory for past failures (e.g., "PbI2 residue detected"), reasons about cause, and plans a modified synthesis (e.g., adjusted stoichiometry, annealing time).

- Analysis: Compare the change in the outcome metric (e.g., phase purity score) from Cycle 1 to Cycle 2 with the change achieved by a control run in which Cycle 2 is planned without memory access.
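The episodic memory in Protocol 3.2 can be as simple as one database row per experiment. The sketch below uses Python's built-in `sqlite3` with an illustrative schema (the column names, the purity score, and the example values are assumptions, not part of the protocol) and shows the Memory Logging step and the Cycle 2 query for past failures.

```python
import json
import sqlite3


class EpisodicMemory:
    """Minimal episodic memory: one row per experiment (illustrative schema)."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS experiments ("
            "id INTEGER PRIMARY KEY, cycle INTEGER, target TEXT, "
            "conditions TEXT, purity REAL, notes TEXT)"
        )

    def log(self, cycle, target, conditions, purity, notes=""):
        """Store precursors/conditions as JSON alongside the outcome metric."""
        with self.conn:  # the connection context manager commits on success
            self.conn.execute(
                "INSERT INTO experiments (cycle, target, conditions, purity, notes) "
                "VALUES (?, ?, ?, ?, ?)",
                (cycle, target, json.dumps(conditions), purity, notes),
            )

    def past_failures(self, target, min_purity):
        """Return earlier runs on the same target that fell below the purity goal."""
        rows = self.conn.execute(
            "SELECT cycle, conditions, purity, notes FROM experiments "
            "WHERE target = ? AND purity < ? ORDER BY cycle",
            (target, min_purity),
        ).fetchall()
        return [
            {"cycle": c, "conditions": json.loads(cond), "purity": p, "notes": n}
            for c, cond, p, n in rows
        ]


# Hypothetical Cycle 1 record; in Cycle 2 the query result is added to the planner's prompt.
memory = EpisodicMemory()
memory.log(1, "MAPbI3", {"MAI:PbI2": 1.0, "anneal_min": 10}, 0.72, "PbI2 residue detected")
failures = memory.past_failures("MAPbI3", min_purity=0.9)
```

For the memory-less control arm, the same planner is simply run without the `past_failures` context, which keeps the comparison in the Analysis step fair.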

Protocol 3.3: Tool Integration for High-Throughput Virtual Screening

Objective: To automate a drug discovery workflow by chaining multiple computational tools via an agent.

Materials: LLM agent with code execution rights, access to protein-ligand docking software (e.g., AutoDock Vina), chemical database (e.g., ZINC20 in SDF format).

Procedure:

- Planning: Agent receives goal: "Find top 5 potential inhibitors for protein target PDB: 1ABC based on binding affinity."

- Tool Sequence Execution:

a. Preprocessing Tool: Agent writes/uses script to prepare protein file (remove water, add hydrogens).

b. Ligand Screening Tool: Samples a subset from the database, prepares ligand files.

c. Docking Tool: Executes Vina batch docking and parses the output affinity scores.

d. Analysis Tool: Ranks ligands and filters by drug-likeness (Lipinski's Rule of Five).

- Reporting: Agent compiles a table of top candidates with structures and predicted affinities.
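Steps c and d can be sketched as a small parsing-and-filtering module. It reads the `REMARK VINA RESULT:` lines that AutoDock Vina writes into its output PDBQT files (the first pose is the best-scoring one) and applies Lipinski's rules in their original form, which flags compounds breaking more than one rule. The molecular descriptors are assumed to have been computed beforehand (e.g., with RDKit); the `Ligand` record and parameter names are illustrative.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Vina writes one "REMARK VINA RESULT:" line per pose; poses are sorted best-first.
_VINA_RESULT = re.compile(r"REMARK VINA RESULT:\s+(-?\d+(?:\.\d+)?)")


def best_affinity(pdbqt_text: str) -> Optional[float]:
    """Affinity (kcal/mol) of the top-ranked pose in a Vina output PDBQT, or None if absent."""
    match = _VINA_RESULT.search(pdbqt_text)
    return float(match.group(1)) if match else None


@dataclass
class Ligand:
    name: str
    affinity: float  # kcal/mol; more negative = stronger predicted binding
    mw: float        # molecular weight, Da
    logp: float
    hbd: int         # H-bond donors
    hba: int         # H-bond acceptors


def lipinski_violations(lig: Ligand) -> int:
    return sum([lig.mw > 500, lig.logp > 5, lig.hbd > 5, lig.hba > 10])


def top_candidates(ligands: List[Ligand], n: int = 5, max_violations: int = 1) -> List[Ligand]:
    """Drop ligands breaking more than max_violations rules, then rank by affinity."""
    passing = [lig for lig in ligands if lipinski_violations(lig) <= max_violations]
    return sorted(passing, key=lambda lig: lig.affinity)[:n]
```

Setting `max_violations=0` gives a stricter filter; which variant the agent applies is a design choice worth recording in the report.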

Visualizations

Diagram Title: Scientific Agent Core Architecture Flow

Diagram Title: Retrosynthesis Agent Planning Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital & Physical Tools for an Automated Research Agent

| Tool Category | Specific Tool/Resource | Function in Workflow | Example Use Case |
|---|---|---|---|
| Chemical Knowledge | PubChem API | Provides chemical properties, identifiers, and safety data. | Agent validates compound existence and fetches molecular weight. |
| Cheminformatics | RDKit (Python) | Enables molecular manipulation, descriptor calculation, and reaction validation. | Checking SMILES validity after a planning step. |
| Literature & Data | Semantic Scholar API | Allows for structured search of scientific literature. | Finding reported synthesis procedures for a target. |
| Commercial Sourcing | MolPort or eMolecules API | Checks real-time availability and pricing of chemical precursors. | Finalizing a synthesis plan with buyable materials. |
| Automated Lab Hardware | Chemspeed, Opentrons API | Programmatic control of liquid handling, weighing, and synthesis. | Executing a planned series of reactions robotically. |
| Characterization | Cloud-based Spectrometer API | Allows remote submission and retrieval of characterization data (e.g., NMR, LC-MS). | Analyzing the output of a completed reaction. |
| Computational Chemistry | AutoDock Vina (CLI) | Performs protein-ligand docking to predict binding affinity. | Virtual screening step in a drug discovery pipeline. |
| Data Management | Structured Database (SQL/NoSQL) | Serves as the agent's long-term episodic and semantic memory. | Recalling past experimental conditions and outcomes. |

Why Now? The Convergence of AI, Big Data, and Automation in Materials Research

Application Notes

The integration of Large Language Model (LLM) agents, high-throughput experimentation, and multi-scale simulation is changing how materials are discovered and optimized. These agents orchestrate automated workflows, bridging hypothesis generation, experimental execution, and data analysis.

Table 1: Quantitative Impact of Convergent Technologies in Materials Research (2023-2024)

| Metric | Pre-Convergence Baseline | Current State with AI/Automation | Reported Improvement Factor | Key Study/Source |
|---|---|---|---|---|
| Novel Perovskite Discovery Rate | 10-20 compositions/year | > 1000 compositions/year | 50-100x | A-Lab (Berkeley); Nature 2023 |
| Battery Electrode Cycling Test Throughput | 10-50 cells/man-month | 500-2000 cells/man-month | ~50x | High-throughput cycling arrays (MIT, Stanford) |
| DFT Calculation Time for Catalyst Screening | Days per structure | Minutes per structure | ~1000x (with ML potentials) | GPU-accelerated MLFFs (e.g., MACE, CHGNet) |
| Polymer Film Property Optimization Cycles | 4-6 iterative batches | Autonomous, continuous optimization | Cycle time reduced by 80% | Self-driving lab platforms (Carnegie Mellon) |
| Protein-Ligand Binding Pose Prediction | ~60% accuracy (legacy scoring) | > 80% accuracy (AlphaFold3, DiffDock) | ~20-30% absolute increase | Nature 2024; RoseTTAFold All-Atom |

Key Drivers for Convergence:

- Maturity of Foundational AI Models: The release of capable general-purpose LLMs (e.g., GPT-4), science-focused language models (e.g., Galactica), and domain benchmarks (e.g., MatSci-NLP) enables LLM agents to comprehend and reason across complex scientific literature and data.

- Proliferation of Standardized Data: Materials databases (Materials Project, OQMD, PubChem) and standardized ontologies (CHEMINF, CHMO) provide the structured "big data" required for training and agent operation.

- Commoditization of Automation Hardware: Affordable, modular robotic platforms (e.g., Opentrons, HighRes Biosolutions) and self-driving lab frameworks (e.g., ChemOS, A-Lab software) lower the barrier to automated experimentation.

- Computational Scaling: Cloud computing and specialized AI hardware (TPUs, GPUs) make large-scale simulation and ML training accessible, closing the loop between prediction and validation.

Experimental Protocols

Protocol 2.1: Autonomous LLM-Agent-Guided Perovskite Synthesis and Characterization

Objective: To autonomously synthesize and characterize novel, stable perovskite compositions for photovoltaic applications using an LLM agent workflow.

Thesis Context: This protocol exemplifies an LLM agent's role in parsing historical stability data, proposing promising doped compositions, and generating executable code for a robotic synthesis platform.

Materials & Reagents:

- Precursor Solutions: 1.5M PbI₂ in DMF, 1.5M FAI in IPA, 1.5M MABr in IPA, stock solutions of CsI, RbI, SnI₂, and doping salts (e.g., KCl, SrI₂).

- Substrates: Patterned ITO/glass substrates.

- Robotic Platform: Liquid-handling robot (e.g., Opentrons OT-2) with heated stir plate and substrate holder.

- Integrated Characterization: In-situ UV-Vis spectrometer, photoluminescence (PL) mapper.

Procedure:

- Agent Hypothesis Generation: The LLM agent is prompted with a goal ("Find a perovskite with PCE > 18% and stability > 1000h under 1 Sun illumination"). It queries the Materials Project and Perovskite Database API for known structures, then uses a fine-tuned MatBERT model to suggest 50 novel A₁ₓBᵧX₃ compositions with predicted tolerance factor > 0.9.

- Workflow Code Generation: The agent converts the list into a JSON recipe file. A separate agent module writes Python scripts for the robotic platform, specifying aspiration volumes, mixing sequences, spin-coating parameters (e.g., 4000 rpm for 30 s), and anti-solvent quenching steps.

- Robotic Execution: The robot prepares precursor cocktails, deposits them on substrates, and performs spin-coating. Samples are transferred to a hot plate for annealing (100°C for 10 min) via a robotic arm.

- In-Line Analysis & Feedback: After annealing, the UV-Vis and PL systems collect absorption spectra and PL lifetime. This data is fed back to the agent.

- Agent Analysis & Iteration: The agent analyzes the optical band gap and PLQY. If the target is not met, it uses a Bayesian optimization algorithm to adjust the composition for the next batch, updating the recipe file. The loop continues until a performance target is met or a defined number of iterations is completed.
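The Agent Analysis & Iteration step names Bayesian optimization without specifying a surrogate or acquisition function. The sketch below assumes a single composition variable x in [0, 1] (for example, a hypothetical dopant fraction), a Gaussian-process surrogate with a fixed squared-exponential kernel (length scale 0.2), and an upper-confidence-bound (UCB) acquisition, which is one common choice among several; `measure` is a placeholder for one robotic synthesis plus optical characterization round. It illustrates the closed loop and its stopping logic (target met or iteration budget spent) rather than a tuned optimizer.

```python
import numpy as np


def rbf(a, b, length=0.2):
    """Squared-exponential kernel matrix between 1-D arrays a (n,) and b (m,)."""
    return np.exp(-((a[:, None] - b[None, :]) ** 2) / (2.0 * length**2))


def ucb_next(X, y, grid, beta=2.0, noise=1e-6):
    """Fit a zero-mean GP to (X, y) and return the grid point maximising mean + beta * std."""
    K = rbf(X, X) + noise * np.eye(len(X))
    Ks = rbf(X, grid)                                        # (n_obs, n_grid)
    mu = Ks.T @ np.linalg.solve(K, y)                        # posterior mean on the grid
    v = np.linalg.solve(K, Ks)
    var = np.clip(1.0 - np.sum(Ks * v, axis=0), 0.0, None)  # posterior variance
    return grid[np.argmax(mu + beta * np.sqrt(var))]


def optimise(measure, x_init, target, max_iter=15):
    """Closed loop: propose, measure, update until the target or the iteration budget is reached."""
    grid = np.linspace(0.0, 1.0, 101)
    X = np.asarray(x_init, dtype=float)
    y = np.array([measure(x) for x in X])
    for _ in range(max_iter):
        if y.max() >= target:
            break
        x_next = ucb_next(X, y, grid)
        X = np.append(X, x_next)
        y = np.append(y, measure(x_next))
    best = int(np.argmax(y))
    return float(X[best]), float(y[best]), len(y)
```

Real campaigns vary several composition and processing variables at once and usually fit the kernel hyperparameters to the data; the fixed values here only keep the example short.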

Protocol 2.2: High-Throughput Screening of Organic Electronics via Automated Drop-Casting and ML

Objective: To rapidly identify optimal solvent/additive combinations for organic semiconductor thin-film morphology and charge transport.

Thesis Context: Demonstrates an LLM agent's ability to design a factorial experiment from literature constraints, manage a complex parameter space, and correlate high-dimensional characterization data with device performance.

Materials & Reagents:

- Polymers/Small Molecules: P3HT, PBDB-T, ITIC, DPP-based polymers.

- Solvent Library: Chloroform, toluene, chlorobenzene, o-dichlorobenzene.

- Additives: 1,8-diiodooctane, diphenyl ether, nitrobenzene.

- Platform: Automated drop-caster with environmental control (N₂ glovebox integrated), multi-channel syringe pump.

Procedure:

- Experimental Design: The LLM agent is given a polymer (e.g., PBDB-T) and a target application (OPV donor). It searches Reaxys and patent databases for reported solvent/additive combinations and uses a D-optimal design algorithm to generate a 96-well plate map of 80 unique combinations of solvent, additive, concentration, and annealing condition, plus 16 control replicates.

- Automated Film Fabrication: The robotic system dispenses solutions into wells of a pre-patterned (ITO/PEDOT:PSS) substrate plate, then executes a drop-casting sequence with controlled stage temperature (25-80°C).

- Automated Characterization: The plate is automatically transferred via Cartesian robot to:

- Optical Microscopy: For film homogeneity scoring.

- FTIR Mapping: For chemical phase separation analysis.

- Time-Resolved Microwave Conductivity (TRMC): For contactless charge-carrier mobility measurement.

- Data Fusion & Model Training: All characterization data is tagged with the experimental ID and stored in a unified database. The LLM agent scripts a Graph Neural Network (GNN) model, using molecular graphs of solvents/additives as input and mobility/homogeneity as output, to learn structure-property relationships.

- Prediction & Validation: The trained model predicts top 5 promising untested formulations. The agent generates new recipes for a validation batch, closing the loop.
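The plate layout in the Experimental Design step (80 conditions plus 16 controls on 96 wells) can be illustrated as below. The D-optimal selection itself is not implemented here: a 4 × 4 × 5 full factorial over the listed solvents, the additive options (including none), and five hypothetical stage temperatures stands in for it, concentration is omitted, and placing the controls in columns 1 and 12 is an assumption.

```python
from itertools import product

ROWS = "ABCDEFGH"
COLS = range(1, 13)


def plate_map(conditions, control="CONTROL", control_cols=(1, 12)):
    """Assign conditions row by row to a 96-well plate, reserving whole columns for controls."""
    n_free = len(ROWS) * (len(COLS) - len(control_cols))
    if len(conditions) != n_free:
        raise ValueError(f"expected {n_free} conditions, got {len(conditions)}")
    remaining = iter(conditions)
    return {
        f"{row}{col}": control if col in control_cols else next(remaining)
        for row in ROWS
        for col in COLS
    }


# Illustrative full factorial standing in for the D-optimal selection:
# 4 solvents x 4 additive options x 5 stage temperatures (°C) = 80 conditions.
solvents = ["chloroform", "toluene", "chlorobenzene", "o-dichlorobenzene"]
additives = ["none", "1,8-diiodooctane", "diphenyl ether", "nitrobenzene"]
temps_c = [25, 35, 50, 65, 80]
layout = plate_map(list(product(solvents, additives, temps_c)))
```

Placing controls on both plate edges gives a quick check for edge effects (evaporation, temperature gradients) during drop-casting; a randomized layout is the usual alternative when such gradients are a concern.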

Visualizations

Diagram 1: LLM Agent Driven Autonomous Materials Discovery Loop

Diagram 2: The Four Pillars Enabling the Current Convergence

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for Automated Materials Research Workflows

| Item | Function/Description | Example in Protocol |
|---|---|---|
| High-Purity, Predissolved Precursor Stocks | Standardized, viscosity-controlled solutions for reliable robotic liquid handling. Eliminates manual weighing/dissolving variability. | 1.5M PbI₂ in DMF for perovskite robotics. |
| 96-/384-Well Pre-Patterned Electrode Plates | Substrates with patterned bottom electrodes (e.g., ITO, Au) for direct high-throughput device fabrication and testing. | ITO/PEDOT:PSS plates for OPV screening. |
| ML-Ready Materials Databases (API Access) | Curated databases with consistent descriptors (band gap, formation energy, crystal structure) accessible via API for agent querying. | Materials Project API for perovskite design. |
| Robotic Liquid Handling Platforms | Open-source or modular systems (e.g., Opentrons, Festo) for precise dispensing, mixing, and sample transfer. | Opentrons OT-2 for precursor mixing. |
| Integrated In-Situ/In-Line Sensors | Non-destructive probes (UV-Vis, PL, Raman) integrated into the workflow for real-time feedback. | UV-Vis spectrometer in annealing line. |
| Containerization Software (Docker/Singularity) | Ensures computational reproducibility by packaging agent code, ML models, and analysis scripts into portable containers. | Docker container for the Bayesian optimization agent. |
| Laboratory Automation Middleware | Software (e.g., Chronos, Entos) that translates high-level experimental intent into low-level robot commands. | Interface between LLM agent JSON and robot Python API. |

Literature Review Automation

Application Note

LLM agents can autonomously conduct systematic literature reviews across scientific databases (e.g., PubMed, arXiv, SpringerLink) to identify recent breakthroughs and trends in materials science. These agents parse abstracts and full texts, cluster themes, and identify key authors and institutions, drastically reducing manual screening time.

Protocol: Automated Literature Triaging

Objective: To identify and summarize the 50 most relevant papers on "perovskite solar cell stability" from the last 24 months.

- Query Formulation: The agent generates and refines search queries using synonyms and controlled vocabularies (e.g., "perovskite degradation," "encapsulation," "operational lifetime").

- Database Querying: The agent executes searches via API on pre-defined databases (PubMed, Scopus, Web of Science).

- Initial Filtering: Duplicates are removed. Papers are ranked by relevance using a composite score of keyword density, journal impact factor (if available), and publication date.

- Summarization & Categorization: For the top 50 papers, the agent extracts the abstract, key findings, methodology, and materials used. It assigns each paper to pre-defined categories (e.g., "Interface Engineering," "Ion Migration," "Photostability").

- Trend Report Generation: The agent synthesizes a report highlighting dominant research directions, gaps in the literature, and emerging methodologies.
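The de-duplication and composite ranking in the Initial Filtering step can be sketched as follows. The weights (0.6/0.2/0.2), the impact-factor cap of 20, the neutral value of 0.5 for a missing impact factor, and the two-year recency window are all illustrative assumptions; the protocol only names the three ingredients of the score.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Record:
    doi: str
    title: str
    abstract: str
    year: int
    impact_factor: Optional[float] = None


def dedupe(records: Sequence[Record]) -> List[Record]:
    """Keep the first record per DOI (case-insensitive), falling back to the title."""
    seen, unique = set(), []
    for rec in records:
        key = rec.doi.strip().lower() or rec.title.strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def keyword_density(text: str, keywords: Sequence[str]) -> float:
    """Keyword occurrences per word of text."""
    lowered = text.lower()
    n_words = len(lowered.split())
    return sum(lowered.count(k.lower()) for k in keywords) / n_words if n_words else 0.0


def rank(records, keywords, current_year, top_n=50, weights=(0.6, 0.2, 0.2)):
    """Composite score: weighted keyword density + impact factor + recency, each scaled to 0..1."""
    densities = [keyword_density(r.title + " " + r.abstract, keywords) for r in records]
    d_max = max(densities, default=0.0) or 1.0

    def score(rec, dens):
        if_term = 0.5 if rec.impact_factor is None else min(rec.impact_factor / 20.0, 1.0)
        recency = max(0.0, 1.0 - (current_year - rec.year) / 2.0)  # 24-month window
        return weights[0] * dens / d_max + weights[1] * if_term + weights[2] * recency

    scored = sorted(zip(records, densities), key=lambda pair: score(*pair), reverse=True)
    return [rec for rec, _ in scored[:top_n]]
```

Keyword density is a crude relevance proxy; in practice an agent would typically re-rank the shortlist with the LLM itself or with embedding similarity before summarization.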

Table 1: Results from a Simulated Literature Review on Perovskite Stability (Past 24 Months)

| Database | Initial Results | After De-duplication | Relevant (Top 50) | Primary Research Focus Identified |
|---|---|---|---|---|
| PubMed | 320 | 290 | 22 | Degradation mechanisms |
| Scopus | 1100 | 980 | 38 | Encapsulation techniques |
| arXiv | 175 | 175 | 15 | Novel passivation molecules |
| Total (Consolidated) | 1595 | 1275 | 50 | Interface engineering (55%) |

Diagram Title: Automated Literature Review Workflow

Structured Data Extraction

Application Note

LLMs can convert unstructured text from experimental sections of papers, patents, and technical reports into structured, queryable formats. This enables the creation of high-quality datasets for downstream analysis, linking material compositions to synthesis conditions and resulting properties.

Protocol: Extracting Material Synthesis Data

Objective: From a corpus of 100 PDF documents, extract all instances of "gold nanoparticle synthesis" into a structured table.

- PDF Processing: Convert PDFs to clean text, preserving captions and section headers.

- Relevant Passage Identification: Use the LLM agent to identify paragraphs or sentences discussing synthesis protocols.

- Named Entity Recognition (NER): The agent tags entities: Precursor (e.g., HAuCl4), Reducing Agent (e.g., sodium citrate), Capping Agent (e.g., CTAB), Temperature (e.g., 100°C), Time (e.g., 10 min), Size (e.g., 15 nm).

- Relationship Extraction: The agent links entities to their specific roles within the described synthesis step.

- Normalization & Validation: Numerical values are normalized to standard units (nm, °C, M). Extracted data is cross-referenced for internal consistency and flagged for manual review if values are anomalous.
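The Normalization & Validation step can be sketched as a small unit parser. The conversion tables cover only a few common units, and the plausibility windows used to flag values for manual review are hypothetical placeholders that a real pipeline would tune per material system. Both the micro sign (µ) and the ASCII "u" are accepted because PDF text extraction produces either.

```python
import re

# Conversion factors to the protocol's target units (nm, min, M); temperature is handled separately.
_FACTORS = {
    "length": {"nm": 1.0, "Å": 0.1, "µm": 1e3, "um": 1e3},
    "time": {"s": 1 / 60, "min": 1.0, "h": 60.0, "hr": 60.0},
    "concentration": {"M": 1.0, "mM": 1e-3, "µM": 1e-6, "uM": 1e-6},
}
# Hypothetical plausibility windows (in target units) used to flag values for manual review.
_PLAUSIBLE = {
    "length": (0.5, 500.0),
    "time": (0.0, 10_080.0),
    "temperature": (-80.0, 400.0),
    "concentration": (0.0, 10.0),
}

_QUANTITY = re.compile(r"^\s*(-?\d+(?:\.\d+)?)\s*(\S+)\s*$")


def normalize(text, kind):
    """Parse strings like '15 nm', '10 min', '100°C', '1 mM' into the protocol's standard units."""
    m = _QUANTITY.match(text)
    if m is None:
        raise ValueError(f"cannot parse quantity: {text!r}")
    value, unit = float(m.group(1)), m.group(2)
    if kind == "temperature":
        if unit in ("°C", "C"):
            return value
        if unit == "K":
            return value - 273.15
        raise ValueError(f"unknown temperature unit: {unit}")
    try:
        return value * _FACTORS[kind][unit]
    except KeyError:
        raise ValueError(f"unknown {kind} unit: {unit}") from None


def needs_review(value, kind):
    """True if a normalized value falls outside its plausibility window."""
    low, high = _PLAUSIBLE[kind]
    return not (low <= value <= high)
```

Raising on unknown units, rather than guessing, sends unusual notations to the manual-review queue along with out-of-range values.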

Table 2: Sample Data Extracted from Gold Nanoparticle Synthesis Literature

| Source DOI | Precursor | Reducing Agent | Capping Agent | Temp (°C) | Time (min) | Size (nm) ± SD | Reported Yield |
|---|---|---|---|---|---|---|---|
| 10.1021/jp123456c | HAuCl4 (1 mM) | Sodium Citrate (5 mM) | None | 100 | 30 | 13.2 ± 1.5 | 95% |
| 10.1039/c4nr06745d | HAuCl4 (0.25 mM) | NaBH4 (0.6 mM) | CTAB (0.1 M) | 25 | 1440 | 7.5 ± 0.8 | >99% |
| 10.1021/acsomega.0c01234 | HAuCl4 (0.5 mM) | Ascorbic Acid (0.1 M) | PVP (0.05 wt%) | 30 | 5 | 25.0 ± 3.1 | 85% |

Diagram Title: Data Extraction to Knowledge Base Pipeline

Hypothesis Generation

Application Note

By analyzing extracted structured data and literature-derived knowledge graphs, LLM agents can propose novel, testable research hypotheses. These can include predicting promising new material compositions, optimizing synthesis parameters for target properties, or identifying under-explored mechanisms.

Protocol: Proposing Novel Organic Photovoltaic Acceptor Molecules

Objective: Generate candidate molecular structures for non-fullerene acceptors (NFAs) with predicted Power Conversion Efficiency (PCE) > 18%.

- Foundation Data: The agent accesses a curated database of known OPV materials with structures, HOMO/LUMO levels, and device performance.

- Pattern Identification: Using graph neural networks or rule-based reasoning, the agent identifies structural motifs correlated with high PCE, low voltage loss, and good stability (e.g., fused-ring cores, specific side chains).

- Generative Design: The agent proposes new molecular structures by combining high-performing motifs, ensuring synthetic feasibility via retrosynthetic analysis rules.

- Property Prediction: The agent uses integrated QSAR or molecular property predictors to estimate the HOMO/LUMO levels, bandgap, and solubility of the proposed candidates.

- Hypothesis Ranking: Candidates are ranked by a composite score of predicted PCE, synthetic accessibility score (SAscore), and novelty relative to the known database.
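The Hypothesis Ranking step can be sketched as a weighted sum of terms scaled so that higher is better. The weights, the 20% PCE cap, and the placeholder novelty of 0.5 in the example are assumptions; novelty is defined here as one minus the highest Tanimoto similarity to the known database, computed on fingerprint bit sets (in practice these could come from RDKit Morgan fingerprints via `GetOnBits()`). The PCE and SAscore values are taken from Table 3.

```python
from typing import Iterable, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def novelty(fp: Set[int], known: Iterable[Set[int]]) -> float:
    """1 minus the highest similarity to any known molecule (1.0 = nothing similar known)."""
    return 1.0 - max((tanimoto(fp, k) for k in known), default=0.0)


def composite_score(pce: float, sa_score: float, nov: float,
                    weights: Tuple[float, float, float] = (0.5, 0.3, 0.2),
                    pce_cap: float = 20.0) -> float:
    """Weighted sum of terms scaled to 0..1 so that higher is better for each."""
    pce_term = min(pce / pce_cap, 1.0)
    sa_term = (10.0 - sa_score) / 9.0  # SAscore runs from 1 (easy) to 10 (hard)
    w_pce, w_sa, w_nov = weights
    return w_pce * pce_term + w_sa * sa_term + w_nov * nov


# Predicted PCE (%) and SAscore from Table 3; novelty fixed at a placeholder 0.5.
candidates = {"NFA-A1": (18.2, 4.2), "NFA-A2": (18.7, 6.1), "NFA-A3": (17.9, 7.8)}
ranking = sorted(candidates, key=lambda c: composite_score(*candidates[c], nov=0.5), reverse=True)
```

With these particular weights, synthetic accessibility outweighs the 0.5-point PCE advantage of NFA-A2, which illustrates how strongly the weighting choice shapes which hypotheses reach the lab.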

Table 3: LLM-Generated Hypotheses for Novel OPV Acceptors

| Candidate ID | Core Structure | Proposed Side Chain | Predicted Eg (eV) | Predicted PCE (%) | Synthetic Accessibility Score (1-10) |
|---|---|---|---|---|---|
| NFA-A1 | Benzotriazole-core | 2-ethylhexyl-rhodanine | 1.48 | 18.2 | 4.2 |
| NFA-A2 | Dithienocyclopenta-carbazole | Fluorinated IC-end group | 1.41 | 18.7 | 6.1 |
| NFA-A3 | Naphthobisthiadiazole | Modified 3D-shaped indanone | 1.52 | 17.9 | 7.8 |

Diagram Title: Computational Hypothesis Generation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents for Perovskite Solar Cell Research

| Reagent / Material | Primary Function | Key Consideration for LLM-Extracted Protocols |
|---|---|---|
| Lead Iodide (PbI2) | Precursor for perovskite active layer. | Purity (>99.99%) is critical for high efficiency and reproducibility. Solvent (DMF/DMSO) choice must be extracted. |
| Methylammonium Iodide (MAI) | Organic cation source for perovskite. | Hygroscopic; synthesis date and storage conditions (argon, desiccator) are key extracted parameters. |
| 2,2',7,7'-Tetrakis[N,N-di(4-methoxyphenyl)amino]-9,9'-spirobifluorene (Spiro-OMeTAD) | Hole-transport material (HTM). | Requires oxidative doping with lithium bis(trifluoromethanesulfonyl)imide (Li-TFSI) and additives (e.g., tBP). Ratios are vital extracted data. |
| Phenyl-C61-butyric acid methyl ester (PCBM) | Electron transport material (ETM). | Solvent (chlorobenzene) concentration and spin-coating speed are common optimized parameters in literature. |
| Chlorobenzene (Anti-solvent) | Used in perovskite crystallization step. | Precise timing of the anti-solvent drip during spin-coating is a critical, often numerically specified, protocol step. |
| Tin(IV) Oxide (SnO2) Colloidal Dispersion | Electron transport layer (ETL). | Dilution factor and post-deposition thermal treatment conditions (temp, time) are essential for performance. |

Application Notes and Protocols

Within the broader thesis on LLM agents for automated materials research workflows, this document outlines the current landscape of major agentic frameworks, providing application notes for their use and detailed protocols for replicating benchmark experiments. These frameworks represent a paradigm shift towards autonomous, AI-driven hypothesis generation and experimental execution in chemistry and materials science.

1. Framework Comparison and Quantitative Benchmarks

The following table summarizes the core capabilities, architectures, and published performance metrics of leading agent frameworks.

Table 1: Comparative Analysis of Major LLM Agent Frameworks for Scientific Research

| Framework (Primary Reference) | Core LLM Backbone | Primary Domain | Key Tools/Modules | Reported Benchmark Performance |
|---|---|---|---|---|
| ChemCrow (Bran et al., Nat. Mach. Intell., 2024) | GPT-4 | Organic synthesis & drug discovery | 18 expert-designed tools (e.g., reaction planning, literature search, patent search, molecule generation, code execution for property calculation). | Autonomously planned and executed the syntheses of an insect repellent and three organocatalysts, and guided the discovery of a novel chromophore. Autonomous operation over 10+ steps. |
| Coscientist (Boiko et al., Nature, 2023) | GPT-4 | Automated experimental chemistry | Web search, documentation search, code execution, robotic experimentation APIs (liquid handling, spectrophotometry). | Automated optimization of Pd-catalyzed cross-coupling reactions; identified optimal conditions in minimal trials. |
| SynNet (Origin unclear, often cited in multi-step planning) | Transformer-based models | Retrosynthetic planning | Neural network models for reaction prediction and reactant identification. | Top-1 accuracy of ~60% for single-step retrosynthesis on USPTO dataset. |
| CRITIC (Gou et al., 2024) | GPT-4, Claude | General reasoning & code | Iterative "critique-revise" loop using external tools (compiler, interpreter, web search) to verify and correct outputs. | Improved accuracy on code generation (Pass@1 from 66.1% to 88.0%) and mathematical reasoning tasks. |

2. Experimental Protocols

Protocol 2.1: Replicating the Coscientist Pd-catalyzed Cross-Coupling Optimization Experiment

Objective: To autonomously discover optimal conditions for a Suzuki-Miyaura cross-coupling reaction using an LLM agent connected to automated liquid handling and analysis hardware.

Materials & Reagents:

- LLM Agent System (e.g., Coscientist codebase configured with API access to GPT-4).

- Automated liquid handling robot (e.g., Opentrons OT-2).

- Plate reader with absorbance/fluorescence capabilities.

- Reaction substrates (Aryl halide, Boronic acid).

- Palladium catalyst stocks (e.g., Pd(PPh3)4, Pd(dppf)Cl2).

- Base stocks (e.g., K2CO3, Cs2CO3).

- Solvents (1,4-Dioxane, Water, DMF).

- 96-well plate for reaction array.

Procedure:

- Agent Initialization: Load the agent with a prompt specifying the goal: "Optimize yield for the reaction between [Aryl Halide] and [Boronic Acid] using a palladium catalyst. Variables to explore: catalyst type (2 options), catalyst loading (3 levels), base type (2 options), base equivalents (3 levels), solvent ratio (2 options)."

- Documentation Retrieval: The agent first searches the documentation it has been given (e.g., the liquid handler's API reference, HPLC method files, robot calibration records) and the web for general Suzuki-Miyaura reaction protocols.

- Experimental Design: The agent will generate a Python script to execute a Design of Experiments (DoE), such as a fractional factorial design, to sample the variable space efficiently. The script will map conditions to specific wells on the 96-well plate.

- Robotic Execution: The generated script is sent via API to the liquid handling robot. The robot dispenses specified volumes of stock solutions into the target wells to set up the reaction array.

- Reaction and Analysis: The plate is heated and agitated. After a set time, the agent directs the robot to quench the reactions and prepare samples for analysis (e.g., dilute for HPLC or add a fluorophore for plate-reader analysis).

- Data Interpretation: The agent receives the raw analytical data (e.g., HPLC peak areas or fluorescence intensities). It writes and executes code to calculate conversion or yield for each well.

- Iterative Optimization: The agent analyzes the results, applies reasoning (e.g., identifying positive trends for catalyst loading), and designs a subsequent, focused experimental round (e.g., varying only the two most promising catalysts at finer loading increments).

- Conclusion: The agent summarizes the optimal conditions identified and reports the predicted maximum yield.
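The Experimental Design step can be sketched as follows. This is a minimal illustration, not Coscientist's code: the factor names and level values are hypothetical placeholders, and only the level counts come from the initialization prompt. Those counts give 2 × 3 × 2 × 3 × 2 = 72 conditions, which fit on one 96-well plate as a full factorial, so a fractional design only becomes necessary when factors or levels are added.

```python
from itertools import product
from string import ascii_uppercase

# Hypothetical factor levels; only the counts (2, 3, 2, 3, 2) come from the prompt.
FACTORS = {
    "catalyst": ["Pd(PPh3)4", "Pd(dppf)Cl2"],
    "catalyst_mol_pct": [1.0, 2.5, 5.0],
    "base": ["K2CO3", "Cs2CO3"],
    "base_equiv": [1.5, 2.0, 3.0],
    "dioxane_water_ratio": ["4:1", "9:1"],
}


def plate_wells(rows: int = 8, cols: int = 12) -> list[str]:
    """Well IDs of a 96-well plate in row-major order: A1, A2, ..., H12."""
    return [f"{ascii_uppercase[r]}{c + 1}" for r in range(rows) for c in range(cols)]


def full_factorial_layout(factors: dict[str, list]) -> dict[str, dict]:
    """Enumerate every combination of factor levels and assign each to one well."""
    names = list(factors)
    combos = list(product(*(factors[name] for name in names)))
    wells = plate_wells()
    if len(combos) > len(wells):
        raise ValueError(f"{len(combos)} conditions exceed {len(wells)} wells")
    return {well: dict(zip(names, combo)) for well, combo in zip(wells, combos)}


layout = full_factorial_layout(FACTORS)
print(len(layout))  # 72
print(layout["A1"])  # first combination: the first level of every factor
```

With 72 of 96 wells used, wells G1 to H12 remain free for controls such as catalyst-free blanks. A real run would still need a step that converts each condition into dispense volumes from the stock concentrations, and the focused second round would reuse the same function with narrower levels.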

Protocol 2.2: Replicating the ChemCrow Multi-step Molecule Synthesis Workflow

Objective: To autonomously plan, validate, and propose synthetic routes for a target molecule using expert chemistry tools.

Materials & Reagents:

- ChemCrow agent with access to its 13+ tools (or equivalent APIs: LLM, RDKit, Reaxys/PubMed, etc.).

- Target molecule SMILES string (e.g., "CCN(CC)C(=O)Cc1c(OC)c(OC)c(OC)c(OC)c1OC", an N,N-diethylamide loosely related to DEET; DEET itself is "CCN(CC)C(=O)c1cccc(C)c1").

Procedure:

- Task Input: Provide the agent with the prompt: "Plan a synthesis for the molecule with SMILES: [Target SMILES]."

- Literature & Patent Review: The agent uses its Literature Search and Patent Search tools to find known synthetic approaches for the target or closely related analogs.

- Retrosynthetic Analysis: The agent uses the Reaction Planning tool (in the original ChemCrow, backed by the IBM RXN for Chemistry service, with RDKit handling molecule-level checks) to decompose the target into simpler precursors. This involves iterative application of chemical transformations.

- Route Validation & Safety: Proposed routes are checked using the Chemical Checker and Safety Checker tools to assess compound properties (e.g., solubility, synthetic accessibility score) and flag potentially hazardous intermediates/reagents.

- Precursor Sourcing: For the final proposed route, the agent uses the Reaxys Query tool to verify reported synthesis protocols for precursors and the Mol. Search tool to check commercial availability from compound vendors.

- Output Generation: The agent compiles a final report including: a ranked list of viable synthetic routes, a detailed step-by-step procedure for the top route (including reagents, solvents, and conditions), safety notes, and references to key literature.
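To make the ranking in the Output Generation step concrete, the sketch below scores candidate routes by overall yield (the product of the step yields for a linear route) and flags routes that use reagents on a hazard list. It is an illustrative assumption, not ChemCrow's implementation: the route data, the `HAZARD_LIST` contents, and the "unflagged first, then by yield" rule are all hypothetical.

```python
from dataclasses import dataclass, field
from math import prod


@dataclass
class Step:
    reaction: str
    reagents: list[str]
    yield_fraction: float  # isolated yield of this step, between 0 and 1


@dataclass
class Route:
    name: str
    steps: list[Step] = field(default_factory=list)

    def overall_yield(self) -> float:
        # For a linear route, the overall yield is the product of the step yields.
        return prod(step.yield_fraction for step in self.steps)

    def hazards(self, hazard_list: set[str]) -> list[str]:
        # Reagents in this route that appear on the supplied hazard list.
        return sorted({r for step in self.steps for r in step.reagents if r in hazard_list})


# Hypothetical hazard list; a real agent would call a safety-check tool instead.
HAZARD_LIST = {"thionyl chloride", "oxalyl chloride"}


def rank_routes(routes: list[Route], hazard_list: set[str]) -> list[tuple[str, float, list[str]]]:
    """Rank unflagged routes first, then by descending overall yield."""
    scored = [(r.name, r.overall_yield(), r.hazards(hazard_list)) for r in routes]
    return sorted(scored, key=lambda item: (bool(item[2]), -item[1]))


# Hypothetical candidate routes to an amide target.
routes = [
    Route("acid chloride", [Step("acid to acid chloride", ["thionyl chloride"], 0.95),
                            Step("amidation", ["diethylamine", "triethylamine"], 0.90)]),
    Route("coupling", [Step("amide coupling", ["diethylamine", "EDC", "HOBt"], 0.80)]),
]
for name, overall, flags in rank_routes(routes, HAZARD_LIST):
    print(f"{name}: overall yield {overall:.1%}, hazards {flags or 'none'}")
```

Here the coupling route ranks first despite its lower overall yield because the acid chloride route is flagged; a weighted composite score would be an equally reasonable design choice.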

3. Visualization of Agent Workflows

Diagram Title: ChemCrow Workflow for Autonomous Synthesis Planning

Diagram Title: Coscientist Iterative Experiment-Optimization Loop

4. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Hardware and Software "Reagents" for LLM-Agent Driven Experimentation

| Item/Tool | Category | Function in Protocol | Example/Supplier |
|---|---|---|---|
| GPT-4 API | Core LLM | Provides natural language reasoning, planning, and code generation capabilities. | OpenAI |
| Claude API | Core LLM | Alternative LLM for reasoning and safety-focused tasks. | Anthropic |
| RDKit | Software Library | Enables cheminformatics operations: molecule manipulation, reaction handling (e.g., applying reaction templates), and property calculation. | Open Source |
| Reaxys API | Database | Provides access to chemical reaction data, literature, and compound properties for route validation. | Elsevier |
| Automated Liquid Handler | Hardware | Executes precise liquid dispensing for high-throughput experimentation as directed by agent code. | Opentrons OT-2, Hamilton STAR |
| Plate Reader (Abs/Fluo) | Hardware | Enables high-throughput, parallel analysis of reaction outcomes in microtiter plates. | Tecan Spark, BioTek Synergy |
| Jupyter Kernel | Software Environment | Executes the agent's generated Python code for data analysis; a kernel is not a security boundary by itself, so it should run inside an isolated container or VM. | Project Jupyter |

Building Your Agent: A Step-by-Step Guide to Automating Research Workflows

1. Introduction: An LLM-Agent Framework for Automated Discovery

Within the thesis on LLM agents for autonomous research, this document provides Application Notes and Protocols for mapping the canonical discovery pipeline onto an automatable workflow. The goal is to deconstruct complex, human-centric processes into discrete, executable modules that an LLM-based supervisory agent can orchestrate. This blueprint covers the pipeline from initial hypothesis generation to lead candidate validation.

2. Pipeline Stage Mapping and Quantitative Benchmarks

The modern discovery pipeline, while varying by organization, follows a generalized sequence. The following table summarizes key stages, their primary objectives, and quantitative benchmarks for success based on industry data (sourced from recent literature and company white papers).

Table 1: Core Stages of the Discovery Pipeline with Performance Metrics

| Pipeline Stage | Primary Objective | Key Input(s) | Key Output(s) | Typical Success Rate* | Avg. Duration* | Automation Readiness (High/Med/Low) |
|---|---|---|---|---|---|---|
| Target Identification & Validation | Define a biological target (e.g., protein) linked to disease. | Genomic/Proteomic data, Disease association studies. | A validated molecular target with a known role in pathology. | ~50% (of hypotheses) | 6-12 months | Medium |
| Hit Identification | Find initial compounds/materials that show desired activity. | Target structure, Compound libraries (10^3-10^6). | Primary "Hits" with confirmed activity (e.g., % inhibition >70%). | 0.01-0.1% (of library) | 3-6 months | High |
| Lead Generation | Optimize hits for potency, selectivity, and preliminary ADMET. | Hit series (50-500 compounds). | 1-5 Lead series with improved properties. | ~20% (of hit series) | 12-18 months | Medium-High |
| Lead Optimization | Refine leads into preclinical candidates. | Lead series, In-depth PK/PD data. | 1-2 Preclinical Candidates meeting all candidate criteria. | ~10% (of lead series) | 12-24 months | Medium |
| Preclinical Development | Assess safety and efficacy in animal models. | Preclinical Candidate(s). | IND/CTA-enabling data package. | ~60% (of candidates) | 12-18 months | Low-Medium |

*Metrics are industry averages for small-molecule drug discovery; durations are for stage completion. Material discovery metrics differ in specifics but follow a similar phased structure.

3. Detailed Experimental Protocols for Key Automatable Stages

Protocol 3.1: Automated Virtual High-Throughput Screening (vHTS)

Objective: To computationally screen millions of compounds against a protein target to identify potential hits.

Materials: Target protein 3D structure (PDB format), small molecule library (e.g., ZINC20 in SDF format), vHTS software (e.g., AutoDock Vina, FRED, Schrödinger's Glide), high-performance computing cluster.

Methodology:

- Target Preparation: Using a tool such as UCSF Chimera or Schrödinger's Protein Preparation Wizard, add hydrogens, assign bond orders, model missing residues and side chains, and optimize the hydrogen-bonding network. Define the binding-site box.

- Ligand Preparation: Convert library to 3D conformations, generate tautomers/protomers, and assign partial charges (e.g., using Open Babel or LigPrep).

- Docking Execution: Run a standardized docking job for each ligand using the prepared target. Employ a scoring function (e.g., Vina, ChemPLP, GlideScore) to rank poses.

- Post-Processing: Apply filters for physicochemical properties, interaction patterns (e.g., a key hydrogen bond), and clustering. Select the top 500-1000 ranked compounds for purchase and experimental testing.

LLM-Agent Role: Parse the target validation report, formulate the docking parameter file, monitor job completion, and summarize results in a structured hit list.
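For the agent's task of formulating the docking parameter file, here is a sketch that assumes AutoDock Vina as the docking engine. Vina reads a plain-text `key = value` configuration file (keys include `receptor`, `ligand`, `center_x/y/z`, `size_x/y/z`, `exhaustiveness`, `num_modes`, and `out`); the file names and box coordinates below are hypothetical, and in practice the box would come from the binding site defined during Target Preparation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DockingBox:
    center: tuple[float, float, float]  # Å, in the receptor's coordinate frame
    size: tuple[float, float, float]    # Å, edge lengths of the search box


def vina_config(receptor: str, ligand: str, box: DockingBox, out: str,
                exhaustiveness: int = 8, num_modes: int = 9) -> str:
    """Render an AutoDock Vina configuration file (key = value lines)."""
    if any(edge <= 0 for edge in box.size):
        raise ValueError("box edge lengths must be positive")
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{axis} = {c:.3f}" for axis, c in zip("xyz", box.center)]
    lines += [f"size_{axis} = {s:.3f}" for axis, s in zip("xyz", box.size)]
    lines += [f"exhaustiveness = {exhaustiveness}",
              f"num_modes = {num_modes}",
              f"out = {out}"]
    return "\n".join(lines) + "\n"


# Hypothetical file names and box; the box would come from Target Preparation.
box = DockingBox(center=(12.5, -3.0, 22.1), size=(20.0, 20.0, 20.0))
config_text = vina_config("target_prepared.pdbqt", "ligand_0001.pdbqt", box,
                          "ligand_0001_docked.pdbqt")
print(config_text)
```

The agent would write this text to a file and launch `vina --config <file>` once per ligand (Vina expects PDBQT inputs), with the cluster scheduler handling parallelism.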

Protocol 3.2: Parallel Medicinal Chemistry (PMC) and ADMET Screening

Objective: To synthesize and test analog series from a hit compound in parallel.

Materials: Hit compound, building block libraries, automated synthesis platform (e.g., Chemspeed, Opentrons), HPLC-MS for purification/analysis, 96/384-well plates, assay reagents, automated liquid handler.

Methodology:

- Design of Experiment (DoE): Use library design software (e.g., Schrödinger's CombiGlide) to design a library of 50-100 analogs based on SAR and predicted properties.

- Automated Synthesis: Execute parallel synthesis protocols on an automated platform. Monitor reactions via inline spectroscopy.

- Purification & QC: Automatically purify compounds via preparative HPLC-MS. Confirm identity and purity (>95%) via analytical LC-MS.

- Parallel Biological & ADMET Screening:

a. Potency Assay: Run a target inhibition assay (e.g., fluorescence-based) in 384-well format.

b. Microsomal Stability: Incubate compounds with liver microsomes and quantify the parent compound remaining over time by LC-MS/MS.

c. Caco-2 Permeability: Assess apparent permeability in a Caco-2 cell monolayer model.

- Data Integration: Collate all data into a structure-activity and structure-property relationship (SAR/SPR) table.

LLM-Agent Role: Propose analog structures based on learned SAR, translate synthesis plans into robot instructions, and integrate multi-modal assay data to recommend the next optimization cycle.
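The Microsomal Stability readout is usually reduced to a half-life and an intrinsic clearance before it enters the SAR/SPR table. The sketch below shows that standard calculation under the usual assumption of first-order depletion: fit ln(% parent remaining) against time, take the rate constant k = -slope, then t1/2 = ln 2 / k and CLint = k × V / P, where V is the incubation volume (µL) and P the microsomal protein (mg). The 500 µL and 0.25 mg defaults and the example readout are illustrative assumptions, not values from the protocol.

```python
import math


def microsomal_clearance(times_min, pct_remaining, volume_ul=500.0, protein_mg=0.25):
    """Fit ln(% remaining) vs. time; return (k [1/min], t1/2 [min], CLint [uL/min/mg])."""
    if len(times_min) != len(pct_remaining) or len(times_min) < 2:
        raise ValueError("need at least two paired time points")
    ys = [math.log(p) for p in pct_remaining]  # first-order decay is linear in ln space
    n = len(times_min)
    mean_t, mean_y = sum(times_min) / n, sum(ys) / n
    s_tt = sum((t - mean_t) ** 2 for t in times_min)
    if s_tt == 0:
        raise ValueError("time points must not all be equal")
    slope = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys)) / s_tt
    k = -slope  # elimination rate constant
    if k <= 0:
        raise ValueError("no measurable depletion of the parent compound")
    t_half = math.log(2) / k
    cl_int = k * volume_ul / protein_mg  # scaled to the protein in the incubation
    return k, t_half, cl_int


# Hypothetical LC-MS/MS readout: % parent remaining at each sampling time.
k, t_half, cl_int = microsomal_clearance([0, 5, 15, 30, 45], [100, 81, 55, 30, 17])
print(f"k = {k:.4f} /min, t1/2 = {t_half:.1f} min, CLint = {cl_int:.0f} uL/min/mg")
```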

4. Visualizing the Automated Workflow

Diagram Title: LLM-Agent Orchestrated Discovery Pipeline

Diagram Title: Automated Hit Identification Workflow

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Platforms for Automated Discovery

| Item Name | Category | Primary Function in Automated Workflow |
|---|---|---|
| ZINC20 / ChEMBL Databases | Digital Library | ZINC20 provides commercially available, synthetically accessible compound structures for virtual screening; ChEMBL provides curated bioactivity data for known compounds. |
| AlphaFold2 DB | Digital Tool | Supplies high-accuracy predicted protein structures for targets lacking experimental 3D data. |
| Tecan Fluent / Hamilton Microlab STAR | Liquid Handling Robot | Automates plate-based assays, reagent additions, and serial dilutions for HTS and ADMET panels. |
| Chemspeed Technologies SWING | Automated Synthesis | Enables unattended parallel synthesis, work-up, and purification of compound libraries. |
| Corning Matrigel | Extracellular Matrix | Used in cell-based assays (e.g., invasion, organoid) to mimic the in vivo microenvironment. |
| LC-MS/MS System (e.g., Sciex Triple Quad) | Analytical Instrument | Provides quantitative analysis for PK/ADMET assays (stability, permeability, exposure). |
| Promega P450-Glo Assay | Biochemical Assay Kit | Ready-to-use luminescent assay for cytochrome P450 inhibition screening, amenable to automation. |
| Eurofins Panlabs Selectivity Panel | Outsourced Service | Provides broad pharmacological profiling against key off-targets to assess lead compound selectivity. |

Within the thesis on LLM agents for automated materials research and drug development, tool integration is the critical enabler. It transforms LLMs from conversational models into actionable research agents. This document details the application notes and protocols for connecting LLMs to three core tool categories: databases (for knowledge retrieval), simulators (for in-silico prediction), and physical lab equipment (for automated experimentation). This creates a closed-loop system for hypothesis generation, testing, and analysis.

Application Notes & Current Capabilities

Developments in 2023-2024 show a move from prototypes toward use in working research environments. The key paradigm is the LLM acting as a reasoning engine that selects and orchestrates tools through structured APIs.
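The tool-selection pattern can be sketched independently of any vendor SDK. Below, each tool is registered with a JSON-Schema-style parameter description (the general shape most function-calling APIs accept), and a dispatcher validates and executes a tool call that the model has emitted as JSON. The tool name, the inventory data, and the call format are hypothetical; a production agent would send `tool_schemas()` to the LLM API and return each dispatch result to the model as a new message.

```python
import json
from typing import Any, Callable, Optional

TOOLS: dict[str, dict[str, Any]] = {}


def register(name: str, description: str, parameters: dict):
    """Decorator that records a tool's JSON-Schema description and implementation."""
    def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = {"description": description, "parameters": parameters, "fn": fn}
        return fn
    return wrap


@register(
    "get_sample_mass_mg",
    "Return the recorded mass in mg of a sample from the lab inventory.",
    {"type": "object",
     "properties": {"sample_id": {"type": "string"}},
     "required": ["sample_id"]},
)
def get_sample_mass_mg(sample_id: str) -> Optional[float]:
    # Hypothetical stub standing in for an inventory or ELN database query.
    return {"S-001": 12.5, "S-002": 8.0}.get(sample_id)


def tool_schemas() -> list[dict]:
    """Schemas to send to the LLM (implementations stay on the server side)."""
    return [{"name": n, "description": t["description"], "parameters": t["parameters"]}
            for n, t in TOOLS.items()]


def dispatch(tool_call_json: str) -> str:
    """Run a model-emitted call of the form {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call.get("name"))
    if tool is None:
        return json.dumps({"error": f"unknown tool: {call.get('name')}"})
    args = call.get("arguments", {})
    missing = [p for p in tool["parameters"].get("required", []) if p not in args]
    if missing:
        return json.dumps({"error": f"missing arguments: {missing}"})
    return json.dumps({"result": tool["fn"](**args)})


print(dispatch('{"name": "get_sample_mass_mg", "arguments": {"sample_id": "S-001"}}'))
```

Errors are returned to the model as JSON rather than raised, so the agent can read them and retry with a corrected call.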

Table 1: Current LLM-Tool Integration Frameworks & Applications

| Framework/Platform | Primary Use Case | Key Tools Integrated | Notable Research Application |
|---|---|---|---|
| LangChain / LangGraph, AutoGPT | General-purpose agent construction | SQL DBs, APIs, code exec, file I/O | Orchestrating multi-step literature search & data analysis pipelines. |
| ChemCrow | Domain-specific (chemistry) agent | PubChem, RDKit, IBM RXN for Chemistry, literature search | Planning synthetic pathways and predicting reaction outcomes. |
| Research Agent (OpenAI) | Code-based research tasks | Python, data analysis libs, web search | Automated data visualization and statistical testing. |
| LabAutomation Hub | Physical experiment control | HTTP/OPC UA for devices, ELN APIs | Direct scheduling and execution on HPLC, liquid handlers. |
| Coscientist (Nature, 2023) | Automated experimentation | Plate readers, liquid handlers, cloud lab APIs | Executed Suzuki–Miyaura cross-coupling reactions autonomously. |

Table 2: Quantitative Performance of LLM-Agent Systems in Research Tasks

| System & Task | Metric | Result | Benchmark/Control |
|---|---|---|---|
| Coscientist (Planning/Executing Chemistry) | Success Rate (Simple Reactions) | 100% | Manual execution (100%) |
| Coscientist (Planning/Executing Chemistry) | Success Rate (Complex Reactions) | ~50% | Manual execution (Higher, but time-intensive) |
| LLM + SQL Tool (Data Retrieval Accuracy) | Precision on Complex Queries | ~85% | Expert human query (100%) |
| LLM + DFT Simulator (Workflow Orchestration) | Time to Completed Simulation | Reduced from 2 hrs to 15 mins | Manual setup & execution |
| GPT-4 + Code Interpreter (Data Analysis) | Correct Analysis Selection | 78% (On novel datasets) | Graduate student (85%) |

Detailed Experimental Protocols

Protocol 1: LLM-Agent for High-Throughput Virtual Screening

Objective: To autonomously screen a compound database for target binding affinity using a cloud-based molecular dynamics simulator.

Materials: LLM API (e.g., GPT-4 with function calling), molecular database (e.g., a ZINC20 subset), simulation API (e.g., Desmond on AWS), results database (PostgreSQL).

Procedure:

1. Task Decomposition Prompt: The user provides the target protein PDB ID and the desired property filters (e.g., MW < 500, LogP < 5).

2. LLM Tool Selection: The agent sequentially calls (a) the SQL tool to query a local copy of the ZINC20 subset for matching compounds and (b) the code tool to format the retrieved SMILES into simulation input files.

3. Simulation Orchestration: The agent uses the HTTP request tool to submit each compound to the simulator's job queue via its REST API and monitors job status.

4. Data Aggregation: Upon completion, the agent retrieves the results (e.g., binding energy), parses them, and uses the SQL tool to insert structured data into the results database.

5. Report Generation: The agent analyzes the result set, identifies top hits, and generates a summary text and visualization code for the user.
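The SQL tool calls in steps 2, 4, and 5 can be illustrated with Python's built-in `sqlite3` standing in for PostgreSQL. The table layout, column names, compound IDs, and binding energies are hypothetical; the point of the sketch is that the agent should emit parameterized queries rather than splicing filter values into SQL text.

```python
import sqlite3

# In-memory stand-in for the project database; compounds and values are illustrative.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE compounds (compound_id TEXT PRIMARY KEY, smiles TEXT, mw REAL, logp REAL);
CREATE TABLE results (compound_id TEXT REFERENCES compounds(compound_id),
                      binding_energy REAL);
""")
conn.executemany("INSERT INTO compounds VALUES (?, ?, ?, ?)", [
    ("CMPD-1", "Oc1ccccc1", 94.11, 1.46),
    ("CMPD-2", "CCCCCCCCCCCCCCCCCC(=O)O", 284.48, 8.2),
    ("CMPD-3", "CC(=O)Nc1ccc(O)cc1", 151.16, 0.46),
])


def select_candidates(conn, max_mw=500.0, max_logp=5.0):
    """Step 2(a): property-filtered query with bound parameters, not string formatting."""
    return conn.execute(
        "SELECT compound_id, smiles FROM compounds WHERE mw < ? AND logp < ? "
        "ORDER BY compound_id", (max_mw, max_logp)).fetchall()


def store_results(conn, rows):
    """Step 4: insert (compound_id, binding_energy) pairs parsed from the simulator."""
    conn.executemany("INSERT INTO results VALUES (?, ?)", rows)
    conn.commit()


def top_hits(conn, n=10):
    """Step 5: most negative binding energy first (stronger predicted binding)."""
    return conn.execute(
        "SELECT compound_id, binding_energy FROM results "
        "ORDER BY binding_energy ASC LIMIT ?", (n,)).fetchall()


candidates = select_candidates(conn)
store_results(conn, [("CMPD-1", -5.1), ("CMPD-3", -6.4)])  # hypothetical energies
print(candidates, top_hits(conn, n=1))
```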

Protocol 2: Autonomous Characterization of Optical Materials

Objective: To have an LLM agent control lab equipment to measure the absorption spectrum of a novel perovskite film.

Materials: LLM agent (e.g., custom Python agent using Claude 3), automated spectrophotometer (with HTTP/RS-232 API), sample handler robot, Electronic Lab Notebook (ELN) with API.

Procedure:

1. Sample ID Input: The user provides the sample ID. The agent queries the ELN via its API to fetch the sample details and the expected protocol.

2. Instrument Parameterization: The agent sends commands to the spectrophotometer to set parameters: wavelength range (350-850 nm), scan speed, beam intensity.

3. Sample Handling Command: The agent directs the sample handler robot (via HTTP POST) to retrieve the specified sample from a storage tray and load it into the spectrophotometer.

4. Execution & Data Capture: The agent sends the "start_measurement" command. Once the scan finishes, it retrieves the data file from the instrument's local server.

5. Data Processing & Logging: The agent runs a predefined Python script that converts the spectrum into a Tauc plot and extracts the optical bandgap. It then formats the results, posts them to the ELN entry for the sample, and tags the experiment as complete.
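The Tauc analysis in the data-processing step can be sketched as follows, assuming a direct allowed transition (the usual assumption for lead-halide perovskites), so (αhν)² is plotted against photon energy hν = 1239.84 / λ eV (λ in nm) and the linear region is extrapolated to zero. Here α is the absorption coefficient, which for a film of thickness d can be approximated from absorbance A as 2.303·A/d if reflection is ignored. The fit window is an input the agent or user must choose; automatic selection of the linear region is not attempted in this sketch.

```python
def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy in eV from wavelength in nm (E = hc / lambda, hc ~ 1239.84 eV nm)."""
    return 1239.84 / wavelength_nm


def tauc_bandgap(wavelengths_nm, alpha, fit_window_ev, exponent=2.0):
    """Estimate Eg (eV) from the linear region of a Tauc plot.

    exponent=2.0 plots (alpha*h*nu)^2 for a direct allowed transition;
    use 0.5 for an indirect allowed transition.
    """
    lo, hi = fit_window_ev
    points = []
    for wl, a in zip(wavelengths_nm, alpha):
        energy = photon_energy_ev(wl)
        if lo <= energy <= hi:
            points.append((energy, (a * energy) ** exponent))
    if len(points) < 2:
        raise ValueError("fewer than two points inside the fit window")
    n = len(points)
    mean_e = sum(e for e, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    s_ee = sum((e - mean_e) ** 2 for e, _ in points)
    slope = sum((e - mean_e) * (y - mean_y) for e, y in points) / s_ee
    if slope <= 0:
        raise ValueError("no rising absorption edge inside the fit window")
    intercept = mean_y - slope * mean_e
    return -intercept / slope  # energy at which the fitted line reaches zero
```

A typical call is `tauc_bandgap(wavelengths, alpha, fit_window_ev=(1.65, 2.0))`, where the window brackets the steep part of the absorption edge; logging the chosen window alongside the result keeps the ELN entry reproducible.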

Diagrams

Diagram Title: LLM Agent Tool Integration Architecture

Diagram Title: Virtual Screening Agent Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for LLM-Driven Research Integration

| Tool/Reagent Category | Specific Example(s) | Function in Workflow |
|---|---|---|
| LLM Frameworks | LangChain, LlamaIndex, DSPy | Provides scaffolding to define tools, memory, and reasoning loops for the agent. |
| API Wrappers | Custom Python classes for RDKit, PySCF, ASE | Translates LLM output into domain-specific commands for analysis/simulation. |
| Laboratory Hardware APIs | Manufacturer SDKs (e.g., Opentrons HTTP API, Agilent iLab) | Allows programmatic control of pipetting robots, spectrophotometers, etc. |
| Cloud Lab Interfaces | Strateos, Emerald Cloud Lab APIs | Abstraction layer to submit experimental protocols to remote robotic labs. |
| Data Broker & ELNs | TileDB, PostgreSQL, Benchling API | Structured storage for experimental data and metadata that the LLM can query/update. |
| Authentication Vaults | HashiCorp Vault, AWS Secrets Manager | Securely manages API keys and credentials for all connected tools and databases. |

Application Notes

In the domain of automated materials and drug discovery research workflows, LLM agents function as orchestration engines. The reliability of their reasoning is directly contingent upon the precision of the instruction prompts they execute. These notes detail the principles and applications of prompt engineering for scientific agentic systems.

Core Principles:

- Decomposition & Stepwise Execution: Complex research tasks (e.g., "design a novel organic photovoltaic material") must be decomposed into sequential, verifiable sub-tasks for the agent (e.g., literature review → property calculation → stability assessment).

- Contextual Grounding: Prompts must provide explicit domain context, including the physical models and approximations in use (e.g., the DFT exchange-correlation functional), safety constraints (e.g., toxicity filters), and standard conventions (e.g., IUPAC naming).

- Output Structuring: Mandating structured outputs (JSON, Markdown tables) ensures machine-readable results for downstream workflow integration.

- Uncertainty Quantification: Prompts must instruct the agent to report confidence levels, cite data sources, and flag inconsistencies in literature or computational results.
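Output Structuring and Uncertainty Quantification can be enforced in code rather than trusted to the prompt alone. A minimal sketch: parse the agent's reply as JSON and reject it unless it carries the required fields, a confidence in [0, 1], and at least one source. The field names are hypothetical; libraries such as `jsonschema` or Pydantic offer fuller validation.

```python
import json

# Hypothetical output contract: field name -> accepted Python type(s).
REQUIRED = {"answer": str, "confidence": (int, float), "sources": list}


def validate_agent_output(raw: str) -> dict:
    """Parse an agent reply and raise ValueError if it breaks the output contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("top-level value must be an object")
    for key, expected in REQUIRED.items():
        if key not in data:
            raise ValueError(f"missing field: {key}")
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(data[key], expected) or isinstance(data[key], bool):
            raise ValueError(f"wrong type for field: {key}")
    if not 0.0 <= data["confidence"] <= 1.0:
        raise ValueError("confidence must lie in [0, 1]")
    if not data["sources"]:
        raise ValueError("at least one source is required")
    return data
```

On a `ValueError`, the orchestrator can send the error message back to the agent and request a corrected reply, so malformed outputs never reach downstream workflow steps.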

Quantitative Benchmarking of Prompting Strategies: Recent studies evaluate prompting strategies on scientific reasoning benchmarks. Key metrics include accuracy, reliability (variance across runs), and computational cost.

Table 1: Performance of Prompting Strategies on Scientific QA Benchmarks (MMLU Physics & Chemistry)

| Prompting Strategy | Average Accuracy (%) | Score Variance (±%) | Avg. Tokens per Task | Best Use Case |
|---|---|---|---|---|
| Zero-Shot Chain-of-Thought | 72.1 | 8.5 | 450 | Simple, well-defined property queries |
| Few-Shot with Examples | 78.6 | 5.2 | 1200 | Protocol following, data extraction |
| Self-Consistency (5 samples) | 81.3 | 3.1 | 2250 | High-stakes reasoning, hypothesis generation |
| Tool-Augmented (Calculator, API) | 85.4 | 4.7 | 1800 | Numerical computation, database lookup |
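Self-consistency samples several reasoning paths for the same question and keeps the most frequent final answer. The aggregation step is short to sketch; the sampled answers in the usage line are made-up placeholders, and reporting the agreement fraction as a reliability signal is an assumed, optional addition.

```python
from collections import Counter


def self_consistent_answer(samples: list[str]) -> tuple[str, float]:
    """Majority vote over normalized final answers; returns (answer, agreement)."""
    if not samples:
        raise ValueError("no samples")
    normalized = [s.strip().lower() for s in samples]
    counts = Counter(normalized)
    # Ties resolve to the answer seen first, since Counter preserves insertion order.
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(normalized)


print(self_consistent_answer(["B", "b ", "C", "B", "A"]))  # ('b', 0.6)
```

Low agreement (for example, no answer above half the votes) is a natural trigger for escalating the question to a tool call or a human reviewer.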

Application in Materials Workflow: A prompt-engineered agent for precursor selection in chemical vapor deposition (CVD) was benchmarked. The agent, using a few-shot prompt with reaction templates, achieved a 92% match with expert-chosen precursors from a database of 500 compounds, compared to 65% for a baseline keyword-search agent.

Experimental Protocols

Protocol 1: Benchmarking Agent Reliability for Literature-Based Hypothesis Generation

Objective: To quantitatively assess the reproducibility and citation integrity of hypotheses generated by an LLM agent for a given materials science problem.

Materials:

- LLM API access (e.g., GPT-4, Claude 3).

- Custom Python orchestration framework.

- Scientific corpus (e.g., ArXiv materials science subset, PubMed Central).

- Evaluation rubric (scoring 1-5 for novelty, feasibility, citation support).

Procedure:

- Prompt Design: Create three prompt variants:

- V1 (Basic): "Generate a research hypothesis for improving the stability of perovskite solar cells."

- V2 (Structured): "Generate a hypothesis. Structure output as: 1. Hypothesis Statement, 2. Proposed Mechanism (≤100 words), 3. Key Supporting Citations (DOIs/PMIDs), 4. Proposed Experimental Validation."

- V3 (Critique-Augmented): "First, list known degradation pathways for perovskite solar cells from the last 5 years. Then, generate a hypothesis to address the most cited pathway. Provide citations."

- Agent Execution: Run each prompt variant through the LLM agent 10 times (n = 10 per variant) at a sampling temperature of 0.3.

- Output Collection: Log all outputs, including any retrieved document identifiers.

- Validation & Scoring: For each hypothesis:

- Verify the existence and contextual relevance of provided citations.

- Score each hypothesis using the rubric via three independent expert raters.

- Calculate the inter-rater reliability (Fleiss' Kappa).

- Analysis: Compute the average hypothesis score, variance, and citation accuracy rate per prompt variant. Perform ANOVA to determine if differences are significant (p < 0.05).
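The inter-rater statistic in the Validation & Scoring step can be computed without extra dependencies. The sketch below implements Fleiss' kappa from an items × categories count matrix (rows are hypotheses, columns are rubric scores 1 to 5, entries count how many of the three raters chose that score). Fleiss' kappa treats the scores as unordered categories, so an ordinal statistic such as Krippendorff's alpha may suit a Likert rubric better; `statsmodels.stats.inter_rater.fleiss_kappa` offers a library implementation. The ratings matrix is hypothetical.

```python
def fleiss_kappa(counts: list[list[int]]) -> float:
    """Fleiss' kappa for an items x categories matrix of rating counts."""
    if not counts:
        raise ValueError("no items")
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number (>= 2) of raters")
    n_items, n_cats = len(counts), len(counts[0])
    # Observed agreement: mean pairwise agreement within each item.
    p_bar = sum(
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items
    # Chance agreement from the overall category proportions.
    p_cat = [sum(row[j] for row in counts) / (n_items * n_raters) for j in range(n_cats)]
    p_e = sum(p * p for p in p_cat)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when every rating is the same category")
    return (p_bar - p_e) / (1 - p_e)


# Hypothetical ratings: 4 hypotheses, 3 raters, rubric scores 1-5 (columns).
ratings = [
    [0, 0, 0, 3, 0],
    [0, 0, 1, 2, 0],
    [0, 2, 1, 0, 0],
    [0, 0, 0, 1, 2],
]
print(f"Fleiss' kappa = {fleiss_kappa(ratings):.3f}")
```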

Protocol 2: Multi-Agent Workflow for Drug Lead Analog Generation

Objective: To demonstrate a prompt-engineered pipeline where specialized agents collaborate to generate and evaluate novel drug analogs.

Materials:

- Multi-agent platform (e.g., AutoGen, LangGraph).

- Access to chemistry tools (e.g., RDKit via API, molecular docking simulator).

- SMILES database of known active compounds.

Procedure:

- Agent & Prompt Specification:

- Reviewer Agent Prompt: "Analyze the provided lead compound [SMILES]. Summarize its key pharmacophores, potential off-target effects based on substructure, and recommend 3 structural modification directions. Output JSON."

- Designer Agent Prompt: "Based on the review, generate 5 novel analog SMILES for direction [X]. Apply Lipinski's Rule of 5 and require a synthetic accessibility score below 4.5 (the SA score runs from 1, easy to make, to 10, very hard). Output a table."

- Evaluator Agent Prompt: "For each generated SMILES, calculate molecular weight, logP, number of H-bond donors/acceptors, and estimate binding affinity via the provided QSAR model [provide API call template]."

- Workflow Orchestration: Implement the sequence: Reviewer → Designer → Evaluator. Pass structured outputs between agents.

- Iteration Loop: Program the Designer agent to iterate based on the Evaluator's scores, aiming to improve the binding affinity estimate.

- Validation: For the top 3 generated analogs, execute actual molecular docking simulations (outside the LLM loop) and compare results with the agent's QSAR estimates. Calculate the Pearson correlation coefficient.
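The Validation step reduces to a Pearson correlation between the agent's QSAR estimates and the docking scores. A dependency-free sketch follows (Python 3.10+ also provides `statistics.correlation`). With only three analogs there is a single degree of freedom, and |r| must exceed roughly 0.997 to reach p < 0.05 (two-tailed), so validating a larger set of analogs gives a far more informative estimate. The example values are hypothetical.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of paired samples."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired values")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    if s_xx == 0 or s_yy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return s_xy / math.sqrt(s_xx * s_yy)


# Hypothetical values (kcal/mol) for the top three analogs.
qsar_estimates = [-7.2, -6.8, -8.1]
docking_scores = [-7.9, -7.1, -8.6]
print(f"r = {pearson_r(qsar_estimates, docking_scores):.2f}")
```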

Visualizations

Multi-Agent Research Workflow for Materials Discovery

Self-Correcting Prompt Loop for Drug Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Prompt Engineering Experiments

| Item / Solution | Function in the Protocol | Example / Specification |
|---|---|---|
| LLM API Access | Core reasoning engine. Requires configurable parameters (temperature, top_p). | OpenAI GPT-4 API, Anthropic Claude 3 API, open-source Llama 3 via inference endpoint. |
| Orchestration Framework | Manages agent roles, prompt templates, and message passing. | LangChain, AutoGen, custom scripts using LangGraph. |
| Benchmark Datasets | Quantitative evaluation of agent performance on scientific tasks. | MMLU STEM subsets, SciBench, customized materials/drug discovery Q&A pairs. |
| Tool Augmentation APIs | Provides domain-specific computational capabilities to the agent. | RDKit (chemistry), Materials Project REST API (materials), Docking score simulator (biology). |
| Retrieval-Augmented Generation (RAG) System | Grounds agent responses in verified, up-to-date literature. | Vector database (Chroma, Weaviate) indexed with PDFs from PubMed, ArXiv. |
| Evaluation Rubric | Standardized scoring system for qualitative assessment of outputs. | 5-point Likert scales for accuracy, novelty, feasibility, clarity. Requires expert raters. |
| Statistical Analysis Package | Analyzes result variance, significance, and correlation metrics. | Python (SciPy, statsmodels) for ANOVA, t-tests, correlation calculations. |

This application note details the development and implementation of an LLM-driven autonomous agent workflow for the discovery and synthetic analysis of polymers with target properties. Framed within broader research on LLM agents for automated materials science, this system integrates live data retrieval, multi-step reasoning, and computational prediction to accelerate the design-make-test cycle for polymeric materials.

Core Methodology & Workflow

Agent Architecture

The autonomous system is built on a modular agent framework. The Search Agent queries scientific databases for polymer property data. The Analysis Agent processes this data against target parameters. The Synthesis Pathway Agent retrieves and evaluates published synthetic routes. A Planner/Orchestrator LLM agent coordinates the sequence, manages context, and interprets results.

Live search results (performed on 2026-01-10) for key high-performance polymer classes are summarized below.

Table 1: Target Properties for Selected Polymer Classes

| Polymer Class | Example Monomers | Target Tg Range (°C) | Target Tensile Strength (MPa) | Key Application |
|---|---|---|---|---|
| Polyimides | PMDA, ODA | 250 - 400 | 100 - 250 | Aerospace, flexible electronics |
| Polyarylates | BPA, Terephthaloyl chloride | 150 - 200 | 60 - 80 | Optical films, high-barrier packaging |
| Fluoropolymers | Tetrafluoroethylene, Hexafluoropropylene | 70 - 160 | 20 - 40 | Chemical-resistant coatings, membranes |
| Bio-based Polyesters | FDCA, Isosorbide | 80 - 150 | 50 - 70 | Sustainable packaging, fibers |

Table 2: Synthesis Pathway Metrics for Polyimide Formation

| Pathway Step | Reagent/Condition | Typical Yield (%) | Reported Energy Cost (kJ/mol)* | Key Hazard Indicator |
|---|---|---|---|---|
| Poly(amic acid) Formation | Dianhydride + Diamine in NMP | 95-99 | 120-150 | Low (solvent handling) |
| Polycondensation | Thermal, 180-220°C | 98-99.5 | 200-300 | Medium (high temp) |
| Imidization | Chemical (Acetic Anhydride/Pyridine) | 95-98 | 150-200 | Medium (corrosive reagents) |
| Imidization | Thermal (300°C) | >99 | 400-500 | High (very high temp) |

*Estimated from literature enthalpy data.

Experimental Protocols

Protocol: Automated Literature Mining for Polymer Properties

Objective: To programmatically extract glass transition temperature (Tg) and mechanical property data for candidate polymers.

- Agent Action: The Search Agent is prompted with a structured query: "polyimide glass transition temperature Tg" AND "synthesis" AND 2020:2026[PDAT].

- Data Retrieval: The agent accesses APIs from PubMed Central and the Springer Nature public data repository. It filters for open-access full-text articles.

- Data Parsing: Using predefined extraction rules, the agent identifies numerical values and units following phrases like "Tg =", "glass transition", "tensile strength", and "MPa".

- Validation & Tabulation: Extracted data is cross-referenced across multiple sources. Outliers are flagged for review. Validated data is structured into a table format (as in Table 1).
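The Data Parsing rules can be sketched with regular expressions. The patterns below are illustrative assumptions: they catch simple phrasings such as "Tg = 385 °C" or "tensile strength of 114.5 MPa" but will miss ranges, table cells, and values separated from their keyword, which is why the Validation & Tabulation step still cross-checks sources.

```python
import re

# Illustrative patterns: a keyword, up to 20 non-digit characters, a number, a unit.
TG_PATTERN = re.compile(
    r"(?:\bTg\b|glass transition temperature)\D{0,20}?(-?\d+(?:\.\d+)?)\s*°?\s*C\b",
    re.IGNORECASE,
)
TS_PATTERN = re.compile(
    r"tensile strength\D{0,20}?(\d+(?:\.\d+)?)\s*MPa\b",
    re.IGNORECASE,
)


def extract_properties(text: str) -> dict[str, list[float]]:
    """Return every Tg (°C) and tensile strength (MPa) value matched in the text."""
    return {
        "Tg_C": [float(v) for v in TG_PATTERN.findall(text)],
        "tensile_strength_MPa": [float(v) for v in TS_PATTERN.findall(text)],
    }


# Made-up sentence for illustration only.
sample = ("The film showed Tg = 385 °C and a tensile strength of 114.5 MPa. "
          "A second film had a glass transition temperature (Tg) of 310 °C.")
print(extract_properties(sample))
```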

Protocol: In-Silico Synthesis Pathway Analysis

Objective: To evaluate the feasibility, cost, and safety of a retrieved polymer synthesis route.

- Pathway Retrieval: For a target polymer (e.g., PMDA-ODA polyimide), the Synthesis Pathway Agent extracts reaction steps from patents (USPTO database) and methods sections.

- Step Decomposition: Each step is broken into: reagents, solvents, catalysts, temperature, time, and reported yield.

- Green Metrics Calculation: The agent computes simple metrics:

- Approximate Atom Economy: (MW of polymer repeat unit) / Σ(MW of all reactants) x 100%.

- Hazard Score: Binary flag for high-temperature (>250°C) or high-pressure conditions, or use of major corrosive/toxic reagents.

- Complexity Score: Number of synthetic steps and purification stages.

- Pathway Ranking: Routes are ranked by a composite score weighing yield, atom economy, and inverse hazard/complexity scores.
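As a worked example of the Approximate Atom Economy metric, take the PMDA-ODA polyimide named above. The repeat unit C22H10N2O5 forms from PMDA (C10H2O6) and ODA (C12H12N2O) with the loss of two water molecules, so only the two monomers enter the denominator. The helper parses simple molecular formulas (no parentheses or hydrates) using standard atomic weights; it is a sketch, not a general formula parser.

```python
import re

# Standard atomic weights (g/mol) for the elements needed here.
ATOMIC_WEIGHTS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999}


def molar_mass(formula: str) -> float:
    """Molar mass of a simple formula such as 'C10H2O6' (no parentheses)."""
    parts = re.findall(r"([A-Z][a-z]?)(\d*)", formula)
    if "".join(sym + num for sym, num in parts) != formula:
        raise ValueError(f"unsupported formula: {formula}")
    total = 0.0
    for symbol, count in parts:
        if symbol not in ATOMIC_WEIGHTS:
            raise KeyError(f"no atomic weight for {symbol}")
        total += ATOMIC_WEIGHTS[symbol] * (int(count) if count else 1)
    return total


def atom_economy(product: str, reactants: list[str]) -> float:
    """Atom economy in %: product (repeat unit) mass over total reactant mass."""
    return 100.0 * molar_mass(product) / sum(molar_mass(r) for r in reactants)


# PMDA-ODA polyimide: repeat unit C22H10N2O5 from PMDA + ODA, releasing 2 H2O.
ae = atom_economy("C22H10N2O5", ["C10H2O6", "C12H12N2O"])
print(f"Atom economy: {ae:.1f} %")  # Atom economy: 91.4 %
```

If the acetic anhydride and pyridine used for chemical imidization were also counted as reactants, as the "all reactants" wording permits, the figure would drop substantially, so the agent should report which convention it applied.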

Visualizations

Polymer Discovery Agent Workflow

Polyimide Synthesis Pathway Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Polymer Synthesis & Analysis

| Item | Function in Protocol | Key Consideration for Automation |
|---|---|---|
| N-Methyl-2-pyrrolidone (NMP) | High-boiling, polar aprotic solvent for polycondensation (e.g., polyimide formation). | Toxicity profile requires automated handling in closed systems. |
| Dianhydride Monomers (e.g., PMDA) | Core building block for condensation polymers. Provides rigidity and thermal stability. | Moisture sensitivity; agents must recommend dry storage/handling conditions. |
| Diamine Comonomers (e.g., ODA) | Co-reactant with dianhydrides. Chain structure dictates final polymer properties. | Structure-property database is essential for agent-led selection. |
| Acetic Anhydride/Pyridine Mix | Chemical imidization agents for converting poly(amic acid) to polyimide at lower temperatures. | Corrosive mixture; agent must flag safety protocols and waste disposal. |
| Deuterated Solvents (e.g., DMSO-d6) | For nuclear magnetic resonance (NMR) spectroscopy to confirm monomer structure and polymer purity. | High cost; agent should suggest minimal required volumes for analysis. |
| Size Exclusion Chromatography (SEC) System | For determining polymer molecular weight (Mw, Mn) and dispersity (Ð), critical for property prediction. | Agent needs to parse and interpret complex chromatogram data outputs. |
| Thermogravimetric Analyzer (TGA) | Measures thermal decomposition temperature, a key stability metric for high-performance polymers. | Quantitative data (e.g., Td5%, the temperature at 5% mass loss) can be exported directly from the instrument software for agent use. |

Executive Application Notes

Early-stage drug candidate screening is a critical bottleneck in pharmaceutical R&D, characterized by high costs, lengthy timelines, and low hit-to-lead success rates. Integrating Large Language Model (LLM) based multi-agent systems into this workflow represents a paradigm shift within automated materials research. These systems deploy specialized, collaborative AI agents to autonomously execute and coordinate complex sub-tasks, dramatically accelerating the virtual and in vitro phases of screening.

Reported implementations indicate that a multi-agent framework can shorten the initial compound library triage cycle from several weeks to days. By automating literature synthesis, target prioritization, in silico docking, ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) prediction, and experimental protocol generation, these systems enable a more rapid, data-driven funnel from billions of virtual compounds to a shortlist of high-probability candidates for physical assay testing.

Table 1: Comparative Performance Metrics of Traditional vs. Multi-Agent-Accelerated Screening

| Metric | Traditional Workflow | MAS-Accelerated Workflow | Improvement |
|---|---|---|---|
| Initial Library Triage Time | 4-6 weeks | 3-5 days | ~85% reduction |
| Compounds Screened per Week (in silico) | 10,000 - 50,000 | 500,000 - 2,000,000+ | 50x increase |
| Hit Rate from HTS | 0.01% - 0.1% | 0.1% - 1.5% (enriched libraries) | ~10x increase |
| Primary-to-Secondary Assay Turnaround | 2-3 weeks | 2-4 days | ~80% reduction |
| Manual Data Curation Hours per Project | 120-200 hours | 15-30 hours | ~85% reduction |

Table 2: Multi-Agent System Configuration for Drug Screening

| Agent Name | Primary Function | Key Tools/Modules | Output |
|---|---|---|---|
| Query Analyst | Parses research question & defines goals | LLM (e.g., GPT-4, Claude), task-specific prompts | Structured screening hypothesis & parameters |
| Knowledge Synthesizer | Extracts & summarizes target & disease data | PubMed/Patents APIs, bio-ontologies, text summarization | Integrated target profile & pathway map |
| Chemoinformatician | Designs & filters virtual compound libraries | ZINC, ChEMBL, RDKit, SMILES processors, filters (Lipinski, etc.) | Curated virtual library (SMILES) |
| Docking Specialist | Executes molecular docking simulations | AutoDock Vina, GROMACS (partial), PDB access | Ranked docking scores & poses |
| ADMET Predictor | Predicts pharmacokinetics & toxicity | ADMET prediction models (e.g., pkCSM, DeepTox) | ADMET property table with flags |
| Protocol Generator | Designs in vitro experimental plans | ELN templates, reagent databases, SOP libraries | Ready-to-use experimental protocols |
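The agent roster in Table 2 can be read as a sequential pipeline in which each agent consumes earlier outputs from a shared state. The Python sketch below illustrates only that hand-off pattern; the `Agent` and `Pipeline` classes, the state keys, and the stub functions are hypothetical stand-ins for LLM and tool calls, not the API of LangChain, AutoGen, or any other framework. Only the first four agents are shown; the ADMET Predictor and Protocol Generator would be appended in the same way.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Shared blackboard passed between agents (keys are illustrative, not a framework API).
State = Dict[str, object]


@dataclass
class Agent:
    name: str
    run: Callable[[State], State]  # reads upstream outputs, returns new outputs


@dataclass
class Pipeline:
    agents: List[Agent]
    log: List[str] = field(default_factory=list)

    def execute(self, state: State) -> State:
        for agent in self.agents:
            outputs = agent.run(state)
            # Merge into a new dict so earlier snapshots are never mutated.
            state = {**state, **outputs}
            self.log.append(f"{agent.name}: produced {sorted(outputs)}")
        return state


# Hypothetical stubs; in a real system each would wrap an LLM prompt or a tool call.
def query_analyst(s: State) -> State:
    return {"hypothesis": f"inhibit {s['target']}", "max_violations": 1}


def knowledge_synthesizer(s: State) -> State:
    return {"target_profile": {"gene": "PRKCQ", "pathways": ["NF-kB"]}}


def chemoinformatician(s: State) -> State:
    return {"library": ["cmpd-001", "cmpd-002"]}


def docking_specialist(s: State) -> State:
    return {"docked": [(cid, -9.5) for cid in s["library"]]}


pipeline = Pipeline([
    Agent("Query Analyst", query_analyst),
    Agent("Knowledge Synthesizer", knowledge_synthesizer),
    Agent("Chemoinformatician", chemoinformatician),
    Agent("Docking Specialist", docking_specialist),
])

if __name__ == "__main__":
    final = pipeline.execute({"target": "PKC-theta"})
    print("\n".join(pipeline.log))
```

Keeping the state immutable between steps makes each agent's contribution auditable, which matters when a downstream result has to be traced back to the inputs that produced it.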

Detailed Experimental Protocols

Protocol 1: Multi-Agent-Driven In Silico Screening Cascade

Objective: To autonomously identify and prioritize lead candidates against a novel kinase target (e.g., PKC-θ) from a large-scale virtual library.

Materials: Multi-agent platform (e.g., LangChain, AutoGen, or custom), computational infrastructure (CPU/GPU cluster), target PDB file (e.g., 3D structure of PKC-θ), virtual compound database (e.g., Enamine REAL Space subset).

Methodology:

Workflow Initiation & Target Profiling:

- The Query Analyst agent receives the natural language query: "Identify potent and selective small-molecule inhibitors of protein kinase C-theta (PKC-θ) with favorable oral bioavailability."

- The Knowledge Synthesizer agent is deployed. It queries PubMed and UniProt via API to compile key data: official gene name (PRKCQ), relevant signaling pathways (T-cell activation, NF-κB), known active sites, and published inhibitor chemotypes.

- Output: A consolidated target profile document.
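The Knowledge Synthesizer's retrieval step can be sketched with public REST endpoints. The Python example below only builds query URLs for the NCBI E-utilities `esearch` endpoint and the UniProt REST search endpoint; the query strings, the returned field list, and the `fetch_json` helper are assumptions to check against the current NCBI and UniProt documentation. In practice NCBI rate limits (and an API key for heavier use) also apply.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

EUTILS_ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"
UNIPROT_SEARCH = "https://rest.uniprot.org/uniprotkb/search"


def pubmed_search_url(term: str, retmax: int = 50) -> str:
    """Build an esearch URL that returns matching PubMed IDs as JSON."""
    params = {"db": "pubmed", "term": term, "retmax": retmax, "retmode": "json"}
    return f"{EUTILS_ESEARCH}?{urlencode(params)}"


def uniprot_search_url(gene: str, taxon_id: int = 9606) -> str:
    """Build a UniProtKB search URL for reviewed entries of one gene (9606 = human)."""
    query = f"gene_exact:{gene} AND organism_id:{taxon_id} AND reviewed:true"
    params = {"query": query, "format": "json",
              "fields": "accession,gene_names,protein_name"}
    return f"{UNIPROT_SEARCH}?{urlencode(params)}"


def fetch_json(url: str, timeout: float = 30.0) -> dict:
    """Perform the HTTP GET and decode JSON (network access required)."""
    with urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Only the URLs are printed; call fetch_json() to actually query the services.
    print(pubmed_search_url("PRKCQ[tiab] AND inhibitor"))
    print(uniprot_search_url("PRKCQ"))
```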

Virtual Library Curation & Preparation:

- The Chemoinformatician agent is triggered. It accesses a predefined subset of the Enamine REAL database (approx. 10 million compounds).

- It applies a series of progressive filters: (a) rule-based filters (Lipinski's Rule of Five and removal of pan-assay interference compounds, PAINS); (b) a structure-based similarity search against the known active scaffolds identified by the Knowledge Synthesizer.

- Output: A refined library of 150,000 compounds in prepared 3D format (SDF).
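The two filter stages can be sketched in pure Python. The example assumes that descriptors (molecular weight, cLogP, hydrogen-bond donors and acceptors) and fingerprint bits have already been computed, for instance with a cheminformatics toolkit such as RDKit. The `Compound` record, the one-violation allowance, and the 0.4 Tanimoto cut-off are illustrative assumptions, and the PAINS substructure filter is omitted.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Sequence


@dataclass(frozen=True)
class Compound:
    smiles: str
    mol_weight: float            # Da
    clogp: float
    h_donors: int
    h_acceptors: int
    fingerprint: FrozenSet[int]  # indices of set bits (e.g. from a circular fingerprint)


def lipinski_violations(c: Compound) -> int:
    """Count Rule-of-Five violations: MW > 500, cLogP > 5, HBD > 5, HBA > 10."""
    return sum((c.mol_weight > 500, c.clogp > 5, c.h_donors > 5, c.h_acceptors > 10))


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """|A & B| / |A | B| for bit-index sets."""
    if not a and not b:
        return 0.0
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)


def filter_library(library: Sequence[Compound],
                   actives: Sequence[FrozenSet[int]],
                   max_violations: int = 1,
                   min_similarity: float = 0.4) -> List[Compound]:
    kept = []
    for c in library:
        # Stage (a): rule-based drug-likeness filter.
        if lipinski_violations(c) > max_violations:
            continue
        # Stage (b): keep compounds resembling at least one known active scaffold.
        best = max((tanimoto(c.fingerprint, fp) for fp in actives), default=0.0)
        if best >= min_similarity:
            kept.append(c)
    return kept
```

Taking the maximum similarity over all known actives keeps any compound that resembles at least one reference scaffold, rather than requiring similarity to their average.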

Molecular Docking & Scoring:

- The Docking Specialist agent retrieves the target PDB file (e.g., 3A4W) and prepares it (remove water, add hydrogens, define binding grid).

- It executes high-throughput docking using AutoDock Vina across a distributed compute cluster, docking each prepared ligand.

- It ranks all compounds by docking score (kcal/mol) and clusters top poses.

- Output: A ranked list of the top 5,000 compounds with docking scores < -9.0 kcal/mol.
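The docking and ranking steps can be sketched as follows. The command-line flags used here (`--receptor`, `--ligand`, `--center_x/y/z`, `--size_x/y/z`, `--exhaustiveness`, `--out`) are AutoDock Vina options, but the file names, grid centre, and box size are placeholders, and the helper names are hypothetical. Each command would be dispatched to the cluster (for example via `subprocess.run(cmd, check=True)` on a worker node) and the best score per ligand collected before ranking.

```python
from typing import Dict, List, Sequence, Tuple


def vina_command(receptor: str, ligand: str, out: str,
                 center: Sequence[float], size: Sequence[float],
                 exhaustiveness: int = 8) -> List[str]:
    """Argument list for one AutoDock Vina run on PDBQT inputs."""
    cx, cy, cz = center
    sx, sy, sz = size
    return ["vina", "--receptor", receptor, "--ligand", ligand,
            "--center_x", str(cx), "--center_y", str(cy), "--center_z", str(cz),
            "--size_x", str(sx), "--size_y", str(sy), "--size_z", str(sz),
            "--exhaustiveness", str(exhaustiveness), "--out", out]


def rank_hits(scores: Dict[str, float], cutoff: float = -9.0,
              top_n: int = 5000) -> List[Tuple[str, float]]:
    """Keep compounds scoring below `cutoff` (kcal/mol), most negative first."""
    passing = [(cid, s) for cid, s in scores.items() if s < cutoff]
    passing.sort(key=lambda item: item[1])
    return passing[:top_n]


if __name__ == "__main__":
    cmd = vina_command("receptor.pdbqt", "ligand_0001.pdbqt", "ligand_0001_out.pdbqt",
                       center=(10.0, 12.5, -3.0), size=(20, 20, 20))
    print(" ".join(cmd))
    print(rank_hits({"c1": -10.2, "c2": -8.5, "c3": -9.4}))
```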

ADMET Prioritization:

- The ADMET Predictor agent receives the top 5,000 compounds. It runs ensemble predictions for key properties: Caco-2 permeability, hepatic microsomal stability, hERG inhibition, and Ames mutagenicity.

- It applies pass/fail thresholds and weights scores to generate a composite "drug-likeness" score.

- Output: A final prioritized list of 200-500 compounds with docking scores, predicted IC50, and ADMET profiles.
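The "pass/fail thresholds plus weighted composite" logic can be sketched as below. The property scales, the 0.5 probability limits for hERG and Ames liabilities, the docking-score rescaling window (-12 to -9 kcal/mol), and the weights are all hypothetical; real cut-offs depend on the prediction models used and the project's risk tolerance.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AdmetPrediction:
    compound_id: str
    docking_score: float         # kcal/mol; more negative is better
    caco2: float                 # 0-1, permeability already rescaled (assumed)
    microsomal_stability: float  # 0-1, fraction remaining (assumed)
    herg_prob: float             # 0-1, predicted probability of hERG inhibition
    ames_prob: float             # 0-1, predicted probability of Ames positivity


# Hypothetical pass/fail limits: liability probabilities must stay below these.
HARD_LIMITS = {"herg_prob": 0.5, "ames_prob": 0.5}
# Hypothetical weights for the soft composite "drug-likeness" score.
WEIGHTS = {"potency": 0.4, "caco2": 0.3, "microsomal_stability": 0.3}


def passes_hard_filters(p: AdmetPrediction) -> bool:
    return all(getattr(p, key) < limit for key, limit in HARD_LIMITS.items())


def potency_term(score: float, best: float = -12.0, worst: float = -9.0) -> float:
    """Rescale a docking score to 0-1: `worst` -> 0.0, `best` -> 1.0, clipped."""
    frac = (worst - score) / (worst - best)
    return min(1.0, max(0.0, frac))


def composite_score(p: AdmetPrediction) -> float:
    return (WEIGHTS["potency"] * potency_term(p.docking_score)
            + WEIGHTS["caco2"] * p.caco2
            + WEIGHTS["microsomal_stability"] * p.microsomal_stability)


def prioritize(preds: List[AdmetPrediction], keep: int = 500) -> List[AdmetPrediction]:
    """Drop hard-filter failures, then rank the survivors by composite score."""
    survivors = [p for p in preds if passes_hard_filters(p)]
    survivors.sort(key=composite_score, reverse=True)
    return survivors[:keep]
```

Applying the safety liabilities as hard filters before weighting keeps a strong docking score from compensating for a likely hERG or mutagenicity flag.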

Protocol Generation for Validation:

- The Protocol Generator agent, using the final list, drafts a step-by-step in vitro assay protocol for a kinase inhibition assay (e.g., ADP-Glo Kinase Assay) including suggested concentrations, controls, and reagent calculations.
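The reagent calculations in such a draft are plain arithmetic that an agent should delegate to code rather than generate as free text. The sketch below assumes the layout used in Protocol 2 (a 10-point, 3-fold series with a 10 µM top concentration, 2.5 µL of compound added to a 10 µL reaction, and 1% final DMSO); the function names are hypothetical.

```python
from typing import List


def dilution_series(top_nM: float, fold: float, points: int) -> List[float]:
    """Final in-well concentrations for a serial dilution, highest first."""
    return [top_nM / fold ** i for i in range(points)]


def working_concentration(final: float, addition_uL: float, reaction_uL: float) -> float:
    """Concentration the added aliquot needs so that it reaches `final` after mixing."""
    return final * reaction_uL / addition_uL


if __name__ == "__main__":
    finals = dilution_series(top_nM=10_000, fold=3, points=10)
    # 2.5 µL of compound into a 10 µL reaction, so working solutions are 4x final.
    for c in finals:
        print(f"final {c:10.3f} nM   working {working_concentration(c, 2.5, 10.0):10.3f} nM")
    # The same arithmetic gives the DMSO content needed in the compound aliquot.
    print(f"DMSO in aliquot: {working_concentration(1.0, 2.5, 10.0):.1f}% for 1% final")
```

Under these assumptions the lowest point is 10 µM / 3^9 ≈ 0.51 nM, the top working solution is 40 µM, and the compound aliquot must contain 4% DMSO.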

Diagram: Multi-Agent Drug Screening Workflow

Protocol 2: In Vitro Kinase Inhibition Assay for MAS-Identified Hits

Objective: To experimentally validate the inhibitory activity of the top 10 compounds prioritized by the multi-agent system against recombinant PKC-θ kinase.

Research Reagent Solutions & Essential Materials:

| Item | Function/Brief Explanation |
|---|---|
| Recombinant Human PKC-θ Kinase Domain | Catalytic component of the target for the biochemical assay. |
| ADP-Glo Kinase Assay Kit | Luminescent kit that measures kinase activity by quantifying ADP production; high sensitivity. |
| PKC Substrate Peptide | Peptide substrate phosphorylated by PKC-θ (e.g., derived from the MARCKS protein) to ensure assay relevance. |
| DMSO (Cell Culture Grade) | Universal solvent for dissolving small-molecule inhibitor compounds. |
| Reference Inhibitor (e.g., Staurosporine) | Broad-spectrum kinase inhibitor used as a positive control for inhibition. |
| White, Flat-Bottom 384-Well Assay Plates | Optimal plate type for luminescence readings with minimal crosstalk. |
| Multidrop Combi Reagent Dispenser | For rapid, consistent dispensing of kinase, peptide, and ATP solutions. |
| Plate Reader (Luminometer Capable) | Measures the luminescent signal from the ADP-Glo detection reaction. |

Methodology:

- Compound Preparation: Serially dilute the 10 test compounds and the reference inhibitor in DMSO, then dilute further in kinase assay buffer to create a 10-point dose-response series (e.g., 3-fold steps from 10 µM down to ~0.5 nM final concentration). Keep the final DMSO concentration constant (e.g., 1%).

- Assay Assembly: In a 384-well plate, add 2.5 µL of compound (or buffer control) per well. Add 5 µL of the kinase/substrate peptide mixture (pre-mixed per kit instructions). Initiate the reaction by adding 2.5 µL of ATP solution (at its apparent Km, determined beforehand).

- Incubation & Detection: Incubate the plate at 25°C for 60 minutes. Add 10 µL of ADP-Glo Reagent (1:1 with the 10 µL kinase reaction) to terminate the reaction and deplete the remaining ATP, then incubate for 40 minutes. Finally, add 20 µL of Kinase Detection Reagent (2:1 with the kinase reaction volume) to convert the ADP produced into ATP, which is detected via the luciferase/luciferin reaction. Incubate for 30 minutes before reading.

- Data Acquisition & Analysis: Read luminescence on a plate reader. Normalize data: 0% inhibition = DMSO-only control wells (no compound); 100% inhibition = wells with reference inhibitor at saturating concentration. Fit normalized dose-response data to a four-parameter logistic curve to determine IC50 values for each compound.
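The normalization and four-parameter logistic fit can be sketched with NumPy and SciPy (`scipy.optimize.curve_fit`), assuming both are installed. The model below is parameterized in log10 concentration; the function names, the starting guesses, and the synthetic control signals in the example are illustrative assumptions, not values from a real plate.

```python
import numpy as np
from scipy.optimize import curve_fit


def percent_inhibition(signal, dmso_mean: float, ref_mean: float) -> np.ndarray:
    """0% = DMSO-only wells (full activity), 100% = saturating reference inhibitor."""
    signal = np.asarray(signal, dtype=float)
    return 100.0 * (dmso_mean - signal) / (dmso_mean - ref_mean)


def four_pl(log_conc, bottom, top, log_ic50, hill):
    """Four-parameter logistic in log10(concentration); rises from bottom to top."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ic50 - log_conc) * hill))


def fit_ic50(conc_nM, inhibition):
    """Fit the 4PL model and return (IC50 in nM, Hill slope)."""
    log_c = np.log10(np.asarray(conc_nM, dtype=float))
    y = np.asarray(inhibition, dtype=float)
    p0 = [y.min(), y.max(), float(np.median(log_c)), 1.0]  # rough starting guesses
    params, _cov = curve_fit(four_pl, log_c, y, p0=p0, maxfev=10000)
    _bottom, _top, log_ic50, hill = params
    return 10.0 ** log_ic50, hill


if __name__ == "__main__":
    conc = 10_000.0 / 3.0 ** np.arange(10)          # 10 µM top, 3-fold steps (nM)
    dmso, ref = 50_000.0, 2_000.0                   # synthetic control means (RLU)
    true_inh = four_pl(np.log10(conc), 0.0, 100.0, 2.0, 1.0)  # IC50 = 100 nM
    signal = dmso - true_inh / 100.0 * (dmso - ref)
    ic50, hill = fit_ic50(conc, percent_inhibition(signal, dmso, ref))
    print(f"IC50 = {ic50:.1f} nM, Hill slope = {hill:.2f}")
```

Fitting in log concentration keeps the IC50 parameter on a scale comparable to the other parameters, which tends to make the optimizer better behaved than fitting the raw concentration.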

Diagram: PKC-θ Signaling & Assay Principle

Beyond the Hype: Solving Hallucination, Reliability, and Efficiency Challenges

Within the broader thesis on LLM agents for automated materials and drug discovery workflows, the "hallucination" problem—where models generate plausible but factually incorrect or unsupported content—poses a critical risk. This document outlines Application Notes and Protocols to detect, mitigate, and prevent hallucinations in AI-generated scientific outputs, ensuring reliability in automated research pipelines.

Application Notes: Current Landscape & Quantitative Analysis

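Reported mitigation strategies and their efficacy are summarized below. The reduction figures are indicative only and vary considerably with model, domain, and evaluation protocol.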

Table 1: Quantitative Performance of Hallucination Mitigation Techniques in Scientific Domains

| Technique Category | Representative Tool/Method | Reported Reduction in Hallucination Rate | Benchmark/Dataset Used | Key Limitation |
|---|---|---|---|---|
| Retrieval-Augmented Generation (RAG) | PubMed-RAG, Custom Knowledge Graph QA | 40-60% reduction vs. base LLM | SciFact, PubMedQA | Dependent on source quality & retrieval accuracy |
| Self-Consistency & Verification | Chain-of-Verification (CoVe), SelfCheckGPT | 25-35% reduction | HotpotQA, ExpertQA | Computationally expensive; can propagate errors |
| Tool-Augmented Agents | MRKL Systems, LangChain Tools | 50-70% reduction for numerical tasks | MATH, TabMWP | Requires precise tool descriptions/APIs |
| Prompt Engineering | Few-Shot Factual Prompting, "Step-by-Step" Reasoning | 15-25% reduction | TruthfulQA, BioASQ | Inconsistent across model types & domains |
| Post-Hoc Fact-Checking | FactScore, Google Search Verification | Up to 80% reduction for factual statements | FACTOR, WikiBio | Slow; requires external verification source |

Table 2: Common Hallucination Types in Scientific LLM Outputs

| Hallucination Type | Frequency in Materials/Drug Discovery Outputs | Example | Potential Impact |
|---|---|---|---|
| Factual Fabrication | High (~30% of unchecked claims) | Inventing non-existent protein-protein interactions | Failed experimental validation; wasted resources |
| Citation Fabrication | Very High (>40%) | Generating plausible but fake DOI references | Loss of credibility; integrity issues |
| Numerical Inconsistency | Moderate (~20%) | Incorrectly calculating molecular weight or binding affinity | Flawed experimental design |
| Logical Incoherence | Low-Moderate (~15%) | Contradictory steps in a proposed synthetic pathway | Uninterpretable protocols |
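Numerical inconsistencies such as a miscalculated molecular weight are where tool augmentation helps most: the agent calls deterministic code instead of doing arithmetic in generated text. The Python sketch below parses flat molecular formulas (no parentheses, charges, or isotopes) using rounded standard atomic weights for a small subset of elements; the `check_claim` helper and its 0.5 Da tolerance are hypothetical.

```python
import re
from typing import Tuple

# Rounded standard atomic weights (g/mol) for a small subset of elements.
ATOMIC_WEIGHTS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "F": 18.998,
                  "P": 30.974, "S": 32.06, "Cl": 35.45, "Br": 79.904, "I": 126.904}
TOKEN = re.compile(r"([A-Z][a-z]?)(\d*)")


def molecular_weight(formula: str) -> float:
    """Average molecular weight of a flat formula such as 'C8H10N4O2'."""
    if not formula:
        raise ValueError("empty formula")
    total, position = 0.0, 0
    for match in TOKEN.finditer(formula):
        if match.start() != position:  # unparsed characters between tokens
            raise ValueError(f"cannot parse {formula!r} at index {position}")
        element, count = match.group(1), match.group(2)
        if element not in ATOMIC_WEIGHTS:
            raise ValueError(f"unsupported element: {element}")
        total += ATOMIC_WEIGHTS[element] * (int(count) if count else 1)
        position = match.end()
    if position != len(formula):  # trailing characters the pattern did not consume
        raise ValueError(f"cannot parse {formula!r} at index {position}")
    return total


def check_claim(formula: str, claimed_mw: float, tol: float = 0.5) -> Tuple[str, float]:
    """Return a verdict for an LLM-stated molecular weight plus the computed value."""
    actual = molecular_weight(formula)
    verdict = "TRUE" if abs(actual - claimed_mw) <= tol else "FALSE"
    return verdict, round(actual, 2)
```

For example, `check_claim("C8H10N4O2", 180.16)` (caffeine with a wrong stated weight) returns `("FALSE", 194.19)`, giving the agent both the verdict and the corrected value.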

Protocols for Hallucination Mitigation

Protocol 3.1: Implementing a Multi-Stage RAG System for Literature Synthesis

Objective: Generate accurate, sourced summaries of scientific literature on a target (e.g., a kinase inhibitor).

Materials & Workflow:

- Query Decomposition Agent: Breaks a complex query (e.g., "role of p38 MAPK in fibrosis") into sub-queries.

- Retriever: Searches trusted databases (PubMed, arXiv, patents) via API using the sub-queries.

- Reranker: Uses a cross-encoder model (e.g., BAAI/bge-reranker-large) to rank the retrieved snippets by relevance.

- Generator: An LLM (e.g., GPT-4) instructed to answer only from the provided contexts, inserting citation placeholders.

- Citation Linker: Matches each placeholder statement to the exact source snippet and DOI.

Validation Step: Any statement without a high-confidence source match is flagged for human review.
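The Citation Linker and the validation step can be sketched as follows. Word overlap between a statement and its cited snippet is used here as a crude, assumed stand-in for a "high-confidence match"; a production system would more likely use an entailment or cross-encoder score. The `[n]` placeholder format, the 0.6 threshold, and the example DOI are illustrative placeholders.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Tuple

PLACEHOLDER = re.compile(r"\[(\d+)\]")
STRIP = re.compile(r"\s*\[\d+\]")
WORD = re.compile(r"[a-z0-9]+")


@dataclass
class LinkedStatement:
    text: str
    sources: List[str]   # DOIs whose snippets support the statement
    support: float       # best overlap score found, 0-1
    needs_review: bool


def _tokens(text: str) -> set:
    return set(WORD.findall(text.lower()))


def support_score(statement: str, snippet: str) -> float:
    """Fraction of the statement's words that also occur in the snippet."""
    words = _tokens(statement)
    return len(words & _tokens(snippet)) / len(words) if words else 0.0


def link_citations(statements: List[str], snippets: Dict[str, Tuple[str, str]],
                   threshold: float = 0.6) -> List[LinkedStatement]:
    """`snippets` maps a placeholder number to (DOI, retrieved snippet text)."""
    linked = []
    for stmt in statements:
        clean = STRIP.sub("", stmt).strip()
        best, dois = 0.0, []
        for ref in PLACEHOLDER.findall(stmt):
            if ref not in snippets:
                continue  # placeholder points at nothing: leave it unsupported
            doi, text = snippets[ref]
            score = support_score(clean, text)
            best = max(best, score)
            if score >= threshold:
                dois.append(doi)
        # Statements with no sufficiently supported source go to human review.
        linked.append(LinkedStatement(clean, dois, best, needs_review=not dois))
    return linked
```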

Diagram: RAG System Workflow for Factual Synthesis

Protocol 3.2: Agent-Based Fact-Checking Protocol for Experimental Protocols

Objective: Verify an AI-generated protocol for "Cell Viability Assay with Compound X."

Step-by-Step:

- Statement Extraction: Parse the generated protocol into individual factual claims (materials, concentrations, timings, steps).

- Agent Dispatch: Route the claims to specialist agents:

  - Numerical Agent: Checks molarity, units, and incubation times against domain-specific rules (e.g., concentrations within [0-1000] µM).

  - Safety Agent: Cross-references chemical names with safety databases (e.g., PubChem) for hazardous incompatibilities.

  - Protocol Agent: Retrieves the three most similar published protocols from repositories (e.g., Protocols.io, Nature Methods).

- Claim Verification: Each agent returns [TRUE], [FALSE], or [UNCERTAIN] with supporting evidence.

- Consolidation & Rewrite: A supervisor LLM consolidates the feedback and rewrites the protocol, highlighting corrected items.
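The Numerical Agent's range check can be sketched without an LLM at all. The Python example below extracts concentrations with a regular expression, converts them to µM, and returns TRUE/FALSE/UNCERTAIN verdicts with evidence strings; the regex, the unit table, and the 0-1000 µM window are illustrative assumptions, and a units library such as Pint would be more robust in practice.

```python
import re
from typing import List, Tuple

# Conversion factors to micromolar; both the micro sign and Greek mu are accepted.
TO_UM = {"nM": 1e-3, "uM": 1.0, "µM": 1.0, "μM": 1.0, "mM": 1e3, "M": 1e6}
CONC = re.compile(r"(\d+(?:\.\d+)?)\s*(nM|uM|µM|μM|mM|M)\b")


def check_concentrations(text: str, low_um: float = 0.0,
                         high_um: float = 1000.0) -> List[Tuple[str, str, str]]:
    """Return (claim, verdict, evidence) for every concentration found in `text`."""
    verdicts = []
    for value, unit in CONC.findall(text):
        um = float(value) * TO_UM[unit]
        verdict = "TRUE" if low_um <= um <= high_um else "FALSE"
        evidence = f"{um:g} µM vs allowed range [{low_um:g}, {high_um:g}] µM"
        verdicts.append((f"{value} {unit}", verdict, evidence))
    if not verdicts:
        # Nothing checkable: defer to the supervisor rather than guess.
        verdicts.append(("(no concentration found)", "UNCERTAIN", "nothing to check"))
    return verdicts
```

Returning UNCERTAIN when no quantity is found, rather than TRUE, keeps silent failures of the extractor from being read as a pass by the supervisor LLM.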

Diagram: Multi-Agent Fact-Checking Workflow for Protocols

The Scientist's Toolkit: Research Reagent Solutions for Validation

Table 3: Essential Tools for Building Hallucination-Resistant Scientific LLM Systems

| Item/Category | Specific Example/Tool | Function in Mitigating Hallucinations |
|---|---|---|
| Trusted Knowledge Sources | PubMed API, Springer Nature API, USPTO Patent API | Provides ground-truth, vetted scientific data for RAG systems. |
| Specialized Embedding Models | allenai/specter2, BAAI/bge-large-en-v1.5 | Encodes scientific text for accurate semantic retrieval. |
| Fact-Checking APIs | Google Fact Check Tools API, EBI's RDF platform | Enables real-time verification of factual claims. |
| Chemical Safety DBs | PubChem PUG-REST API, CAS Common Chemistry | Validates chemical identities, properties, and safety data. |
| Numerical & Unit Checkers | Pint (Python library), quantulum3 | Parses and validates physical quantities and units. |
| Citation-Graph Tools | Scite.ai API, OpenCitations Index | Checks the existence and context of references. |
| Benchmark Datasets | SciFact, PubMedQA, BioASQ | Evaluates the factual accuracy of generated outputs. |

Visualization: Integrated LLM Agent System with Hallucination Guards