Accelerating Discovery: How Active Learning with Foundation Models is Transforming Materials Science and Drug Development

Abstract

This article explores the paradigm shift in materials discovery driven by the integration of active learning and large-scale foundation models. We first establish the foundational concepts of machine learning for materials science and the core principles of active learning cycles. We then detail methodological workflows, from framing discovery queries to model training and experimental design. Practical challenges, including data scarcity, model bias, and integration with high-throughput robotics, are addressed with troubleshooting strategies. Finally, we examine validation protocols, compare key frameworks like Bayesian optimization and deep ensembles, and present benchmark case studies in catalysis and polymer design. This comprehensive guide provides researchers and drug development professionals with actionable insights to leverage these powerful, data-efficient AI techniques.

From Data to Design: Core Principles of Foundation Models and Active Learning in Materials Science

In the context of a broader thesis on active learning for materials discovery, foundation models (FMs) are large-scale machine learning models pre-trained on vast, diverse datasets of materials science information. They encode fundamental relationships between composition, structure, properties, and synthesis. When integrated into active learning loops, these models act as powerful, general-purpose priors, drastically accelerating the iterative "propose-candidate → predict-property → select-experiment" cycle by suggesting promising, novel materials for exploration.

Comparative Analysis of Key Foundation Models

Table 1: Comparative Summary of Featured Materials Foundation Models

| Model Name (Primary Reference) | Core Architecture | Primary Training Data & Scale | Key Output/Capability | Primary Domain Application |

|---|---|---|---|---|

| GPT-type Models (e.g., ChatGPT, GPT-4 adapted for materials) | Transformer Decoder | Massive text corpora (incl. scientific literature, patents, databases). Scale: ~1T+ tokens. | Text generation, instruction following, knowledge Q&A on materials. | Literature synthesis, hypothesis generation, experiment planning, knowledge retrieval. |

| GNoME (Google DeepMind, 2023) | Graph Neural Network (GNN) | The Materials Project, ICSD, OQMD. Reported output scale: ~2.2 million predicted crystal structures, of which roughly 380,000 lie on the updated convex hull. | Stability prediction, crystal structure generation, discovery of novel stable materials. | High-throughput ab initio discovery of inorganic crystals. |

| MatSciBERT (Gupta et al., 2022) | Transformer Encoder (BERT) | ~2.68M materials science abstracts from arXiv, PubMed Central, etc. Scale: 110M parameters. | Text embeddings, named entity recognition, relation extraction from text. | Mining unstructured literature for materials knowledge (e.g., synthesis conditions, property data). |

Application Notes & Experimental Protocols

Application Note 1: Integrating GNoME into an Active Learning Loop for Novel Solid-State Electrolyte Discovery

- Objective: To discover novel, lithium-ion conducting solid electrolytes with high stability.

- Active Learning Framework:

- Initial Seed: Start with a database of known solid electrolytes (e.g., from Materials Project).

- Candidate Proposal (GNoME as Proposer): Use GNoME to generate novel, thermodynamically stable crystal structures within a constrained chemical space (e.g., Li-M-X where M=metal, X=O, S, P, Cl...).

- Property Prediction (Surrogate Model): Pass proposed candidates through a specialized, fine-tuned property predictor for Li-ion conductivity (e.g., a GNN trained on DFT-calculated migration barriers).

- Acquisition & Selection: Use an acquisition function (e.g., expected improvement) on the predicted conductivity/stability Pareto front to select the most promising candidates for the next step.

- Experiment/DFT Verification: Perform high-fidelity DFT calculations on the top-ranked candidates to verify stability and compute accurate ionic conductivity.

- Loop Closure: Add verified data (new stable materials, their properties) to the training set for both the surrogate model and to fine-tune GNoME, closing the active learning loop.

Protocol 1.1: High-Throughput DFT Validation of GNoME-Proposed Candidates

- Software: VASP (Vienna Ab initio Simulation Package) or similar DFT code.

- Workflow:

- Structure Import: Convert GNoME-generated CIF files into DFT input files.

- Relaxation: Perform geometric optimization (ionic + cell relaxation) using the PBE functional and a projector augmented-wave (PAW) pseudopotential library.

- Stability Analysis: a. Calculate the formation energy: Eform = Etotal - Σ(ni * μi), where μi are elemental chemical potentials referenced from standard phases. b. Compute the energy above the convex hull (Ehull). Candidates with Ehull ≤ 50 meV/atom are considered potentially stable.

- Property Calculation (Ionic Conductivity): a. Use the nudged elastic band (NEB) method to compute the Li-ion migration barrier (Ea). b. Estimate conductivity pre-factor via harmonic transition state theory.

- Key Parameters: Plane-wave cutoff (520 eV), k-point density (≥ 50/Å⁻³), convergence thresholds (energy < 10⁻⁵ eV, force < 0.01 eV/Å).
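The formation-energy expression and the hull cutoff from the stability step can be sketched as follows. This is a minimal illustration, not part of VASP or any DFT package: the function names, the per-atom normalization, and every numerical value (total energy, chemical potentials, hull energies) are hypothetical placeholders. In practice the energy above the hull would come from a phase-diagram tool rather than being supplied by hand.

```python
from typing import Dict


def formation_energy(e_total: float, counts: Dict[str, int], mu: Dict[str, float]) -> float:
    """E_form = E_total - sum_i(n_i * mu_i), in eV per formula unit."""
    return e_total - sum(n * mu[element] for element, n in counts.items())


def formation_energy_per_atom(e_total: float, counts: Dict[str, int], mu: Dict[str, float]) -> float:
    """Same quantity divided by the number of atoms (eV/atom)."""
    return formation_energy(e_total, counts, mu) / sum(counts.values())


def is_potentially_stable(e_hull_mev_per_atom: float, threshold: float = 50.0) -> bool:
    """Apply the E_hull <= 50 meV/atom screening rule from the protocol."""
    return e_hull_mev_per_atom <= threshold


# Hypothetical inputs for a Li3PS4-like cell (illustrative numbers only).
mu = {"Li": -1.90, "P": -5.40, "S": -4.10}   # elemental chemical potentials, eV/atom
counts = {"Li": 3, "P": 1, "S": 4}
e_total = -35.5                               # DFT total energy, eV

e_form = formation_energy_per_atom(e_total, counts, mu)
print(f"E_form = {e_form:.3f} eV/atom")
print("keep candidate:", is_potentially_stable(32.0))
```

Keeping the threshold as a parameter makes it easy to tighten or relax the screen without touching the rest of the workflow.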

Application Note 2: Using MatSciBERT for Automated Literature Mining to Inform Synthesis Protocols

- Objective: Extract synthesis parameters for a target material class (e.g., "halide perovskites") to guide experimental synthesis in an active learning campaign.

- Protocol 2.1: Named Entity Recognition (NER) for Synthesis Conditions

- Data Collection: Use the arXiv API to fetch recent abstracts and full-text preprints containing "halide perovskite" and "synthesis".

- Preprocessing: Clean text, split into sentences and tokens.

- Entity Extraction: Load a pre-trained MatSciBERT model fine-tuned on the matbert-ner dataset (entities include: MAT, PROP, SMT, SOS, DSC).

- Run Inference: Process the collected text to tag entities. Focus on SMT (synthesis method), SOS (starting materials/solvents), DSC (descriptors like temperature, time).

- Relation Extraction: Apply a rule-based or fine-tuned model to link the extracted material (MAT) entity to its associated SMT and DSC entities (e.g., link "CsPbBr₃" to "spin coating" and "150°C").

- Database Population: Structure extracted (material, synthesis method, parameter, value) tuples into a database for analysis and to set initial conditions for robotic synthesis.
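The rule-based variant of the relation-extraction step can be illustrated with a small sketch. It assumes the NER stage has already produced (text, label) pairs grouped by sentence, and it uses the simplest possible rule: entities that co-occur in one sentence are linked. The function name, record layout, and example sentences are hypothetical; no MatSciBERT API is called here.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

TaggedSpan = Tuple[str, str]  # (text span, entity label such as MAT, SMT, DSC)


def link_entities(sentences: List[List[TaggedSpan]]) -> List[Dict[str, object]]:
    """Link each MAT entity to the SMT and DSC entities in the same sentence."""
    records = []
    for spans in sentences:
        by_label = defaultdict(list)
        for text, label in spans:
            by_label[label].append(text)
        # Skip sentences that mention a material without any synthesis detail.
        if not (by_label["SMT"] or by_label["DSC"]):
            continue
        for material in by_label["MAT"]:
            records.append({
                "material": material,
                "methods": list(by_label["SMT"]),
                "descriptors": list(by_label["DSC"]),
            })
    return records


# Hypothetical NER output for two sentences.
tagged = [
    [("CsPbBr3", "MAT"), ("spin coating", "SMT"), ("150°C", "DSC")],
    [("MAPbI3", "MAT"), ("band gap", "PROP")],
]
records = link_entities(tagged)
print(records)
```

Sentence-level co-occurrence is a deliberately crude rule; it over-links when one sentence describes several materials, which is where a fine-tuned relation model would be the better choice.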

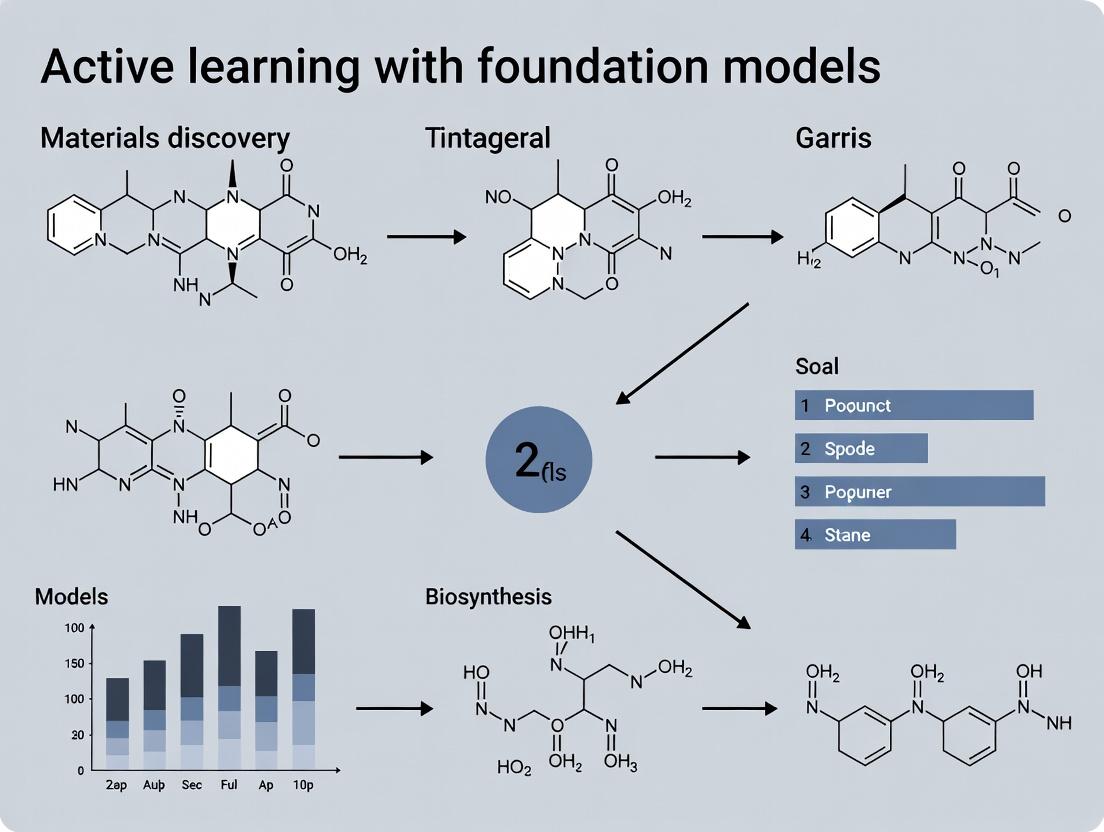

Visualization of Workflows

Active Learning Loop with GNoME for Discovery

MatSciBERT Model Training and Application Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Working with Materials Foundation Models

| Item/Category | Function in Research | Example/Format |

|---|---|---|

| Pre-trained Model Weights | The core foundation model parameters for inference or fine-tuning. | GNoME checkpoints (TensorFlow), MatSciBERT (Hugging Face m3rg-iitd/matscibert). |

| Materials Databases | Source of structured training data and validation benchmarks. | The Materials Project (API), OQMD, ICSD, COD. |

| High-Performance Computing (HPC) Cluster | Required for training large FMs and running high-throughput DFT validation. | CPU/GPU nodes with >1 PB storage, SLURM job manager. |

| DFT Software Suite | For first-principles validation of predicted structures and properties. | VASP, Quantum ESPRESSO, ABINIT. |

| Automated Experimentation (Robotics) Platform | To physically test synthesis and property hypotheses generated by the active learning loop. | Liquid-handling robots, automated spin coaters, high-throughput XRD. |

| Scientific Text Corpora | For training or fine-tuning language-based FMs like MatSciBERT. | arXiv API, PubMed Central, patents (USPTO bulk data). |

| Fine-tuning Datasets | Task-specific labeled data to adapt general FMs. | matbert-ner dataset (for materials NER). |

Active Learning (AL) is a machine learning paradigm where the algorithm selectively queries a human expert (or an expensive computational simulation) to label new data points. Within materials discovery and drug development, this approach is critical due to the high cost of experiments and simulations. Foundation models, pre-trained on vast chemical and materials datasets, serve as powerful priors, making the AL loop significantly more data-efficient for predicting properties like band gaps, ionic conductivity, or binding affinity.

Core Query Strategies: Protocols and Application Notes

Query strategies determine which unlabeled data points are most valuable for the model to learn from next. The choice of strategy depends on the balance between exploration (sampling uncertain regions) and exploitation (refining predictions in promising regions).

| Strategy | Core Metric | Best For | Computational Cost | Key Advantage |

|---|---|---|---|---|

| Uncertainty Sampling | Prediction entropy, margin, or least confidence. | Rapidly improving model accuracy on a specific property. | Low | Simple, intuitive, and effective for classification tasks. |

| Query-by-Committee (QBC) | Disagreement between an ensemble of models (variance). | Mitigating model bias and improving generalizability. | High (requires multiple models) | Reduces dependence on a single model's bias. |

| Expected Model Change | Magnitude of the gradient of the loss function if the point were labeled. | Steering foundation model fine-tuning efficiently. | Very High | Directly targets points that would change the model most. |

| Density-Weighted Methods | Combination of uncertainty and representativeness of the data manifold. | Discovering diverse leads and avoiding outliers. | Medium | Balances information gain with data coverage. |

Protocol 2.1: Implementing Uncertainty Sampling with a Foundation Model

Objective: Select the most uncertain materials from an unlabeled pool for DFT validation. Reagents & Tools: Pre-trained foundation model (e.g., Graph Neural Network for molecules/materials), unlabeled candidate pool, property predictor head.

- Fine-tune Foundation Model: On the current small labeled dataset (L), fine-tune the readout layer(s) of the foundation model for the target property (e.g., formation energy).

- Inference on Pool: For all candidates in the unlabeled pool (U), obtain the model's predictive probability distribution P(y|x).

- Calculate Entropy: For each candidate i, compute the prediction entropy: H(i) = -Σ P(y=k|x_i) * log P(y=k|x_i) across all possible property value bins k.

- Rank & Query: Rank candidates by entropy (highest to lowest). Present the top n (batch size) candidates to the human expert for labeling via DFT calculation.

- Update & Iterate: Add the newly labeled n samples to L, retrain/fine-tune the model, and repeat from step 2.
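The entropy calculation and ranking steps of this protocol can be sketched in a few lines. The sketch assumes the fine-tuned model has already produced a binned probability vector for each pool candidate; the function names and the example probabilities are hypothetical.

```python
import math
from typing import List, Sequence


def prediction_entropy(probs: Sequence[float]) -> float:
    """H = -sum_k p_k * log(p_k); bins with zero probability contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_most_uncertain(pool_probs: Sequence[Sequence[float]], n: int) -> List[int]:
    """Return the indices of the n pool candidates with the highest entropy."""
    scores = [prediction_entropy(p) for p in pool_probs]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:n]


# Hypothetical P(y|x) over three property bins for four unlabeled candidates.
pool_probs = [
    [0.98, 0.01, 0.01],  # confident prediction
    [0.34, 0.33, 0.33],  # nearly uniform, most uncertain
    [0.70, 0.20, 0.10],
    [0.50, 0.50, 0.00],
]
query = select_most_uncertain(pool_probs, n=2)
print(query)  # [1, 2]
```

The selected indices would then be sent to the DFT oracle, and the resulting labels appended to the labeled set before the next fine-tuning round.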

Protocol 2.2: Implementing Query-by-Committee for Materials Screening

Objective: Select candidates where an ensemble of models disagrees most, indicating high model uncertainty. Reagents & Tools: Multiple pre-trained model backbones (or one backbone with multiple random initializations), bootstrap-sampled training data.

- Committee Formation: Create a committee of M models (e.g., M=5). Each is the same foundation model architecture but fine-tuned on a bootstrap sample (with replacement) of the current labeled set L.

- Committee Vote: For each candidate in U, obtain property predictions from all M committee members.

- Measure Disagreement: For regression (e.g., predicting adsorption energy), compute the variance of the M predictions. For classification (e.g., stable/unstable), compute the entropy of the committee's vote distribution.

- Rank & Query: Rank candidates by disagreement measure (highest to lowest). The top n are selected for expert evaluation.

- Iterate: Update L and retrain the entire committee on the new data.
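The bootstrap and disagreement steps can be sketched as below. The committee members themselves are not modeled: the sketch assumes their predictions are already available, and the helper names and example values are hypothetical.

```python
import math
import random
from collections import Counter
from statistics import pvariance
from typing import List, Sequence


def bootstrap_indices(n_labeled: int, rng: random.Random) -> List[int]:
    """Draw a bootstrap sample (with replacement) of indices into the labeled set."""
    return rng.choices(range(n_labeled), k=n_labeled)


def regression_disagreement(predictions: Sequence[float]) -> float:
    """Variance of the M committee predictions for one candidate."""
    return pvariance(predictions)


def vote_entropy(votes: Sequence[str]) -> float:
    """Entropy of the committee's vote distribution for one candidate."""
    m = len(votes)
    return -sum((c / m) * math.log(c / m) for c in Counter(votes).values())


def rank_by_disagreement(scores: Sequence[float], n: int) -> List[int]:
    """Indices of the n candidates with the largest disagreement."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:n]


# Hypothetical adsorption-energy predictions (eV) from M = 5 members, 3 candidates.
committee_predictions = [
    [-0.50, -0.52, -0.49, -0.51, -0.50],
    [-0.20, -0.80, -0.45, -0.10, -0.95],
    [-1.10, -1.00, -1.20, -1.05, -1.15],
]
variances = [regression_disagreement(p) for p in committee_predictions]
query = rank_by_disagreement(variances, n=1)
print(query)  # [1]
```

For a classification task, `vote_entropy` would replace `regression_disagreement` as the score passed to `rank_by_disagreement`.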

The Human-in-the-Loop: Workflow and Integration

The human expert (scientist) is not just a labeler but a curator and validator within the loop.

Diagram Title: The Active Learning Loop for Materials Discovery

Protocol 3.1: Expert-in-the-Loop Curation Protocol

Objective: Integrate expert domain knowledge to override or complement query strategy selections.

- Pre-Screen Visualization: The n candidates selected by the AL query are presented to the expert via an interactive dashboard showing predicted properties, structural fingerprints, and nearest neighbors in the latent space.

- Expert Override: The expert can:

- Remove candidates deemed physically impossible or synthetically intractable based on heuristics (e.g., unrealistic bond lengths).

- Add candidates from the pool that the query missed but which are structurally or chemically analogous to promising leads (similarity-based augmentation).

- Final Batch Submission: The final, curated batch of candidates is sent for experimental validation or high-fidelity simulation.

- Feedback Logging: All expert actions (removals, additions) are logged to potentially train a future "expert preference" model.
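One way to represent the override and logging steps in code is sketched below. The `CurationLog` structure, the `curate_batch` function, and the candidate identifiers are all hypothetical; a real system would likely persist the log to a database rather than keep it in memory.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass
class CurationLog:
    """Record of expert actions, kept for a possible future preference model."""
    removed: List[str] = field(default_factory=list)
    added: List[str] = field(default_factory=list)


def curate_batch(selected: Iterable[str], remove: Iterable[str],
                 add: Iterable[str], log: CurationLog) -> List[str]:
    """Apply expert removals and additions to the AL-selected batch."""
    selected = list(selected)
    remove_set = set(remove)
    final = [c for c in selected if c not in remove_set]
    log.removed.extend(c for c in selected if c in remove_set)
    for candidate in add:
        if candidate not in final:  # avoid duplicating an already selected candidate
            final.append(candidate)
            log.added.append(candidate)
    return final


log = CurationLog()
batch = curate_batch(
    selected=["cand-01", "cand-02", "cand-03"],
    remove=["cand-02"],   # e.g., flagged for unrealistic bond lengths
    add=["cand-17"],      # structural analogue of a promising lead
    log=log,
)
print(batch, log)
```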

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Active Learning with Foundation Models

| Item / Solution | Function in the AL Pipeline | Example Tools / Libraries |

|---|---|---|

| Pre-trained Foundation Model | Provides a rich, general-purpose representation of chemical/materials space. | Matformer, CGCNN, Uni-Mol, ChemBERTa, GPT for Molecules. |

| Active Learning Framework | Implements query strategies, manages the labeled/unlabeled pools, and handles iteration. | modAL, ALiPy, Tesla (internal frameworks), custom scripts with scikit-learn. |

| High-Fidelity Simulator | Acts as the "oracle" or expert to label selected candidates (when experiments are not feasible). | VASP, Quantum ESPRESSO (DFT), GROMACS (MD), DOCK (molecular docking). |

| Data & Model Dashboard | Visualizes the AL progress, candidate structures, and model uncertainty for human experts. | Dash by Plotly, Streamlit, custom web apps with 3D mol viewers (3DMol.js). |

| Automated Workflow Manager | Connects the AL selector to simulation clusters and retrieves results. | FireWorks, AiiDA, next-generation LIMS (Laboratory Information Management System). |

| Representation Library | Converts material/molecule structures into model-ready inputs (graphs, descriptors). | pymatgen, RDKit, ASE (Atomic Simulation Environment). |

Performance Metrics & Evaluation Protocol

Evaluating the AL loop's efficiency is critical for benchmarking strategies.

Table 3: Key Quantitative Metrics for AL Performance

| Metric | Formula / Description | Interpretation |

|---|---|---|

| Learning Curve Area (LCA) | Area under the curve of model performance vs. number of labeled samples acquired. | For a score where higher is better (e.g., R², accuracy), higher LCA = faster learning; for an error metric (e.g., RMSE, MAE), lower LCA = faster learning. A good AL strategy reaches strong performance with few samples. |

| Discovery Rate | Number of "hits" (e.g., materials with target property > threshold) discovered per 100 queries. | Measures the success efficiency of the loop in finding viable candidates. |

| Average Uncertainty Reduction | Mean reduction in prediction entropy/variance across the pool U after each AL cycle. | Quantifies how effectively the loop reduces overall model uncertainty. |

| Expert Time Saved | (Number of random selections needed to reach target performance) - (Number of AL selections needed). | Estimates the practical resource savings conferred by the AL strategy. |
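Three of the metrics in the table can be computed from recorded learning curves as sketched here. The curves use R² (higher is better) and are invented for illustration; the function names and the trapezoidal integration are assumptions, not a prescribed implementation.

```python
from typing import Optional, Sequence


def learning_curve_area(n_labeled: Sequence[int], scores: Sequence[float]) -> float:
    """Trapezoidal area under the score vs. number-of-labels curve."""
    return sum(
        0.5 * (scores[i] + scores[i - 1]) * (n_labeled[i] - n_labeled[i - 1])
        for i in range(1, len(n_labeled))
    )


def discovery_rate(hits: int, queries: int) -> float:
    """Hits per 100 queries."""
    return 100.0 * hits / queries


def samples_to_reach(n_labeled: Sequence[int], scores: Sequence[float],
                     target: float) -> Optional[int]:
    """Smallest number of labels at which the score reaches the target, if ever."""
    for n, s in zip(n_labeled, scores):
        if s >= target:
            return n
    return None


# Hypothetical R^2 learning curves for an AL strategy and a random baseline.
n_labeled = [10, 20, 30, 40]
r2_al = [0.40, 0.70, 0.80, 0.85]
r2_random = [0.40, 0.55, 0.65, 0.72]

lca_al = learning_curve_area(n_labeled, r2_al)
lca_random = learning_curve_area(n_labeled, r2_random)
saved = samples_to_reach(n_labeled, r2_random, 0.70) - samples_to_reach(n_labeled, r2_al, 0.70)
print(lca_al, lca_random, saved, discovery_rate(hits=7, queries=50))
```

`samples_to_reach` returns `None` when a strategy never hits the target, so a real analysis should check for that case before subtracting.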

Protocol 5.1: Benchmarking AL Query Strategies

Objective: Objectively compare the performance of different query strategies for a given materials discovery task.

- Define Task & Dataset: Start with a fully labeled dataset (e.g., from a public database like Materials Project). Define the target property (e.g., bandgap).

- Simulate Initial State: Randomly select a small seed set L_0 (e.g., 5% of data) as the initial labeled pool. The remainder becomes the unlabeled pool U.

- Simulate AL Loop: For each query strategy S (e.g., Uncertainty, QBC, Random Sampling as baseline): a. At iteration t, train a model on L_t. b. Use strategy S to select a batch of b points from U, keeping their known labels hidden from both the model and the selection step. c. "Label" these points by revealing their stored labels and moving them from U to L_t to form L_{t+1}.

- Measure & Record: At each iteration, record the model's performance (e.g., RMSE) on a held-out test set.

- Repeat & Average: Repeat steps 2-4 multiple times with different random seeds for L_0. Average the learning curves.

- Analyze: Plot the average learning curves for all strategies. The strategy whose curve improves fastest (for an error metric such as RMSE, the curve that falls fastest) is most efficient for that task.
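The benchmarking steps can be sketched as a small, self-contained simulation. Everything here is a stand-in chosen to keep the example short: the dataset is a synthetic 1D function, the "model" is a 1-nearest-neighbor predictor, and the "uncertainty" strategy uses distance to the nearest labeled point as a proxy for model uncertainty. Pool labels are never read during selection.

```python
import math
import random


def target_property(x):
    """Synthetic ground truth standing in for a database property."""
    return math.sin(3 * x)


def predict_1nn(x, labeled):
    """Predict with the label of the nearest labeled point."""
    return min(labeled, key=lambda p: abs(p[0] - x))[1]


def rmse(labeled, test):
    return math.sqrt(sum((predict_1nn(x, labeled) - y) ** 2 for x, y in test) / len(test))


def select_random(pool, labeled, b, rng):
    return rng.sample(range(len(pool)), b)


def select_uncertain(pool, labeled, b, rng):
    # Uncertainty proxy: distance to the closest labeled input (features only).
    dist = [min(abs(x - lx) for lx, _ in labeled) for x, _ in pool]
    return sorted(range(len(pool)), key=lambda i: dist[i], reverse=True)[:b]


def run(strategy, seed, n_iter=8, b=2):
    rng = random.Random(seed)
    data = [(i / 100, target_property(i / 100)) for i in range(200)]
    rng.shuffle(data)
    test, rest = data[:50], data[50:]
    labeled, pool = rest[:8], rest[8:]           # roughly 5% seed set
    curve = [rmse(labeled, test)]
    for _ in range(n_iter):
        picked = set(strategy(pool, labeled, b, rng))
        labeled = labeled + [p for i, p in enumerate(pool) if i in picked]  # reveal labels
        pool = [p for i, p in enumerate(pool) if i not in picked]
        curve.append(rmse(labeled, test))
    return curve


def average_curve(strategy, seeds):
    curves = [run(strategy, s) for s in seeds]
    return [sum(c[t] for c in curves) / len(curves) for t in range(len(curves[0]))]


avg_random = average_curve(select_random, range(5))
avg_uncertain = average_curve(select_uncertain, range(5))
for t, (r, u) in enumerate(zip(avg_random, avg_uncertain)):
    print(f"iter {t}: random RMSE={r:.3f}  uncertainty RMSE={u:.3f}")
```

Because both strategies share the same seeds, they start from identical seed sets and test sets, so any difference in the curves comes from the selection rule alone.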

Application Notes: Active Learning for Materials Discovery

Active Learning (AL) is a machine learning paradigm that iteratively selects the most informative experiments to perform, thereby accelerating the discovery of novel materials while minimizing resource expenditure. For foundation models (large models pre-trained on vast scientific corpora), AL provides a strategic query mechanism to fine-tune the model and guide exploration in uncharted chemical or materials spaces.

Core Mechanism: An AL cycle begins with a small, initial dataset. A foundation model (e.g., trained on crystal structures or molecular properties) makes predictions with associated uncertainty. An "acquisition function" prioritizes candidates (e.g., a specific perovskite composition or organic molecule) where the model is most uncertain or where predicted performance (e.g., photovoltaic efficiency) is high. These candidates are synthesized and tested experimentally. The new, high-value data is then used to retrain/update the model, closing the loop.

Key Advantages:

- Mitigates Data Scarcity: Directly targets data acquisition to build high-value, minimal datasets.

- Reduces Cost: Can achieve peak performance with 10-50% of the data required by traditional high-throughput screening.

- Enables Closed-Loop Automation: Integrates directly with robotic synthesis and characterization platforms for autonomous discovery.

Table 1: Performance Comparison of Discovery Methods

| Discovery Method | Typical Experiments to Find Hit | Relative Cost | Primary Limitation |

|---|---|---|---|

| Traditional Edisonian | 10,000+ | 100% | Highly inefficient, resource-intensive |

| High-Throughput Screening (HTS) | 1,000 - 10,000 | 60-85% | High upfront capital, data may be redundant |

| Passive Machine Learning | 500 - 2,000 | 40-60% | Relies on existing biased datasets |

| Active Learning (AL) Cycle | 50 - 500 | 10-30% | Requires initial seed data & automation integration |

| AL with Foundation Model | 20 - 200 | 5-20% | Complex model training; highest computational upfront cost |

Detailed Experimental Protocols

Protocol 1: Active Learning Cycle for Novel Photocatalyst Discovery

Objective: To discover new organic photocatalysts for hydrogen evolution using a closed-loop AL platform.

Materials & Pre-processing:

- Seed Dataset: Compile 50-100 known organic molecules with measured photocatalytic activity (H₂ evolution rate).

- Representation: Encode molecules as Morgan fingerprints (radius 2, 2048 bits) or using a pre-trained molecular foundation model's embeddings.

- Initial Model: Train a Gaussian Process Regression (GPR) model or a fine-tuned neural network on the seed data to predict activity from representation.

Procedure:

- Candidate Pool Generation: Use a rule-based library (e.g., BODIPY, perylene diimide cores with functional group variations) to generate a virtual library of 10,000 candidate molecules. Encode each candidate.

- Prediction & Acquisition: Use the trained model to predict mean (µ) and uncertainty (σ) for all candidates. Calculate the Upper Confidence Bound (UCB) acquisition score: UCB = µ + κσ, where κ is a tunable parameter balancing exploration (high σ) and exploitation (high µ). Select the top 5-10 candidates with highest UCB scores.

- Experimental Validation:

- Synthesis: Execute automated synthesis of selected candidates via robotic fluidic platform (e.g., Chemspeed).

- Purification: Purify via automated flash chromatography.

- Testing: Perform standardized photocatalytic hydrogen evolution test in a multi-reactor array. Measure H₂ production via gas chromatography.

- Model Update: Append new experimental data (candidate representation and measured activity) to the training set. Retrain the predictive model.

- Iteration: Repeat steps 2-4 for 5-10 cycles or until a performance target is met (e.g., H₂ evolution rate > 10 mmol g⁻¹ h⁻¹).
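The UCB acquisition step of this procedure reduces to a score and a top-k selection, sketched below. The predicted means and uncertainties are hypothetical values standing in for the output of the GPR or neural-network model.

```python
from typing import List, Sequence


def ucb_scores(mu: Sequence[float], sigma: Sequence[float], kappa: float) -> List[float]:
    """UCB = mu + kappa * sigma for every candidate."""
    return [m + kappa * s for m, s in zip(mu, sigma)]


def select_batch(scores: Sequence[float], batch_size: int) -> List[int]:
    """Indices of the highest-scoring candidates, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:batch_size]


# Hypothetical predictions of H2 evolution rate (mmol g^-1 h^-1) for four candidates.
mu = [4.0, 6.5, 5.0, 3.0]
sigma = [0.2, 0.3, 2.0, 3.0]

explore = select_batch(ucb_scores(mu, sigma, kappa=1.0), batch_size=2)
exploit = select_batch(ucb_scores(mu, sigma, kappa=0.0), batch_size=2)
print(explore, exploit)  # [2, 1] [1, 2]
```

With kappa = 0 the ranking follows the predicted mean alone; raising kappa promotes candidate 2, whose prediction is less certain.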

Protocol 2: Integrating a Materials Foundation Model for Solid-State Li-Ion Conductor Search

Objective: Leverage a crystal structure foundation model (e.g., Crystal Graph Convolutional Neural Network pre-trained on OQMD) to discover high-conductivity Li_xM_yZ_z compositions.

Materials & Pre-processing:

- Foundation Model: Obtain a pre-trained model that outputs embeddings for inorganic crystal structures.

- Seed Data: Assemble a dataset of 100-200 known solid electrolytes with reported ionic conductivity (log σ) at 298 K.

- Structure Embedding: For each seed material, use the foundation model to generate a fixed-length feature vector (embedding).

Procedure:

- Fine-Tuning: Add a feed-forward regression head to the foundation model. Train this head on the seed dataset (embeddings -> log σ) while optionally freezing or lightly fine-tuning the base model layers.

- Candidate Proposal: From databases (e.g., Materials Project, ICDD), select structures that contain Li together with a candidate cation (M = P, Ge, Si, etc.) and an anion (Z = O, S, Cl). Generate a candidate list of ~5,000 compositions with predicted stable structures.

- Active Query: For each candidate, generate its predicted stable crystal structure (using DFT or a generative model), compute its foundation model embedding, and predict log σ and uncertainty via the fine-tuned model (using dropout or ensemble for uncertainty estimation). Select candidates maximizing Expected Improvement (EI) over the current best conductivity.

- Experimental Validation:

- Synthesis: Prepare selected compositions via solid-state reaction (ball milling precursors, then annealing in sealed quartz tubes under Ar).

- Characterization: Confirm phase purity via XRD. Fabricate pellets by cold pressing and sintering.

- Testing: Measure ionic conductivity via Electrochemical Impedance Spectroscopy (EIS) from 25°C to 100°C.

- Iterative Learning: Add new (composition, embedding, measured log σ) data points. Update the regression head of the model. Repeat from Step 3.

Visualizations

Title: Active Learning Cycle with Foundation Model

Title: Foundation Model Integration in Materials AL

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents & Materials for Active Learning-Driven Discovery

| Item | Function in Protocol | Example/Supplier Notes |

|---|---|---|

| Automated Synthesis Platform | Enables rapid, reproducible synthesis of AL-selected candidates. | Chemspeed SWING, Unchained Labs Junior. Critical for Protocol 1. |

| High-Throughput Characterization Array | Parallel measurement of target properties (e.g., photocatalytic activity, ionic conductivity). | Multi-channel photochemical reactors (e.g., Photon etc.); 8-channel potentiostat for EIS. |

| Pre-Trained Foundation Model | Provides rich, general-purpose representation of materials/molecules, reducing needed seed data. | CGCNN (crystals), ChemBERTa (molecules), Matformer. Often accessed via APIs (e.g., from Hugging Face). |

| Uncertainty Quantification Software Library | Provides uncertainty estimates and implements acquisition functions (UCB, EI, Thompson Sampling) for candidate selection. | Python libraries: scikit-learn (GPR), GPyTorch (Gaussian processes), Pyro (Bayesian NNs), Ax (Adaptive Experimentation Platform). |

| Curated Seed Dataset | Small, high-quality initial data to bootstrap the AL cycle. | May originate from published literature, internal data, or databases (Citrination, Materials Project, PubChem). |

| Virtual Candidate Library | Defines the search space of possible materials for the AL algorithm to query. | Enumerated from reaction rules (e.g., Click Chemistry sets) or from crystal structure prototypes (e.g., from AFLOW). |

Within the thesis on active learning with foundation models for materials discovery, three key concepts form the iterative optimization core: Bayesian optimization, acquisition functions, and uncertainty quantification. Foundation models (pre-trained on vast materials datasets) provide initial predictions of target properties (e.g., bandgap, ionic conductivity, catalytic activity). Bayesian Optimization (BO) efficiently navigates the vast, high-dimensional chemical space to recommend the next most promising candidates for synthesis and testing, guided by the model's quantified uncertainty. This closed-loop, "active learning" paradigm accelerates the discovery of novel materials and drugs by minimizing expensive experimental trials.

Core Terminology: Detailed Application Notes

Bayesian Optimization (BO)

Application Notes: BO is a sample-efficient strategy for global optimization of expensive-to-evaluate "black-box" functions. In materials discovery, the "function" is the real-world experimental measurement of a property for a given material composition or structure, which is costly and time-consuming to obtain.

Protocol for Integration with Foundation Models:

- Initialization: Use a foundation model (e.g., a graph neural network pre-trained on the Materials Project database) to generate predictions for a large, virtual library of candidate materials.

- Surrogate Model Construction: Select a small, diverse subset of candidates for initial experimental validation. Use these (input candidate, experimental output) pairs to train a probabilistic surrogate model (typically Gaussian Process Regression) on top of the foundation model's latent representations. This surrogate maps materials to a probability distribution over the target property.

- Iterative Loop: a. The surrogate model provides a mean prediction \(\mu(x)\) and an uncertainty estimate \(\sigma(x)\) for all unevaluated candidates. b. An acquisition function, using \(\mu(x)\) and \(\sigma(x)\), selects the next candidate(s) for experiment. c. The candidate is synthesized and characterized. d. The new data point is added to the training set, and the surrogate model is updated. e. The loop repeats until a performance target is met or the experimental budget is exhausted.

Acquisition Functions

Application Notes: Acquisition functions balance exploration (probing regions of high uncertainty) and exploitation (probing regions predicted to be high-performing). They compute a single, easily optimized score for each candidate.

Summary of Common Acquisition Functions:

Table 1: Quantitative Comparison of Key Acquisition Functions

| Acquisition Function | Mathematical Form | Key Parameter | Best For | Tunability |

|---|---|---|---|---|

| Probability of Improvement (PI) | \(\Phi\left(\frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\right)\) | \(\xi\) (trade-off) | Pure exploitation, finding any improvement | Low |

| Expected Improvement (EI) | \((\mu(x) - f(x^+) - \xi)\,\Phi(Z) + \sigma(x)\,\phi(Z)\), with \(Z = \frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\) | \(\xi\) (trade-off) | General-purpose balance | Medium |

| Upper Confidence Bound (UCB) | \(\mu(x) + \kappa\,\sigma(x)\) | \(\kappa\) (balance weight) | Explicit control of exploration | High |

| Thompson Sampling (TS) | Sample from posterior: \(f_t(x) \sim \mathcal{N}(\mu(x), \sigma^2(x))\) | Random seed | Parallel candidate selection, meta-learning | Low |
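The PI, EI, and Thompson Sampling rows of the table translate directly into code. The sketch below uses only the standard library; here `best` plays the role of f(x⁺), the best value observed so far. The function names, the default ξ, the zero-uncertainty handling, and the example predictions are assumptions. The Thompson step draws each candidate independently, which ignores correlations that a full GP posterior sample would capture.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()  # provides the standard normal CDF (Phi) and PDF (phi)


def probability_of_improvement(mu, sigma, best, xi=0.01):
    """PI = Phi((mu - best - xi) / sigma)."""
    if sigma <= 0.0:
        return 1.0 if mu - best - xi > 0.0 else 0.0
    return STD_NORMAL.cdf((mu - best - xi) / sigma)


def expected_improvement(mu, sigma, best, xi=0.01):
    """EI = (mu - best - xi) * Phi(Z) + sigma * phi(Z), Z = (mu - best - xi) / sigma."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    return gain * STD_NORMAL.cdf(z) + sigma * STD_NORMAL.pdf(z)


def thompson_pick(mus, sigmas, rng):
    """Draw one sample per candidate and return the index of the largest draw."""
    draws = [rng.gauss(m, s) for m, s in zip(mus, sigmas)]
    return max(range(len(draws)), key=lambda i: draws[i])


# Hypothetical surrogate predictions for three candidates; best observed value is 1.1.
mus, sigmas, best = [1.0, 1.2, 0.8], [0.1, 0.05, 0.6], 1.1
for i, (m, s) in enumerate(zip(mus, sigmas)):
    print(i, round(probability_of_improvement(m, s, best), 3),
          round(expected_improvement(m, s, best), 3))
print("Thompson pick:", thompson_pick(mus, sigmas, random.Random(0)))
```

Repeating `thompson_pick` with fresh random draws yields a diverse batch, which is why Thompson Sampling suits parallel selection.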

Protocol for Selecting an Acquisition Function:

- Define Goal: If the goal is to find the single best material quickly, use EI. If the goal is to map a performance landscape thoroughly, use UCB with a higher \(\kappa\).

- Benchmark: Run a short, simulated BO loop (using historical data) comparing the convergence speed of PI, EI, and UCB.

- Calibrate: Tune the parameter (\(\xi\) or \(\kappa\)) to achieve the desired exploration/exploitation balance for your specific search space. Use cross-validation on the surrogate model.

- Implement: For parallel synthesis/characterization (batch BO), use a batched variant like q-EI or implement Thompson Sampling.

Uncertainty Quantification (UQ)

Application Notes: Reliable UQ is critical for BO's success. Underestimated uncertainty leads to over-exploitation and stagnation; overestimated uncertainty leads to inefficient random exploration. UQ informs the acquisition function and flags model predictions that require caution.

Sources of Uncertainty in Foundation Model-Guided Discovery:

- Aleatoric: Irreducible noise inherent in experiments (synthesis variance, measurement error).

- Epistemic: Model uncertainty due to lack of data in certain regions of chemical space. This is reducible with more data and is the primary driver for exploration in BO.

Protocol for Uncertainty Quantification in a Surrogate Model:

- Model Choice: Employ a Gaussian Process (GP) surrogate, which natively provides predictive variance (epistemic UQ). For deep learning surrogates, use ensembles (e.g., 5-10 models with different initializations) or Monte Carlo Dropout.

- Kernel Selection: For materials represented as vectors or graphs, use a composite kernel (e.g., Matern + periodic) to capture complex relationships. Validate via marginal likelihood.

- Calibration: After model training, assess calibration: plot predicted confidence intervals vs. empirically observed frequencies (using a held-out validation set). Recalibrate if necessary (e.g., temperature scaling for classifiers, or rescaling the predicted variance for regressors).

- Propagation: When recommending candidates, report the full predictive distribution \(\mathcal{N}(\mu(x), \sigma^2(x))\), not just the mean \(\mu(x)\).
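The calibration check can be sketched as a comparison between nominal interval levels and the fraction of held-out observations that fall inside those intervals. The sketch assumes Gaussian predictive distributions; the function names and the validation values are hypothetical.

```python
from statistics import NormalDist
from typing import List, Sequence, Tuple


def empirical_coverage(mu: Sequence[float], sigma: Sequence[float],
                       observed: Sequence[float], level: float) -> float:
    """Fraction of observations inside the central `level` predictive interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    inside = sum(1 for m, s, y in zip(mu, sigma, observed) if abs(y - m) <= z * s)
    return inside / len(observed)


def calibration_table(mu, sigma, observed,
                      levels=(0.5, 0.8, 0.95)) -> List[Tuple[float, float]]:
    """Pairs of (nominal level, empirical coverage) for a calibration plot."""
    return [(lvl, empirical_coverage(mu, sigma, observed, lvl)) for lvl in levels]


# Hypothetical held-out predictions and measurements.
mu = [1.0, 2.0, 3.0, 4.0]
sigma = [0.5, 0.5, 0.5, 0.5]
observed = [1.1, 2.9, 3.0, 4.4]

table = calibration_table(mu, sigma, observed)
for level, coverage in table:
    print(f"nominal {level:.2f} -> empirical {coverage:.2f}")
```

Empirical coverage well below the nominal level indicates underestimated uncertainty; coverage well above it indicates overestimated uncertainty. A real check needs far more than four validation points.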

Experimental Protocol: A Standard BO Cycle for Drug-like Molecule Discovery

Aim: To discover a novel organic molecule with maximized inhibitory potency (pIC50) against a target protein.

Materials & Reagents: (See The Scientist's Toolkit below). Foundation Model: Pre-trained ChemBERTa or a GNN on ChEMBL/ZINC. Surrogate Model: Gaussian Process with Tanimoto kernel (for molecular fingerprints).

Step-by-Step Workflow:

- Define Search Space: A virtual library of \(10^6\) molecules from a feasible chemical reaction set (e.g., Ugi reaction products).

- Initial Design of Experiments (DoE):

- Use the foundation model to encode all molecules.

- Perform k-medoids clustering (\(k=20\)) in the latent space to select a diverse initial training set.

- Synthesize and assay these 20 molecules for pIC50.

- Surrogate Model Training:

- Train a GP on the (latent representation, pIC50) pairs of the initial 20 molecules.

- Optimize kernel hyperparameters via maximum marginal likelihood.

- Bayesian Optimization Loop (Repeat for 30 cycles): a. Prediction & UQ: Use the trained GP to predict \(\mu(x)\) and \(\sigma(x)\) for all unevaluated molecules in the library. b. Acquisition: Compute the Expected Improvement (EI) score for all candidates. c. Selection: Identify the molecule \(x^*\) with the maximum EI score. d. Experiment: Synthesize molecule \(x^*\) and measure its pIC50. e. Update: Augment the training data with \((x^*, \mathrm{pIC50}_{obs})\) and retrain the GP.

- Validation: Synthesize and test the top 5 molecules identified by BO in triplicate to confirm activity.
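The workflow above can be imitated end to end with a toy, standard-library-only sketch. Several simplifications are assumptions made for brevity: fingerprints are random bit sets rather than real molecules, the assay is a synthetic function, the initial set is drawn at random instead of by k-medoids clustering, kernel hyperparameters are fixed rather than optimized, and the loop runs 10 cycles over 60 candidates. The GP uses a Tanimoto kernel with a small noise term and a constant prior mean.

```python
import math
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def gp_posterior(train_x, train_y, x, noise=1e-2):
    """Posterior mean and standard deviation at x for a Tanimoto-kernel GP."""
    gram = [[tanimoto(a, b) + (noise if i == j else 0.0)
             for j, b in enumerate(train_x)] for i, a in enumerate(train_x)]
    k_star = [tanimoto(x, a) for a in train_x]
    mean_y = sum(train_y) / len(train_y)  # constant prior mean
    alpha = solve(gram, [y - mean_y for y in train_y])
    weights = solve(gram, k_star)
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    var = tanimoto(x, x) - sum(k * w for k, w in zip(k_star, weights))
    return mu, math.sqrt(max(var, 1e-12))


def expected_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * STD_NORMAL.cdf(z) + sigma * STD_NORMAL.pdf(z)


def run_bo(n_library=60, n_init=5, n_cycles=10, seed=0):
    rng = random.Random(seed)
    library, seen = [], set()
    while len(library) < n_library:  # toy library: random 8-of-32-bit fingerprints
        fp = frozenset(rng.sample(range(32), 8))
        if fp not in seen:
            seen.add(fp)
            library.append(fp)
    target = frozenset(range(8))  # hypothetical "ideal" bit pattern

    def assay(fp):  # stand-in for synthesis plus pIC50 measurement
        return 5.0 + 0.3 * len(fp & target)

    observed = {i: assay(library[i]) for i in rng.sample(range(n_library), n_init)}
    for _ in range(n_cycles):
        xs = [library[i] for i in observed]
        ys = list(observed.values())
        best = max(ys)
        scores = {}
        for i, fp in enumerate(library):
            if i not in observed:
                mu, sigma = gp_posterior(xs, ys, fp)
                scores[i] = expected_improvement(mu, sigma, best)
        chosen = max(scores, key=scores.get)      # molecule with the maximum EI
        observed[chosen] = assay(library[chosen])  # "experiment", then update
    return library, observed


library, observed = run_bo()
print(f"best pIC50 after {len(observed)} assays: {max(observed.values()):.2f}")
```

Re-solving the linear system for every candidate is wasteful but keeps the sketch short; a practical implementation would factorize the kernel matrix once per cycle or use a GP library.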

Visualizations

Active Learning Loop with Bayesian Optimization

Uncertainty Quantification Components

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for BO-Driven Materials Discovery

| Item/Category | Function & Relevance to Protocol |

|---|---|

| High-Throughput Robotic Synthesis Platform | Enables rapid, automated synthesis of candidate molecules or material compositions identified by the BO loop, crucial for iterative cycles. |

| Standardized Biochemical or Functional Assay Kits | Provides consistent, quantitative measurement of the target property (e.g., enzyme inhibition, ionic conductivity, luminescence) for model training. |

| Chemical Building Blocks / Precursor Libraries | Defines the search space. A well-curated, diverse, and readily available library is essential for feasible experimental validation. |

| Gaussian Process Software Library | Core computational tool for building the surrogate model and performing UQ (e.g., GPyTorch, scikit-learn, GPflow). |

| High-Performance Computing (HPC) Cluster | Required for handling large virtual libraries, training foundation models, and running multiple BO simulations in parallel. |

| Data Management System (ELN/LIMS) | Electronic Lab Notebook/Lab Information Management System to systematically log all experimental outcomes, linking digital candidates to physical results. |

The discovery of functional molecules and materials has undergone a paradigm shift. This evolution, situated within a broader thesis on active learning with foundation models, represents a move from brute-force empirical screening toward a closed-loop, intelligent design cycle. Foundation models, pre-trained on vast scientific corpora and structured data, provide the predictive engine for active learning systems that strategically propose experiments, accelerating the path from hypothesis to validated discovery.

Application Notes

The Conceptual Shift: From HTS to Active Learning Loops

Note 1: Limitations of Traditional High-Throughput Screening (HTS)

- Scope: Despite generating large datasets, HTS explores a minuscule fraction of chemical space (typically 10^5 to 10^6 compounds per campaign vs. an estimated >10^60 possible drug-like molecules).

- Cost and Throughput: While robotic automation increased throughput, the cost per well and infrastructural investment remain high, with diminishing returns on novel hit discovery.

- Data Quality: Legacy HTS often produced high rates of false positives/negatives due to assay interference, leading to costly downstream validation failures.

Note 2: The Intelligent Guided Discovery Framework

- Core Principle: Integration of foundation model predictions with automated experimentation within an active learning loop.

- Key Advantage: The system learns from each experimental batch, refining its internal model to prioritize candidates with a higher probability of success for the next cycle.

- Thesis Integration: This framework is the practical implementation of the thesis's core argument: that foundation models, when coupled with automated physics-based simulations and robotic experimentation, create a "self-improving" discovery system.

Quantitative Performance Comparison

Table 1: Comparative Metrics of Screening vs. Guided Discovery

| Metric | Traditional HTS (c. 2000-2015) | AI-Guided Discovery with Active Learning (Current) | Data Source / Study Reference |

|---|---|---|---|

| Typical Initial Library Size | 10^5 – 10^6 compounds | 10^2 – 10^4 virtually generated candidates | Industry benchmarks |

| Hit Rate (for defined target) | 0.01% - 0.1% | 5% - 15% (in primary assay) | Recent publications (e.g., Insilico Medicine, 2023) |

| Cycle Time (Design → Test) | Months (sequential) | Days to Weeks (closed-loop) | ATOM Consortium, 2024 reports |

| Required Experimental Runs to Identify Lead | ~500,000 | ~1,000 - 5,000 | Analysis of disclosed campaigns |

| Key Enabling Technologies | Robotics, microfluidics | Foundation Models (ChemBERTa, GNoME), Automated Synthesis (Chemspeed), HT Characterization | Not specified |
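To make the hit-rate and run-count rows concrete, the short calculation below converts the ranges quoted in Table 1 into expected hits and experiments per hit. It is plain arithmetic on the table's illustrative figures, not new data; the function name is hypothetical.

```python
def expected_hits(n_experiments, hit_rate):
    """Expected number of primary-assay hits for a given hit rate."""
    return n_experiments * hit_rate

# Traditional HTS: ~500,000 runs at 0.01% to 0.1% hit rate.
hts_hits = (expected_hits(500_000, 0.0001), expected_hits(500_000, 0.001))

# AI-guided discovery: ~1,000 to 5,000 runs at 5% to 15% hit rate.
al_hits = (expected_hits(1_000, 0.05), expected_hits(5_000, 0.15))

# Experiments needed per hit is simply the inverse of the hit rate.
hts_runs_per_hit = (1 / 0.001, 1 / 0.0001)  # about 1,000 to 10,000
al_runs_per_hit = (1 / 0.15, 1 / 0.05)      # about 7 to 20

print(f"HTS expected hits: {hts_hits[0]:.0f} to {hts_hits[1]:.0f}")
print(f"AL expected hits:  {al_hits[0]:.0f} to {al_hits[1]:.0f}")
```

Under these figures both approaches yield a similar number of hits, but the guided campaign needs roughly two to three orders of magnitude fewer experiments per hit.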

Table 2: Foundation Models for Materials & Molecular Discovery

| Model Name | Primary Domain | Training Data Scale | Typical Application in Active Learning Loop |

|---|---|---|---|

| GNoME (Google DeepMind) | Inorganic Crystals | DFT data seeded from the Materials Project and expanded through active learning; ~2.2 million predicted stable structures reported | Proposing stable novel crystal structures for synthesis. |

| ChemBERTa | Small Molecules | ~77M SMILES strings from PubChem | Molecular property prediction & initial candidate ranking. |

| Materials Project (database) | Materials Properties | ~150,000 known materials with DFT properties | Providing seed data and validation for generative models (a curated DFT database rather than a trained model). |

| ProteinMPNN | Protein Design | ~180,000 protein structures | Designing binding proteins or enzymes in a single forward pass. |

Detailed Experimental Protocols

Protocol: An Active Learning Cycle for Novel Photocatalyst Discovery

Objective: To discover a novel, high-efficiency organic photocatalyst using a closed-loop active learning system integrating a molecular foundation model.

I. Materials and Reagents

- Robotic Liquid Handling System: (e.g., Hamilton STARlet, Chemspeed SWING) for precise microliter-scale reagent dispensing.

- Microplate Reader with Kinetic Capability: (e.g., Tecan Spark) for absorbance/fluorescence monitoring.

- Photo-reactor Array: Custom 96-well LED array with tunable wavelength (Blue: 450 nm) and intensity control.

- Chemical Building Blocks: Diverse set of electron donor, acceptor, and π-conjugated linker amines and aldehydes (≥95% purity).

- Model Substrate: Methyl dihydrofuran-2-carboxylate (or similar redox probe).

- Sacrificial Donor: Triethylamine (TEA) in degassed solvent.

- Solvent: Anhydrous Dimethylformamide (DMF), degassed with Argon for 30 min.

II. Procedure

Step 1: Initialization & Priors

- Define Objective: Search space: conjugated donor-acceptor molecules. Target property: high photo-oxidative quenching rate (k_q > 1 x 10^9 M^-1 s^-1).

- Seed Database: Curate 500 known photocatalyst structures and their measured k_q values from the literature into a structured database.

- Fine-tune Foundation Model: Start with a pre-trained ChemBERTa model. Fine-tune it on the seed database, using a regression head to predict log(k_q) from the SMILES string.

Step 2: Generative Design & Downselection (In Silico)

- Generate Candidate Pool: Use a generative model (e.g., a fine-tuned JT-VAE) conditioned on high k_q to propose 10,000 novel molecular structures.

- Virtual Screening: Pass the 10,000 candidates through the fine-tuned ChemBERTa predictor. Filter for predicted log(k_q) > 9. Apply a synthetic accessibility (SA) score filter (SA < 4).

- Diversity Selection: From the top 500 predicted performers, apply a clustering algorithm (e.g., k-means on molecular fingerprints) to select 96 structurally diverse candidates for the first experimental batch.
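The screening and diversity steps can be sketched as below. Two assumptions are worth flagging: fingerprints are represented as sets of on-bit indices, and a greedy MaxMin picker on Tanimoto distance is used in place of the k-means clustering named in the protocol, since it needs no external library. The candidate records and field names are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def passes_filters(cand, min_log_kq=9.0, max_sa=4.0):
    """Keep candidates with predicted log(k_q) > 9 and SA score < 4."""
    return cand["pred_log_kq"] > min_log_kq and cand["sa_score"] < max_sa

def maxmin_select(cands, n_select):
    """Greedy MaxMin: start from the top prediction, then repeatedly add
    the candidate that is furthest from everything already selected."""
    remaining = sorted(cands, key=lambda c: c["pred_log_kq"], reverse=True)
    if not remaining:
        return []
    selected = [remaining.pop(0)]
    while remaining and len(selected) < n_select:
        def min_dist(c):
            return min(1.0 - tanimoto(c["fp"], s["fp"]) for s in selected)
        best = max(remaining, key=min_dist)
        remaining.remove(best)
        selected.append(best)
    return selected

# Hypothetical candidates (illustrative values only).
candidates = [
    {"id": "A", "pred_log_kq": 9.6, "sa_score": 2.5, "fp": {1, 2, 3, 4}},
    {"id": "B", "pred_log_kq": 9.5, "sa_score": 3.0, "fp": {1, 2, 3, 5}},
    {"id": "C", "pred_log_kq": 9.2, "sa_score": 3.5, "fp": {10, 11, 12}},
    {"id": "D", "pred_log_kq": 9.8, "sa_score": 5.2, "fp": {20, 21}},  # fails SA
    {"id": "E", "pred_log_kq": 8.7, "sa_score": 2.0, "fp": {30, 31}},  # fails k_q
]

shortlist = [c for c in candidates if passes_filters(c)]
batch_ids = [c["id"] for c in maxmin_select(shortlist, n_select=2)]
```

Here candidate B is skipped in favour of C because B is a near-duplicate of the already selected A, which is the behaviour the diversity step is meant to produce.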

Step 3: Automated Synthesis & Characterization

- Robotic Synthesis: Execute a robotic Schiff-base condensation for each of the 96 candidates.

- Program liquid handler to dispense 100 µL of 10 mM aldehyde solution (in DMF) to a deep-well plate.

- Add 100 µL of corresponding 10 mM amine solution.

- Seal plate, shake at 500 rpm for 2 hours at 60°C.

- Quality Control: Transfer 5 µL from each well to an analysis plate. Run UPLC-MS (not fully automated, but batch processed) to confirm product formation and purity (>80% threshold).

Step 4: High-Throughput Kinetic Assay

- Assay Plate Preparation: In a black, clear-bottom 96-well plate (the 200 µL total assay volume below exceeds the working volume of a standard 384-well plate), the liquid handler prepares the assay mix per well:

- 90 µL of 50 µM photocatalyst candidate (from synthesis plate stock).

- 90 µL of 100 µM model substrate.

- 20 µL of 1.0 M TEA (final conc. 100 mM).

- Kinetic Measurement: Plate is loaded into microplate reader integrated with photo-reactor array.

- Protocol: 5 sec baseline read (fluorescence, ex/em specific to substrate), trigger blue LED array for 60 sec, monitor fluorescence decay kinetically at 1 Hz.

- Data Processing: Fit the fluorescence decay curves to a first-order kinetic model to derive the observed quenching rate constant (k_obs) for each well.
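The data-processing step can be illustrated with a log-linear least-squares fit of F(t) = F0 * exp(-k_obs * t). This is a sketch under stated assumptions: the trace is baseline-subtracted and strictly positive, and the synthetic data below are noise-free. Converting the first-order k_obs (s^-1) into a bimolecular k_q (M^-1 s^-1) would need an additional kinetic model that the protocol does not specify.

```python
import math

def fit_first_order(times, signal):
    """Return (k_obs, F0) from a linear fit of ln(signal) against time."""
    logs = [math.log(s) for s in signal]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(logs) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, logs))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return -slope, math.exp(intercept)

# Synthetic trace: 1 Hz sampling over the 60 s illumination window.
times = list(range(61))
k_true = 0.05  # s^-1, hypothetical
signal = [1000.0 * math.exp(-k_true * t) for t in times]

k_obs, f0 = fit_first_order(times, signal)
```

With noisy plate-reader data, a nonlinear fit with an offset term is often more robust than the log-linear form, because taking logarithms amplifies noise at low signal.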

Step 5: Model Update & Next Cycle Design

- Data Augmentation: Append the 96 new (structure, k_obs) datapoints to the training database.

- Retrain/Update Model: Perform a transfer learning step on the foundation model with the augmented dataset. This is a rapid, automated step.

- Acquisition Function: Use an Upper Confidence Bound (UCB) algorithm to select the next 96 candidates, balancing exploration (high predictive uncertainty, often in structurally novel regions) and exploitation (high predicted k_q).

- Loop: Return to Step 2. Repeat for 5-10 cycles or until a candidate with k_q above the target is identified and validated.
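The acquisition step needs both a predicted value and an uncertainty for each candidate. The protocol does not say how uncertainty is obtained from the fine-tuned model; the sketch below assumes a small ensemble of predictors and uses the spread of their outputs as σ. The candidate names and numbers are hypothetical.

```python
import statistics

def ucb_scores(ensemble_preds, beta=1.0):
    """UCB = mean + beta * std over ensemble predictions for each candidate."""
    scores = {}
    for cand_id, preds in ensemble_preds.items():
        mu = statistics.fmean(preds)
        sigma = statistics.pstdev(preds)
        scores[cand_id] = mu + beta * sigma
    return scores

def select_batch(ensemble_preds, batch_size, beta=1.0):
    """Return the IDs of the top-scoring candidates, best first."""
    scores = ucb_scores(ensemble_preds, beta)
    return sorted(scores, key=scores.get, reverse=True)[:batch_size]

# Predicted log(k_q) from three hypothetical ensemble members.
preds = {
    "mol_1": [9.0, 9.1, 8.9],  # high mean, low disagreement
    "mol_2": [8.5, 9.5, 7.5],  # lower mean, high disagreement
    "mol_3": [8.0, 8.0, 8.0],  # low mean, no disagreement
}

greedy = select_batch(preds, batch_size=2, beta=0.0)    # pure exploitation
balanced = select_batch(preds, batch_size=2, beta=1.0)  # rewards uncertainty
```

With β = 0 the ranking follows the mean alone; raising β promotes `mol_2`, whose predictions disagree most. In the protocol the batch size would be 96.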

Protocol: In-Silico Guided Discovery of Li-Ion Battery Cathodes

Objective: Use the GNoME foundation model and active learning to identify and prioritize novel layered oxide cathode materials (Li_x M_y O_z) with predicted high energy density and stability.

I. Computational Materials Toolkit

- Foundation Model: Pre-trained GNoME graph neural network.

- DFT Computation Cluster: High-performance computing access (e.g., using VASP or Quantum ESPRESSO software).

- Active Learning Manager: Python-based pipeline (e.g., using AMS or custom scripts).

II. Procedure

Step 1: Define Search Space & Target

- Constrain search to composition space: Li (3-12), Transition Metal (TM = Mn, Co, Ni, Fe, Ti) (1-4), O (4-16).

- Target: Predicted energy above hull (decomposition stability) < 50 meV/atom and predicted specific capacity > 250 mAh/g.
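The capacity target can be checked against the standard theoretical gravimetric capacity, Q = n F / (3.6 M) in mAh/g, where n is the number of Li extracted per formula unit, F is the Faraday constant, and M is the molar mass. The sketch below assumes full Li extraction, which real layered oxides rarely achieve, so it gives an upper bound. Function names are hypothetical.

```python
FARADAY = 96485.332  # C/mol
ATOMIC_MASS = {"Li": 6.94, "Mn": 54.938, "Co": 58.933, "Ni": 58.693,
               "Fe": 55.845, "Ti": 47.867, "O": 15.999}  # g/mol
TRANSITION_METALS = {"Mn", "Co", "Ni", "Fe", "Ti"}

def molar_mass(comp):
    """Molar mass (g/mol) of a composition given as {element: count}."""
    return sum(ATOMIC_MASS[el] * n for el, n in comp.items())

def theoretical_capacity(comp, n_li_extracted=None):
    """Theoretical capacity in mAh/g; assumes all Li is extracted by default."""
    n = comp["Li"] if n_li_extracted is None else n_li_extracted
    return n * FARADAY / (3.6 * molar_mass(comp))

def in_search_space(comp):
    """Check the Li (3-12), TM (1-4), O (4-16) constraints from Step 1."""
    tm_total = sum(n for el, n in comp.items() if el in TRANSITION_METALS)
    return (3 <= comp.get("Li", 0) <= 12
            and 1 <= tm_total <= 4
            and 4 <= comp.get("O", 0) <= 16)

# Four formula units of LiCoO2, written to fit the search-space ranges.
li4co4o8 = {"Li": 4, "Co": 4, "O": 8}
capacity = theoretical_capacity(li4co4o8)  # about 274 mAh/g
meets_target = in_search_space(li4co4o8) and capacity > 250
```

The value for LiCoO2 matches its commonly quoted theoretical capacity of roughly 274 mAh/g, which is a useful sanity check before applying the filter to generated candidates.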

Step 2: Initial Candidate Generation & Filtering

- Input composition constraints into GNoME's generation pipeline.

- Receive initial set of ~100,000 candidate crystal structures with GNoME-predicted stability.

- Filter: Select top 1,000 with lowest "energy above hull" prediction.

Step 3: Active Learning DFT Validation Loop

- Batch Selection: From the 1,000, select a diverse batch of 50 materials using composition and structure-based clustering.

- DFT Calculation (Oracle): Perform high-fidelity DFT relaxation and energy calculation for each of the 50 materials. Calculate accurate energy above hull and Li diffusion barriers.

- Model Update: Use the 50 new (composition/structure, accurate DFT property) pairs to fine-tune the GNoME model (or a surrogate model) via transfer learning. This reduces the gap between GNoME's initial prediction and DFT ground truth for the local chemical space.

- Next Batch Proposal: The updated model re-ranks the remaining ~950 candidates. An acquisition function selects the next 50 that are either predicted to be highly stable (exploitation) or where the model's uncertainty is high (exploration).

- Loop: Repeat the four sub-steps above (batch selection through next batch proposal). The model becomes increasingly accurate for the target property within the defined search space.

Step 4: Downselection for Experimental Validation

- After 5-10 cycles (250-500 DFT calculations), analyze the Pareto front of stability vs. predicted capacity.

- Select 5-10 top-ranking, synthetically feasible candidates for experimental synthesis and testing.
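The Pareto analysis in Step 4 can be sketched as a simple non-dominated filter: a candidate stays on the front if no other candidate is at least as good on both objectives and strictly better on one. Energy above hull is minimized and capacity is maximized. The candidate values are hypothetical.

```python
def pareto_front(cands):
    """Return candidates not dominated in (low e_hull, high capacity)."""
    front = []
    for c in cands:
        dominated = any(
            o["e_hull"] <= c["e_hull"] and o["capacity"] >= c["capacity"]
            and (o["e_hull"] < c["e_hull"] or o["capacity"] > c["capacity"])
            for o in cands
        )
        if not dominated:
            front.append(c)
    return front

# Hypothetical DFT-validated candidates (meV/atom, mAh/g).
validated = [
    {"id": "A", "e_hull": 5.0,  "capacity": 260.0},
    {"id": "B", "e_hull": 20.0, "capacity": 300.0},
    {"id": "C", "e_hull": 30.0, "capacity": 280.0},  # dominated by B
    {"id": "D", "e_hull": 45.0, "capacity": 310.0},
    {"id": "E", "e_hull": 10.0, "capacity": 250.0},  # dominated by A
]

front_ids = [c["id"] for c in pareto_front(validated)]
```

The quadratic scan is adequate for the 250 to 500 validated structures in this protocol; synthetic feasibility would then be assessed only for candidates on the front.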

Visualization

Diagram 1: Evolution from HTS to Active Learning Loop

Diagram 2: Photocatalyst Discovery Active Learning Protocol

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Toolkit for Intelligent Guided Discovery Experiments

| Item / Reagent | Function in Protocol | Example Vendor / Product |

|---|---|---|

| Pre-trained Foundation Model | Provides initial predictive power for molecular or materials properties; the "prior knowledge" base. | GNoME (Google), ChemBERTa (Hugging Face), OpenCatalyst (Meta AI). |

| Active Learning Management Software | Orchestrates the loop: manages data, calls models, runs acquisition functions. | AMPL, DeepChem, custom Python (SciKit-learn, PyTorch). |

| Robotic Liquid Handler | Enables reproducible, microliter-scale dispensing for synthesis and assay assembly. | Hamilton STARlet, Chemspeed SWING, Opentrons OT-2. |

| Microplate Reader with Kinetic & Luminescence | Measures fast photochemical or biochemical reactions in high-throughput format. | Tecan Spark, BMG Labtech CLARIOstar, PerkinElmer EnVision. |

| Modular Photoreactor Array | Provides controlled, uniform illumination for photochemistry or photocatalyst screening. | Vapourtec Photoredox Array, Hel Photoreactor, custom LED plates. |

| Degassed, Anhydrous Solvents | Critical for air- and moisture-sensitive reactions, especially in organic photocatalysis. | Sigma-Aldrich Sure/Seal, Acros Organics Ampules. |

| Diversified Building Block Libraries | High-purity chemical sets (e.g., amines, aldehydes, boronates) for combinatorial synthesis. | Enamine REAL Space, WuXi AppTec CAST, Sigma-Aldrich Aldrich Market Select. |

| High-Performance Computing (HPC) Cluster | Runs high-fidelity DFT calculations as the "oracle" in computational materials discovery. | Local university clusters, AWS/Azure/Google Cloud, specialized (e.g., SGC). |

| Automated Synthesis Platform (Chemistry) | Fully integrates reaction execution, work-up, and purification for more complex syntheses. | Chemspeed AUTOSELECT, Freeslate CM3, Async Synthesis platforms. |

Building the Pipeline: A Step-by-Step Guide to Implementing Active Learning for Materials Discovery

Within the broader thesis on active learning with foundation models for materials discovery, the critical first step is the precise definition of the discovery objective. This choice determines the architecture of the active learning loop, the curation of training data, and the interpretation of model outputs. The three primary framings are:

- Property Prediction: Mapping a material's structure or composition to its properties.

- Inverse Design: Generating candidate materials that satisfy a set of desired target properties.

- Synthesis Planning: Predicting viable synthesis routes and conditions for a target material.

Comparative Analysis of Discovery Objectives

The table below summarizes the core characteristics, challenges, and typical model architectures for each objective.

Table 1: Comparative Framework for Discovery Objectives

| Aspect | Property Prediction | Inverse Design | Synthesis Planning |

|---|---|---|---|

| Core Question | Given a material (A), what are its properties (P)? | Given target properties (P), what material (A) achieves them? | Given a target material (A), how can it be made (S)? |

| Data Structure | (A → P) pairs. | Implicit or explicit (P → A) mapping. | (A → S) or (Reactants, Conditions → A) pairs. |

| Primary Challenge | Data scarcity & accuracy for diverse properties. | Multimodality & feasibility of generated candidates. | Multi-step reasoning, conditional feasibility. |

| Common Model Types | Graph Neural Networks (GNNs), Transformer Encoders. | Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Diffusion Models. | Sequence-to-sequence models, Transformers, Reinforcement Learning agents. |

| Key Metric | Prediction error (MAE, RMSE) on held-out test sets. | Satisfaction of property targets, novelty, stability (via DFT). | Route validity (literature consensus), experimental success rate. |

| Role in Active Learning Loop | Surrogate model for expensive simulations/experiments. | Proposal generator for acquisition function. | Recommender for closing the synthesis-to-test loop. |

Application Notes & Detailed Protocols

Protocol for Property Prediction with a Crystal Graph Neural Network

Objective: Train a surrogate model to predict the formation energy of inorganic crystals from their CIF files.

Workflow:

- Data Acquisition: Query the Materials Project API for crystals and their DFT-computed formation energies.

- Graph Representation: Convert each crystal's CIF file into a crystal graph using the pymatgen and matminer libraries. Nodes represent atoms, edges represent bonds within a cutoff radius.

- Model Training: Implement a CGCNN model. Atom features: atomic number, row, group. Train using Mean Squared Error loss.

- Active Learning Integration: Use model uncertainty (e.g., ensemble variance) to prioritize candidates for subsequent DFT validation.
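The graph-representation step can be illustrated without external libraries. The sketch below builds cutoff-radius edges for a periodic cell by checking each atom pair across the 27 neighbouring cell images. It assumes the cutoff is shorter than the cell dimensions, so that one layer of images is enough; in practice a library such as pymatgen would handle neighbour finding for arbitrary cells. Function names are hypothetical.

```python
import itertools
import math

def frac_to_cart(frac, lattice):
    """Convert fractional coordinates to Cartesian using row lattice vectors."""
    return tuple(sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3))

def crystal_graph_edges(lattice, frac_coords, cutoff):
    """Return (i, j, image_shift, distance) for every pair within the cutoff."""
    edges = []
    shifts = list(itertools.product((-1, 0, 1), repeat=3))
    for i, fi in enumerate(frac_coords):
        pos_i = frac_to_cart(fi, lattice)
        for j, fj in enumerate(frac_coords):
            for shift in shifts:
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself
                image = [fj[k] + shift[k] for k in range(3)]
                dist = math.dist(pos_i, frac_to_cart(image, lattice))
                if dist <= cutoff:
                    edges.append((i, j, shift, dist))
    return edges

# CsCl-type cell: cubic, a = 4.0 Å, atoms at the corner and the body centre.
lattice = [(4.0, 0.0, 0.0), (0.0, 4.0, 0.0), (0.0, 0.0, 4.0)]
frac_coords = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]

edges = crystal_graph_edges(lattice, frac_coords, cutoff=3.5)
neighbours_of_atom0 = [e for e in edges if e[0] == 0]
```

Each atom in this cell has eight nearest neighbours at a distance of a·√3/2 ≈ 3.46 Å, so the graph contains 16 directed edges; the periodic images are what make the count correct.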

Protocol for Inverse Design with a Latent Diffusion Model

Objective: Generate novel, stable organic molecules with a target HOMO-LUMO gap.

Workflow:

- Data Preparation: Assemble a dataset of SMILES strings and corresponding DFT-calculated HOMO-LUMO gaps (e.g., from QM9).

- Model Architecture: Train a Diffusion Model in the latent space of a pre-trained Molecular Autoencoder.

- Conditional Generation: Guide the denoising process using a property predictor to steer generation towards the target gap.

- Validation & Feasibility Filtering: Pass generated SMILES through a rules-based filter (e.g., a synthetic accessibility (SA) score threshold) and a stability predictor before proposing them for acquisition.

Protocol for Synthesis Planning with a Transformer Model

Objective: Predict precursor chemicals and reaction conditions for a given target perovskite composition.

Workflow:

- Data Collection: Extract solid-state synthesis recipes from literature databases (e.g., USPTO, text-mined from scientific articles).

- Tokenization: Tokenize target formula, precursors, amounts, and conditions (temperature, atmosphere, time) into a sequence.

- Sequence-to-Sequence Training: Train a Transformer model to map the sequence of the target material to the sequence of the synthesis protocol.

- Active Learning Integration: Prioritize model-predicted synthesis routes for experimental testing. Use experimental outcomes (success/failure) to fine-tune the model.
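The tokenization step might look like the sketch below, which turns a target formula and a recipe into source and target token sequences for a sequence-to-sequence model. The special tokens, the function names, and the example recipe values are all illustrative assumptions, and the regular expression handles only simple formulas without parentheses or hydrates.

```python
import re

def tokenize_formula(formula):
    """Split a simple formula into element symbols and numeric counts."""
    return re.findall(r"[A-Z][a-z]?|\d+(?:\.\d+)?", formula)

def encode_recipe(target, precursors, temperature_c, time_h, atmosphere):
    """Build (source, target) token sequences for seq2seq training."""
    src = ["<target>"] + tokenize_formula(target)
    tgt = []
    for precursor in precursors:
        tgt += ["<precursor>"] + tokenize_formula(precursor)
    tgt += ["<temp>", str(temperature_c),
            "<time>", str(time_h),
            "<atm>", atmosphere]
    return src, tgt

# Illustrative solid-state recipe (values are placeholders, not literature data).
src_tokens, tgt_tokens = encode_recipe(
    target="BaTiO3",
    precursors=["BaCO3", "TiO2"],
    temperature_c=1100,
    time_h=12,
    atmosphere="air",
)
```

Numeric conditions are kept as single string tokens here for simplicity; binning temperatures and times into discrete ranges is a common alternative that keeps the vocabulary small.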

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Foundation Model-Driven Materials Discovery

| Item | Function in Discovery Workflow | Example/Tool |

|---|---|---|

| Materials Databases | Provides structured (A→P) or (A→S) data for training. | Materials Project, OQMD, ICSD, USPTO. |

| Featurization Libraries | Converts raw materials data (CIF, SMILES) into model-ready formats. | pymatgen, RDKit, matminer. |

| Foundation Model Backbones | Core architectures for building task-specific models. | Graph Neural Network libraries (PyTorch Geometric, DGL), Transformers (Hugging Face). |

| Active Learning Orchestrators | Manages the iteration between model prediction and data acquisition. | Custom scripts with scikit-learn/GPy for uncertainty, ModAL framework. |

| High-Throughput Validation | Provides "ground truth" data to close the active learning loop. | DFT codes (VASP, Quantum ESPRESSO), robotic synthesis/characterization platforms. |

| Synthetic Accessibility Scorers | Filters generated molecules for realistic synthesis potential. | RDKit's SA Score, AiZynthFinder. |

| Reaction Condition Databases | Provides empirical data for training synthesis predictors. | ChemSynthesis, text-mined datasets from SciFinder. |

Within an active learning loop for materials discovery, the selection and pretraining of a foundation model is critical. This step determines the model's capacity to encode complex relationships between chemical structure, synthesis conditions, and functional properties. The architecture dictates the efficiency of subsequent fine-tuning and active learning iterations. This document provides Application Notes and Protocols for this phase.

Architectural Comparison for Materials Science

Table 1: Quantitative Comparison of Foundation Model Architectures

| Architecture | Typical # Parameters (Range) | Pretraining Data Requirement (Tokens) | Computational Cost (PF-days, est.) | Key Strengths | Key Limitations for Materials Science |

|---|---|---|---|---|---|

| Transformer Encoder (e.g., BERT) | 110M - 340M | 0.5B - 3B | 10 - 50 | Excellent for property prediction from SMILES/SELFIES. Captures bidirectional context. | Less generative; requires masking strategy for structured outputs. |

| Autoregressive Transformer (e.g., GPT) | 125M - 1B+ | 10B - 500B+ | 50 - 10,000 | Strong generative capabilities for de novo molecule design. Sequential prediction. | Unidirectional context may limit property understanding. Prone to hallucination. |

| Encoder-Decoder Transformer (e.g., T5) | 220M - 3B+ | 5B - 100B+ | 30 - 2,000 | Flexible text-to-text framework. Ideal for tasks like reaction prediction, condition optimization. | Higher computational overhead. Can be data-hungry. |

| Graph Neural Network (GNN) | 1M - 50M | 1M - 10M graphs | 5 - 100 | Native processing of molecular graphs. Captures topological and spatial relationships inherently. | Pretraining strategies (e.g., masking nodes/edges) are less mature than for language models. |

| Vision Transformer (ViT) | 85M - 650M+ | 10M - 100M images | 20 - 500 | Processes microscopy, spectroscopy, or structural image data. Transferable from natural images. | Domain shift from natural to scientific imagery requires careful adaptation. |

Experimental Protocols

Protocol 1: Pretraining a SMILES-based Transformer Encoder

Objective: To create a domain-adapted foundation model from a large corpus of chemical strings.

Materials: ZINC-22 database (commercial or research license), curated in-house synthesis databases.

Procedure:

- Data Preprocessing: Standardize all SMILES strings using RDKit (Chem.MolToSmiles(Chem.MolFromSmiles(smi), canonical=True)). Apply a 1,000-character length filter.

- Tokenization: Train a Byte-Pair Encoding (BPE) tokenizer (tokenizers library) on 1M randomly sampled SMILES to create a vocabulary of ~520 tokens.

- Model Initialization: Initialize a BERT-style model (e.g., 12 layers, 768 hidden dim, 12 attention heads) using Hugging Face Transformers.

- Pretraining Task: Use Masked Language Modeling (MLM). For each sequence, select 15% of tokens for prediction. Of the selected tokens, replace 80% with [MASK] and 10% with a random token, and leave 10% unchanged.

- Training: Train using the AdamW optimizer (lr=5e-5), batch size=1024, for 500k steps. Use a linear warmup for the first 10k steps, then cosine decay.
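The 80/10/10 masking rule in the pretraining task can be sketched in a few lines. This illustration selects each token independently with probability 0.15, so the masked fraction is 15% on average rather than exactly; the token list and vocabulary are toy examples, and the function name is hypothetical.

```python
import random

def mask_tokens(tokens, vocab, mask_prob=0.15, mask_token="[MASK]", rng=None):
    """Return (inputs, labels) for MLM. Labels are None where no loss applies."""
    rng = rng or random.Random()
    inputs = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # token not selected for prediction
        labels[i] = tok  # the model must recover the original token
        r = rng.random()
        if r < 0.8:
            inputs[i] = mask_token         # 80%: replace with [MASK]
        elif r < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels

# Toy SMILES tokens for ethanol-like input (character-level for simplicity).
vocab = ["C", "O", "N", "(", ")", "=", "1"]
tokens = ["C", "C", "O"]
inputs, labels = mask_tokens(tokens, vocab, rng=random.Random(0))
```

Keeping some selected tokens unchanged or randomly replaced prevents the encoder from learning that only [MASK] positions matter, which is the usual rationale for this split.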

Protocol 2: Pretraining a Graph Neural Network (GNN) on Molecular Graphs

Objective: To learn transferable representations of molecular structure via self-supervision.

Materials: PCQM4Mv2 dataset (Open Graph Benchmark), OC20 dataset for inorganic materials.

Procedure:

- Graph Construction: For each molecule, generate a graph where nodes are atoms (featurized by atomic number, degree, hybridization) and edges are bonds (featurized by type, conjugation).

- Model Architecture: Implement a 6-layer Graph Attention Network (GATv2) with hidden dimension 256.

- Pretraining Tasks (Multi-Task):

- Context Prediction: Mask a subgraph centered on a node and train the model to predict the surrounding context graph.

- Attribute Masking: Mask 15% of node and edge features (e.g., atom type, bond order) and train the model to reconstruct them.

- Training: Train using the LAMB optimizer (lr=0.001) with gradient clipping for 100 epochs.

Visualization of Workflows

Diagram 1: Foundation Model Pretraining & Active Learning Loop

Diagram 2: Multi-Task Pretraining for Molecular Models

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Foundation Model Pretraining

| Item / Solution | Function in Experiment | Example Provider / Library |

|---|---|---|

| Large-Scale Molecular Datasets | Provides raw, unlabeled data for self-supervised pretraining. | ZINC-22, PubChemQC, PCQM4Mv2 (OGB), OC20 (Open Catalyst Project), The Materials Project |

| Specialized Tokenizers | Converts discrete molecular representations (SMILES, SELFIES) into model-readable tokens. | Hugging Face tokenizers, smiles-tokenizer, SELFIES library |

| Deep Learning Frameworks | Provides flexible, high-performance environments for building and training large models. | PyTorch (with PyTorch Geometric), JAX (with Haiku/Flax), TensorFlow |

| Pretraining Codebases | Offers reproducible implementations of MLM, contrastive learning, and other pretext tasks. | Hugging Face Transformers, Deep Graph Library (DGL), MATERIALS^2 |

| High-Performance Compute (HPC) | Enables training of billion-parameter models on massive datasets via distributed computing. | NVIDIA A100/H100 GPUs, Google Cloud TPU v4 pods, AWS Trainium |

| Chemical Informatics Toolkits | Performs critical data validation, standardization, and featurization during preprocessing. | RDKit, Open Babel, pymatgen (for inorganic materials) |

In an active learning (AL) loop for materials discovery, the acquisition function is the decision-making engine that selects the most informative or promising candidate from a vast, unexplored chemical space for the next round of experimentation or computation. This step directly addresses the exploration-exploitation dilemma. Exploration prioritizes candidates in uncertain regions of the predictive model's space to improve the model globally, while exploitation prioritizes candidates predicted to have high performance (e.g., highest conductivity, strongest binding affinity) based on current knowledge.

Common Acquisition Functions: Quantitative Comparison

The choice of acquisition function depends on the primary campaign objective. The table below summarizes key strategies.

Table 1: Quantitative Comparison of Common Acquisition Functions

| Acquisition Function | Mathematical Form (Typical) | Primary Goal | Key Hyperparameter(s) | Pros in Materials Discovery | Cons in Materials Discovery |

|---|---|---|---|---|---|

| Upper Confidence Bound (UCB) | μ(x) + β * σ(x) | Balanced trade-off | β (controls balance) | Intuitive, tunable balance. | β requires tuning; assumes Gaussian uncertainty. |

| Expected Improvement (EI) | E[max(0, f(x) - f(x*) - ξ)] | Find global optimum | ξ (jitter parameter) | Focuses on beating current best (f(x*)). | Can become too exploitative; sensitive to ξ. |

| Probability of Improvement (PI) | P(f(x) ≥ f(x*) + ξ) | Find better than incumbent | ξ (trade-off parameter) | Simple probabilistic interpretation. | Can be overly greedy, gets stuck in local optima. |

| Entropy Search / Predictive Entropy Search | Maximize reduction in entropy of max location | Map optimum location | Requires complex approximation | Information-theoretic, rigorous. | Computationally expensive for high-dimensional spaces. |

| Thompson Sampling | Sample from posterior & maximize | Balanced trade-off | Posterior distribution | Natural, parallelizable. | Requires tractable posterior sampling; can be noisy. |

| Uncertainty Sampling | σ(x) (or variance) | Pure exploration | None | Simplest; good for initial model training. | Ignores performance; inefficient for optimization. |
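For a Gaussian posterior with mean μ and standard deviation σ, the first three functions in the table have closed forms. The sketch below writes them for a maximization problem, with `best` playing the role of f(x*), the incumbent best observation. The default values of β and ξ are arbitrary illustrations, not recommendations.

```python
import math

def _pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def ucb(mu, sigma, beta=2.0):
    """Upper Confidence Bound: mean plus beta standard deviations."""
    return mu + beta * sigma

def expected_improvement(mu, sigma, best, xi=0.0):
    """E[max(0, f(x) - best - xi)] under a Gaussian posterior."""
    if sigma <= 0.0:
        return max(0.0, mu - best - xi)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _cdf(z) + sigma * _pdf(z)

def probability_of_improvement(mu, sigma, best, xi=0.0):
    """P(f(x) >= best + xi) under a Gaussian posterior."""
    if sigma <= 0.0:
        return 1.0 if mu >= best + xi else 0.0
    return _cdf((mu - best - xi) / sigma)

# A candidate predicted to match the incumbent exactly, with unit uncertainty.
ei_at_incumbent = expected_improvement(mu=1.0, sigma=1.0, best=1.0)
pi_at_incumbent = probability_of_improvement(mu=1.0, sigma=1.0, best=1.0)
ucb_value = ucb(mu=1.0, sigma=0.5, beta=2.0)
```

A candidate whose mean equals the incumbent still has positive EI (σ times the standard normal density at zero) and a 50% probability of improvement, which shows how uncertainty alone can justify an experiment.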

Experimental Protocols for Acquisition Function Evaluation

Protocol 3.1: Benchmarking Acquisition Functions on a Known Dataset

- Objective: To empirically determine the most efficient acquisition function for a given materials property prediction task.

- Materials: A labeled dataset (e.g., OQMD, Materials Project subset) with target properties. A foundation model for materials (e.g., Matformer, Uni-Mol) used as a feature extractor. A probabilistic surrogate model (e.g., Gaussian Process, Probabilistic Neural Network).

- Procedure:

- Initialization: Randomly select a small seed set (e.g., 1% of data) to train the initial surrogate model.

- AL Loop: Run a separate loop for each acquisition function (UCB, EI, PI, etc.), each starting from the same seed set and keeping its own training set and surrogate model. For N iterations (e.g., 100):

- a. Prediction: The surrogate model makes predictions (μ(x)) and uncertainty estimates (σ(x)) on all points in the unlabeled pool.

- b. Candidate Selection: The acquisition function scores the pool and selects the top k candidates (batch size) based on its criterion.

- c. Evaluation: Perform the "oracle" evaluation by retrieving the true property values of the selected candidates from the held-out dataset.

- d. Model Update: Add the newly labeled candidates to the training set and retrain/update the surrogate model.

- Metric Tracking: For each acquisition function, track the best discovered value (exploitation) and the root mean square error (RMSE) of the surrogate model on a fixed test set (exploration/global model accuracy) vs. the number of iterations.

- Analysis: Plot performance curves. The optimal function shows the steepest ascent to the global maximum for optimization tasks, or the fastest decrease in global RMSE for property mapping tasks.
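A compact version of this benchmark is sketched below on a synthetic one-dimensional pool. It makes several simplifying assumptions: the "dataset" is a toy function, the surrogate is a fixed-hyperparameter RBF Gaussian Process rather than one built on foundation-model features, the batch size is 1, and RMSE is computed on the whole pool instead of a separate test set. All names are hypothetical.

```python
import numpy as np

def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two 1-D arrays."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)

def gp_predict(x_tr, y_tr, x_te, noise=1e-3):
    """GP posterior mean and std with a constant mean and fixed noise."""
    K = rbf(x_tr, x_tr) + noise * np.eye(len(x_tr))
    Ks = rbf(x_te, x_tr)
    mean = y_tr.mean()
    mu = mean + Ks @ np.linalg.solve(K, y_tr - mean)
    var = 1.0 - np.einsum("ij,ji->i", Ks, np.linalg.solve(K, Ks.T))
    return mu, np.sqrt(np.clip(var, 1e-12, None))

def run_campaign(x, y, acquire, n_iter=20, n_seed=5, seed=0):
    """Simulate one AL campaign; return best-so-far and RMSE curves."""
    rng = np.random.default_rng(seed)
    labeled = [int(i) for i in rng.choice(len(x), size=n_seed, replace=False)]
    best_curve, rmse_curve = [], []
    for _ in range(n_iter):
        pool = np.array([i for i in range(len(x)) if i not in labeled])
        mu, sigma = gp_predict(x[labeled], y[labeled], x[pool])
        scores = acquire(mu, sigma, y[labeled].max())
        labeled.append(int(pool[np.argmax(scores)]))  # oracle lookup
        mu_all, _ = gp_predict(x[labeled], y[labeled], x)
        best_curve.append(float(y[labeled].max()))
        rmse_curve.append(float(np.sqrt(np.mean((mu_all - y) ** 2))))
    return best_curve, rmse_curve

def ucb(mu, sigma, best, beta=2.0):
    return mu + beta * sigma

def uncertainty(mu, sigma, best):
    return sigma

x = np.linspace(0.0, 1.0, 100)
y = np.sin(6.0 * x) + 0.5 * x  # synthetic "true property"

results = {name: run_campaign(x, y, fn)
           for name, fn in {"UCB": ucb, "Uncertainty": uncertainty}.items()}
```

Plotting the two curves per function against iteration gives the comparison described in the Analysis step; averaging over several seeds would make the ranking of functions more reliable than a single run.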

Protocol 3.2: Deployment in a Virtual Screening Campaign for Organic Photovoltaics (OPVs)

- Objective: To discover novel donor polymer candidates with a predicted power conversion efficiency (PCE) > 15%.

- Materials: A generative chemical language model (e.g., ChemGPT) fine-tuned on polymer SMILES. A QSPR model for PCE prediction with uncertainty estimation capability.

- Procedure:

- Candidate Generation: Use the generative model to create an initial diverse pool of 10,000 virtual polymer candidates.

- Surrogate Model: Train an initial Gaussian Process surrogate model on a small dataset of known polymer-PCE pairs.

- Informed AL Loop:

- a. Predict PCE (μ) and uncertainty (σ) for the entire virtual pool.

- b. Acquisition: Use a UCB function with a scheduled β. Initially, β is set high to favor exploration (σ) and diversify the training data for the surrogate model. Every 20 iterations, β is reduced by 10% to gradually shift focus towards exploitation (μ) of high-PCE regions.

- c. Select the top 50 candidates per batch.

- d. Label the selected candidates with the QSPR model, which stands in for the oracle here (in a real loop these labels would come from DFT validation), and update the surrogate model with the new data.

- Termination: Stop after 200 iterations or when five consecutive batches yield no improvement in the top predicted PCE.
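The β schedule and the termination rule are simple enough to write down directly. In this sketch the starting value β0 = 3.0 is an assumption (the protocol only says "high"), and the stopping check treats "no improvement" as the best value of the last five batches not exceeding the best seen before them.

```python
def beta_schedule(iteration, beta0=3.0, decay=0.9, every=20):
    """Reduce beta by 10% every 20 iterations (stepwise decay)."""
    return beta0 * decay ** (iteration // every)

def should_stop(best_per_batch, patience=5, max_iter=200):
    """Stop at max_iter or after `patience` batches without improvement."""
    if len(best_per_batch) >= max_iter:
        return True
    if len(best_per_batch) <= patience:
        return False
    reference = max(best_per_batch[:-patience])
    return max(best_per_batch[-patience:]) <= reference

# Top predicted PCE (%) per batch for two hypothetical campaigns.
stalled = [10.0, 11.0, 12.0, 12.0, 12.0, 12.0, 12.0, 12.0]
improving = [10.0, 11.0, 12.0, 13.0, 14.0, 15.0, 16.0]

betas = [beta_schedule(t) for t in (0, 19, 20, 40)]
```

After 200 iterations this schedule has applied nine reductions, leaving β at about 39% of its starting value (0.9^9 ≈ 0.387), so the campaign never becomes purely exploitative.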

Visualization of Acquisition Logic and Active Learning Workflow

Diagram 1: Active Learning Loop with Acquisition Function

Diagram 2: Acquisition Function Decision Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Acquisition Function Design

| Item / Tool | Function in Acquisition Design | Example Solutions / Libraries |

|---|---|---|

| Probabilistic Surrogate Model | Provides the predictive mean (μ) and uncertainty estimate (σ) essential for most acquisition functions. | Gaussian Process (GPyTorch, GPflow), Bayesian Neural Networks (TensorFlow Probability, Pyro), Ensemble Models (scikit-learn). |

| Acquisition Function Library | Pre-implemented, optimized functions for easy benchmarking and deployment. | BoTorch (built on PyTorch), Ax (by Meta), Scikit-Optimize, Trieste. |

| Chemical Foundation Model | Generates meaningful representations or novel candidates for the unlabeled pool. | Matformer (materials), ChemBERTa/ChemGPT (molecules), Uni-Mol (molecules & complexes). |

| High-Throughput Simulation Code | Acts as the "Oracle" in virtual screening to label acquired candidates with high-fidelity properties. | DFT codes (VASP, Quantum ESPRESSO), Molecular Dynamics (LAMMPS, GROMACS), Docking (AutoDock Vina, Gnina). |

| Hyperparameter Optimization Suite | For tuning acquisition parameters (e.g., β in UCB, ξ in EI) and model parameters. | Optuna, Ray Tune, Hyperopt. |

| Visualization Dashboard | To track the AL loop progress, acquisition scores, and model performance metrics in real-time. | Custom Plotly/Dash apps, TensorBoard, Weights & Biases (W&B). |

Application Notes

In the context of active learning (AL) for materials discovery, Step 4 is the critical engine that transforms predictions from a foundation model into validated scientific knowledge. This step closes the loop by feeding high-quality, experimentally confirmed data back into the model for retraining, creating a virtuous cycle of increasingly accurate predictions.

- Primary Function: To validate, challenge, and refine the predictions or hypotheses generated by the AL-guided foundation model (Steps 1-3). This step grounds the digital discovery process in physical reality.

- Core Challenge: Designing feedback loops that are high-throughput, reliable, and yield data in a format directly usable for model improvement. Automation and data standardization are paramount.

- Two Principal Modalities: The implementation differs based on the discovery domain:

- Robotics / Experimental Feedback: For molecular synthesis, formulation, or property measurement (e.g., battery electrolyte conductivity, catalyst activity).

- Density Functional Theory (DFT) / Simulation Feedback: For initial screening of stability, electronic properties, or binding energies before resource-intensive experimental validation.

Table 1: Comparison of Feedback Loop Modalities

| Feature | Robotic Experimental Loop | DFT Simulation Loop |

|---|---|---|

| Primary Goal | Physical synthesis & measurement of target properties. | In silico calculation of quantum mechanical properties. |

| Throughput | Medium-High (10s-100s samples/day). | Very High (100s-1000s of candidates/day). |

| Cost per Sample | High (reagents, equipment, labor). | Low (computational resources). |

| Data Fidelity | Ground Truth (real-world noise, defects, conditions). | Theoretical Approximation (accuracy depends on functional, error ~1-10%). |

| Typical Data Output | Spectra, chromatograms, electrochemical curves, numerical performance metrics. | Total energy, band gap, adsorption energy, reaction pathways, vibrational frequencies. |

| Key Limitation | Scalability of synthesis, characterization bottlenecks. | Systematic errors of DFT, lack of dynamics/solvation effects (unless using MD). |

| Role in AL Cycle | Final validation and generation of training data with highest impact. | Rapid pre-screening to filter implausible candidates, enriching the pool for experiment. |

Protocols for Feedback Loop Integration

Protocol 2.1: High-Throughput Robotic Synthesis and Characterization for Organic Molecule Libraries

Objective: To autonomously synthesize and characterize a batch of 96 organic small molecule candidates predicted by an AL foundation model to have desirable properties (e.g., photovoltaic efficiency, ligand binding).

Materials & Reagents:

- Reagent Solutions: Pre-dissolved building blocks (e.g., aryl halides, boronic acids, catalysts) in DMSO or appropriate solvent, prepared by liquid handling robot.

- Solid Dispenser: For precise weighing of solid reagents/catalysts.

- Liquid Handling Robot: Equipped with temperature-controlled deck and orbital shaker.

- 96-Well Microplate: Reaction plate with seal.

- HPLC-MS System: For reaction monitoring and purity analysis.

- Plate Reader/Spectrophotometer: For UV-Vis or fluorescence-based property screening.

Methodology:

- Workflow Initialization: The AL campaign manager exports a .csv file of target molecules and required reagents to the robotic scheduling software.

- Automated Liquid Handling:

  - The liquid handler aspirates specified volumes of stock reagent solutions and dispenses them into designated wells of the 96-well reaction plate.

  - A solid dispenser adds precise milligram quantities of catalyst/powdered reagents.

- Reaction Execution: The plate is sealed, moved to a heated orbital shaker on the deck, and agitated at the specified temperature (e.g., 80°C) and duration (e.g., 16h).

- Quenching & Sampling: The robot adds a quenching solvent to each well. An aliquot from each well is transferred to a separate analysis plate.

- High-Throughput Characterization:

  - The analysis plate is injected via an autosampler into an HPLC-MS system running a fast generic gradient.

  - Data collected: UV chromatogram (210-400 nm), mass spectrum (ESI+/ESI-), and estimated purity (%).

- Primary Property Screening: For optoelectronic materials, the original reaction plate may be diluted, and absorption/emission spectra collected via a plate reader.

- Data Parsing & Feedback: Custom scripts parse the HPLC-MS and spectral data, extracting key metrics (e.g., success/failure flag, yield estimate, purity, λ_max). This structured data table is appended to the master dataset for AL model retraining.
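
The final parsing step can be sketched as follows. This is a hedged illustration rather than the actual parser: it assumes peak lists and purity values have already been extracted from the instrument files, and the field names, the m/z tolerance, and the purity threshold are hypothetical choices. Note that failed wells are appended too, since negative results are informative for retraining.

```python
import csv
from pathlib import Path

# Hypothetical column layout for the master dataset.
FIELDS = ["well", "target_mz", "found", "purity_pct", "lambda_max_nm", "success"]


def summarize_well(well, target_mz, observed_mz, purity_pct,
                   lambda_max_nm=None, mz_tol=0.5, min_purity=80.0):
    """Reduce one well's HPLC-MS and spectral readout to a structured record."""
    # Product is "found" if any observed ion matches the target within tolerance.
    found = any(abs(mz - target_mz) <= mz_tol for mz in observed_mz)
    return {
        "well": well,
        "target_mz": target_mz,
        "found": found,
        "purity_pct": purity_pct,
        "lambda_max_nm": lambda_max_nm,
        "success": found and purity_pct >= min_purity,
    }


def append_to_master(rows, path):
    """Append records to the master CSV, writing the header only once."""
    path = Path(path)
    write_header = not path.exists()
    with path.open("a", newline="") as handle:
        writer = csv.DictWriter(handle, fieldnames=FIELDS)
        if write_header:
            writer.writeheader()
        writer.writerows(rows)
```

A batch would then be processed by calling `summarize_well` once per well and passing the resulting list to `append_to_master` before the next retraining round.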

Diagram 1: Robotic Experimental Feedback Loop

Protocol 2.2: High-Throughput DFT Simulation Workflow for Inorganic Solid-State Materials

Objective: To computationally screen 500 candidate inorganic compositions for thermodynamic stability and band gap, as proposed by an AL model for photocatalysts.

Materials & Reagents (Computational):

- Workflow Manager: FireWorks, AiiDA, or custom Python scheduler.

- Computational Resources: HPC cluster with VASP, Quantum ESPRESSO, or similar DFT code installed.

- Initial Structures: CIF files from materials databases (e.g., Materials Project, OQMD) or generated by symmetry enumeration tools.

- Pseudopotential Libraries: PAW PBE or similar.

Methodology:

- Workflow Initialization: The AL model outputs a list of target compositions and suggested prototype structures. A workflow manager (e.g., AiiDA) creates a directed acyclic graph (DAG) of calculations for each candidate.

- Structure Relaxation:

  - Geometry Optimization: Perform a full relaxation of ionic positions and cell vectors using a standard GGA functional (e.g., PBE) with appropriate k-point density and energy cutoff.

  - Convergence Check: Ensure forces and stresses are below predefined thresholds (e.g., forces < 0.01 eV/Å).

- Property Calculation:

  - Stability Analysis: Calculate the formation energy (ΔH_f) relative to elemental phases. For complex systems, compute the energy above the convex hull.

  - Electronic Structure: Perform a static calculation on the relaxed structure. Compute the electronic density of states (DOS) and the band gap (often requiring hybrid functionals like HSE06 for accuracy).

  - Optional: Calculate preliminary properties like bulk modulus or vibrational modes.

- Data Extraction & Parsing: Post-processing scripts automatically extract key numerical values from the output files: total energy, ΔH_f, band gap, space group, volume.

- Feedback Generation: The parsed data is formatted into a table. Candidates that are thermodynamically stable (negative formation energy and, where computed, an energy on or close to the convex hull) and have band gaps within the target range (e.g., 1.5-3.0 eV) are flagged as "successful." All data, including failed candidates, is fed back to the AL model, which learns to correlate compositional/structural features with stability and band gap.
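
The stability and band gap checks in the final step can be sketched in a few lines. This is a minimal illustration assuming total and elemental reference energies in eV have already been parsed. The function names are hypothetical, and the optional energy-above-hull cutoff (`hull_tol`, set to 0.05 eV/atom here) is an assumption added for illustration, since a negative formation energy alone does not guarantee stability against competing phases.

```python
def formation_energy_per_atom(total_energy, composition, elemental_energies):
    """Formation energy per atom: (E_total - sum(n_i * E_i)) / sum(n_i).

    total_energy       -- DFT total energy of the compound cell (eV)
    composition        -- dict mapping element symbol to atom count in the cell
    elemental_energies -- dict mapping element symbol to reference energy (eV/atom)
    """
    n_atoms = sum(composition.values())
    reference = sum(n * elemental_energies[el] for el, n in composition.items())
    return (total_energy - reference) / n_atoms


def flag_candidate(delta_hf, band_gap, gap_window=(1.5, 3.0),
                   e_above_hull=None, hull_tol=0.05):
    """Return True if the candidate passes the stability and band gap criteria."""
    stable = delta_hf < 0.0
    if e_above_hull is not None:
        # Stricter check when convex-hull data are available (assumed cutoff).
        stable = stable and e_above_hull <= hull_tol
    low, high = gap_window
    return stable and low <= band_gap <= high
```

Candidates that fail either check are still worth returning to the model with their computed values, so the flag is best stored as one column alongside the raw numbers rather than used as a filter.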

Diagram 2: DFT Simulation Feedback Loop

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 2: Key Resources for Integrated Feedback Loops

| Item | Function | Example in Protocol |

|---|---|---|

| Liquid Handling Robot | Automates precise dispensing of liquid reagents, enabling reproducible, high-throughput synthesis in microtiter plates. | Protocol 2.1: Dispensing stock solutions for Suzuki-Miyaura coupling reactions. |

| Solid Dispensing Robot | Accurately weighs and dispenses milligram to gram quantities of solid reagents (catalysts, bases, substrates). | Protocol 2.1: Adding Pd catalyst and phosphate base to reaction wells. |

| Automated Synthesis Platform | Integrated system combining liquid handling, solid dispensing, and on-deck reactors (shaker, heater, chiller) for end-to-end reaction execution. | Protocol 2.1: Performing the entire synthesis workflow from a digital recipe. |

| HPLC-MS with Autosampler | Provides unambiguous analysis of reaction outcome: identity confirmation (MS) and purity assessment (UV). | Protocol 2.1: Analyzing all 96 wells of a reaction plate overnight. |

| Workflow Management Software (AiiDA/FireWorks) | Automates, tracks, and reproduces complex computational workflows, managing job submission, data retrieval, and provenance. | Protocol 2.2: Managing 500+ interdependent DFT relaxation and property calculations. |

| High-Performance Computing (HPC) Cluster | Provides the massive parallel computing power required for performing hundreds of DFT calculations within a feasible timeframe. | Protocol 2.2: Executing all DFT simulations. |

| Materials Database (MP, OQMD) | Source of initial crystal structures for DFT calculations and a reference for stability (convex hull) and property validation. | Protocol 2.2: Retrieving prototype structures for new compositions. |

| Automated Data Parser (Custom Scripts) | The critical glue: extracts structured numerical data and metadata from raw instrument/calculation files, formatting it for AL model consumption. | Protocol 2.1 & 2.2: Converting .RAW MS files and VASP output files (e.g., OUTCAR) into .csv files of key metrics. |

Application Note 1: Active Learning-Driven Discovery of Non-Precious Metal ORR Catalysts

Context: This protocol applies an active learning (AL) loop with a graph neural network (GNN) foundation model to identify high-performance, non-precious metal catalysts for the oxygen reduction reaction (ORR) in fuel cells, reducing reliance on platinum-group metals.

Key Quantitative Data: Table 1: Performance Metrics of Top AL-Identified Catalytic Formulations vs. Baseline Pt/C

| Catalyst Formulation | Half-Wave Potential (E1/2 vs. RHE) | Kinetic Current Density (jk at 0.9V) [mA cm⁻²] | Mass Activity [A mg⁻¹] | Stability (% E1/2 loss after 10k cycles) |

|---|---|---|---|---|

| Pt/C (Baseline) | 0.91 V | 5.2 | 0.45 | 12% |

| Fe–N–C (AL-3) | 0.88 V | 4.8 | 0.32 | 8% |

| Co–N–C–S (AL-12) | 0.90 V | 5.1 | 0.41 | 5% |

| Fe/Co–N–C (AL-27) | 0.92 V | 6.3 | 0.52 | 3% |

Experimental Protocol: High-Throughput Synthesis & Electrochemical Screening

- Precursor Library Preparation: Using an automated liquid handler, prepare 96-well plates with varying molar ratios of metal salts (Fe, Co), nitrogen-containing ligands (1,10-phenanthroline, bipyridine), and heteroatom dopant precursors (thiourea, phytic acid) in methanol/water solvent.

- Pyrolysis & Activation: Transfer plates to a multi-channel tube furnace. Perform pyrolysis under N₂ at 800°C for 2 hours, followed by a second pyrolysis step under NH₃ at 600°C for 1 hour to increase nitrogen content and porosity. Cool under inert atmosphere.

- Ink Formulation & Electrode Preparation: Automatically mix 2 mg of each catalyst powder with 1 mL of Nafion/ethanol/water solution (0.025 wt% Nafion) and sonicate for 30 min. Using an automated spray coater, deposit thin-film layers onto pre-polished glassy carbon rotating disk electrodes (RDEs) to a uniform loading of 0.6 mg cm⁻².

- High-Throughput Electrochemical Testing: Mount RDEs on a multi-channel potentiostat in a 0.1 M HClO₄ electrolyte saturated with O₂. Perform cyclic voltammetry (CV) from 0.05 to 1.0 V vs. RHE at 50 mV s⁻¹. Record ORR polarization curves from 0.2 to 1.0 V at 10 mV s⁻¹ with rotation at 1600 rpm. Key metrics (E1/2, jk) are automatically extracted and logged to a database for AL model re-training.
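
The automatic extraction of E1/2 and jk in the last step can be sketched as below. This is an illustrative version under several assumptions: the polarization curve is supplied with potentials in ascending order and cathodic currents in mA cm⁻², the diffusion-limited current is approximated by the largest current magnitude in the scan, and jk is obtained from the Koutecky-Levich mass-transport correction, jk = j·jL / (jL − j). Background and iR corrections are omitted, and the function names are hypothetical.

```python
def interpolate(x, xs, ys):
    """Linearly interpolate y at x; xs must be sorted in ascending order."""
    for i in range(len(xs) - 1):
        x0, x1 = xs[i], xs[i + 1]
        if x0 <= x <= x1 and x1 != x0:
            return ys[i] + (ys[i + 1] - ys[i]) * (x - x0) / (x1 - x0)
    raise ValueError("x lies outside the measured potential range")


def orr_metrics(potentials, currents, e_kinetic=0.9):
    """Return (E_half, j_k, j_lim) from a single ORR polarization curve."""
    mags = [abs(j) for j in currents]
    j_lim = max(mags)  # approximation of the diffusion-limited plateau
    half = 0.5 * j_lim

    # Half-wave potential: first crossing of |j| = j_lim / 2 on the falling edge.
    e_half = None
    for i in range(len(potentials) - 1):
        m0, m1 = mags[i], mags[i + 1]
        if m0 > m1 and m0 >= half >= m1:
            frac = (m0 - half) / (m0 - m1)
            e_half = potentials[i] + frac * (potentials[i + 1] - potentials[i])
            break

    # Kinetic current density at e_kinetic via mass-transport correction.
    j_meas = interpolate(e_kinetic, potentials, mags)
    if j_meas >= j_lim:
        raise ValueError("current at e_kinetic is already diffusion-limited")
    j_k = j_meas * j_lim / (j_lim - j_meas)
    return e_half, j_k, j_lim
```

In practice the plateau estimate and any smoothing would need to be adapted to the noise level of the multi-channel potentiostat data before the values are logged for retraining.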

Diagram: Active Learning Loop for ORR Catalyst Discovery

The Scientist's Toolkit: Table 2: Key Research Reagent Solutions for ORR Catalyst Screening

| Reagent/Material | Function/Description |

|---|---|

| Fe(acac)₃ / Co(acac)₂ | Metal precursors for M–N–C site formation. |

| 1,10-Phenanthroline | Nitrogen-rich chelating ligand, promotes M–N₄ coordination. |

| ZIF-8 (Baseline Support) | Metal-organic framework template for high surface area carbon. |

| 0.1 M HClO₄ Electrolyte | Standard acidic medium for PEMFC-relevant ORR testing. |

| Nafion 117 Solution (5 wt%) | Proton conductor for catalyst ink, ensures ionic conductivity. |

| Glassy Carbon RDE (5mm dia.) | Standard substrate for thin-film electroanalysis. |

Application Note 2: Active Learning for Generative Design of Solid-State Battery Electrolytes

Context: This protocol details the use of a generative AL framework, combining a variational autoencoder (VAE) foundation model with molecular dynamics (MD) simulations, to design novel Li-ion solid polymer electrolytes (SPEs) with high ionic conductivity (>10⁻³ S cm⁻¹ at 25°C) and wide electrochemical stability window (>4.5 V).

Key Quantitative Data: Table 3: Properties of AL-Generated Solid Polymer Electrolyte Candidates

| Polymer ID | Backbone Structure | Ionic Conductivity @ 25°C [S cm⁻¹] | Li⁺ Transference Number (t₊) | Electrochemical Window | Predicted σ (MD) [S cm⁻¹] |

|---|---|---|---|---|---|

| PEO (Baseline) | Poly(ethylene oxide) | 2.1 × 10⁻⁶ | 0.18 | 3.9 V | 5.8 × 10⁻⁶ |

| AL-SPE-07 | Poly(vinylene carbonate-co-EO) | 1.4 × 10⁻³ | 0.63 | 4.8 V | 2.1 × 10⁻³ |

| AL-SPE-15 | Nitrile-grafted polysiloxane | 8.9 × 10⁻⁴ | 0.71 | 5.1 V | 7.5 × 10⁻⁴ |

| AL-SPE-22 | MOF-linked polymer network | 3.2 × 10⁻³ | 0.52 | 4.6 V | 2.8 × 10⁻³ |

Experimental Protocol: Synthesis and Characterization of SPEs

- Polymer Synthesis via Ring-Opening Polymerization (ROP): In an Ar-filled glovebox, combine the AL-specified monomer (e.g., vinylene carbonate) with ethylene oxide (EO) at the prescribed molar ratio. Initiate polymerization using 0.1 mol% Sn(Oct)₂ catalyst at 80°C for 48 hours. Terminate with methanol, precipitate in diethyl ether, and dry under vacuum.

- Electrolyte Membrane Fabrication: Dissolve 200 mg of the synthesized polymer and 40 mg of LiTFSI salt (EO:Li = 15:1) in 5 mL anhydrous acetonitrile. Cast the solution onto a PTFE dish. Evaporate slowly under N₂, then vacuum dry at 80°C for 24 hours to form a freestanding membrane (~100 μm thick).

- Electrochemical Impedance Spectroscopy (EIS): Sandwich the SPE membrane between two stainless steel (SS) blocking electrodes in a CR2032 coin cell configuration. Measure impedance from 1 MHz to 0.1 Hz at 25°C using a potentiostat. The bulk resistance (R_b) is obtained from the high-frequency intercept on the real axis. Calculate ionic conductivity: σ = L / (R_b × A), where L is the membrane thickness and A is the electrode area.

- Li⁺ Transference Number (t₊) Measurement: Assemble a Li | SPE | Li symmetric cell. Measure the initial interfacial resistance (R₀) by EIS, then apply a small DC polarization (ΔV = 10 mV) and record the initial current (I₀). Monitor until the steady-state current (Iₛₛ) is reached, then measure the steady-state interfacial resistance (Rₛₛ). Calculate t₊ = [Iₛₛ(ΔV - I₀R₀)] / [I₀(ΔV - IₛₛRₛₛ)].