Academic vs. Industrial HTE Platforms: A Comparative Guide for Drug Discovery & Development

Abstract

This article provides a comprehensive comparison of high-throughput experimentation (HTE) platforms in academic and industrial settings, tailored for researchers and drug development professionals. It explores their foundational philosophies, core methodologies, and unique capabilities. We delve into strategic applications, common troubleshooting scenarios, and key validation metrics, offering insights to help scientists navigate and select the optimal platform for their specific research and development goals, from early discovery to clinical candidate optimization.

Core Philosophies & Design: Understanding the DNA of Academic and Industrial HTE Systems

High-throughput experimentation (HTE) platforms represent a paradigm shift in scientific investigation, accelerating the testing of hypotheses and materials. Their application, however, diverges profoundly based on the mission of the implementing organization. In academia, HTE is an engine for Fundamental Discovery, probing the mechanisms of biology, chemistry, and physics to expand human knowledge. In the pharmaceutical industry, HTE is a tool for Pipeline Value Creation, designed to de-risk, optimize, and accelerate the delivery of therapeutic assets to patients and shareholders. This whitepaper details the technical manifestations of these distinct missions.

Core Mission Comparison

The following table contrasts the defining characteristics of HTE deployment in both spheres.

Table 1: Mission Parameters of Academic vs. Industrial HTE

| Parameter | Academia (Fundamental Discovery) | Industry (Pipeline Value Creation) |

|---|---|---|

| Primary Driver | Novel biological/chemical insight, publication, grant funding. | Project milestones, return on investment (ROI), pipeline velocity. |

| Hypothesis Scope | Broad, exploratory. "What is the mechanism of this phenotype?" | Narrow, focused. "Which of these 10^6 compounds inhibits target X with IC50 <100 nM and low hERG liability?" |

| Experimental Design | Iterative, open-ended, driven by unexpected results. | Highly structured, stage-gated, with predefined success criteria (e.g., IC50, selectivity index). |

| Key Performance Indicators (KPIs) | Publication impact factor, citations, new grants awarded. | Compound attrition rate, cycle time per design-make-test-analyze (DMTA) loop, clinical candidate nomination rate. |

| Risk Tolerance | High. Negative or complex results can be valuable. | Low. Failures are costly; the goal is predictable, interpretable data to guide decisions. |

| Data Emphasis | Depth, mechanistic understanding, reproducibility for the scientific community. | Speed, reproducibility under GxP-like rigor, integration into predictive models (QSAR, ML). |

| Technology Adoption | Early adoption of novel, sometimes unproven, platforms for capability. | Adoption of robust, validated, and scalable platforms with strong technical support. |

Case Study: HTE in Kinase Drug Discovery

The field of kinase inhibitor development provides a clear illustration of these divergent missions.

Fundamental Discovery (Academic Mission): An academic lab uses an HTE phenotypic screen to identify novel kinases involved in an obscure cellular process (e.g., non-canonical autophagy). The goal is to map a new signaling pathway.

Pipeline Value Creation (Industrial Mission): A biotech company uses an HTE biochemical screen against a well-validated oncology target (e.g., EGFR T790M) to identify a novel chemical series with a differentiated intellectual property (IP) position and predicted blood-brain barrier penetration.

Experimental Protocols

Protocol A: Academic HTE for Pathway Discovery (Chemical Genetics)

- Library: Use a diverse library of ~5,000 kinase inhibitors with annotated targets (a "toolbox" library).

- Assay: Conduct a high-content imaging screen of a GFP-LC3 reporter cell line under nutrient-starvation conditions. Readout: autophagosome count per cell.

- Primary Screen: Plate cells in 384-well format. Dispense compounds via acoustic droplet ejection (final concentration 1 µM). Incubate for 24h. Fix, stain nuclei, and image.

- Analysis: Identify "hits" that significantly increase or decrease autophagosome counts beyond 3 standard deviations from the median.

- Target Deconvolution: Use affinity purification probes (e.g., kinobeads) coupled with mass spectrometry to identify the physical protein targets of hits whose target annotation is missing or incomplete.

- Validation: Employ CRISPRi knockdown or CRISPR-Cas9 knockout of putative target kinases to confirm phenotypic mimicry.
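The hit-calling rule in the Analysis step of Protocol A can be sketched as follows. This is a minimal illustration, not the protocol's actual pipeline: the function name `call_hits` and the well values are hypothetical, and the standard deviation is estimated robustly from the median absolute deviation (an assumption, chosen so that strong hits do not inflate the spread estimate).

```python
import numpy as np

def call_hits(counts, threshold=3.0):
    """Flag wells whose autophagosome count deviates from the plate median
    by more than `threshold` robust standard deviations."""
    counts = np.asarray(counts, dtype=float)
    median = np.median(counts)
    # Assumption: 1.4826 * MAD approximates the SD for normally distributed data.
    robust_sd = 1.4826 * np.median(np.abs(counts - median))
    if robust_sd == 0:
        raise ValueError("no spread in the data; z-scores are undefined")
    z = (counts - median) / robust_sd
    return z, np.abs(z) > threshold

# Synthetic per-well autophagosome counts (hypothetical values).
wells = [10, 11, 9, 10, 12, 8, 10, 11, 9, 30, 1]
z_scores, is_hit = call_hits(wells)
hit_indices = np.where(is_hit)[0].tolist()
```

In this synthetic plate, only the two extreme wells (one strongly increased, one strongly decreased) are flagged, which matches the protocol's interest in both inducers and suppressors of autophagy.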

Protocol B: Industrial HTE for Lead Optimization (SAR Expansion)

- Library: A focused library of ~50,000 analogs derived from a confirmed "hit" series from a primary screen.

- Assay: A panel of HTE biochemical assays: primary target (EGFR T790M) potency, anti-target (hERG, CYP3A4) liability, and selectivity against a panel of 50 representative kinases.

- Primary Screen: Run all compounds at a single concentration (10 µM) in 1536-well format for the primary potency assay. Compounds showing >70% inhibition advance.

- Dose-Response: For advancing compounds, perform 10-point dose-response curves in duplicate for all assay panels.

- Data Integration: Data is automatically uploaded to a centralized database. Potency (IC50), selectivity (Gini score), and early DMPK parameters (e.g., microsomal stability) are modeled simultaneously to guide the next round of chemical synthesis.
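The Gini selectivity score mentioned in the Data Integration step can be computed from panel data in a few lines. The sketch below is one common formulation, assuming single-concentration percent-inhibition values across the kinase panel as input; the function name and the example panels are hypothetical.

```python
import numpy as np

def gini_selectivity(percent_inhibition):
    """Gini coefficient of inhibition across a kinase panel.
    Values near 0 mean equal inhibition of every kinase (promiscuous);
    values near 1 mean inhibition concentrated on few kinases (selective)."""
    x = np.sort(np.clip(np.asarray(percent_inhibition, dtype=float), 0, None))
    n = x.size
    total = x.sum()
    if n == 0 or total == 0:
        raise ValueError("need at least one kinase with non-zero inhibition")
    ranks = np.arange(1, n + 1)
    # Closed form of 1 - 2 * (area under the Lorenz curve) for sorted data.
    return 2.0 * np.sum(ranks * x) / (n * total) - (n + 1) / n

# Hypothetical 50-kinase panels.
promiscuous = [50.0] * 50            # every kinase inhibited equally
selective = [0.0] * 49 + [100.0]     # a single kinase inhibited
g_promiscuous = gini_selectivity(promiscuous)
g_selective = gini_selectivity(selective)
```

For a panel of n kinases the score ranges from 0 to (n - 1)/n, so scores are only comparable between compounds profiled against the same panel.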

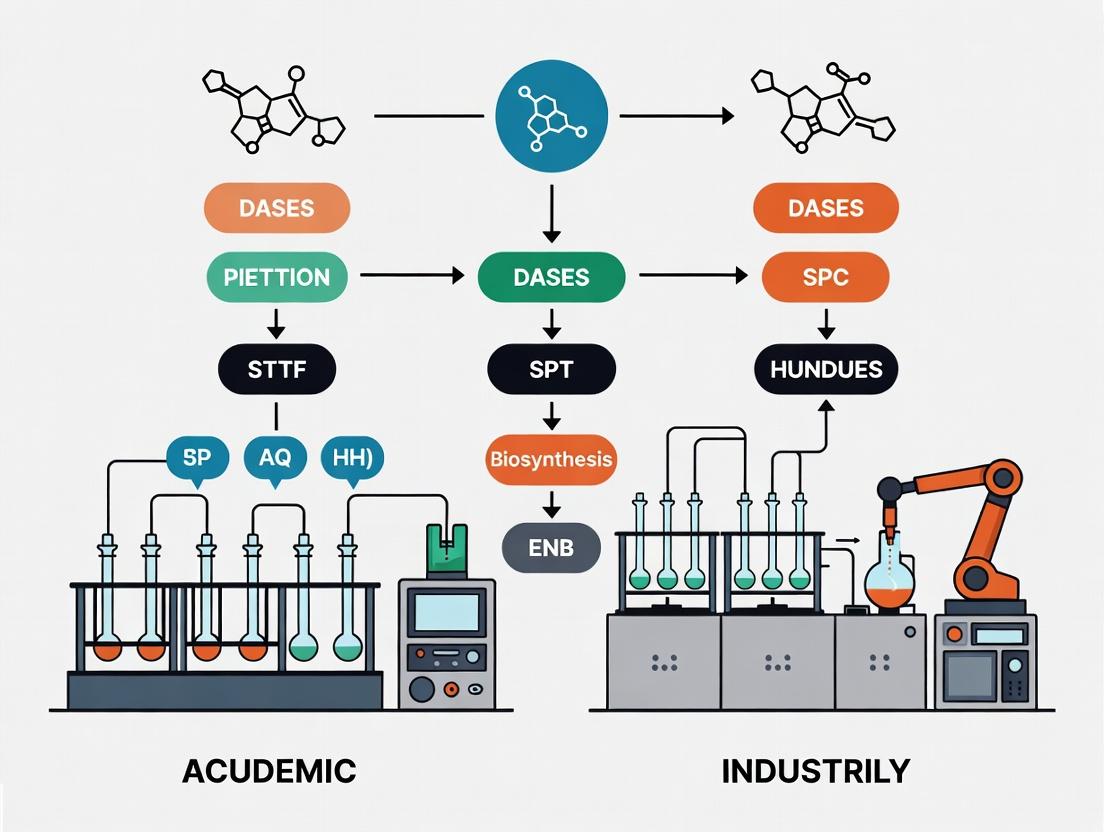

Visualization of Workflows

The Scientist's Toolkit: Essential Reagents & Platforms

Table 2: Key Research Reagent Solutions for Kinase-Focused HTE

| Item | Function in HTE | Typical Use Case |

|---|---|---|

| Kinase-Targeted DNA-Encoded Library (DEL) | Enables screening of billions of compounds in a single tube by tagging each unique chemical structure with a DNA barcode. | Industry: Ultra-high-throughput hit discovery against purified kinase targets. |

| Phospho-Specific Antibodies & Luminescent Probes | Detect phosphorylation events (e.g., p-ERK, p-AKT) in cell-based assays as a proximal readout of kinase activity. | Academia/Industry: High-content or plate-based signaling pathway analysis. |

| Cellular Thermal Shift Assay (CETSA) Kits | Measure target engagement in cells by detecting ligand-induced protein thermal stability shifts. | Industry: Early confirmation of on-target activity; Academia: Target deconvolution. |

| CRISPRi/a Pooled Libraries | Genetically repress (CRISPRi) or activate (CRISPRa) thousands of genes (including kinases) in a pooled format for phenotypic screening. | Academia: Systematic identification of kinase regulators in a biological process. |

| Microfluidic Cytometry & Imaging Platforms | Analyze single-cell phenotypes (viability, signaling, morphology) at very high speed and throughput. | Both: Deep phenotypic profiling of compound or genetic perturbations. |

| Cloud-Based SAR Analysis Software | Platforms for visualizing structure-activity relationships, modeling ADMET properties, and collaborative data sharing. | Industry: Critical for integrating HTE data into the DMTA cycle and decision-making. |

Data Outputs and Translation

Table 3: Comparative Output Metrics from Recent HTE Campaigns (Representative)

| Output Metric | Academia (Fundamental Discovery) | Industry (Pipeline Value Creation) |

|---|---|---|

| Throughput (compounds/week) | Moderate (1,000 - 10,000) | Very High (100,000 - 1,000,000+) |

| Primary Data Type | High-content images, genomic/proteomic sequencing data. | Numerical IC50/EC50, selectivity ratios, DMPK parameters. |

| Validation Standard | Orthogonal assays (genetic rescue, biophysical binding). | In vivo pharmacokinetic/pharmacodynamic (PK/PD) efficacy. |

| Public Data Repository | Often deposited in public databases (e.g., PubChem, GEO). | Held as proprietary, confidential business information. |

| Time to Public Dissemination | 12-24 months (via publication). | 3-10 years (via patent filings or conference abstracts). |

| Ultimate "Product" | Peer-reviewed paper, open-source dataset, trained researchers. | IND application, clinical candidate, new therapy. |

While the missions of academia and industry differ in immediate objectives—knowledge generation versus asset generation—they are symbiotically linked. Academic HTE identifies novel targets and biological principles, feeding the industry pipeline with new opportunities. Industrial HTE, in turn, validates these discoveries in the crucible of therapeutic development and funds future academic research through collaborations and licensing. The most effective modern research ecosystems are those that facilitate the flow of ideas, technologies, and talent across this discovery-value interface, leveraging the unique strengths of HTE in both realms to advance science and medicine.

The evolution of high-throughput experimentation (HTE) platforms is characterized by a fundamental tension between academic and industrial research paradigms. Academic pursuits often prioritize flexibility and open-source development to enable novel, exploratory science. In contrast, industrial drug development necessitates rigorous standardization and GxP (Good Practice) compliance to ensure patient safety, data integrity, and regulatory approval. This whitepaper explores the architectural blueprints required to navigate this dichotomy, providing a technical guide for deploying HTE systems that can bridge both worlds.

Core Architectural Principles: A Comparative Analysis

Table 1: Core Architectural Principles Comparison

| Principle | Open-Source/Flexible Approach | Standardized/GxP-Compliant Approach |

|---|---|---|

| Primary Goal | Maximize innovation, adaptability, and collaboration. | Ensure reproducibility, traceability, and patient safety. |

| Code & Hardware | Open-source licenses (e.g., Apache 2.0, GPL); modular, DIY components. | Validated, version-controlled commercial or internally developed systems. |

| Data Management | Flexible schemas (e.g., NoSQL); open formats (e.g., .h5). | Fixed schemas with audit trails; ALCOA+ principles; often SQL-based. |

| Protocol Execution | Scriptable, user-defined workflows (e.g., Jupyter, Python). | Pre-validated Standard Operating Procedures (SOPs) with electronic signatures. |

| Change Management | Community-driven, rapid iteration. | Formal change control procedures with impact assessments. |

| Cost & Speed | Lower upfront cost; faster initial setup. | High validation cost; slower deployment but reduced operational risk. |

Quantitative Landscape: Platform Adoption & Performance

Recent data (2023-2024) illustrates the measurable impacts of each architectural choice.

Table 2: Quantitative Comparison of HTE Platform Attributes

| Metric | Academic/Open-Source Platforms | Industrial/GxP Platforms | Measurement Source |

|---|---|---|---|

| Mean Time to Deploy New Assay | 2-4 weeks | 12-24 weeks | Industry survey data |

| Mean System Uptime | 92-95% | 99.5%+ (validated requirement) | Platform monitoring logs |

| Initial Hardware Cost (Core System) | $50k - $150k | $500k - $2M+ | Vendor quotations |

| Data Integrity Error Rate | ~0.5-1% (estimated) | <0.1% (validated target) | Audit findings, QC checks |

| Annual Maintenance Cost | 5-15% of initial cost | 15-25% of initial cost (incl. validation) | Financial reports |

Experimental Protocols for Cross-Paradigm Validation

To evaluate platforms bridging both paradigms, the following core validation protocol is essential.

Protocol 1: Cross-Paradigm HTE System Qualification

Objective: To assess the performance of a flexible, open-source-derived platform against GxP-aligned reproducibility and data integrity standards.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- System Specification: Define User Requirements Specification (URS) for both the experimental assay (e.g., cell viability dose-response) and data integrity needs.

- Open-Source Configuration: Deploy a core liquid handler using an open-source API (e.g., Opentrons API) or framework (e.g., FAIR Automation). All control scripts shall be version-controlled in Git.

- GxP-Layer Integration: Implement a middleware data capture system that logs all instrument actions, environmental conditions (via IoT sensors), and raw data files to a centralized database with immutable audit trails.

- Performance Qualification (PQ):

  - Execute a standardized 96-well plate cell viability assay (n=6 plates per run).

  - Precision: Calculate intra- and inter-plate CV% for control wells.

  - Accuracy: Compare mean IC50 values to a pre-qualified reference method using Bland-Altman analysis.

  - Data Integrity Check: Manually introduce an anomaly (e.g., a skipped well). Verify the audit trail and system logs flag the discrepancy.

- Data Analysis: All analysis must be performed via versioned scripts (e.g., Python/R) that take raw data as input and produce outputs without manual intervention.
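The precision and accuracy calculations in the PQ step can be expressed as a short versioned script. The sketch below is illustrative only: the function names and all numbers are hypothetical, and it assumes IC50 values are compared on a log10 scale, which is a common choice but is not specified in the protocol.

```python
import numpy as np

def cv_percent(values):
    """Coefficient of variation in percent (sample SD / mean)."""
    values = np.asarray(values, dtype=float)
    return 100.0 * values.std(ddof=1) / values.mean()

def plate_precision(control_plates):
    """control_plates: one row per plate, one column per control well.
    Returns per-plate (intra-plate) CV% and the CV% of plate means (inter-plate)."""
    plates = np.asarray(control_plates, dtype=float)
    intra = [cv_percent(row) for row in plates]
    inter = cv_percent(plates.mean(axis=1))
    return intra, inter

def bland_altman(test_values, reference_values):
    """Return the bias and 95% limits of agreement between two methods."""
    diff = np.asarray(test_values, dtype=float) - np.asarray(reference_values, dtype=float)
    bias = diff.mean()
    half_width = 1.96 * diff.std(ddof=1)
    return bias, bias - half_width, bias + half_width

# Synthetic control-well signals for three plates (hypothetical values).
controls = [[98, 100, 102], [95, 100, 105], [99, 100, 101]]
intra_cv, inter_cv = plate_precision(controls)

# Synthetic IC50 values (nM) from the test platform and the reference method.
log_ic50_test = np.log10([12.0, 15.0, 9.5, 11.0])
log_ic50_ref = np.log10([11.0, 14.0, 10.0, 12.0])
bias, lower, upper = bland_altman(log_ic50_test, log_ic50_ref)
```

Acceptance limits for CV% and for the limits of agreement would be set in the URS before the run, so that the script only reports values and does not decide pass or fail by itself.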

Architectural Visualizations

Diagram 1: HTE Platform Development Pathways

Diagram 2: Hybrid Data Integrity Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in Cross-Paradigm HTE | Example/Specification |

|---|---|---|

| Open-Source Liquid Handler | Provides the flexible, programmable core for assay automation. | Opentrons OT-2, custom-built systems using pyhamilton or dispense libraries. |

| GxP-Compliant LIMS | Ensures sample chain of custody, data integrity, and SOP management. | Benchling, STARLIMS, or a validated ELN instance. |

| Version Control System | Tracks changes to every protocol, script, and analysis, crucial for both collaboration and traceability. | Git (GitHub, GitLab, Bitbucket). |

| IoT Environmental Sensors | Monitors and logs critical lab conditions (temp, humidity) to the audit trail. | Validated, calibrated sensors with digital output. |

| Cell Viability Assay Kit | Standardized biochemical endpoint for performance qualification. | CellTiter-Glo 3D (for 3D models) or equivalent MTT/Resazurin kits. |

| Reference Control Compound | Provides a benchmark for inter-platform accuracy and reproducibility. | Staurosporine (non-specific kinase inhibitor) with well-characterized IC50. |

| Data Analysis Environment | Containerized, script-driven analysis to ensure reproducibility. | Docker/Singularity container with Python (SciPy, Pandas) or R environment. |

The future of high-throughput experimentation lies in architectures that embrace the innovative ethos of open-source development while embedding the rigorous data governance of GxP compliance from the outset. This is achieved not by choosing one paradigm over the other, but by implementing a layered blueprint: a flexible, open-source hardware/scripting core, surrounded by a standardized, validated data integrity layer. This convergent approach, guided by the protocols and tools detailed herein, accelerates translational research while building the essential bridge from academic discovery to industrial drug development.
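One way to picture the data integrity layer wrapped around a flexible scripting core is a hash-chained, append-only log, in which each record stores the hash of its predecessor so that later edits become detectable. The sketch below is a toy illustration under that assumption: the `AuditTrail` class and its fields are hypothetical, it is tamper-evident rather than truly immutable, and it is not a validated GxP system.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditTrail:
    """Append-only log where every record is chained to the previous one by hash."""

    GENESIS = "0" * 64  # placeholder hash preceding the first record

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record):
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor, action, details):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        record = {
            "index": len(self._entries),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "details": details,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record

    def verify(self):
        """Return True only if no record was altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for record in self._entries:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True

# Hypothetical instrument events logged by the middleware layer.
trail = AuditTrail()
trail.append("liquid_handler_01", "aspirate", {"well": "A1", "volume_ul": 50})
trail.append("liquid_handler_01", "dispense", {"well": "B1", "volume_ul": 50})
intact = trail.verify()
```

In a real deployment the chain would live in a database with restricted write access; the hashing only makes tampering visible, it does not prevent it.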

This technical guide examines the infrastructure paradigms for high-throughput experimentation (HTE) within academic research and industrial drug development. The core thesis posits that academic platforms predominantly leverage modular, flexible benchtop setups to enable broad, exploratory science, while industrial platforms prioritize integrated, robust robotic workcells to achieve reproducible, scaled workflows for pipeline progression. This divergence stems from differing primary objectives: knowledge generation versus process optimization and asset delivery.

Core Architectural Comparison

Modular Benchtop Setups

- Hardware Philosophy: Assembled from discrete, often vendor-agnostic components (e.g., pipettors, plate handlers, readers) connected via open standards (e.g., ANSI/SLAS microplate footprints, USB/GPIB communication).

- Software Philosophy: "Glue" code (Python, MATLAB) orchestrates components; data management often involves custom scripts and flat files. This approach relies heavily on researcher intervention and scripting expertise.

- Key Advantage: Flexibility; rapid reconfiguration for novel assay types.

- Primary Limitation: Throughput and hands-on time scale poorly; integration reliability varies between setups.

Integrated Robotic Workcells

- Hardware Philosophy: Pre-engineered systems with a centralized robotic manipulator (e.g., Cartesian, robotic arm) operating within a secured enclosure. Components are validated as a unified system.

- Software Philosophy: Proprietary, graphical scheduling software (e.g., HighRes Biosolutions Cellario, Thermo Fisher Momentum) for end-to-end workflow design, execution, and tracking. Direct integration with LIMS.

- Key Advantage: Robustness, reproducibility, and unattended operation for standardized, high-volume assays.

- Primary Limitation: High capital cost; lower adaptability to radically new protocols.

Table 1: Quantitative Comparison of Representative Platforms

| Feature | Academic-Modular (Example: Opentrons OT-2 + Components) | Industrial-Integrated (Example: Thermo Fisher STREAMLINE AXP) |

|---|---|---|

| Max Throughput (Plates/Day) | 10-40 (Highly variable) | 100-500+ (Consistent) |

| Typical Upfront Cost (USD) | $10k - $100k | $250k - $1M+ |

| Assay Development/Change Time | Days to Weeks | Weeks to Months |

| Mean Time Between Failures (MTBF) | 50-200 hours | 1000+ hours |

| Operator Hands-On Time / Plate | High (5-15 minutes) | Low (<1 minute, largely loading/unloading) |

| Data System Integration | Manual file export/scripting | Automated, direct-to-LIMS/ELN |

Experimental Protocol Case Study: High-Throughput Compound Screening

This protocol highlights the procedural differences in executing a 384-well cell-based viability assay.

Protocol for Modular Benchtop Setup

Objective: Screen 1,000 compounds in triplicate against a cancer cell line.

Workflow:

- Plate Replication: Using a standalone plate replicator, transfer compound library from master stock plates to assay plates.

- Cell Seeding: Manual transport of assay plates to a semi-automated electronic multichannel pipettor for cell suspension dispensing.

- Incubation: Plates moved manually to a standalone CO2 incubator.

- Viability Readout: Manual transport to a microplate reader for luminescence measurement.

- Data Transfer: Manual export of .csv files from reader software to a network drive for analysis via custom Python/R scripts.
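The scale of this benchtop campaign can be estimated with simple arithmetic before any plates are prepared. The sketch below assumes 64 wells per 384-well plate are reserved for controls (a hypothetical layout, e.g., four full columns) and uses the 5-15 minute hands-on range per plate from Table 1; the function names are illustrative.

```python
import math

def plates_needed(n_compounds, replicates, wells_per_plate=384, control_wells=64):
    """Number of assay plates required when each replicate occupies one well."""
    sample_wells = wells_per_plate - control_wells
    if sample_wells <= 0:
        raise ValueError("control wells leave no room for samples")
    return math.ceil(n_compounds * replicates / sample_wells)

def hands_on_minutes(n_plates, per_plate_range=(5, 15)):
    """Lower and upper bound on operator time, in minutes."""
    low, high = per_plate_range
    return n_plates * low, n_plates * high

benchtop_plates = plates_needed(1000, 3)        # 3,000 sample wells over 320 per plate
benchtop_time = hands_on_minutes(benchtop_plates)
workcell_plates = plates_needed(100_000, 1)     # same layout applied to a 100,000-compound screen
```

Under these assumptions the triplicate screen fits on 10 plates, whereas a 100,000-compound singlicate screen with the same layout needs 313 plates, which illustrates why manual plate transport stops being practical at campaign scale.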

Diagram 1: Modular Benchtop Screening Workflow

Protocol for Integrated Robotic Workcell

Objective: Screen 100,000 compounds in singlicate against a cancer cell line.

Workflow:

- Scheduler Setup: An integrated method is created in the workcell's scheduling software, defining plate movements, timings, and device interactions.

- Batch Loading: An operator loads stacks of empty assay plates, cell suspension reservoirs, tip boxes, and the compound library matrix into designated input bays.

- Unattended Execution: The robotic arm executes the full workflow within the enclosed cell: compound transfer via integrated dispenser, cell seeding, plate movement to an integrated hotel/incubator, timed incubation, transfer to an integrated multimode reader, and readout.

- Automated Data Pipeline: Reader data is automatically parsed, normalized, and pushed to the corporate activity database (e.g., Genedata Screener) via a direct API.

Diagram 2: Integrated Workcell Screening Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Cell-Based High-Throughput Screening

| Item | Function | Typical Example (Vendor) |

|---|---|---|

| ATP-Luminescence Viability Assay | Quantifies metabolically active cells via luciferase reaction with cellular ATP. Core readout for proliferation/cytotoxicity. | CellTiter-Glo 3D (Promega) |

| 384-Well, Tissue-Culture Treated Microplates | Provides sterile, optically clear vessel with surface treatment for cell adherence. Standardized footprint for automation. | Corning 384-well Black, Clear Bottom |

| DMSO-Tolerant Compound Library | Small molecules pre-dissolved in DMSO, formatted in 384-well source plates for liquid handling. | 100 nL pre-spotted library (e.g., Echo-qualified) |

| Automation-Compatible Tip Boxes | Sterile, low-retention pipette tips in racks designed for automated pick-up. Critical for volume precision. | 10 µL Tips in SLAS-ANSI footprint (Beckman, Labcyte) |

| Cell Dissociation Reagent | Enzymatic (non-trypsin) solution for gentle detachment of adherent cells to create uniform single-cell suspensions for dispensing. | Accutase (Sigma) |

| Automated Liquid Handling Buffer | Low-foam, high-surfactant PBS used in bulk dispensers to prevent clogging and ensure droplet consistency. | BioTek Certified Wash Buffer |

Within the ongoing discourse on academic versus industrial high-throughput experimentation (HTE) platforms, the choice of iterative workflow design is a fundamental differentiator. Two predominant paradigms exist: hypothesis-driven screening, rooted in mechanistic biological inquiry, and campaign-oriented screening, optimized for industrial-scale lead discovery and optimization. This technical guide delineates the core principles, experimental architectures, and applications of each approach, providing a framework for researchers and drug development professionals to align methodology with strategic objectives.

Foundational Principles and Comparative Analysis

Hypothesis-Driven Screening

This approach is characterized by the formulation of a specific, mechanistic biological hypothesis prior to experimentation. The workflow is an iterative cycle of hypothesis generation, targeted experimental design, data analysis, and hypothesis refinement. It is deeply integrated with foundational biology and is prevalent in academic and early-discovery industrial research where understanding mode-of-action is critical.

Campaign-Oriented Screening

This approach prioritizes the systematic, high-volume interrogation of chemical or biological space against one or more assay endpoints. The primary goal is to generate actionable data (e.g., structure-activity relationships, SAR) for a defined project campaign, such as lead series identification or ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) profiling. Throughput, reproducibility, and data uniformity are key.

The following table summarizes the core quantitative and qualitative differences:

| Parameter | Hypothesis-Driven Screening | Campaign-Oriented Screening |

|---|---|---|

| Primary Objective | Test/refine a mechanistic biological model | Generate SAR or optimize compounds for a campaign goal |

| Experimental Design | Customized, variable assays per iteration | Highly standardized, uniform assay protocols |

| Throughput Scale | Low to Medium (10s - 1000s of data points) | Very High (10,000s - 1,000,000s of data points) |

| Key Success Metric | Biological insight, model confirmation | Hit rate, potency, ligand efficiency, project milestone attainment |

| Data Analysis Focus | Statistical significance, pathway mapping | Robust statistical thresholds (e.g., Z’>0.5), trend analysis across libraries |

| Typical Platform Context | Academic Core Facilities, Translational Research Labs | Industrial HTS and Lead Optimization Centers |

Experimental Protocols and Methodologies

Protocol 1: Hypothesis-Driven CRISPR Loss-of-Function Screen for Pathway Validation

Objective: To validate the hypothesis that "Inhibiting the KEAP1-NRF2 pathway sensitizes NSCLC cells to ferroptosis inducers."

- Design: A focused CRISPR sgRNA library targeting ~100 genes in the oxidative stress response and ferroptosis pathways is designed.

- Cell Preparation: NSCLC cell lines (e.g., A549) are transduced with the lentiviral CRISPR library at a low MOI so that most transduced cells carry a single sgRNA integration.

- Assay Execution: Cells are split into two arms: treated with a sub-lethal dose of a ferroptosis inducer (e.g., 500 nM RSL3) or DMSO vehicle. Cells are cultured for 5-7 population doublings.

- Sample Processing: Genomic DNA is harvested from both arms. The integrated sgRNA sequences are amplified via PCR and prepared for next-generation sequencing (NGS).

- Data Analysis: NGS reads are aligned to the library. sgRNA depletion or enrichment in the treated vs. control arm is calculated using algorithms like MAGeCK or RSA. Hits are genes whose perturbation significantly alters cell viability specifically in the treated arm.
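The core quantity behind the Data Analysis step, sgRNA depletion or enrichment between arms, can be illustrated with a simplified log2 fold-change calculation. This sketch is not MAGeCK or RSA and performs no statistical testing: it only normalizes read counts to counts per million, adds a pseudocount (an assumption to avoid division by zero), and takes the median across guides per gene. Gene names and counts are synthetic.

```python
import numpy as np

def log2_fold_change(treated_counts, control_counts, pseudocount=1.0):
    """Per-sgRNA log2 fold change after counts-per-million normalization."""
    treated = np.asarray(treated_counts, dtype=float)
    control = np.asarray(control_counts, dtype=float)
    treated_cpm = treated / treated.sum() * 1e6
    control_cpm = control / control.sum() * 1e6
    return np.log2((treated_cpm + pseudocount) / (control_cpm + pseudocount))

def gene_scores(lfc, genes):
    """Median log2 fold change across all sgRNAs targeting each gene."""
    scores = {}
    for gene in sorted(set(genes)):
        values = [v for v, g in zip(lfc, genes) if g == gene]
        scores[gene] = float(np.median(values))
    return scores

# Synthetic read counts: two guides per gene, hypothetical gene labels.
genes = ["GENE_A", "GENE_A", "GENE_B", "GENE_B"]
control = [100, 100, 100, 100]   # DMSO arm
treated = [100, 100, 25, 25]     # ferroptosis-inducer arm
lfc = log2_fold_change(treated, control)
scores = gene_scores(lfc, genes)
```

A negative score (here GENE_B) indicates guides depleted under treatment, that is, candidate sensitizers; dedicated tools add variance modeling and multiple-testing correction on top of this basic quantity.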

Protocol 2: Campaign-Oriented HTS for a Kinase Inhibitor Program

Objective: To identify novel, potent inhibitors of the EGFR L858R/T790M mutant from a 300,000-compound diversity library.

- Assay Development & Miniaturization: A robust homogeneous time-resolved fluorescence (HTRF) kinase activity assay is developed and miniaturized to 1536-well plate format. Key parameters are optimized until the Z’ factor exceeds 0.7 and the signal-to-background ratio exceeds 5.

- Automated Screening: Compound libraries are acoustically transferred (nL volumes) into assay plates. Assay reagents are dispensed via bulk dispensers. Plates are incubated and read on an HTRF-compatible plate reader.

- Primary Data Processing: Raw fluorescence values are normalized to high (100% inhibition) and low (0% inhibition) controls on a per-plate basis. Percent inhibition is calculated for all wells.

- Hit Identification: Compounds exhibiting >50% inhibition at 10 µM are flagged as primary hits. Hits are triaged based on chemical structure, potential pan-assay interference compounds (PAINS) filters, and cross-referencing with historical assay data.

- Confirmation & Progression: Primary hits are re-tested in dose-response (10-point, 1:3 serial dilution) in the primary assay and a counterscreen against wild-type EGFR. Confirmed hits with desired selectivity profile progress to the next campaign phase (e.g., hit-to-lead chemistry).
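The plate-level quality check and the normalization used in this protocol can be written compactly. The sketch below uses the standard Z’ definition, Z’ = 1 - 3(SD_high + SD_low) / |mean_high - mean_low|, and per-plate normalization to controls; the function names and signal values are hypothetical, and the 50% hit threshold is taken from the Hit Identification step.

```python
import numpy as np

def z_prime(high_ctrl, low_ctrl):
    """Z' factor from the 100%-inhibition (high) and 0%-inhibition (low) control wells."""
    high = np.asarray(high_ctrl, dtype=float)
    low = np.asarray(low_ctrl, dtype=float)
    return 1.0 - 3.0 * (high.std(ddof=1) + low.std(ddof=1)) / abs(high.mean() - low.mean())

def percent_inhibition(raw, high_ctrl, low_ctrl):
    """Scale raw signal so the low control maps to 0% and the high control to 100%."""
    mu_high = np.mean(high_ctrl)
    mu_low = np.mean(low_ctrl)
    return 100.0 * (mu_low - np.asarray(raw, dtype=float)) / (mu_low - mu_high)

# Synthetic plate: full inhibition gives background signal, no inhibition gives full signal.
high_controls = [100, 110, 90]       # 100% inhibition wells
low_controls = [1000, 1010, 990]     # 0% inhibition wells
compound_wells = [980, 550, 300, 120]

plate_z = z_prime(high_controls, low_controls)
inhibition = percent_inhibition(compound_wells, high_controls, low_controls)
primary_hits = inhibition > 50.0     # threshold from the Hit Identification step
```

Because the normalization is expressed relative to the two control means, the same function works whether the assay signal increases or decreases with inhibition.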

Visualizing Workflow Architectures

Diagram 1: Hypothesis-Driven Iterative Workflow

Diagram 2: Campaign-Oriented Screening Workflow

Diagram 3: KEAP1-NRF2 Pathway in Oxidative Stress

The Scientist's Toolkit: Essential Research Reagent Solutions

| Reagent / Material | Function in HTE | Typical Application |

|---|---|---|

| CRISPR-Cas9 Knockout Library (e.g., Brunello, GeCKO) | Enables genome-wide or targeted loss-of-function screening. | Hypothesis-driven screens to identify gene essentiality or drug-gene interactions. |

| Phospho-Specific Antibodies (HTRF/AlphaLISA Compatible) | Quantifies specific protein phosphorylation states in a homogeneous, miniaturized format. | Campaign-oriented profiling of kinase inhibitor potency and selectivity in cellular assays. |

| Recombinant Purified Target Protein | Provides the primary target for biochemical activity assays. | Essential for primary HTS campaigns and mechanistic enzymology studies. |

| DNA-Barcoded Compound Libraries | Allows for pooled screening of compounds via next-generation sequencing readout. | Enables ultra-high-throughput cellular screening at reduced cost in campaign modes. |

| Cell Painting Reagent Set (Dyes) | A multiplexed fluorescence assay capturing multiple morphological features. | Used in hypothesis-driven phenotyping or campaign-oriented profiling for mechanism-of-action studies. |

| 3D Spheroid/Organoid Culture Matrices | Provides a more physiologically relevant microenvironment for cell-based assays. | Increasingly used in both paradigms for translational relevance, especially in oncology. |

| Nucleic Acid Transfection Reagents (High-Throughput) | Enables efficient, parallel delivery of siRNAs, plasmids, or CRISPR ribonucleoproteins. | Critical for hypothesis-driven functional genomics screens in arrayed formats. |

High-Throughput Experimentation (HTE) has become a cornerstone of modern research in chemistry, biology, and drug discovery. The fundamental ethos governing data and knowledge dissemination, however, diverges sharply between academic and industrial contexts. This guide examines the technical and operational implications of Open Publication versus Proprietary IP Management within HTE platforms, focusing on workflows, data handling, and strategic outcomes. Academia often prioritizes rapid, open dissemination to advance collective knowledge and secure funding, while industry must protect investments and maintain competitive advantage through controlled IP.

Comparative Analysis: Core Principles and Quantitative Impact

Table 1: Quantitative Comparison of Open vs. Proprietary Data Cultures in HTE

| Metric | Open Publication (Academic Model) | Proprietary IP (Industrial Model) |

|---|---|---|

| Typical Data Release Timeline | 6-24 months post-experiment | Indefinitely restricted or never publicly released |

| Average Cost per HTE Campaign (USD) | $50,000 - $200,000 (Grant-funded) | $500,000 - $5,000,000+ (Internal R&D) |

| Citation Impact (Avg. Citations/Paper) | 15-30 (for foundational methodology papers) | Not applicable (internally tracked as "inventions") |

| Patent Output Ratio | ~0.5 patents per major project | 5-20+ patents per major project |

| Data Repository Usage | >80% use public repos (e.g., PubChem, Zenodo) | <10% use public repos; rely on internal databases |

| Collaboration Rate (External) | High (60-80% of projects involve multiple institutions) | Low to Moderate (20-40%, often via controlled partnerships) |

| Primary Validation Metric | Peer review & reproducibility | Lead optimization success & projected ROI |

Experimental Protocols in Contrasting Data Cultures

Protocol 3.1: Open HTE for Novel Catalyst Screening (Academic)

Objective: To identify efficient photocatalysts for C–H functionalization and publish full datasets.

- Library Design: A diverse array of 384 commercially available organometallic complexes and organic dyes is selected based on computational diversity analysis.

- Platform Setup: Reactions are assembled in an inert-atmosphere glovebox using a liquid-handling robot (e.g., Hamilton STAR) in 96-well glass microtiter plates.

- Reaction Execution: Each well contains substrate (0.05 mmol), catalyst (2 mol%), and base in degassed solvent. Plates are irradiated in a parallel photoreactor (420 nm LEDs) for 24 hours at 25°C.

- Analysis: Quantification is performed by UPLC-MS with an autosampler, using a single standardized method across all plates. Calibration curves are generated for each plate.

- Data Deposition: All raw UPLC-MS files, processed yield data, and robot scripts are uploaded to a public repository (e.g., Figshare) with a DOI immediately upon manuscript submission. The chemical structures are deposited in PubChem.
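The per-plate calibration in the Analysis step can be illustrated with a linear fit that converts peak areas into yields. This is a sketch under stated assumptions: a linear detector response, hypothetical standard concentrations and peak areas, and a hypothetical theoretical product concentration (the reaction volume is not given in the protocol).

```python
import numpy as np

def fit_calibration(concentrations_mM, peak_areas):
    """Least-squares line through the calibration standards: area = slope * conc + intercept."""
    slope, intercept = np.polyfit(concentrations_mM, peak_areas, 1)
    return slope, intercept

def yield_percent(peak_area, slope, intercept, theoretical_mM):
    """Convert a product peak area to concentration, then to percent of theoretical."""
    concentration = (peak_area - intercept) / slope
    return 100.0 * concentration / theoretical_mM

# Hypothetical calibration standards run on the same plate.
standards_mM = [0.0, 5.0, 10.0, 20.0]
standard_areas = [0.0, 50.0, 100.0, 200.0]
slope, intercept = fit_calibration(standards_mM, standard_areas)

# Hypothetical well: measured area 150, theoretical product concentration 25 mM.
well_yield = yield_percent(150.0, slope, intercept, theoretical_mM=25.0)
```

Depositing a script of this kind alongside the raw UPLC-MS files lets readers regenerate the processed yield table directly from the public data.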

Protocol 3.2: Proprietary HTE for Hit-to-Lead Optimization (Industrial)

Objective: To optimize a lead compound series for potency and ADMET properties while generating protected IP.

- Library Design: A proprietary virtual library of ~10,000 analogs is generated based on a confidential lead. A focused subset of 1,536 compounds is selected for parallel synthesis.

- Platform Setup: Synthesis is performed on an automated, closed-system synthesis platform (e.g., Chemspeed) within a secure, access-controlled lab. All electronic notebooks are digitally signed and stored on firewalled servers.

- Biological Assay: All synthesized compounds are tested in a high-throughput, target-specific biochemical assay (e.g., TR-FRET) and a counter-screen for selectivity in 384-well format. Cytotoxicity is assessed in parallel.

- Data Management: All data flows into a proprietary informatics platform (e.g., Dotmatics). Structure-activity relationships (SAR) are analyzed internally using machine learning models. Access is role-based and audited.

- IP Generation: Chemists and patent liaisons review SAR weekly. Novel, potent compounds are flagged for immediate patent application filing before any external disclosure.

Visualizing Workflows and Decision Pathways

Title: Open Publication HTE Workflow

Title: Proprietary IP Management HTE Workflow

Title: Data Culture Decision Pathway for HTE

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for HTE Platforms

| Item | Function | Typical Example in Open Model | Typical Example in Proprietary Model |

|---|---|---|---|

| Chemical Building Blocks | Core units for compound library synthesis. | Purchased from public catalogs (e.g., Enamine, Sigma-Aldrich). Listed in SI. | Sourced from custom vendors under CDA; often proprietary intermediates. |

| Assay Kits | For high-throughput biological screening. | Commercial kits (e.g., Promega Glo assay) with published protocols. | Licensed kits or fully developed, internally validated proprietary assays. |

| Catalyst Libraries | Diverse catalysts for reaction discovery/optimization. | Commercially available sets (e.g., Strem Catalyst Kit). | Custom-synthesized, novel ligand/metal complexes. |

| Informatics Software | For data analysis, SAR, and visualization. | Open-source (e.g., RDKit, KNIME, Jupyter). | Commercial/proprietary (e.g., Dotmatics, Schrödinger Suite, internal ML tools). |

| Data Repository | For storing, sharing, and curating experimental data. | Public (e.g., Zenodo, PubChem, GitHub). | Secure, internal database with audit trails (e.g., ELN/LIMS integration). |

| Automation Hardware | Liquid handlers, robotic arms, reactors. | Shared core facility equipment (e.g., Hamilton, Biotage). | Dedicated, owned systems often in sealed environments (e.g., Chemspeed, HighRes Biosolutions). |

Synthesis and Strategic Considerations

The choice between open and proprietary data cultures is not merely philosophical but defines the technical architecture of HTE platforms. Open models accelerate methodological innovation and validation through peer scrutiny, while proprietary models secure the commercial investment required for translational development. Emerging hybrid models, such as consortia (e.g., Structural Genomics Consortium) or pre-competitive public-private partnerships, attempt to leverage the strengths of both by delineating open foundational research from proprietary product development. The optimal data strategy must be consciously selected at the project's inception, as it fundamentally directs library design, platform security, informatics infrastructure, and ultimately, the societal and commercial impact of the research.

High-Throughput Experimentation (HTE) has become a cornerstone of modern molecular discovery and optimization. This guide provides a technical comparison of scale and throughput between academic and industrial HTE platforms, framed within a broader thesis that examines the distinct yet complementary roles these sectors play in advancing drug and materials discovery. The focus is on quantifying library sizes, screening capacities, and the underlying methodologies that enable such scale.

Library Scale: Academic vs. Industrial Platforms

A primary differentiator is the sheer size of compound and reaction libraries accessible for screening. Industrial platforms, backed by substantial capital investment, operate at a vastly larger scale.

Table 1: Typical Library and Screening Scale Comparison

| Platform Type | Typical Compound Library Size | Reaction Library/Matrix Size | Primary Screening Throughput (wells/day) | Hit Validation Capacity (compounds/week) |

|---|---|---|---|---|

| Academic Core Facility | 10,000 - 100,000 compounds | 96 - 384 reaction conditions | 10,000 - 50,000 | 100 - 500 |

| Industrial Discovery (Pharma/Biotech) | 1 - 5+ million compounds | 1,536 - 6,144 reaction conditions | 100,000 - 500,000+ | 5,000 - 20,000+ |

| Industrial Specialized (DEL, ASIN) | 10^8 - 10^12 DNA-encoded compounds | N/A (Library is the screen) | Billions (via NGS) | 1,000 - 5,000 (post-decoding) |

Key Definitions:

- DNA-Encoded Library (DEL): A technology where each small-molecule compound is tagged with a unique DNA barcode, allowing for pooled screening of billions of compounds in a single tube.

- ASIN: Acronym for "Automated Synthesis and Intrinsic Screening Network," representing platforms that integrate automated synthesis with immediate biological or physicochemical analysis.

Core Experimental Protocols for HTE Screening

The high throughput in both sectors is enabled by standardized, miniaturized protocols.

Protocol for Industrial Ultra-High-Throughput Screening (uHTS)

- Objective: Identify primary hits from a multi-million compound library against a purified protein target.

- Method:

- Assay Miniaturization: Reformulate biochemical assay for 1,536-well plate format, with reaction volumes of 1-10 µL.

- Liquid Handling: Use acoustic droplet ejection (ADE) or pintool transfer to dispense nanoliter volumes of compounds from source libraries into assay plates.

- Reagent Dispensing: Add assay buffer, enzyme, and substrate via high-speed, non-contact dispensers.

- Incubation & Detection: Incubate plates under controlled conditions. Read output (e.g., fluorescence, luminescence) using a plate imager or high-speed multimode plate reader.

- Data Processing: Robotic plate stackers feed readers. Data is automatically uploaded to an informatics pipeline for normalization, plate quality control (Z'-factor calculation), hit identification (typically >3σ from the plate median), and compound management integration.
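
The two statistics named in the Data Processing step can be sketched in a few lines. The Z'-factor below uses the standard definition, Z' = 1 - 3(σ_pos + σ_neg)/|μ_pos - μ_neg|, computed from control wells. For hit calling, the sketch assumes a robust σ estimated from the median absolute deviation (MAD × 1.4826); the protocol only specifies ">3σ from median", so that estimator, the function names, and the example signals are illustrative assumptions.

```python
import statistics


def z_prime(pos_controls, neg_controls):
    """Z'-factor from positive and negative control wells (sample SDs)."""
    sd_sum = statistics.stdev(pos_controls) + statistics.stdev(neg_controls)
    window = abs(statistics.mean(pos_controls) - statistics.mean(neg_controls))
    return 1.0 - 3.0 * sd_sum / window


def call_hits(signals, n_sigma=3.0):
    """Return indices of wells more than n_sigma robust SDs from the median."""
    med = statistics.median(signals)
    mad = statistics.median([abs(s - med) for s in signals])
    robust_sd = 1.4826 * mad  # MAD scaled to match SD for normal data
    return [i for i, s in enumerate(signals) if abs(s - med) > n_sigma * robust_sd]


# Hypothetical control and sample readings for one plate.
pos = [100, 98, 102, 101, 99]
neg = [10, 12, 9, 11, 8]
samples = [100, 101, 99, 102, 98, 100, 45, 101]

zp = z_prime(pos, neg)
hits = call_hits(samples)
print(f"Z' = {zp:.3f}, hit wells = {hits}")
```

A plate with Z' above roughly 0.5 is conventionally treated as suitable for screening. Using the median and MAD keeps a handful of strong hits from inflating the noise estimate.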

Protocol for Academic HTE Reaction Screening

- Objective: Rapidly optimize reaction conditions (e.g., ligand, base, solvent) for a given transformation.

- Method:

- Library Design: Create a 96- or 384-condition matrix varying key parameters (catalyst, ligand, base, solvent, temperature).

- Plate Preparation: Use a liquid handler to aliquot stock solutions of reagents into designated wells of a microtiter plate.

- Substrate Addition: Dispense a common stock solution of starting materials to all wells.

- Sealing & Reaction: Seal plate with a gas-permeable membrane. Agitate and heat/cool as needed.

- Quenching & Analysis: Add a standard quenching solution via dispenser. Analyze yields via high-throughput UPLC-MS or GC-MS with automated sample injection from the plate.
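
The Library Design step amounts to enumerating a condition matrix and assigning each condition to a well. A minimal sketch follows, assuming a full factorial over three factors whose level counts multiply to 96; the ligand labels `L1` to `L8`, the specific bases and solvents, and the function name `plate_layout` are placeholders rather than recommendations.

```python
from itertools import product
import string


def plate_layout(factors, rows=8, cols=12):
    """Map every combination of factor levels to a well ID (A1 ... H12)."""
    names = list(factors)
    combos = list(product(*(factors[n] for n in names)))
    if len(combos) > rows * cols:
        raise ValueError("more conditions than wells on the plate")
    layout = {}
    for idx, combo in enumerate(combos):
        # Fill the plate row by row: A1..A12, then B1..B12, and so on.
        well = f"{string.ascii_uppercase[idx // cols]}{idx % cols + 1}"
        layout[well] = dict(zip(names, combo))
    return layout


# Hypothetical screen: 8 ligands x 3 bases x 4 solvents = 96 conditions.
factors = {
    "ligand": [f"L{i}" for i in range(1, 9)],
    "base": ["K3PO4", "Cs2CO3", "DIPEA"],
    "solvent": ["dioxane", "DMF", "toluene", "MeCN"],
}
layout = plate_layout(factors)
print(len(layout), layout["A1"], layout["H12"])
```

The resulting dictionary can be exported as a worklist for the liquid handler and later joined to the analytical results by well ID.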

Visualizing HTE Workflows and Platforms

HTE Screening Core Process Flow

Academic vs. Industrial HTE Focus & Scale

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for HTE Operations

| Item | Function in HTE | Example Vendor/Product (Illustrative) |

|---|---|---|

| Source Compound Plates | Pre-dispensed, formatted libraries for screening. Essential for reproducibility and speed. | Labcyte Echo Qualified Plates, Greiner Bio-One polypropylene plates. |

| Liquid Handling Reagents | Buffers, DMSO, assay substrates, and quenching solutions optimized for nanoliter dispensing. | Sigma-Aldrich HTS-grade DMSO, Promega Ultra-Glo Luciferase. |

| Detection Reagents | Fluorescent/luminescent probes, antibodies, or dyes compatible with miniaturized formats. | Promega CellTiter-Glo, Cisbio HTRF reagents. |

| Assay-Ready Kits | Pre-optimized, validated biochemical or cellular assay systems in plate format. | Reaction Biology Corporation Kinase HotSpot, Eurofins Panlabs Profiling. |

| High-Throughput Catalysis Kits | Pre-weighed, arrayed sets of ligands, bases, and metal catalysts for reaction screening. | Sigma-Aldrich HTE Catalyst Library, Strem Chemicals Screening Sets. |

| Automation-Compatible Consumables | Microtiter plates, seals, and tip boxes designed for robotic arms and dispensers. | Agilent SureTect Seals, Eppendorf epT.I.P.S. Motion. |

Industrial platforms lead in raw throughput and library size, driven by the need for probability-based discovery and comprehensive pipeline support. Academic platforms, while smaller in scale, excel in developing novel HTE methodologies, exploring unconventional chemical space, and acting as testbeds for new assay technologies. The synergy arises when industrial-scale capacity is applied to novel paradigms pioneered in academia, such as new DNA-encoded chemistry or automated synthesis cycles, accelerating the overall pace of discovery.

Strategic Deployment: How to Apply Academic and Industrial HTE in the R&D Lifecycle

Within the broader thesis contrasting academic and industrial High-Throughput Experimentation (HTE) platforms, a critical distinction emerges in their primary use cases. Industrial HTE is predominantly optimized for pipeline acceleration and process optimization within defined chemical and biological spaces. In contrast, academic HTE platforms are uniquely positioned to tackle high-risk, fundamental exploratory research. This whitepaper details two ideal academic use cases: Exploratory Reaction Discovery and New Modality Tool Development, arguing that these areas leverage the academic environment's freedom to pursue long-term, foundational questions that underpin future industrial innovation.

Exploratory Reaction Discovery

Academic HTE excels in probing uncharted chemical space to discover novel reactions and catalytic processes, a pursuit often deemed too risky or too far from application to deliver immediate industrial ROI.

Core Methodology & Protocol

The workflow integrates automated synthesis, rapid analysis, and data informatics in an iterative cycle.

Protocol for High-Throughput Exploratory Catalysis Screening:

- Library Design: Prepare a diverse array of substrate pairs (e.g., 96–384 variants) featuring unexplored functional group combinations using a liquid handler.

- Reaction Assembly: In a glovebox under inert atmosphere, distribute aliquots of each substrate into wells of a microtiter plate.

- Catalyst/Additive Dispensing: Using a non-contact acoustic dispenser, add nanomole quantities of potential catalyst libraries (e.g., 50+ phosphine ligands, 20+ metal precursors, bases, additives) to create a full matrix.

- Sealing & Reaction: Seal plates with PTFE sheets and heat/stir in a dedicated HTE incubator/agitator block.

- Quenching & Analysis: After reaction, automatically quench and dilute aliquots. Analyze via UPLC-MS/MS with a dual autosampler for high throughput.

- Data Processing: Convert chromatograms to quantified yields and conversion using cheminformatics software (e.g., Chemplexity, Methanolysis). Apply statistical analysis (PCA) to identify hit conditions.

Quantitative Data from Recent Studies

Table 1: Representative Output from Academic HTE Reaction Discovery Campaigns

| Study Focus | Library Size Screened | Hit Rate | Novel Reactions Identified | Key Metric |

|---|---|---|---|---|

| C-N Coupling with Redox-Active Esters | 1,536 conditions | ~2.1% | Dual catalytic Ni/Photoredox amination | 89% yield (best hit) |

| Selective Heteroarene Functionalization | 2,880 experiments | 1.5% | Electrochemical C-H sulfonylation | 7-fold selectivity improvement |

| Small-Ring Strain Release Chemistry | 576 substrates | 4.8% | New [3+2] cycloaddition pathway | 15 novel compound classes |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for HTE Reaction Discovery

| Item | Function & Key Feature |

|---|---|

| Pre-weighed Catalyst/ Ligand Plates | Commercial 96-well plates with pre-dispensed, nanomole-scale catalysts (e.g., RuPhos Pd G3, Ni(COD)₂). Eliminates weighing, enables rapid matrix assembly. |

| Diverse Building Block Sets | Curated sets of electrophiles, nucleophiles, and functionalized arenes with broad reactivity scopes, designed for direct use in HTE platforms. |

| Deuterated Internal Standard Mix | A multi-component MS standard for rapid UPLC-MS calibration and quantitative yield determination without pure analytical standards. |

| Gas-Manifold Equipped Microtiter Plates | Plates with integrated valve systems for performing parallel reactions under controlled atmospheres (CO₂, H₂, O₂). |

| Cheminformatics & Visualization Software | Platforms like TIBCO Spotfire for visualizing multi-dimensional screening results and identifying hit clusters. |

Academic HTE Reaction Discovery Workflow

New Modality Tool Development

Academic HTE is pivotal for developing the foundational chemical and screening tools required for emerging therapeutic modalities (e.g., PROTACs, molecular glues, covalent inhibitors, RNA-targeted small molecules).

Core Methodology & Protocol

This involves creating and profiling large libraries of bespoke chemical probes to map structure-activity relationships (SAR) against novel biological targets or mechanisms.

Protocol for HTE Synthesis & Profiling of Covalent Fragment Libraries:

- Design & Docking: Design a library of 500-1000 electrophilic fragments and dock them against a target of interest that bears a reactive cysteine.

- Parallel Synthesis: Execute parallel synthesis in 96-well format using solid-phase or solution-phase methods with automated liquid handling for coupling, washing, and cleavage steps.

- QC & Purification: Perform rapid analytical LC-MS on each crude product. Use preparative HPLC-MS with fraction collection for all compounds meeting purity thresholds (>85%).

- Concentration Normalization: Use an acoustic dispenser to transfer equal nanomole quantities of each compound into assay-ready daughter plates, followed by solvent evaporation.

- Functional & Covalent Screening: Re-dissolve compounds in buffer. Run two parallel assays: (a) a high-throughput biochemical activity assay, and (b) a mass spectrometry-based intact protein assay to confirm covalent modification.

- Data Triangulation: Cross-reference functional activity with MS-based covalent hit identification to eliminate false positives and generate robust SAR.
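
The Data Triangulation step is essentially a set intersection between the two assay readouts. The sketch below assumes each assay yields a single percentage per compound (percent inhibition and percent protein modified). The 50% and 20% cutoffs, the compound IDs, and the function name `triangulate` are hypothetical.

```python
def triangulate(inhibition_pct, adduct_pct, activity_cutoff=50.0, adduct_cutoff=20.0):
    """Cross-reference functional activity with MS-confirmed covalent labelling."""
    functional = {c for c, v in inhibition_pct.items() if v >= activity_cutoff}
    covalent = {c for c, v in adduct_pct.items() if v >= adduct_cutoff}
    return {
        # Active and covalently bound: the robust hits used for SAR.
        "confirmed": sorted(functional & covalent),
        # Active without adduct formation: possible assay interference.
        "functional_only": sorted(functional - covalent),
        # Labels the protein but does not affect activity.
        "covalent_only": sorted(covalent - functional),
    }


# Hypothetical readouts for four fragments.
inhibition_pct = {"F001": 82.0, "F002": 12.0, "F003": 67.0, "F004": 55.0}
adduct_pct = {"F001": 91.0, "F002": 35.0, "F003": 3.0, "F004": 48.0}
result = triangulate(inhibition_pct, adduct_pct)
print(result)
```

Keeping the two discordant categories separate, rather than discarding them, makes it easier to diagnose whether false positives come from the biochemical assay or from non-productive labelling.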

Quantitative Data from Recent Studies

Table 3: HTE Contributions to New Modality Toolkits

| Modality Class | Library Size Profiled | Primary Screening Assay | Key Output | Success Metric |

|---|---|---|---|---|

| PROTAC Prototypes | 240 heterobifunctional molecules | NanoBRET target engagement | 6 potent degraders (DC₅₀ < 100 nM) | >50-fold selectivity over related kinases |

| Covalent Fragments | 1,120 acrylamides | LC-MS/MS (intact protein) | 12 distinct covalent chemotypes | Modification efficiency (kᵢₙₐcₜ/Kᵢ) up to 250 M⁻¹s⁻¹ |

| RNA-Binder Libraries | 384 aminoglycoside analogs | Differential Scanning Fluorimetry (DSF) | 3 compounds stabilizing target RNA fold | ΔTₘ > 3.0°C, IC₅₀ ~ 5 µM in cell assay |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for New Modality Development

| Item | Function & Key Feature |

|---|---|

| Bifunctional Linker Building Blocks | E3 ligase ligands (e.g., Thalidomide, VH032) pre-functionalized with PEG/alkyl linkers and terminal chemical handles (azide, alkyne, NH₂) for modular PROTAC synthesis. |

| Diverse Electrophile "Warhead" Sets | Plates containing arrays of acrylamides, chloroacetamides, vinyl sulfonates, etc., for rapid assembly of targeted covalent libraries. |

| Assay-Ready, Concentration-Normalized Plates | Commercially available plates where each well contains a pre-dispensed, known quantity of a unique compound, ready for direct biochemical assay addition. |

| Cellular Target Engagement Kits | Live-cell compatible reporter assays (e.g., NanoBRET, NanoBiT) for high-throughput measurement of compound binding or degradation in cells. |

| Label-Free Biosensor Systems | Instruments like BLI (Bio-Layer Interferometry) or SPR (Surface Plasmon Resonance) in HT format for characterizing binding kinetics of novel modalities. |

HTE Workflow for New Modality Tool Development

Academic HTE platforms, unconstrained by immediate commercial pipelines, serve as essential engines for foundational discovery. In Exploratory Reaction Discovery, they systematically map unknown chemical territory, generating the novel reactions and catalysts that will define future industrial synthesis. In New Modality Tool Development, they build the essential chemical and biological understanding—and the physical toolkits of probes and prototypes—required to drug challenging targets. These use cases underscore the thesis that academic and industrial HTE are not competitors but complementary components of the innovation ecosystem, with academic efforts providing the fundamental tools and discoveries that de-risk and propel long-term industrial translation.

High-Throughput Experimentation (HTE) represents a cornerstone of modern industrial research and development. While academic HTE platforms often prioritize fundamental discovery, proof-of-concept studies, and methodological innovation, industrial HTE platforms are engineered with a distinct mandate: to derisk and accelerate the critical path from candidate molecule to viable product. This operational thesis dictates a focused application on three high-impact, high-value domains: Lead Optimization, Route Scouting, and Formulation Screening. This whitepaper provides a technical guide to the implementation, protocols, and strategic advantages of HTE within these industrial sweet spots, contextualized against the broader landscape of HTE research.

Lead Optimization: Accelerating SAR to Clinical Candidate

The primary goal is to rapidly elucidate Structure-Activity Relationships (SAR) and refine compound properties (potency, selectivity, ADMET) to identify a clinical candidate.

Experimental Protocol: Parallel Medicinal Chemistry (pMC) and Biochemical Screening

- Library Design: Use design-of-experiments (DoE) software to plan a focused library around a core scaffold, varying R-groups to probe steric, electronic, and lipophilic parameters.

- Automated Synthesis: Employ liquid handling robots for parallel synthesis in 24-, 96-, or 384-well microtiter plates. Common reactions (e.g., amide couplings, Suzuki-Miyaura cross-couplings, SNAr) are pre-optimized for a plate-based format.

- Purification & Analysis: Integrated high-throughput purification (e.g., mass-directed preparative HPLC) is followed by automated LC-MS analysis for purity and identity confirmation.

- Assay Cascade:

- Primary Assay: High-throughput biochemical assay (e.g., fluorescence polarization, TR-FRET) against the primary target. Run in 1536-well format.

- Counter-Screen: Selectivity panel against related targets (e.g., kinase family members).

- Physicochemical & Early ADMET: Parallel measurements of solubility (nephelometry), metabolic stability (microsomal incubation + LC-MS/MS), and permeability (PAMPA or cell-based assays like Caco-2).

Key Data Output Table: Lead Optimization HTE Campaign

| Parameter | Assay Format | Throughput (Compounds/Week) | Key Industrial Benchmark |

|---|---|---|---|

| Synthesis | 96-well plate | 50-200 | >95% purity for >80% of library |

| Biochemical Potency | 1536-well, TR-FRET | 10,000+ | IC50/EC50 determination |

| Selectivity (Kinase Panel) | 384-well, binding | 500-1000 | Selectivity index >100x |

| Aqueous Solubility | 96-well, nephelometry | 1,000+ | >100 µM at pH 7.4 |

| Microsomal Stability | 96-well, LC-MS/MS | 500 | % parent remaining >30% (human) |

| Permeability (PAMPA) | 96-well, UV/LC-MS | 1,000+ | Effective permeability >1 x 10⁻⁶ cm/s |
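
The benchmarks in the table above can be applied as an automated filter once the assay cascade has reported. The following is a minimal sketch that encodes four of the listed thresholds; the field names, compound IDs, and measured values are hypothetical, and a real campaign would typically weigh these properties rather than apply hard cutoffs.

```python
# Minimum values taken from the benchmark column of the table above.
BENCHMARKS = {
    "solubility_uM": 100.0,        # >100 µM at pH 7.4
    "pct_parent_remaining": 30.0,  # >30% parent remaining (human microsomes)
    "pampa_pe_cm_s": 1e-6,         # effective permeability >1 x 10^-6 cm/s
    "selectivity_index": 100.0,    # >100x over related targets
}


def failed_benchmarks(compound):
    """Return the names of benchmarks that the compound does not exceed."""
    return [name for name, minimum in BENCHMARKS.items() if compound[name] <= minimum]


# Hypothetical results for two library members.
library = [
    {"id": "CPD-1", "solubility_uM": 150.0, "pct_parent_remaining": 62.0,
     "pampa_pe_cm_s": 4.2e-6, "selectivity_index": 240.0},
    {"id": "CPD-2", "solubility_uM": 35.0, "pct_parent_remaining": 71.0,
     "pampa_pe_cm_s": 2.0e-6, "selectivity_index": 80.0},
]
report = {c["id"]: failed_benchmarks(c) for c in library}
print(report)
```

Reporting which benchmarks failed, instead of a single pass/fail flag, tells the chemistry team which property the next design cycle should address.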

Route Scouting: Defining the Synthetic Blueprint

HTE is indispensable for rapidly identifying safe, scalable, and cost-effective synthetic routes for Active Pharmaceutical Ingredients (APIs).

Experimental Protocol: Reaction Screening and Condition Optimization

- Retrosynthetic Analysis: Identify 3-5 potential disconnections for the key bond-forming step.

- Reagent & Catalyst Screening: Prepare a matrix of catalysts (e.g., Pd, Cu, Ni complexes), ligands (phosphines, N-heterocyclic carbenes), bases, and solvents using automated liquid handlers.

- Parallel Reaction Execution: Reactions are set up in sealed microtiter plates or arrays of microvials (0.2-1 mL volume) on robotic platforms, often under controlled atmosphere.

- High-Throughput Analysis: Use UPLC-MS with fast gradients for quantitative yield analysis (via internal standard) and byproduct identification.

- DoE for Optimization: For the most promising conditions, a multivariate DoE (e.g., varying temperature, concentration, stoichiometry) is performed to define the optimal process window.
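
The multivariate DoE in the last step can be illustrated with the simplest common design: a two-level full factorial with replicated center points. This is a sketch under stated assumptions, not the output of a DoE package. The factor ranges and the function name `two_level_design` are hypothetical, and dedicated software would normally also randomize run order and fit the response model.

```python
from itertools import product


def two_level_design(ranges, center_points=1):
    """Build a 2^k full factorial plus center points from {factor: (low, high)}."""
    names = list(ranges)
    runs = []
    # Corner runs: every combination of low (-1) and high (+1) levels.
    for coded in product((-1, 1), repeat=len(names)):
        runs.append({n: ranges[n][0] if c < 0 else ranges[n][1]
                     for n, c in zip(names, coded)})
    # Center runs: midpoint of every factor, replicated to estimate noise.
    center = {n: (low + high) / 2 for n, (low, high) in ranges.items()}
    runs.extend(dict(center) for _ in range(center_points))
    return runs


# Hypothetical process window for a cross-coupling step.
ranges = {"temp_C": (80, 120), "conc_M": (0.1, 0.5), "equiv_base": (1.5, 3.0)}
design = two_level_design(ranges, center_points=3)
for run in design:
    print(run)
```

Three factors give eight corner runs, and the three center replicates bring the total to eleven. The replicates provide an estimate of experimental noise and a first check for curvature in the response.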

Key Data Output Table: Catalytic Cross-Coupling Route Scouting

| Condition Variable | Screening Range | Analysis Method | Industrial Success Criteria |

|---|---|---|---|

| Catalyst | 10-20 metal complexes | UPLC-MS | >80% conversion, <5% of key impurity |

| Ligand | 20-50 bidentate/monodentate ligands | UPLC-MS | Robust performance at low loading (<2 mol%) |

| Base | Carbonates, phosphates, amines | UPLC-MS | Full conversion, minimal side reactions |

| Solvent | 5-10 (e.g., toluene, dioxane, DMF, water) | UPLC-MS | Suitable for temperature range, facilitates work-up |

| Temperature | 60-150°C (via heating blocks) | UPLC-MS | Identified optimal ±10°C window |

Formulation Screening: Ensuring Developability

HTE enables the empirical identification of stable, bioavailable formulations early in development.

Experimental Protocol: Solid-State and Solution Stability Screening

- Salt/Polymorph Screen: Crystallize the API with multiple counterions (e.g., HCl, mesylate, sodium) under varied conditions (solvent, temperature, evaporation rate) in 96-well plates.

- High-Throughput Characterization: Rapid analysis via parallel XRPD (X-ray powder diffraction) and Raman microscopy to identify unique crystalline forms.

- Excipient Compatibility: Blend API with common excipients (fillers, binders, disintegrants, lubricants) in 96-well format.

- Forced Degradation Studies: Subject formulations to stressed conditions (40-75°C, 75% relative humidity) in climate-controlled chambers. Monitor appearance, assay, and related substances by UPLC at defined timepoints.

- Dissolution Profiling: Use miniaturized dissolution apparatus (e.g., 10-50 mL media) with UV fiber-optic probes to generate early dissolution curves.

Key Data Output Table: Formulation HTE Matrix

| Screen Type | Format | Variables Tested | Primary Analytical Readout |

|---|---|---|---|

| Salt Selection | 96-well crystallization plate | 8-12 counterions, 3-5 solvents | XRPD, Raman for form identity |

| Polymorph | 96-well plate | 5-10 solvent/anti-solvent systems, temperature gradients | XRPD for crystallinity & phase |

| Excipient Compatibility | 96-well glass vials | 15-20 GRAS excipients, binary/ternary blends | UPLC for potency & degradants after stress |

| Early Dissolution | 24- or 96-well micro-dissolution | pH 1.2, 4.5, 6.8 buffers | UV concentration vs. time profile |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in Industrial HTE | Example/Note |

|---|---|---|

| Prefabricated Catalyst/Ligand Kits | Accelerate route scouting by providing standardized, pre-weighed aliquots of diverse catalysts and ligands. | Commercially available kits from suppliers like Sigma-Aldrich (e.g., Solvias ligands) for cross-coupling, hydrogenation, etc. |

| DoE Software Suites | Enable systematic experimental design, data analysis, and model building to maximize information per experiment. | JMP, Modde, or Design-Expert for planning optimization campaigns. |

| Automated Liquid Handlers | Core platform for reproducible nanoliter-to-milliliter dispensing of reagents, catalysts, and substrates. | Hamilton STAR, Tecan Freedom EVO, or Echo acoustic dispensers. |

| High-Throughput Parallel Synthesizers | Conduct chemical reactions under controlled, varied conditions (temp, pressure, atmosphere) in parallel. | Unchained Labs Big Kahuna, Asynt Multi-React, or Heated/Stirred Microtiter Plates. |

| UPLC-MS Systems with Autosamplers | Provide rapid, quantitative analysis of reaction outcomes and purity for hundreds of samples per day. | Waters Acquity, Agilent InfinityLab with integrated plate samplers. |

| Integrated Purification-MS Systems | Automate the purification of synthesized libraries by triggering fraction collection based on MS detection. | Agilent Prep-MS, Waters FractionLynx. |

| Robotic XRPD Systems | Automate sample mounting and data collection for crystalline form identification from 96-well plates. | Rigaku G9, Malvern Panalytical Empyrean with robotic stage. |

| Microscale Dissolution Profilers | Enable dissolution testing with minimal API consumption, crucial for early-stage formulations. | Pion µDiss, or in-house setups with fiber-optic UV probes in 96-well plates. |

Visualizing the Industrial HTE Workflow and Strategic Context

Industrial vs. Academic HTE Focus

Lead Optimization HTE Workflow

Route Scouting HTE Workflow

Formulation Screening HTE Workflow

This case study is framed within a comparative thesis examining the distinct philosophies and outputs of academic versus industrial high-throughput experimentation (HTE) platforms. While industrial platforms are optimized for pipeline throughput and direct application, academic HTE often prioritizes fundamental discovery, mechanistic understanding, and the development of radically novel methodologies. This guide details how academic HTE is applied to invent and optimize new catalytic transformations, using recent exemplars from the literature.

Academic HTE: Philosophy and Infrastructure

Academic HTE for catalysis focuses on exploring vast, multidimensional chemical spaces (ligands, catalysts, substrates, additives, conditions) to uncover unexpected reactivity. The goal is discovery-led innovation rather than iterative optimization of a known process.

Key Differentiators from Industrial HTE:

- Objective: Novelty & mechanistic insight vs. process optimization.

- Library Design: Broad, diverse, often hypothesis-driven vs. focused, lead-oriented.

- Automation Level: Modular, adaptable, sometimes "home-built" vs. integrated, turnkey.

- Success Metrics: New reactions, selectivity paradigms, structure-activity relationships vs. yield, cost, scalability.

Core Experimental Protocol: HTE Workflow for Reaction Discovery

The following generalized protocol is standard for academic catalyst screening.

Protocol: Parallelized Microscale Reaction Screening

- Plate Preparation: A 96-well or 384-well glass-coated or polymer plate is used as the reaction block.

- Stock Solution Dispensing: Using an automated liquid handler or multichannel pipette:

- Add constant volumes of substrate stock solution (typically 0.1 M in substrate) to each well.

- Add variable volumes of catalyst/ligand/additive stock libraries to designated wells.

- Solvent & Atmosphere Control: Evaporate solvent (if needed) under vacuum. Refill wells with anhydrous solvent in an inert atmosphere glovebox.

- Initiation: Add a constant volume of a second substrate or reagent stock solution to all wells simultaneously to initiate reactions.

- Parallel Execution: Seal the plate and allow it to react under controlled temperature (ambient or heated/shaken incubator) for a set time.

- Quenching & Analysis: Add a standard quenching/dilution solution to each well.

- Primary Analysis: Analyze an aliquot directly via ultra-high-performance liquid chromatography (UPLC) or LC-MS, using an autosampler configured for microtiter plates.

- Data Processing: Conversion/yield is calculated by integration of analyte peaks relative to an internal standard.
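
The internal-standard calculation in the Data Processing step can be sketched as follows. It assumes a single-point response factor measured once from an authentic product sample, which is a common simplification; a multi-point calibration would be more rigorous. The peak areas, amounts, and function names are hypothetical.

```python
def response_factor(area_product, area_is, umol_product, umol_is):
    """Response factor: (area ratio) / (molar ratio) from a calibration sample."""
    return (area_product / area_is) / (umol_product / umol_is)


def assay_yield(area_product, area_is, umol_is, umol_theoretical, rf):
    """Percent yield in a well from product/IS peak areas and the response factor."""
    umol_product = (area_product / area_is) / rf * umol_is
    return 100.0 * umol_product / umol_theoretical


# Calibration: equimolar product and internal standard (hypothetical areas).
rf = response_factor(area_product=1200.0, area_is=1000.0, umol_product=10.0, umol_is=10.0)

# One reaction well: 10 µmol theoretical product, 10 µmol internal standard added.
yield_pct = assay_yield(area_product=900.0, area_is=1000.0,
                        umol_is=10.0, umol_theoretical=10.0, rf=rf)
print(f"RF = {rf:.2f}, yield = {yield_pct:.1f}%")
```

Because the yield depends only on the area ratio, it is insensitive to well-to-well variation in injection volume or dilution, which is the main reason internal standards are used in plate-based screening.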

Diagram: Academic HTE Catalyst Screening Workflow

Case Study: Discovery of a Novel Photoredox-Nickel Dual Catalytic C-O Coupling

A seminal 2014 Science paper (MacMillan et al.) serves as the paradigm. Academic HTE was crucial in identifying the effective combination of two distinct catalysts for a challenging cross-coupling.

Protocol: HTE for Dual Catalytic System Optimization

Variable Space Definition:

- Photoredox Catalyst Library: [Ir(dF(CF₃)ppy)₂(dtbbpy)]PF₆, Ru(bpy)₃Cl₂, eosin Y, etc. (8 candidates).

- Nickel Catalyst/Ligand Library: Ni(COD)₂ with bipyridines, phosphines, diamines (12 combinations).

- Additives: Bases (K₃PO₄, DIPEA), salts (LiCl).

- Conditions: Solvent (DME, DMF), light source (blue LED), concentration.

Matrix Setup: The 8x12 photocatalyst/nickel-ligand matrix (96 combinations) was mapped onto a single 96-well plate, with additives, solvent, and concentration held constant initially, so that the first screen sampled only part of the full variable space.

Execution & Analysis: Reactions were run in parallel under blue LED irradiation. Analysis via UPLC determined yields of the target aryl ether.

Key Quantitative Findings:

Table 1: HTE Screening Results for Photoredox/Nickel Catalyst Pairs

| Photoredox Catalyst (5 mol%) | Nickel Ligand (10 mol%) | Average Yield (%)* | Key Observation |

|---|---|---|---|

| [Ir(dF(CF₃)ppy)₂(dtbbpy)]PF₆ | 4,4'-di-tert-butyl-2,2'-bipyridine | 92 | Optimal combination identified |

| Ru(bpy)₃Cl₂ | 4,4'-di-tert-butyl-2,2'-bipyridine | 78 | Active but less efficient |

| Eosin Y | 4,4'-di-tert-butyl-2,2'-bipyridine | <5 | Organic photocatalyst inactive |

| [Ir(dF(CF₃)ppy)₂(dtbbpy)]PF₆ | Tri-tert-butylphosphine | 15 | Phosphine ligands ineffective |

| None | 4,4'-di-tert-butyl-2,2'-bipyridine | 0 | No reaction without photoredox catalyst |

*Yields are representative from initial screening.

Diagram: Dual Catalytic Cycle Relationship

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Academic Catalytic HTE

| Item | Function/Description | Example in Case Study |

|---|---|---|

| Modular HTE Rig | Customizable platform for liquid handling, reaction execution, and quenching. | Home-built 96-well reactor block with liquid handler. |

| Catalyst/Ligand Libraries | Pre-weighed, soluble stocks of diverse structural motifs to probe chemical space. | Ir/Ru photocatalyst set; Ni salts with bpy/P/N-ligand library. |

| Automated Chromatography | UPLC or LC-MS with plate autosampler for rapid (<5 min) analysis. | Acquity UPLC with PDA detector. |

| Microtiter Plates | Chemically resistant, glass-coated or polymer 96/384-well plates. | Glass-coated 96-well plate from ChemGlass. |

| Internal Standard | Chemically inert compound added pre-analysis for quantitative yield determination. | Trifluoromethylbenzene or similar. |

| Data Analysis Software | Platform to process chromatographic data into visual heatmaps for hit ID. | Custom Python scripts or commercial software (e.g., Mosaic). |

The application of High-Throughput Experimentation (HTE) in drug discovery represents a critical point of divergence between academic and industrial research. While academic platforms excel in developing novel methodologies and probing fundamental science, industrial HTE platforms are engineered for seamless integration into the pipeline, emphasizing robustness, reproducibility, and direct impact on candidate progression. This case study examines the industrial deployment of HTE to optimize Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, a decisive factor in clinical candidate selection.

Core Industrial HTE Infrastructure for ADMET

Industrial ADMET-HTE relies on integrated systems combining parallel synthesis, rapid purification, and automated biological and physicochemical screening.

Key ADMET Property Screens in Industrial HTE

The following table summarizes primary in vitro ADMET endpoints addressed via industrial HTE campaigns.

Table 1: Core ADMET-HTE Screening Cascade

| ADMET Property | Primary Assay(s) | Throughput (Compounds/Week) | Industrial Target Threshold |

|---|---|---|---|

| Aqueous Solubility | Kinetic Turbidimetry, Nephelometry | 10,000+ | >100 µM (pH 7.4) |

| Metabolic Stability | Microsomal/Hepatocyte Half-life (T1/2) | 5,000 | Human T1/2 > 30 min |

| Permeability | PAMPA, Caco-2 / MDCK | 3,000 | Papp > 10 x 10⁻⁶ cm/s |

| CYP Inhibition | Fluorescent / LC-MS/MS probe assays | 5,000 | IC50 > 10 µM (CYP3A4/2D6) |

| hERG Liability | hERG binding assay, Patch-clamp (secondary) | 2,000 | IC50 > 10 µM |

| Plasma Protein Binding | Rapid Equilibrium Dialysis (RED) | 4,000 | Fu > 5% |

| Chemical Stability | PBS, Simulated Gastric Fluid assay | 5,000 | >80% remaining (24h) |

Detailed Experimental Protocols

Protocol 1: Parallel Microsomal Stability Screening

Objective: Determine intrinsic clearance (CLint) for a 384-member library.

- Incubation: In 96-well polypropylene plates, combine test compound (1 µM final) with human liver microsomes (0.5 mg/mL) in 100 mM potassium phosphate buffer (pH 7.4).

- Reaction Initiation: Pre-incubate for 5 min at 37°C. Initiate reaction by adding NADPH regeneration system (final 1 mM NADP+, 3 mM glucose-6-phosphate, 1 U/mL G6PDH).

- Timepoints: Aliquot 50 µL at t = 0, 5, 15, 30, 45 min into a quench plate containing 100 µL of cold acetonitrile with internal standard.

- Analysis: Centrifuge. Analyze supernatant via UPLC-MS/MS. Quantify parent compound peak area ratio (compound/IS) over time.

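- Data Processing: Calculate T1/2 = 0.693/k, where k is the elimination rate constant, taken as the negative slope from linear regression of ln(peak area ratio) vs. time. CLint = (0.693/T1/2) / (mg microsomal protein/mL), i.e., k divided by the microsomal protein concentration in the incubation (0.5 mg/mL here).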
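
The data-processing step of Protocol 1 can be sketched in a few lines of Python. This is a minimal sketch assuming first-order loss of parent compound and the 0.5 mg/mL protein concentration stated in the protocol; the function names are hypothetical, and the conversion to µL/min/mg is a reporting convention assumed here rather than taken from the protocol.

```python
import math


def fit_elimination_rate(times_min, area_ratios):
    """Least-squares fit of ln(compound/IS ratio) vs. time.

    Returns k (1/min), the negative of the fitted slope.
    """
    ys = [math.log(r) for r in area_ratios]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_min, ys))
    return -sxy / sxx


def half_life_min(k):
    """T1/2 = ln(2) / k, with ln(2) ~ 0.693."""
    return math.log(2) / k


def clint_ul_per_min_per_mg(k, protein_mg_per_ml=0.5):
    """CLint = k / [protein]; mL/min/mg is scaled by 1000 to give uL/min/mg."""
    return k / protein_mg_per_ml * 1000.0


# Synthetic time course at the protocol's sampling times (not real data):
# generated with a known half-life of 30 min so the fit can be checked.
times = [0, 5, 15, 30, 45]
k_true = math.log(2) / 30.0
ratios = [math.exp(-k_true * t) for t in times]

k = fit_elimination_rate(times, ratios)
print(half_life_min(k), clint_ul_per_min_per_mg(k))
```

In practice the fit is usually restricted to the log-linear portion of the curve, and compounds showing little or no turnover need separate handling because k approaches zero.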

Protocol 2: High-Throughput Equilibrium Solubility (CheqSol Turbidimetry)

Objective: Measure thermodynamic solubility of 100s of purified compounds.

- Sample Preparation: Dispense solid compound (0.5-1 mg) into 96-well glass plate. Add 200 µL of phosphate buffer (pH 7.4) via liquid handler.

- Titration & Monitoring: Use an integrated pH-stat and turbidimeter. Titrate between undersaturated and supersaturated states using HCl or NaOH while monitoring light scattering at 620 nm.

- Endpoint Determination: The point at which the solution transitions from clear to turbid (and vice versa) upon pH adjustment defines the solubility-pH profile. The solubility at pH 7.4 is interpolated.

- Analysis: Reported as µg/mL or µM solubility. Compounds are ranked, and structures below threshold trigger iterative chemistry design.
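
The interpolation and unit-conversion steps of Protocol 2 can be illustrated as follows. This is a sketch under stated assumptions: the protocol says only that the pH 7.4 value is "interpolated", so the choice of linear interpolation on log10(solubility) is ours, and the function names are hypothetical.

```python
import math


def ug_per_ml_to_uM(sol_ug_per_ml, mol_weight_g_per_mol):
    """Convert ug/mL to uM: 1 ug/mL = 1 mg/L, so uM = (ug/mL) / MW * 1000."""
    return sol_ug_per_ml / mol_weight_g_per_mol * 1000.0


def interpolate_at_ph(profile, target_ph=7.4):
    """Interpolate solubility at `target_ph` from (pH, solubility) pairs.

    Assumption: log10(solubility) varies linearly between adjacent points.
    Raises ValueError if the target pH lies outside the measured range.
    """
    pts = sorted(profile)
    for (ph_lo, s_lo), (ph_hi, s_hi) in zip(pts, pts[1:]):
        if ph_lo <= target_ph <= ph_hi:
            if ph_hi == ph_lo:
                return s_lo
            frac = (target_ph - ph_lo) / (ph_hi - ph_lo)
            log_s = math.log10(s_lo) + frac * (math.log10(s_hi) - math.log10(s_lo))
            return 10 ** log_s
    raise ValueError("target pH is outside the measured profile")


# Illustrative values only (not measured data): a weak base whose
# solubility falls as pH rises.
profile_ug_per_ml = [(6.0, 400.0), (7.0, 100.0), (8.0, 10.0)]
sol_74 = interpolate_at_ph(profile_ug_per_ml)
print(ug_per_ml_to_uM(sol_74, mol_weight_g_per_mol=400.0))
```

Raising an error instead of extrapolating keeps out-of-range compounds visible for manual review.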

Visualization of Industrial ADMET-HTE Workflow

Diagram 1: Industrial HTE-ADMET Optimization Cycle

Diagram 2: Industrial ADMET-HTE Triaging Cascade

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for ADMET-HTE

| Item | Supplier Examples | Function in ADMET-HTE |

|---|---|---|

| Pooled Human Liver Microsomes (pHLM) | Corning, XenoTech, BioIVT | Standardized enzyme source for high-throughput metabolic stability and CYP inhibition assays. |

| Multiplexed CYP Inhibition Assay Kits | Promega, Thermo Fisher | Enable simultaneous assessment of inhibition against five key CYP isoforms (3A4, 2D6, 2C9, 2C19, 1A2) in a single well. |

| 96-Well Rapid Equilibrium Dialysis (RED) Plates | Thermo Fisher | Facilitate high-throughput measurement of unbound fraction (Fu) for plasma protein binding. |

| Ready-to-Use PAMPA Plates | pION, MilliporeSigma | Pre-coated plates for parallel artificial membrane permeability assays, critical for predicting passive absorption. |

| Cryopreserved Hepatocytes | BioIVT, Lonza | Gold-standard cell-based system for evaluating metabolic stability, clearance, and metabolite identification. |

| hERG Binding Assay Kits | Eurofins, PerkinElmer | Non-electrophysiological, high-throughput screening for initial hERG potassium channel liability. |

| LC-MS/MS Compatible Solvent/Plates | Agilent, Waters, Labcyte | Acetonitrile, methanol, and 384-well plates designed for minimal leachables and maximal MS sensitivity in HT analysis. |

| Automated Liquid Handlers | Hamilton, Beckman Coulter, Tecan | Enable precise, nanoliter-to-microliter dispensing for assay setup, quenching, and transfer across 100s of plates. |

Data Integration and Machine Learning

Industrial platforms feed all HTE data into centralized data lakes. Structure-Property Relationship (SPR) models, often using graph neural networks or random forests, are trained to predict ADMET outcomes for virtual libraries, guiding the next design-make-test-analyze (DMTA) cycle. This closed-loop system dramatically accelerates the optimization of challenging property trade-offs, such as balancing solubility against permeability or potency against metabolic clearance.

This case study underscores that industrial HTE for ADMET is not merely scaling up assays. It is the disciplined engineering of an integrated, decision-driving system. The contrast with academic HTE is stark: industrial platforms prioritize standardized protocols, rigorous quality control, and data integration directly into project timelines. The goal is to reduce late-stage attrition caused by poor pharmacokinetics or toxicity, so that safer, more viable clinical candidates reach the clinic faster.

This whitepaper examines the growing convergence of academic and industrial high-throughput experimentation (HTE) platforms within drug discovery. While academic institutions excel in exploratory, tool-developing research, industrial platforms are optimized for scale, reproducibility, and pipeline throughput. This "platform capability gap" often hinders the translation of novel biological insights into robust therapeutic candidates. We argue that structured hybrid partnership models are essential for bridging this divide, combining academic innovation with industrial rigor to accelerate the discovery and development cycle. This guide details the technical frameworks, shared protocols, and co-developed infrastructure that make these partnerships successful.

High-Throughput Experimentation has become a cornerstone of modern biomedical research. However, a significant divergence exists in the objectives and capabilities of platforms housed in academic versus industrial settings.

| Platform Dimension | Academic HTE Focus | Industrial HTE Focus |

|---|---|---|

| Primary Goal | Novel target/mechanism discovery, tool development | Pipeline progression, lead optimization, safety assessment |

| Throughput Scale | Moderate (10^2 - 10^4 compounds/experiment) | Ultra-High (10^4 - 10^6 compounds/experiment) |

| Automation Level | Often modular, flexible | Fully integrated, highly standardized |

| Data Infrastructure | Often bespoke, focused on analysis depth | Enterprise-scale, built for audit and traceability (ALCOA+) |

| Metric of Success | Publication, grant renewal, biological insight | Target product profile, probability of technical success (PTS) |

This gap creates a "valley of death" for promising early-stage discoveries. Hybrid models formalize collaboration to leverage the strengths of both worlds.

Core Partnership Architectures

Three prevalent models structure these partnerships.

Diagram Title: Three Hybrid Partnership Architectures for HTE

Technical Implementation: Bridging the Workflow Gap

A key challenge is integrating academic assay biology with industrial automation. The following protocol exemplifies a co-developed workflow for a phenotypic screen.

Co-Development Protocol: Complex Phenotypic Screen Transfer

Objective: Transfer a novel academic 3D co-culture assay to an industrial HTE platform for a 100k-compound screen.

Materials & Reagents (The Scientist's Toolkit: Key Research Reagent Solutions)

| Reagent/Material | Function in Protocol | Critical Specification |

|---|---|---|

| Primary Patient-Derived Cells (Academia) | Biologically relevant model system | Low passage ( |

| Matrigel Matrix | 3D culture scaffold | Lot-to-lot consistency, high protein concentration |

| Industrial QC'd Media | Standardized cell culture medium | Serum-free, chemically defined, performance-validated |

| Cypher-Encoded Compound Library (Industry) | High-density small molecule library | 1mM in DMSO, 1536-well format, QC'd purity/stability |

| Multiparametric Dye Set (Co-developed) | Live-cell imaging of 4 phenotypes | Non-overlapping emission, minimal cytotoxicity |

| Automation-Compatible 1536-Well Microplate | Platform standardization | Ultra-low attachment, black-walled, optically clear bottom |

Detailed Protocol:

Assay Miniaturization & QC (Weeks 1-4):

- Academic partner prepares master cell banks and validates assay in 384-well format using a 2,000-compound benchmark library.

- Industrial partner performs liquid handling compatibility tests, determining optimal dispense parameters for viscous matrices.

- Jointly define QC metrics: Z'-factor >0.5, signal-to-background >3.

Process Automation & Integration (Weeks 5-8):

- Industrial engineers script the workflow on an integrated system (e.g., HighRes Biosolutions or Hamilton platform).

- Key steps automated: matrix dispensing (20 nL), cell seeding (500 cells/well in 2 μL), compound pin-transfer (23 nL), reagent addition.

- Academic partner trains on the automated system remotely via digital twin software.

Pilot Screen & Data Handshake (Weeks 9-12):

- Execute a 10k-compound pilot screen in duplicate.

- Industrial pipeline generates raw intensity data.

- Academic partner provides custom image analysis algorithm (e.g., CellProfiler pipeline) which is containerized and deployed on the industrial cloud.

- Data is processed; hit criteria are jointly set (e.g., >3σ from median in 2+ phenotypes).

Full Screen & Triaging (Weeks 13-16):

- Execute full 100k screen.

- Hit triage uses industrial ADMET prediction tools and academic functional genomics data to prioritize 500 leads for validation.

Diagram Title: Workflow for Academic-to-Industrial Assay Transfer

Data & Informatics: The Critical Bridge

Sustainable partnerships require interoperable data systems.

| Data Challenge | Academic Standard | Industrial Standard | Hybrid Solution |

|---|---|---|---|

| Metadata Capture | Minimal, in lab notebooks | Extensive, structured (ISA-Tab) | Co-developed minimal metadata schema (e.g., based on ACEA-Tab) |

| Primary Analysis | Custom scripts (Python/R) | Vendor software or internal pipelines | Containerized academic code (Docker/Singularity) deployed on industrial cloud |

| Data Sharing | Supplementary files, public repos | Secure, access-controlled portals | FAIR-compliant project portal with tiered access (e.g., using KNIME or Databricks) |
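
To make the idea of a co-developed minimal metadata schema concrete, the sketch below shows one possible shape for a per-plate record. Every field name, the allowed values, and the `validate` method are hypothetical choices for illustration. They are not drawn from ISA-Tab or any other published standard.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical controlled vocabularies agreed between the two partners.
ALLOWED_FORMATS = (96, 384, 1536)
ALLOWED_SITES = ("academic", "industrial")


@dataclass(frozen=True)
class PlateRecord:
    """Minimal per-plate metadata both partners agree to capture."""
    plate_id: str
    assay_name: str
    cell_lot: str
    compound_library: str
    plate_format: int        # wells per plate
    site: str                # which partner ran the plate
    analysis_container: str  # tag of the containerized analysis code

    def validate(self) -> list:
        """Return a list of human-readable problems (empty if valid)."""
        errors = []
        for name, value in asdict(self).items():
            if isinstance(value, str) and not value.strip():
                errors.append(f"{name} is empty")
        if self.plate_format not in ALLOWED_FORMATS:
            errors.append(f"plate_format {self.plate_format} not allowed")
        if self.site not in ALLOWED_SITES:
            errors.append(f"site {self.site!r} not allowed")
        return errors


# Placeholder values, for illustration only.
record = PlateRecord(
    plate_id="P0001",
    assay_name="3d-coculture-phenotypic",
    cell_lot="LOT-A",
    compound_library="pilot-10k",
    plate_format=1536,
    site="industrial",
    analysis_container="analysis:v1",
)
print(record.validate())
print(json.dumps(asdict(record), indent=2))
```

Serializing to plain JSON keeps the record readable by both academic scripts and enterprise systems without either side adopting the other's tooling.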

Case Study & Quantitative Outcomes

A recent partnership between the Academic Screening Center (ASC) and PharmaCo targeted undruggable transcription factors.

Experiment: A novel NanoBRET assay developed in academia to measure target-protein degradation was scaled for an industrial DEL (DNA-Encoded Library) screen of 5 billion compounds.

Key Hybrid Protocol Steps:

- Cell Line Engineering (Academic): Stable cell line expressing tagged transcription factor was created using CRISPR-HITI, validated via Western and microscopy.

- Assay Reformulation (Joint): Media optimized for industrial 1536-well cell dispensers; luciferase substrate stability tested over 72h.

- DEL Screening (Industrial): Screen performed in 4 pools, with deconvolution and synthesis of 300 hit structures.

- Triaging (Joint): Academic provided orthogonal cellular fitness assays; Industrial provided rapid PK/PD modeling.

Results Summary:

| Metric | Academic-Lab Scale | Industrial-Hybrid Scale | Improvement Factor |

|---|---|---|---|

| Compounds Screened | 50,000 (small library) | 5,000,000,000 (DEL) | 100,000x |

| Screen Duration | 3 weeks | 1 week | 3x faster |

| Confirmed Hit Rate | 0.1% | 0.05% (higher specificity) | Comparable |

| Time to Validated Lead | 18 months (projected) | 7 months (achieved) | >2.5x faster |

The platform capability gap between academia and industry is a significant bottleneck in therapeutic discovery. Hybrid partnership models, built on clearly defined technical workflows, shared reagent toolkits, and interoperable data systems, provide a robust framework for bridging this gap. By formalizing the integration of exploratory biology with industrialized HTE, these collaborations de-risk translation and accelerate the delivery of novel medicines to patients. The future of HTE lies not in isolated platforms, but in interconnected ecosystems that leverage the distinct and complementary strengths of both sectors.

Within the ongoing research discourse contrasting academic and industrial high-throughput experimentation (HTE) platforms, a transformative convergence is emerging: the integration of Artificial Intelligence and Machine Learning (AI/ML) for autonomous experiment design. While industrial platforms have traditionally led in scale and automation, and academic labs in fundamental methodological innovation, AI/ML is dissolving these boundaries. This technical guide examines the core architectures, algorithms, and protocols enabling this integration, providing a framework for researchers and drug development professionals to implement these approaches in both domains.

Core AI/ML Paradigms for Experiment Design

Several key paradigms dominate current practice. The following table summarizes their characteristics, prevalence, and primary application contexts.

Table 1: Core AI/ML Paradigms in Experiment Design

| Paradigm | Key Algorithm Examples | Primary Application | Typical Platform Context | Reported Efficiency Gain |

|---|---|---|---|---|